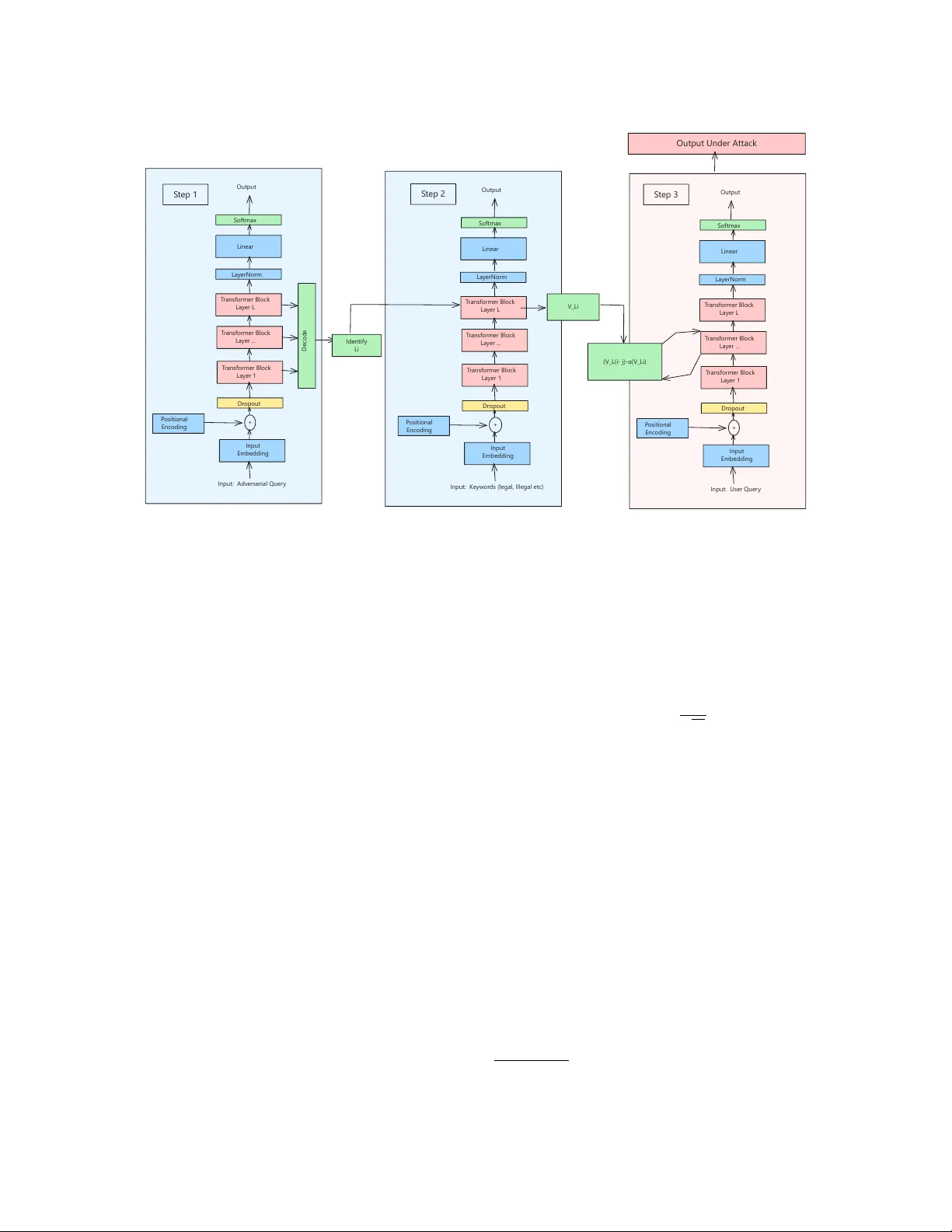

Amnesia: Adversarial Semantic Layer Specific Activation Steering in Large Language Models

Warning: This article includes red-teaming experiments, which contain examples of compromised LLM responses that may be offensive or upsetting.

Large Language Models (LLMs) have the potential to create harmful content, such as generating sophistica…

Authors: Ali Raza, Gurang Gupta, Nikolay Matyunin