Fluids You Can Trust: Property-Preserving Operator Learning for Incompressible Flows

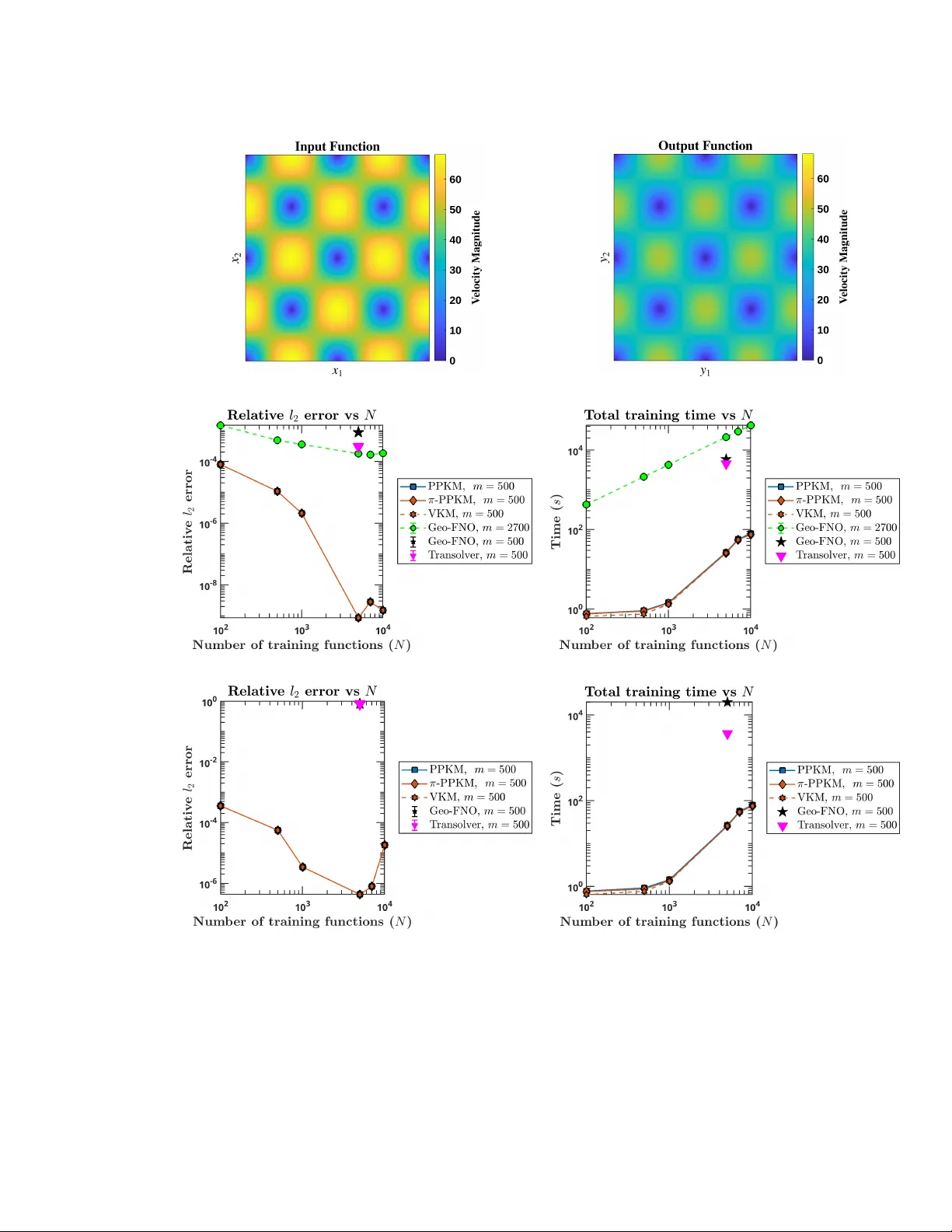

We present a novel property-preserving kernel-based operator learning method for incompressible flows governed by the incompressible Navier–Stokes equations. Traditional numerical solvers incur significant computational costs to respect incompressib…

Authors: Ramansh Sharma, Matthew Lowery, Houman Owhadi