Bundle Adjustment in the Eager Mode

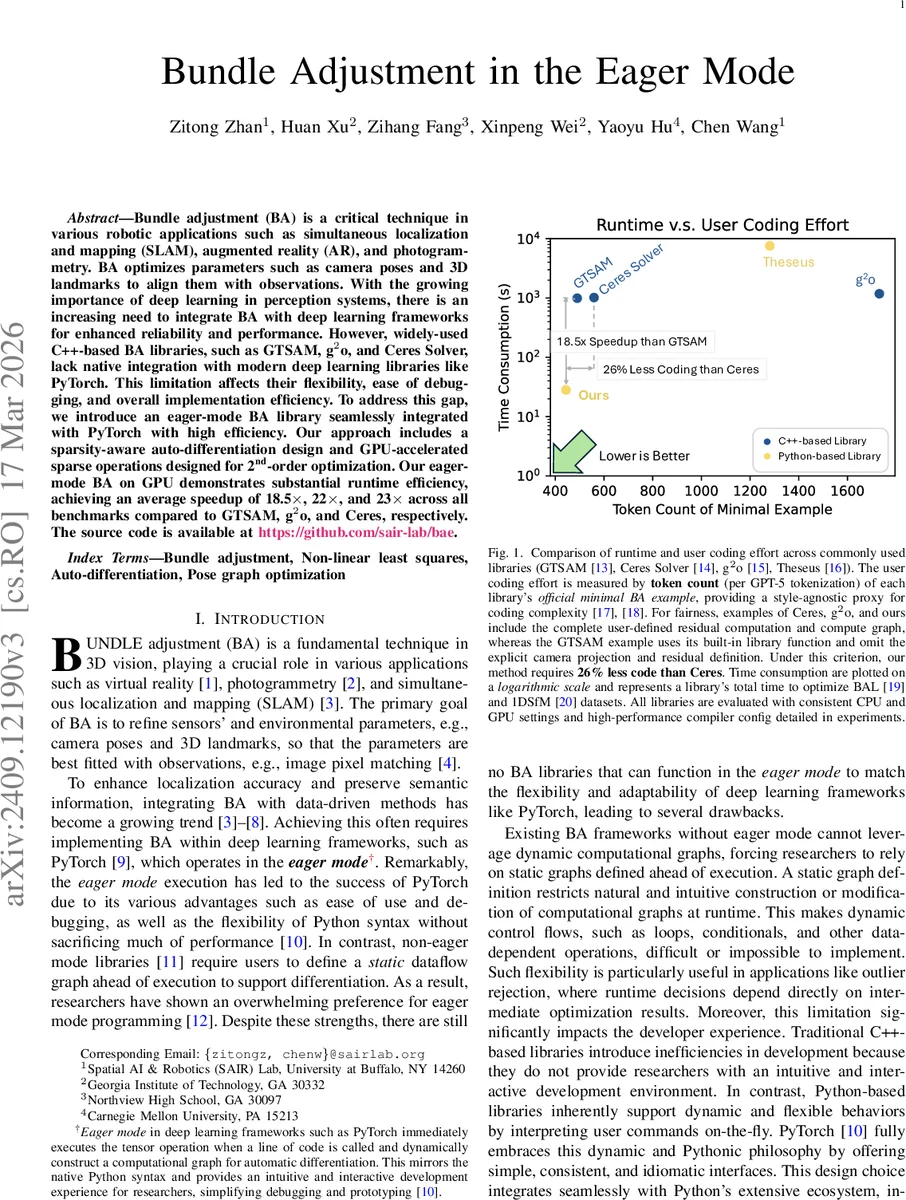

Bundle adjustment (BA) is a critical technique in various robotic applications such as simultaneous localization and mapping (SLAM), augmented reality (AR), and photogrammetry. BA optimizes parameters such as camera poses and 3D landmarks to align them with observations. With the growing importance of deep learning in perception systems, there is an increasing need to integrate BA with deep learning frameworks for enhanced reliability and performance. However, widely-used C++-based BA libraries, such as GTSAM, g$^2$o, and Ceres Solver, lack native integration with modern deep learning libraries like PyTorch. This limitation affects their flexibility, ease of debugging, and overall implementation efficiency. To address this gap, we introduce an eager-mode BA library seamlessly integrated with PyTorch with high efficiency. Our approach includes a sparsity-aware auto-differentiation design and GPU-accelerated sparse operations designed for 2nd-order optimization. Our eager-mode BA on GPU demonstrates substantial runtime efficiency, achieving an average speedup of 18.5$\times$, 22$\times$, and 23$\times$ across all benchmarks compared to GTSAM, g$^2$o, and Ceres, respectively.

💡 Research Summary

The paper introduces a novel bundle adjustment (BA) library that operates entirely within PyTorch’s eager execution mode, addressing a critical gap between traditional C++‑based BA solvers and modern deep‑learning workflows. Existing libraries such as GTSAM, g2o, and Ceres Solver rely on static factor graphs, CPU‑centric implementations, and dense Jacobian computation, which hampers flexibility, debugging, and GPU utilization. By contrast, the proposed “eager‑mode BA” library integrates seamlessly with PyTorch, allowing developers to write BA code using ordinary Python syntax while retaining full automatic differentiation support.

Key technical contributions include a sparsity‑aware auto‑differentiation framework that dynamically traces tensor operations to construct a directed acyclic graph (DAG) of the computation. This DAG is analyzed at runtime to infer the Jacobian’s sparsity pattern, which is then represented using PyTorch’s native sparse tensor format. The approach eliminates the need for dense Jacobian materialization, drastically reducing memory consumption and enabling efficient sparse linear algebra on the GPU. To handle Lie‑group parameters (e.g., SE(3) camera poses), the authors leverage LieTensor from the PyPose library, providing batched, differentiable representations of poses and their algebraic derivatives.

The library implements second‑order optimization algorithms—Gauss‑Newton and Levenberg‑Marquardt—using GPU‑accelerated sparse solvers that are fully compatible with PyTorch’s autograd engine. All core operations, including Jacobian assembly, damping, and linear system solution, are expressed as standard PyTorch operators, preserving interpretability and ease of debugging.

Experimental evaluation spans classic BA benchmarks (1DSfM, BAL) and deep‑learning‑driven structure‑from‑motion pipelines. Under identical hardware (Intel Xeon CPU, NVIDIA RTX 3090 GPU) and compiler settings, the eager‑mode BA achieves average speedups of 18.5× over GTSAM, 22× over g2o, and 23× over Ceres Solver. In addition to runtime gains, the authors measure code complexity by tokenizing minimal BA examples from each library using GPT‑5 tokenization; their implementation requires 26 % fewer tokens than the minimal Ceres example, highlighting the productivity benefits of the Python‑centric design.

Beyond pure BA, the framework’s design is generic enough to support other sparse non‑linear least‑squares problems such as pose‑graph optimization (PGO). Dynamic control flow constructs (loops, conditionals) can be used directly in Python to implement runtime strategies like outlier rejection, which are cumbersome in static‑graph systems. The source code is publicly released (https://github.com/sair‑lab/bae), facilitating reproducibility and further extension.

In summary, the paper demonstrates that high‑performance, GPU‑accelerated, sparsity‑aware bundle adjustment can be realized within PyTorch’s eager execution model without sacrificing accuracy. This bridges the long‑standing divide between classical geometric optimization and modern deep‑learning pipelines, opening new avenues for end‑to‑end trainable SLAM, AR, and photogrammetry systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment