SpatialPoint: Spatial-aware Point Prediction for Embodied Localization

Embodied intelligence fundamentally requires a capability to determine where to act in 3D space. We formalize this requirement as embodied localization -- the problem of predicting executable 3D points conditioned on visual observations and language …

Authors: Qiming Zhu, Zhirui Fang, Tianming Zhang

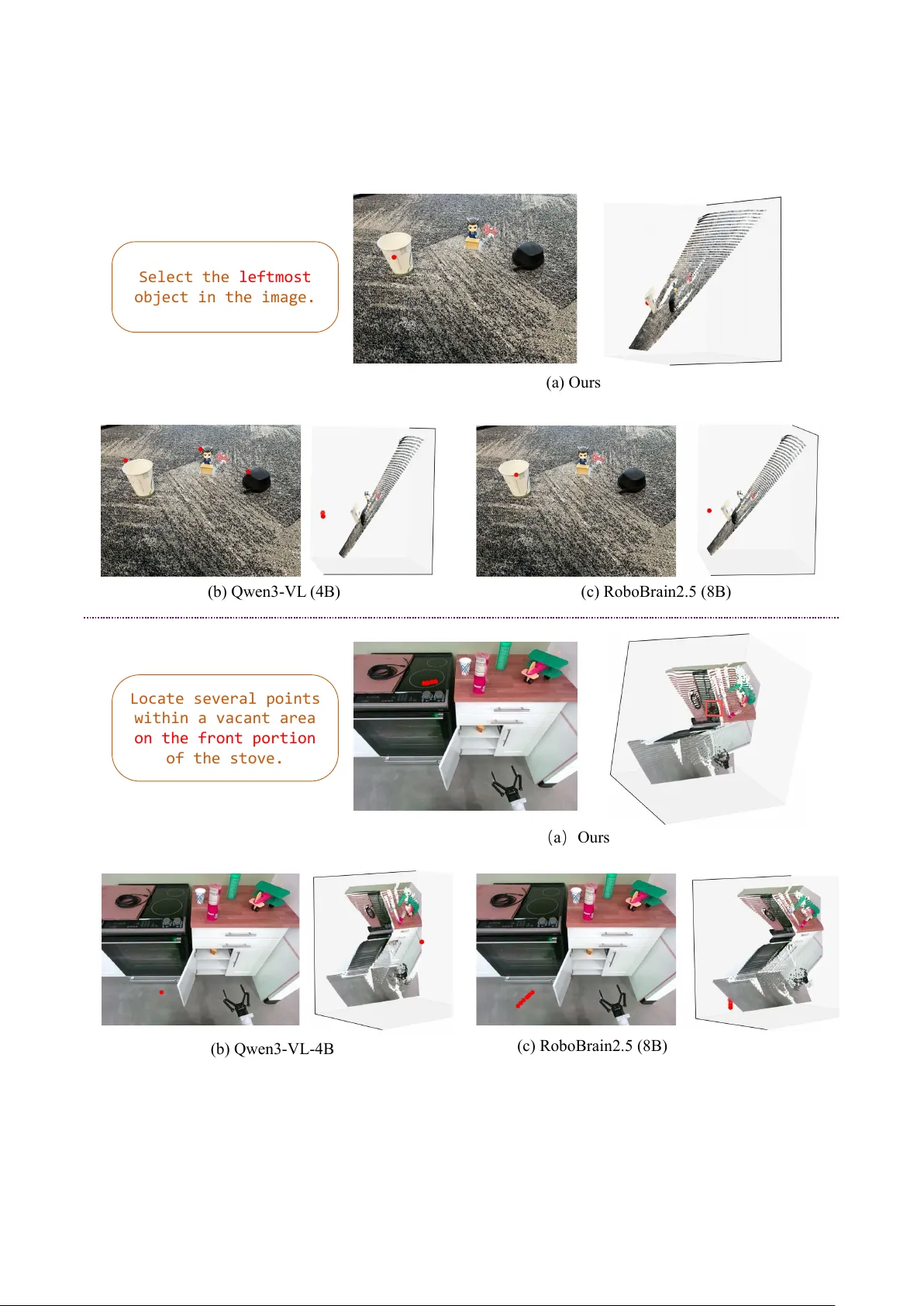

Qiming Zhu 1,2,*, Zhirui Fang 1,2,*, Tianming Zhang 1, Chuanxiu Liu 1, Xiaoke Jiang 1,†, Lei Zhang 1,3

1 Visincept Research, 2 Tsinghua University, 3 International Digital Economy Academy (IDEA)

* Work done during an internship at Visincept. † Corresponding author.

Abstract

Embodied intelligence fundamentally requires a capability to determine where to act in 3D space. We formalize this requirement as embodied localization: the problem of predicting executable 3D points conditioned on visual observations and language instructions. We instantiate embodied localization with two complementary target types: touchable points, surface-grounded 3D points enabling direct physical interaction, and air points, free-space 3D points specifying placement and navigation goals, directional constraints, or geometric relations. Embodied localization is inherently a problem of embodied 3D spatial reasoning, yet most existing vision-language systems rely predominantly on RGB inputs, necessitating implicit geometric reconstruction that limits cross-scene generalization, despite the widespread adoption of RGB-D sensors in robotics. To address this gap, we propose SpatialPoint, a spatial-aware vision-language framework that integrates structured depth into a vision-language model (VLM) and generates camera-frame 3D coordinates. We construct a 2.6M-sample RGB-D dataset covering both touchable and air points QA pairs for training and evaluation. Extensive experiments demonstrate that incorporating depth into VLMs significantly improves embodied localization performance. We further validate SpatialPoint through real-robot deployment across three representative tasks: language-guided robotic arm grasping at specified locations, object placement to target destinations, and mobile robot navigation to goal positions.
Project page: https://qimingzhu-google.github.io/SpatialPoint/

1 Introduction

Driven by the broader push toward AGI, recent advances in embodied intelligence [1–3] increasingly shift focus from passive visual recognition toward spatially executable perception. In many manipulation-oriented and language-conditioned robotic tasks [4, 5], the central requirement is to determine where to act in 3D space. We argue that a broad class of embodied behaviors, ranging from grasping and placement to navigation and motion specification, can be unified under a single abstraction: predicting executable 3D points conditioned on visual observations and language instructions. We term this problem embodied localization.

We instantiate embodied localization with two complementary target types. Touchable points are surface-grounded 3D coordinates that enable direct physical interaction, such as grasping or contact [6, 7]. Air points are free-space 3D coordinates that specify placement and navigation goals, directional constraints, or geometric relations beyond visible surfaces [8]. Together, these two types constitute a minimal yet expressive representation of executable actions, unifying perception-supported and reasoning-driven targets within a single framework, as illustrated in Figure 1.

Embodied localization is inherently a problem of embodied 3D spatial reasoning. However, most existing vision-language models (VLMs) [9–11] rely predominantly on RGB inputs, predicting 2D bounding boxes, masks, or other image-level outputs. While such models can produce guesses for 3D queries, they cannot guarantee alignment with real-world metric geometry [12–14]. Monocular RGB cues lack explicit metric depth, often resulting in insufficient geometric fidelity for precise spatial reasoning.
Although multi-view reconstruction can recover 3D structure, it is mathematically complex and heavily dependent on accurate camera calibration and relative poses. Consequently, learning 3D spatial structure implicitly from RGB views alone is challenging, prone to overfitting to training scenes, and often exhibits weak cross-scene generalization, despite the widespread adoption of RGB-D sensors in robotics and industrial systems that provide direct metric depth measurements.

To address this gap, we propose SpatialPoint, a spatial-aware vision-language framework that directly integrates structured depth into a VLM and generates camera-frame 3D coordinates via the language modeling head. However, incorporating a new depth modality into a pre-trained vision-language model that expects only RGB and language inputs is non-trivial. This is precisely why most existing approaches avoid using depth at the input stage, instead treating it as an auxiliary cue for feature augmentation or post-hoc refinement (see Section 2.3). To tackle this modality integration challenge, SpatialPoint takes RGB and structured depth as parallel inputs: depth is first encoded into a 3-channel representation and processed by a dedicated depth backbone, producing depth tokens that are fused with visual and language features within the multimodal backbone for depth-aware reasoning. To fully activate the depth modality, we further adopt a two-stage training strategy that progressively aligns the depth encoder with the pre-trained VLM. Together, these careful design choices are what enable structured depth to contribute meaningfully to embodied 3D spatial reasoning, rather than introducing noise or degrading the pre-trained capabilities.

Figure 1: Embodied localization as executable 3D target prediction. We reduce embodied execution to predicting camera-frame 3D points of two complementary types: touchable points grounded on observed surfaces, and air points located in free space and specified by spatial language. Panels: (a) 2D reference, (b) 3D surface targets, (c) 3D free-space targets.

To enable large-scale training and evaluation, we construct SpatialPoint-Data, a 2.6M-sample RGB-D dataset covering both touchable and air points question-answer pairs. Touchable targets are obtained by lifting 2D interaction annotations into camera-frame 3D coordinates using depth and camera geometry. Air targets are automatically synthesized to cover direction-specified and relation-centric queries with optional metric constraints. We further establish SpatialPoint-Bench for unified evaluation over both target types. Extensive experiments demonstrate that incorporating structured depth into VLMs consistently improves embodied localization performance over RGB-only baselines and alternative geometry-input designs, establishing depth tokens as a critical geometric inductive bias for scalable, spatial-aware embodied reasoning.

Finally, we validate SpatialPoint through real-robot deployment across three representative tasks: language-guided robotic arm grasping at specified locations (touchable points), object placement to target destinations (air points), and mobile robot navigation to goal positions (air points). The consistently strong real-world performance demonstrates the practical effectiveness and generalizability of our approach.

Our contributions are threefold:

1. We introduce embodied localization as a unified formulation of executable 3D target prediction and instantiate it with two complementary types: touchable points and air points.
2. We construct SpatialPoint-Data (2.6M samples) and SpatialPoint-Bench, enabling large-scale training and standardized evaluation of depth-aware embodied localization.
3. We propose SpatialPoint, an RGB-D extension of a VLM with an explicit depth-token stream that directly generates camera-frame 3D coordinates, achieving consistent gains in spatial reasoning and cross-scene generalization, validated by both offline benchmarks and real-robot experiments.

2 Related Work

2.1 Spatial Understanding in Vision-Language Models

Recent vision-language models (VLMs) have progressed from 2D grounding toward spatial understanding in 3D environments. A common direction is to explicitly inject 3D representations into multimodal frameworks [15, 16], e.g., aligning point-cloud features with large language models for 3D grounding, or improving grounding and relational reasoning via fine-grained reward modeling [17]. Another line preserves strong 2D backbones while enabling 3D reasoning through multi-view inputs or coordinate prompting [18–20].

Hybrid approaches further incorporate geometric cues such as depth, lifting, or spatial programs. SpatialRGPT [12] introduces a depth plugin that leverages monocular (relative) depth to improve direction and distance reasoning; ZSVG3D [21] formulates spatial reasoning as visual programs; and several works lift 2D detections into 3D space for object-level localization [13, 22]. Recent methods also explore more expressive 3D representations, such as 3D Gaussians and structured scene abstractions [23–26], to support relational reasoning.
Despite these advances, most approaches remain object-centric, focusing on 3D boxes, scene graphs, or coarse abstractions, rather than producing fine-grained executable spatial targets for embodied interaction.

2.2 Vision-Language Interfaces for Manipulation Execution

Embodied tasks ultimately require actionable targets in metric 3D space. One line of work [6] predicts language-conditioned interaction points or affordances, emphasizing contact-level grounding for manipulation. Another line represents actions in structured 3D spaces to better couple perception and control, such as voxelized action prediction from RGB-D reconstructions [5] or sequence-level control generation in continuous action spaces [27, 28].

More recently, vision-language-action (VLA) models scale end-to-end policy learning for multi-task generalization. Systems such as RT-1/RT-2 [2, 29], PaLM-E [1], and SayCan [4] integrate language, vision, and control at different levels of abstraction, while open generalist policy backbones, including OpenVLA and Octo [30, 31], are trained on large embodied datasets. Complementary to policy learning, explicit 3D world modeling offers geometry-grounded action representations; for example, PointWorld [32] predicts 3D point flows in a shared metric space for manipulation.

On the dataset side, RoboAfford [33] scales affordance supervision for manipulation, emphasizing object parts and surface contact regions. At a broader scope, RoboBrain 2.5 [34] aggregates multi-source embodied supervision to support generalist models, including depth-aware coordinate-related signals.

However, existing approaches typically focus either on surface-supported interaction points or on end-to-end action generation, and rarely provide a unified view that covers both surface-attached targets and reasoning-required goals in surrounding free space under a consistent vision-conditioned interface.
2.3 Depth Cues for Spatial Reasoning in VLMs and VLAs

In practical robotics, depth is often readily available via low-cost RGB-D sensors [35, 36] (or can be approximated by monocular depth estimation [37]), making metric geometry an economical signal rather than an extra burden. Accordingly, depth cues provide a direct way to inject metric structure into vision-language models and embodied policies, improving direction and distance reasoning beyond what RGB-only cues can reliably support.

Table 1: Composition of SpatialPoint-Data and SpatialPoint-Bench. Ratios are computed within each category.

| Category | Subtype | #QA | Ratio (%) |
|---|---|---|---|
| Touchable-Data | Object detection | 513,395 | 26.99 |
| Touchable-Data | Object pointing | 161,681 | 8.50 |
| Touchable-Data | Object affordance | 560,836 | 29.48 |
| Touchable-Data | Object reference | 346,617 | 18.22 |
| Touchable-Data | Region reference | 319,961 | 16.82 |
| Touchable-Bench | Object affordance | 124 | 36.69 |
| Touchable-Bench | Spatial affordance | 100 | 29.59 |
| Touchable-Bench | Object reference | 114 | 33.73 |
| Air-Data | direction only | 252,267 | 35.18 |
| Air-Data | direction (offset) | 241,373 | 33.66 |
| Air-Data | body-length | 138,615 | 19.33 |
| Air-Data | between | 26,981 | 3.76 |
| Air-Data | between (offset) | 57,890 | 8.07 |
| Air-Bench | direction only | 927 | 37.91 |
| Air-Bench | direction (offset) | 855 | 34.97 |
| Air-Bench | body-length | 422 | 17.26 |
| Air-Bench | between | 72 | 2.94 |
| Air-Bench | between (offset) | 169 | 6.91 |

For VLMs, SpatialBot [38] explicitly consumes RGB and depth images to enhance depth-aware spatial understanding, while SpatialRGPT [12] introduces a flexible depth plugin that incorporates (typically estimated) depth maps as auxiliary inputs to existing VLM visual encoders and benchmarks. For VLAs, DepthVLA [39] explicitly incorporates depth prediction into policy architectures to improve manipulation robustness, while PointVLA and GeoVLA [40, 41] inject point-cloud or depth-derived geometric features to enhance spatial generalization.
Beyond adding depth as an auxiliary cue, 3D-VLA [42] links 3D perception, reasoning, and action through a 3D-centric foundation model, and LLaVA-3D [43] adapts large multimodal models toward 3D outputs via 3D-augmented visual patches.

These efforts collectively support the view that explicit depth cues are valuable for metric spatial reasoning. In contrast to end-to-end action generation, our depth-aware vision-language modeling directly produces camera-frame 3D target points and evaluates both touchable and air targets with complementary metrics.

3 Methodology

We formulate embodied localization as depth-aware, language-conditioned 3D point prediction. Given an RGB image, a depth map, and an instruction, the model generates a list of 3D target points. This section presents the construction of SpatialPoint-Data, which provides the training data needed to introduce a new depth modality into vision-language models; followed by the network architecture and training strategy of SpatialPoint; and finally the evaluation protocol, including the proposed metrics and the benchmark dataset SpatialPoint-Bench. Table 1 summarizes the composition of SpatialPoint-Data across both touchable-point and air-point supervision.

3.1 SpatialPoint-Data and Data Engine

Data source. Because introducing a new input modality into a VLM is non-trivial and benefits from large-scale data, we build SpatialPoint-Data on RoboAfford-Data [33], due to its large scale, diverse manipulation-related task types, and publicly available RGB imagery. Figure 2 provides an overview of our data engine, as explained in the following text.

Figure 2: Our data engine. Touchable points are converted from RoboAfford [33] 2D annotations using monocular depth estimation [37]. Air points are generated from objects' 3D relations, which are computed by lifting DINO-X [10] detections (caption/bbox/mask) into the camera frame using the estimated depth map and intrinsics, and then applying geometric computations.

Each target is encoded as a triplet (u, v, Z), where (u, v) are pixel coordinates in the image frame and Z is pixel depth in millimeters. In our implementation, u and v are integers in [0, 1000), and Z is an integer-valued depth. Targets are serialized as plain text and learned via standard token prediction, without additional coordinate binning. We use an off-the-shelf monocular depth estimation model [37] to estimate depth for each RGB image.

Touchable points via monocular depth estimation. RoboAfford provides 2D QA pairs with surface-attached supervision, where each query is associated with valid 2D points on the image. Therefore, we first obtain the 2D target location (u, v) from the 2D annotation, look up the corresponding depth value Z from the estimated depth map at (u, v), and form the unified 3D target (u, v, Z). By applying this lift-from-2D procedure to all touchable points samples, we construct 1.9M touchable points QA pairs.

Air points via 3D relation analysis. Air points are not anchored to annotated surface contacts. Instead, we generate QA pairs from RGB images using estimated depth and geometry constraints derived from object-centric 3D context. Concretely, we first parse each image with DINO-X [10] to obtain objects' captions, bounding boxes, and masks. Combining these outputs with the estimated depth map and intrinsics, we lift the scene into the camera frame to obtain object-centric 3D occupancy cues.
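
The depth lookup that forms a (u, v, Z) target and the lifting of such a target into the camera frame can be sketched as follows. This is a minimal illustration rather than the authors' implementation: it assumes that u and v are pixel coordinates rescaled by the image size to integers in [0, 1000), that the depth map is a nested list of millimeter values indexed as [row][column], and that lifting uses a standard pinhole model. The function names and the intrinsics arguments (fx, fy, cx, cy) are hypothetical.

```python
from typing import List, Sequence, Tuple

GRID = 1000  # u and v are integers in [0, 1000), as stated in the text

Target = Tuple[int, int, int]


def lift_to_target(px: int, py: int, depth_mm: Sequence[Sequence[float]]) -> Target:
    """Form a (u, v, Z) target from a 2D annotation at pixel (px, py).

    Assumption: u, v are pixel coordinates rescaled by image size to [0, 1000).
    """
    height, width = len(depth_mm), len(depth_mm[0])
    z = int(round(depth_mm[py][px]))        # depth lookup at the annotated pixel
    u = min(GRID - 1, px * GRID // width)   # rescale column index
    v = min(GRID - 1, py * GRID // height)  # rescale row index
    return u, v, z


def serialize(targets: List[Target]) -> str:
    """Plain-text form of a target list, suitable for ordinary token prediction."""
    return "[" + ", ".join(f"({u}, {v}, {z})" for u, v, z in targets) + "]"


def back_project(u: int, v: int, z_mm: float, width: int, height: int,
                 fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float, float]:
    """Pinhole back-projection of (u, v, Z) to camera-frame XYZ in millimeters."""
    px = u * width / GRID    # undo the [0, 1000) rescaling (assumed convention)
    py = v * height / GRID
    x = (px - cx) * z_mm / fx
    y = (py - cy) * z_mm / fy
    return x, y, float(z_mm)
```

Applying `back_project` to every pixel inside an object mask would give the kind of per-object point set from which a proxy box and an anchor can be computed; how the authors build those proxies in detail is not specified here.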
Based on the lifted geometry, we directly derive objects' 3D relations via geometric computations. Using the resulting 3D relations, we construct 0.72M QA pairs over 26 discrete camera-frame directions (six canonical axes and their compositions) and organize them into three groups: (i) direction queries that ask for a point in a specified direction (optionally with a metric offset), (ii) between-object queries that place a point between two referenced objects, optionally with distance constraints (e.g., near one object or with a metric offset), and (iii) body-length queries that mirror the direction form but express distance using body-length units, requiring the model to reason about the target object's physical extent.

3.2 Model Architecture

Given an RGB image I, a depth map D, and an instruction T, the input sequence is composed of RGB visual tokens, depth visual tokens, and text tokens. RGB and depth tokens are enclosed by dedicated delimiters. Conditioned on this RGB-D prefix, the model predicts a variable-length list of camera-centric targets in the structured form [(u, v, Z), ...].

Figure 3: Model overview. We add a dedicated depth encoder by duplicating the original visual backbone and feeding it a three-channel depth map to obtain depth tokens, wrapped by a pair of special delimiter tokens. RGB/depth/text tokens form one causal sequence, and the LM head decodes structured (u, v, Z) point lists. Example shown in the figure: question "Point out 2 points in the camera coordinate frame that lie to the lower-right of the tissue box."; answer (865, 711, 825) (732, 689, 810).

Depth map encoding.
Inspired by SpatialBot [38], we encode the single-channel depth map into a three-channel uint8 depth image, making depth compatible with the vision tokenizer while preserving geometric structure.

Table 2: Touchable points results on RoboAfford-Eval. We report overall accuracy and per-category accuracy for object affordance prediction (OAP), object affordance recognition (OAR), and spatial affordance localization (SAL). For methods that output (u, v, Z) targets, we additionally report depth MAE (mm) against the monocular depth reference used in our lifted-3D evaluation, with an inside/outside breakdown; otherwise depth metrics are marked as "-".

| Method | Overall ↑ | OAP ↑ | OAR ↑ | SAL ↑ | MAE_Z (in) ↓ | MAE_Z (out) ↓ | MAE_Z (all) ↓ |
|---|---|---|---|---|---|---|---|
| RoboPoint [6] | 0.447 | 0.350 | 0.557 | 0.442 | - | - | - |
| RoboAfford-Qwen [33] | 0.593 | 0.453 | 0.661 | 0.579 | - | - | - |
| RoboAfford-Qwen++ [44] | 0.634 | 0.631 | 0.705 | 0.558 | - | - | - |
| RoboBrain 2.5 [34] | 0.741 | 0.673 | 0.876 | 0.671 | 205.1 | 255.9 | 217.9 |
| Qwen2.5-VL-7B [45] | 0.161 | 0.083 | 0.195 | 0.219 | - | - | - |
| Qwen3-VL-Inst-2B [11] | 0.319 | 0.307 | 0.445 | 0.190 | - | - | - |
| Qwen3-VL-Inst-4B [11] | 0.503 | 0.573 | 0.540 | 0.375 | 567.1 | 577.9 | 574.8 |
| Ours | 0.790 | 0.785 | 0.885 | 0.689 | 9.3 | 54.4 | 17.2 |

Table 3: Overall air points evaluation on SpatialPoint-Bench (2445 queries).

| Method | DirPt | MetPt@5cm | MeanErr (cm) |
|---|---|---|---|
| RoboBrain 2.5 [34] | 0.0804 | 0.0637 | 30.3412 |
| Qwen3-VL-Inst-4B [11] | 0.0532 | 0.0896 | 54.7086 |
| Ours (epoch 1) | 0.4886 | 0.2587 | 8.5008 |
| Ours (epoch 2) | 0.5088 | 0.2907 | 7.3034 |
| Ours (epoch 3) | 0.5071 | 0.3347 | 6.8084 |

Dual backbones for RGB and depth. Since the depth map constitutes a new visual modality, we introduce a dedicated depth backbone while keeping the rest of the model unchanged. To maximize modality alignment, we directly duplicate the RGB visual backbone and allocate it exclusively to depth, using the same architecture but separate parameters. Both backbones operate on the same patch grid, yielding token sequences that are naturally aligned for subsequent joint reasoning.
To make the depth backbone effective without destabilizing the pretrained components, we adopt a two-stage training strategy. In Stage 1, we freeze all modules except the depth backbone and train only this backbone with a 10× larger learning rate. In Stage 2, we unfreeze the full model and perform joint finetuning with the standard learning rate.

Causal multimodal fusion and structured decoding. We follow the standard causal VLM interface that organizes non-text modalities as explicit segments in a single token sequence. In typical VLMs, image patch tokens are inserted into the prompt and bracketed by dedicated boundary markers to make the visual prefix explicit without changing the underlying attention pattern. Following the same design principle, we introduce a symmetric pair of special tokens to delimit the depth segment, within which we place the depth-backbone patch tokens. These non-overlapping marker spans keep RGB and depth explicit in the multimodal prefix while preserving the original causal architecture. Conditioned on the RGB-depth prefix and the instruction tokens, the model autoregressively generates a bracketed list of 3D targets with a fixed syntax (e.g., [(123, 456, 789), ...]). We then deterministically parse the generated string into (u, v, Z) tuples for evaluation and downstream geometric computation.

3.3 SpatialPoint-Bench

Evaluation data. We evaluate on SpatialPoint-Bench, whose images are sourced from the real-scene split of RoboAfford-Eval [33]. For each image, we predict a dense depth map using the same monocular depth estimator as in training, and use it to express both predictions and targets in the unified (u, v, Z) format.

For touchable points evaluation, RoboAfford-Eval [33] provides a ground-truth valid 2D region mask for each query.
By pairing this mask with the estimated depth at the corresponding locations, we obtain surface-attached (u, v, Z) targets and a well-defined touchable points benchmark.

For air points evaluation, we generate air points QA pairs by following the same geometry-constrained synthesis procedure used for training (Section 3.1).

Touchable points: 2D accuracy from region masks. Each model response yields a set of predicted points $\{(\hat{u}_i, \hat{v}_i, \hat{Z}_i)\}_{i=1}^{N}$. Given the valid region mask $M$, per-query 2D accuracy is defined as the fraction of predicted $(\hat{u}_i, \hat{v}_i)$ that fall inside the valid region:

$$\mathrm{Acc}_{2D} = \frac{1}{N} \sum_{i=1}^{N} \mathbb{I}\big[ M(\hat{u}_i, \hat{v}_i) = 1 \big]. \tag{1}$$

Touchable points: depth error (MAE) against depth reference. We evaluate depth by comparing $\hat{Z}_i$ against the depth reference value $Z_{\mathrm{ref}}(\hat{u}_i, \hat{v}_i)$ at the predicted location, and report mean absolute error (mm):

$$\mathrm{MAE}_Z = \frac{1}{N} \sum_{i=1}^{N} \big| \hat{Z}_i - Z_{\mathrm{ref}}(\hat{u}_i, \hat{v}_i) \big|. \tag{2}$$

We additionally report $\mathrm{MAE}_Z$ on predictions inside the mask, outside the mask, and overall.

Table 4: Point-level micro results by category. MetPt is computed on direction-correct points in metric-offset queries (denominator: dir-passed points in has_metric samples).

| Method | Direction DirPt ↑ | Direction MetPt ↑ | Direction FullPt ↑ | Between DirPt ↑ | Between MetPt ↑ | Between FullPt ↑ | Body-length DirPt ↑ | Body-length MetPt ↑ | Body-length FullPt ↑ |
|---|---|---|---|---|---|---|---|---|---|
| Qwen3-VL-Inst-4B [11] | 0.0573 | 0.1111 | 0.0050 | 0.0108 | 0.2222 | 0.0024 | 0.0627 | 0.0175 | 0.0011 |
| RoboBrain 2.5 [34] | 0.0817 | 0.0827 | 0.0070 | 0.0543 | 0.0333 | 0.0018 | 0.0904 | 0.0455 | 0.0041 |
| Ours (epoch 1) | 0.4856 | 0.3096 | 0.1571 | 0.3825 | 0.1833 | 0.0701 | 0.5637 | 0.1959 | 0.1104 |
| Ours (epoch 2) | 0.5264 | 0.2864 | 0.1523 | 0.4031 | 0.2711 | 0.1093 | 0.4964 | 0.3093 | 0.1535 |
| Ours (epoch 3) | 0.5161 | 0.3764 | 0.1867 | 0.4371 | 0.2123 | 0.0928 | 0.5097 | 0.3143 | 0.1602 |

Air points: direction correctness. We evaluate whether a prediction satisfies the language-specified geometric relation in the camera frame. Let $\mathbf{c}$ denote the anchor (proxy-box center) of the referenced object, and $\mathbf{p}$ be the predicted 3D point obtained by back-projecting the pixel $(u, v, Z)$ in the image to 3D space. For direction queries with a direction unit vector $\mathbf{d}$, we mark direction as correct if the prediction lies within a conic sector, i.e., $\angle(\mathbf{p} - \mathbf{c}, \mathbf{d}) \le \alpha$, where $\alpha$ is a fixed angular tolerance.

For between-object queries, we define a cylindrical corridor along the segment connecting the two object anchors $\mathbf{c}_A$ and $\mathbf{c}_B$. A prediction is marked correct if it lies within a radius-$\rho$ cylinder around the segment and projects onto the segment (within endpoints), capturing the "in-between" constraint.

Occupation exclusion. To enforce "not occupied by other objects", we reject predictions that fall inside any other object's inflated proxy box (constructed from lifted mask point clouds under estimated depth).

Air points: distance bias error (conditional). For queries that include an explicit distance constraint, we evaluate distance only when the direction or relationship constraint is satisfied. Let $r = \lVert \mathbf{p} - \mathbf{c} \rVert_2$ be the predicted displacement magnitude (with anchor $\mathbf{c}$ chosen per query type) and $r^{*}$ be the required magnitude. We report distance bias as

$$\mathrm{Bias}_{\mathrm{dist}} = | r - r^{*} |. \tag{3}$$

Body-length definition. To reduce sensitivity to mask noise, we define the body-length scale from the coarse 3D proxy box: the body length is set to half of the proxy box diagonal length.
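
The air-point checks described above (conic sector, cylindrical corridor, occupation exclusion, Equation (3), and the body-length scale) can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not the benchmark's reference code: points are plain (x, y, z) tuples in the camera frame, proxy boxes are assumed to be axis-aligned and given as (min corner, max corner), and the tolerance values passed for `alpha_deg`, `rho`, and `margin` are hypothetical because the text does not state them.

```python
import math
from typing import Iterable, Tuple

Vec = Tuple[float, float, float]


def _sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _norm(a: Vec) -> float:
    return math.sqrt(_dot(a, a))


def direction_correct(p: Vec, c: Vec, d: Vec, alpha_deg: float) -> bool:
    """Conic-sector test: angle(p - c, d) <= alpha."""
    v = _sub(p, c)
    nv, nd = _norm(v), _norm(d)
    if nv == 0.0 or nd == 0.0:
        return False
    cos_angle = max(-1.0, min(1.0, _dot(v, d) / (nv * nd)))
    return math.degrees(math.acos(cos_angle)) <= alpha_deg


def between_correct(p: Vec, c_a: Vec, c_b: Vec, rho: float) -> bool:
    """Cylinder test: p projects within the segment c_a -> c_b and lies within rho of it."""
    ab = _sub(c_b, c_a)
    length_sq = _dot(ab, ab)
    if length_sq == 0.0:
        return False
    t = _dot(_sub(p, c_a), ab) / length_sq  # 0 at c_a, 1 at c_b
    if not 0.0 <= t <= 1.0:
        return False
    foot = (c_a[0] + t * ab[0], c_a[1] + t * ab[1], c_a[2] + t * ab[2])
    return _norm(_sub(p, foot)) <= rho


def occupied(p: Vec, boxes: Iterable[Tuple[Vec, Vec]], margin: float) -> bool:
    """True if p falls inside any proxy box inflated by margin (axis-aligned assumption)."""
    return any(
        all(lo[i] - margin <= p[i] <= hi[i] + margin for i in range(3))
        for lo, hi in boxes
    )


def distance_bias(p: Vec, c: Vec, r_star: float) -> float:
    """Equation (3): |r - r*| with r = ||p - c||_2."""
    return abs(_norm(_sub(p, c)) - r_star)


def body_length(box_min: Vec, box_max: Vec) -> float:
    """Half of the proxy-box diagonal length."""
    return 0.5 * _norm(_sub(box_max, box_min))
```

In this sketch a distance-constrained query would be scored by calling `direction_correct` or `between_correct` first and evaluating `distance_bias` only when that check passes, which mirrors the conditional protocol described above.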
Accordingly, $r^{*}$ in Equation (3) is specified in body-length units for body-length queries, and in metric units for metric-offset queries.

4 Experiments

In this section, we begin with training details and evaluation metrics, then present the main experimental results, followed by ablation studies and qualitative visualizations. Beyond offline evaluation, we further conduct real-robot experiments to validate practical effectiveness; the experimental setup, results, and video demonstrations are available in the supplementary material.

4.1 Experimental Setup

Dataset. Our network is trained with SpatialPoint-Data, which includes approximately 1.9M touchable points samples and 0.72M air points samples. Our offline evaluation is conducted on SpatialPoint-Bench.

Model training. We build upon Qwen3-VL-Inst-4B [11] and introduce an explicit depth-token stream while keeping the original causal multimodal transformer unchanged (see Figure 3). We adopt a two-stage optimization scheme for the newly introduced depth branch. We first warm up the depth branch by training only the duplicated depth vision backbone while freezing the VLM, the RGB vision backbone, and the multimodal transformer; during this stage, we use a learning rate that is 10× the base learning rate. We then unfreeze all components and jointly fine-tune the entire model with AdamW and cosine learning-rate decay to perform instruction-tuned point generation under mixed surface and air points supervision. Unless otherwise specified, we train for 1 epoch. All experiments are run on 8 GPUs with per-GPU batch size 4 and gradient accumulation 2. During inference, we decode the coordinate list from the LM head with top-p sampling (p = 0.9), top-k sampling (k = 50), and temperature 0.1.
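
Once a coordinate list has been decoded, the deterministic parsing step from Section 3.2 and the touchable-point metrics of Equations (1) and (2) can be sketched as below. This is a minimal sketch, not the authors' evaluation script: it assumes the fixed output syntax [(u, v, Z), ...] with integer entries, and it assumes that the region mask and depth reference are nested lists indexed as [v][u] on the same coordinate grid as the predictions. The function names, and the choices to count out-of-range points as misses and to skip them in the depth error, are hypothetical.

```python
import math
import re
from typing import List, Sequence, Tuple

Point = Tuple[int, int, int]

# One "(u, v, Z)" integer triplet; whitespace around numbers is tolerated.
_TRIPLET = re.compile(r"\(\s*(-?\d+)\s*,\s*(-?\d+)\s*,\s*(-?\d+)\s*\)")


def parse_points(text: str) -> List[Point]:
    """Extract every (u, v, Z) triplet from a decoded string."""
    return [(int(m.group(1)), int(m.group(2)), int(m.group(3)))
            for m in _TRIPLET.finditer(text)]


def _in_bounds(u: int, v: int, grid: Sequence[Sequence[float]]) -> bool:
    return 0 <= v < len(grid) and 0 <= u < len(grid[0])


def acc_2d(points: List[Point], mask: Sequence[Sequence[int]]) -> float:
    """Equation (1): fraction of predicted (u, v) inside the valid region mask."""
    if not points:
        return 0.0  # assumption: an empty prediction scores zero
    hits = sum(1 for u, v, _ in points if _in_bounds(u, v, mask) and mask[v][u] == 1)
    return hits / len(points)


def mae_z(points: List[Point], z_ref: Sequence[Sequence[float]]) -> float:
    """Equation (2): mean |Z_hat - Z_ref(u_hat, v_hat)| in millimeters."""
    errors = [abs(z - z_ref[v][u]) for u, v, z in points if _in_bounds(u, v, z_ref)]
    return sum(errors) / len(errors) if errors else math.nan
```

The inside-mask and outside-mask variants of the depth error follow by filtering `points` with the mask before calling `mae_z`.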
To study longer adaptation on air points targets, we additionally continue fine-tuning on the air points subset for 1/2/3 epochs under the same architecture and recipe, and report the corresponding checkpoints in Tables 3 and 4.

4.2 Main Results

We report results on touchable points and air points following the evaluation protocols in Section 3.3. All air points metric results are reported conditional on relation correctness, since distance constraints are meaningful only when the intended geometric relation is satisfied.

Touchable Points. Table 2 summarizes performance on RoboAfford-Eval for touchable points. We report the official 2D accuracy across the three categories, as well as the overall average: Object Affordance Recognition (OAR), identifying objects based on attributes such as category, color, size, and spatial relations; Object Affordance Prediction (OAP), localizing functional parts of objects to support specific actions, such as the handle of a teapot for grasping; and Spatial Affordance Localization (SAL), detecting vacant areas in the scene for object placement and robot navigation. Our method achieves the highest accuracy among the compared methods, both overall and in each of the three categories. For methods that generate (u, v, Z) outputs, we additionally report depth MAE (mm) against the monocular depth reference used in our evaluation pipeline, with an inside/outside breakdown; methods that do not output depth are marked as "-".

Figure 4: Surface-target qualitative comparison on RoboAfford-Eval (touchable-point). Panels: (a) Qwen3-VL-4B, (b) Ours. Queries shown: "Pinpoint several points on the rightmost bottle in the image" and "Locate some points between the green part and the white part on the left side of the table".

Air points. Table 3 summarizes overall air points performance, while Table 4 reports point-level micro results by category. DirPt/MetPt are point-level micro accuracies.
DirPt measures relation correctness (direction cone or between cylinder). For distance-constrained queries we additionally report MetPt@5cm and MeanErr (cm), both computed only on relation-correct points, and FullPt, the joint success rate requiring both a correct relation and a satisfied distance constraint. For body-length constraints, the required offset is instantiated as a metric distance via the object-specific body-length scale derived from proxy geometry, enabling a unified 5 cm tolerance across query types.

We include checkpoints fine-tuned for 1/2/3 epochs on the air points subset to study the effect of longer air points adaptation. From epoch 1 to epoch 3, MetPt@5cm rises from 0.2587 to 0.3347 and MeanErr falls from 8.50 cm to 6.81 cm, while DirPt plateaus after epoch 2 (0.5088 vs. 0.5071).

Figure 5: Free-space qualitative comparison on our benchmark. Under the same queries, our model better satisfies air points relation constraints, compared to Qwen3-VL, demonstrating more reliable air points target prediction. Panels: (a) Qwen3-VL-4B, (b) Ours. Queries shown: "Point out 5 points that are to the front right of a blue mug, reported in camera coordinate frame" and "Point out 5 points that are between blue mug and Quaker cereal, reported in camera coordinate frame".

Table 5: Touchable points ablations on RoboAfford-Eval. We vary the extra 3D input modality (depth map vs. point cloud), whether a dual-branch backbone is used, whether depth is encoded in the SpatialBot style (Depth → 3ch) when applicable, and whether special depth delimiter tokens are used. Depth MAE is reported only when valid depth predictions are available under our lifted-3D evaluation; otherwise it is marked as "-".

| Model | Extra input | Dual-branch | SpatialBot enc. | Special tokens | Overall Acc ↑ | Depth MAE (mm) ↓ |
|---|---|---|---|---|---|---|
| Ours e1 | Depth map | ✓ | ✓ | ✓ | 0.790 | 17.2 |
| T1 | - | × | × | × | 0.676 | 227.1 |
| T2 | - | × | × | ✓ | 0.698 | 191.9 |
| T3 | Depth map | × | ✓ | × | 0.708 | 49.2 |
| T4 | Depth map | × | ✓ | ✓ | 0.707 | 22.1 |
| T5 | Depth map | ✓ | × | ✓ | 0.736 | 38.1 |
| T6 | Point cloud | ✓ | - | ✓ | 0.443 | 23.3 |

Table 6: Air points ablations on SpatialPoint-Bench (overall). We fix the dual-branch backbone and special depth tokens (used only when an extra input is provided), and only vary the input representation.

| Variant | Epoch | Input | DirPt ↑ | MetPt@5cm ↑ | FullPt ↑ | MeanErr (cm) ↓ |
|---|---|---|---|---|---|---|
| Ours1 | 1 | SpatialBot-encoded depth | 0.4886 | 0.2587 | 0.1300 | 8.5008 |
| Ours2 | 2 | SpatialBot-encoded depth | 0.5088 | 0.2907 | 0.1456 | 7.3034 |
| Ours3 | 3 | SpatialBot-encoded depth | 0.5071 | 0.3347 | 0.1641 | 6.8084 |
| A1 | 1 | RGB-only | 0.2007 | 0.0810 | 0.0181 | 18.6502 |
| A2 | 2 | RGB-only | 0.3050 | 0.1050 | 0.0344 | 22.5311 |
| A3 | 3 | RGB-only | 0.2556 | 0.0617 | 0.0174 | 26.3364 |
| A4 | 1 | Depth-3ch | 0.4398 | 0.1521 | 0.0693 | 11.6903 |
| A5 | 2 | Depth-3ch | 0.3980 | 0.1403 | 0.0574 | 14.1135 |
| A6 | 3 | Depth-3ch | 0.4200 | 0.1667 | 0.0714 | 12.8000 |
| A7 | 1 | Point cloud (XYZ) | 0.4667 | 0.4532 | 0.2138 | 7.24 |
| A8 | 2 | Point cloud (XYZ) | 0.4712 | 0.4602 | 0.2362 | 6.47 |
| A9 | 3 | Point cloud (XYZ) | 0.4549 | 0.4674 | 0.2284 | 5.25 |

4.3 Ablations and Analysis

We ablate key design choices in our depth-aware formulation, including (A) whether depth is fed to the network, (B) alternative ways to feed depth, and (C) the dual-backbone design for depth tokens. Unless otherwise specified, all variants follow the same training data and evaluation protocols as in Section 4.1 and Section 3.3. Table 5 and Table 6 summarize ablation results on touchable and air points targets, respectively. For air points targets, we analyze two complementary axes: relation correctness and distance fidelity conditioned on correct relation.

(A) Feed depth or not. We remove all depth inputs and train and evaluate the model with only RGB tokens and text tokens as input.
This variant tests whether depth cues are essential for 3D point prediction and geometric consistency. The RGB-only variant drops on both touchable and air-point benchmarks, indicating that explicit depth cues are important for executable 3D target prediction (T1 and T2 for touchable points in Table 5; A1-A3 for air points in Table 6).

(B) Alternative ways to feed depth. We provide geometry as a lifted point cloud (from monocular depth) and convert it into a three-channel representation by directly quantizing (x, y, z) values into three channels. The resulting three-channel "geometry map" is fed to the model as an alternative geometry input, enabling a controlled comparison between depth-token fusion and point-set-derived geometry input under the same VLM interface. Compared with alternative geometry inputs, our depth-token fusion provides a simple and effective interface: it yields the highest relation correctness (DirPt) in Table 6 and better metric precision than the Depth-3ch input (T3-T6 and A4-A9). Notably, point-cloud input shows competitive distance accuracy on air points (in Table 6, its MetPt@5cm of 0.45 to 0.47 exceeds ours) but weaker direction correctness, and it performs substantially worse than the baseline on touchable points (T6: 0.443 overall accuracy). Despite these limitations, point-cloud geometry offers complementary advantages worth further exploration in future work.

(C) Shared vs. dual backbones. We compare using a shared backbone for both RGB and encoded depth images versus duplicating the backbone with separate parameters. This ablation isolates whether a dedicated depth branch improves geometry token quality and downstream performance. The dual-backbone design consistently outperforms the shared-backbone variant (T1-T4; for example, T4 vs. Ours e1 in Table 5: 0.707 vs. 0.790 overall accuracy).

4.4 Qualitative Comparison

We provide qualitative comparisons on RoboAfford-Eval to complement quantitative results.
Figure 4 compares touchable-point predictions between our model and Qwen3-VL, where we visualize predicted points in the image (and depth-lifted 3D when applicable) to illustrate localization quality. Figure 5 compares air-point predictions, highlighting whether generated targets satisfy the intended spatial relation (direction/between) and distance constraints.

5 Conclusion

We introduced a minimalist execution interface for embodied tasks by formulating them as language-conditioned 3D target point prediction with two complementary target types: touchable and air points. Using open-source RGB images with monocular depth estimates, we constructed large-scale supervision and benchmarks under a unified (u, v, Z) camera-centric representation. Building on Qwen3-VL with an explicit depth-token stream, our model directly decodes structured point lists with the LM head and achieves consistent gains on both touchable and air-point benchmarks under our evaluation protocols. Current limitations include reliance on monocular depth estimates, which may degrade in textureless or reflective regions. Future work could extend embodied localization to dynamic scenes, integrate trajectory-level planning, and leverage world models to support richer spatial reasoning across embodied tasks.

References

[1] Danny Driess, Fei Xia, Mehdi SM Sajjadi, Corey Lynch, Aakanksha Chowdhery, Brian Ichter, Ayzaan Wahid, Jonathan Tompson, Quan Vuong, Tianhe Yu, et al. Palm-e: An embodied multimodal language model. arXiv preprint arXiv:2303.03378, 2023.
[2] Anthony Brohan, Noah Brown, Justice Carbajal, Yevgen Chebotar, Joseph Dabis, Chelsea Finn, Keerthana Gopalakrishnan, Karol Hausman, Alex Herzog, Jasmine Hsu, et al. Rt-1: Robotics transformer for real-world control at scale. arXiv preprint arXiv:2212.06817, 2022.
[3] Mohit Shridhar, Lucas Manuelli, and Dieter Fox. Cliport: What and where pathways for robotic manipulation.
In Conference on robot learning, pages 894–906. PMLR, 2022.
[4] Michael Ahn, Anthony Brohan, Noah Brown, Yevgen Chebotar, Omar Cortes, Byron David, Chelsea Finn, Chuyuan Fu, Keerthana Gopalakrishnan, Karol Hausman, et al. Do as i can, not as i say: Grounding language in robotic affordances. arXiv preprint arXiv:2204.01691, 2022.
[5] Mohit Shridhar, Lucas Manuelli, and Dieter Fox. Perceiver-actor: A multi-task transformer for robotic manipulation. In Conference on Robot Learning, pages 785–799. PMLR, 2023.
[6] Wentao Yuan, Jiafei Duan, Valts Blukis, Wilbert Pumacay, Ranjay Krishna, Adithyavairavan Murali, Arsalan Mousavian, and Dieter Fox. Robopoint: A vision-language model for spatial affordance prediction for robotics. arXiv preprint arXiv:2406.10721, 2024.
[7] Yaoyao Qian, Xupeng Zhu, Ondrej Biza, Shuo Jiang, Linfeng Zhao, Haojie Huang, Yu Qi, and Robert Platt. Thinkgrasp: A vision-language system for strategic part grasping in clutter. arXiv preprint arXiv:2407.11298, 2024.
[8] Chenyang Ma, Kai Lu, Ta-Ying Cheng, Niki Trigoni, and Andrew Markham. Spatialpin: Enhancing spatial reasoning capabilities of vision-language models through prompting and interacting 3d priors. Advances in neural information processing systems, 37:68803–68832, 2024.
[9] Shilong Liu, Zhaoyang Zeng, Tianhe Ren, Feng Li, Hao Zhang, Jie Yang, Qing Jiang, Chunyuan Li, Jianwei Yang, Hang Su, et al. Grounding dino: Marrying dino with grounded pre-training for open-set object detection. In European conference on computer vision, pages 38–55. Springer, 2024.
[10] Tianhe Ren, Yihao Chen, Qing Jiang, Zhaoyang Zeng, Yuda Xiong, Wenlong Liu, Zhengyu Ma, Junyi Shen, Yuan Gao, Xiaoke Jiang, et al. Dino-x: A unified vision model for open-world object detection and understanding. arXiv preprint arXiv:2411.14347, 2024.
[11] Shuai Bai, Yuxuan Cai, Ruizhe Chen, Keqin Chen, Xionghui Chen, Zesen Cheng, Lianghao Deng, Wei Ding, Chang Gao, Chunjiang Ge, et al. Qwen3-vl technical report. arXiv preprint arXiv:2511.21631, 2025.
[12] An-Chieh Cheng, Hongxu Yin, Yang Fu, Qiushan Guo, Ruihan Yang, Jan Kautz, Xiaolong Wang, and Sifei Liu. Spatialrgpt: Grounded spatial reasoning in vision-language models. Advances in Neural Information Processing Systems, 37:135062–135093, 2024.
[13] Yung-Hsu Yang, Luigi Piccinelli, Mattia Segu, Siyuan Li, Rui Huang, Yuqian Fu, Marc Pollefeys, Hermann Blum, and Zuria Bauer. 3d-mood: Lifting 2d to 3d for monocular open-set object detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 7429–7439, 2025.
[14] Yuxin Wang, Lei Ke, Boqiang Zhang, Tianyuan Qu, Hanxun Yu, Zhenpeng Huang, Meng Yu, Dan Xu, and Dong Yu. N3d-vlm: Native 3d grounding enables accurate spatial reasoning in vision-language models. arXiv preprint arXiv:2512.16561, 2025.
[15] Jianing Yang, Xuweiyi Chen, Shengyi Qian, Nikhil Madaan, Madhavan Iyengar, David F Fouhey, and Joyce Chai. Llm-grounder: Open-vocabulary 3d visual grounding with large language model as an agent. In 2024 IEEE International Conference on Robotics and Automation (ICRA), pages 7694–7701. IEEE, 2024.
[16] Yilun Chen, Shuai Yang, Haifeng Huang, Tai Wang, Runsen Xu, Ruiyuan Lyu, Dahua Lin, and Jiangmiao Pang. Grounded 3d-llm with referent tokens. arXiv preprint arXiv:2405.10370, 2024.
[17] Siming Yan, Min Bai, Weifeng Chen, Xiong Zhou, Qixing Huang, and Li Erran Li. Vigor: Improving visual grounding of large vision language models with fine-grained reward modeling. In European Conference on Computer Vision, pages 37–53. Springer, 2024.
[18] Zoey Guo, Yiwen Tang, Ray Zhang, Dong Wang, Zhigang Wang, Bin Zhao, and Xuelong Li. Viewrefer: Grasp the multi-view knowledge for 3d visual grounding.
In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 15372–15383, 2023.
[19] Runsen Xu, Zhiwei Huang, Tai Wang, Yilun Chen, Jiangmiao Pang, and Dahua Lin. Vlm-grounder: A vlm agent for zero-shot 3d visual grounding. arXiv preprint arXiv:2410.13860, 2024.
[20] Dingning Liu, Cheng Wang, Peng Gao, Renrui Zhang, Xinzhu Ma, Yuan Meng, and Zhihui Wang. 3daxisprompt: Promoting the 3d grounding and reasoning in gpt-4o. Neurocomputing, 637:130072, 2025.
[21] Zhihao Yuan, Jinke Ren, Chun-Mei Feng, Hengshuang Zhao, Shuguang Cui, and Zhen Li. Visual programming for zero-shot open-vocabulary 3d visual grounding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 20623–20633, 2024.
[22] Rong Li, Shijie Li, Lingdong Kong, Xulei Yang, and Junwei Liang. Seeground: See and ground for zero-shot open-vocabulary 3d visual grounding. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 3707–3717, 2025.
[23] Zhenyang Liu, Yikai Wang, Sixiao Zheng, Tongying Pan, Longfei Liang, Yanwei Fu, and Xiangyang Xue. Reasongrounder: Lvlm-guided hierarchical feature splatting for open-vocabulary 3d visual grounding and reasoning. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 3718–3727, 2025.
[24] Tatiana Zemskova and Dmitry Yudin. 3dgraphllm: Combining semantic graphs and large language models for 3d scene understanding. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 8885–8895, 2025.
[25] Anh Thai, Songyou Peng, Kyle Genova, Leonidas Guibas, and Thomas Funkhouser. Splattalk: 3d vqa with gaussian splatting. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 4712–4721, 2025.
[26] Zhangyang Qi, Zhixiong Zhang, Ye Fang, Jiaqi Wang, and Hengshuang Zhao. Gpt4scene: Understand 3d scenes from videos with vision-language models.
arXiv preprint arXiv:2501.01428, 2025.
[27] Cheng Chi, Zhenjia Xu, Siyuan Feng, Eric Cousineau, Yilun Du, Benjamin Burchfiel, Russ Tedrake, and Shuran Song. Diffusion policy: Visuomotor policy learning via action diffusion. The International Journal of Robotics Research, 44(10-11):1684–1704, 2025.
[28] Tony Z Zhao, Vikash Kumar, Sergey Levine, and Chelsea Finn. Learning fine-grained bimanual manipulation with low-cost hardware. arXiv preprint arXiv:2304.13705, 2023.
[29] Brianna Zitkovich, Tianhe Yu, Sichun Xu, Peng Xu, Ted Xiao, Fei Xia, Jialin Wu, Paul Wohlhart, Stefan Welker, Ayzaan Wahid, et al. Rt-2: Vision-language-action models transfer web knowledge to robotic control. In Conference on Robot Learning, pages 2165–2183. PMLR, 2023.
[30] Moo Jin Kim, Karl Pertsch, Siddharth Karamcheti, Ted Xiao, Ashwin Balakrishna, Suraj Nair, Rafael Rafailov, Ethan Foster, Grace Lam, Pannag Sanketi, et al. Openvla: An open-source vision-language-action model. arXiv preprint arXiv:2406.09246, 2024.
[31] Octo Model Team, Dibya Ghosh, Homer Walke, Karl Pertsch, Kevin Black, Oier Mees, Sudeep Dasari, Joey Hejna, Tobias Kreiman, Charles Xu, et al. Octo: An open-source generalist robot policy. arXiv preprint arXiv:2405.12213, 2024.
[32] Wenlong Huang, Yu-Wei Chao, Arsalan Mousavian, Ming-Yu Liu, Dieter Fox, Kaichun Mo, and Li Fei-Fei. Pointworld: Scaling 3d world models for in-the-wild robotic manipulation. arXiv preprint arXiv:2601.03782, 2026.
[33] Yingbo Tang, Lingfeng Zhang, Shuyi Zhang, Yinuo Zhao, and Xiaoshuai Hao. Roboafford: A dataset and benchmark for enhancing object and spatial affordance learning in robot manipulation. In Proceedings of the 33rd ACM International Conference on Multimedia, pages 12706–12713, 2025.
[34] Huajie Tan, Enshen Zhou, Zhiyu Li, Yijie Xu, Yuheng Ji, Xiansheng Chen, Cheng Chi, Pengwei Wang, Huizhu Jia, Yulong Ao, et al. Robobrain 2.5: Depth in sight, time in mind.
arXiv preprint arXiv:2601.14352, 2026.
[35] Chi Chen, Bisheng Yang, Shuang Song, Mao Tian, Jianping Li, Wenxia Dai, and Lina Fang. Calibrate multiple consumer rgb-d cameras for low-cost and efficient 3d indoor mapping. Remote Sensing, 10(2):328, 2018.
[36] Lukas Rustler, Vojtech Volprecht, and Matej Hoffmann. Empirical comparison of four stereoscopic depth sensing cameras for robotics applications. IEEE Access, 2025.
[37] Haotong Lin, Sili Chen, Junhao Liew, Donny Y Chen, Zhenyu Li, Guang Shi, Jiashi Feng, and Bingyi Kang. Depth anything 3: Recovering the visual space from any views. arXiv preprint, 2025.
[38] Wenxiao Cai, Iaroslav Ponomarenko, Jianhao Yuan, Xiaoqi Li, Wankou Yang, Hao Dong, and Bo Zhao. Spatialbot: Precise spatial understanding with vision language models. In 2025 IEEE International Conference on Robotics and Automation (ICRA), pages 9490–9498. IEEE, 2025.
[39] Tianyuan Yuan, Yicheng Liu, Chenhao Lu, Zhuoguang Chen, Tao Jiang, and Hang Zhao. Depthvla: Enhancing vision-language-action models with depth-aware spatial reasoning. arXiv preprint arXiv:2510.13375, 2025.
[40] Chengmeng Li, Junjie Wen, Yaxin Peng, Yan Peng, and Yichen Zhu. Pointvla: Injecting the 3d world into vision-language-action models. IEEE Robotics and Automation Letters, 11(3):2506–2513, 2026.
[41] Lin Sun, Bin Xie, Yingfei Liu, Hao Shi, Tiancai Wang, and Jiale Cao. Geovla: Empowering 3d representations in vision-language-action models. arXiv preprint arXiv:2508.09071, 2025.
[42] Haoyu Zhen, Xiaowen Qiu, Peihao Chen, Jincheng Yang, Xin Yan, Yilun Du, Yining Hong, and Chuang Gan. 3d-vla: A 3d vision-language-action generative world model. arXiv preprint, 2024.
[43] Chenming Zhu, Tai Wang, Wenwei Zhang, Jiangmiao Pang, and Xihui Liu. Llava-3d: A simple yet effective pathway to empowering lmms with 3d-awareness. arXiv preprint arXiv:2409.18125, 2024.
[44] Xiaoshuai Hao, Yingbo Tang, Lingfeng Zhang, Yanbiao Ma, Yunfeng Diao, Ziyu Jia, Wenbo Ding, Hangjun Ye, and Long Chen. Roboafford++: A generative ai-enhanced dataset for multimodal affordance learning in robotic manipulation and navigation. arXiv preprint arXiv:2511.12436, 2025.
[45] Shuai Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, Sibo Song, Kai Dang, Peng Wang, Shijie Wang, Jun Tang, Humen Zhong, Yuanzhi Zhu, Mingkun Yang, Zhaohai Li, Jianqiang Wan, Pengfei Wang, Wei Ding, Zheren Fu, Yiheng Xu, Jiabo Ye, Xi Zhang, Tianbao Xie, Zesen Cheng, Hang Zhang, Zhibo Yang, Haiyang Xu, and Junyang Lin. Qwen2.5-vl technical report. ArXiv, abs/2502.13923, 2025. URL https://api.semanticscholar.org/CorpusID:276449796.
[46] UFactory. xArm 7 Collaborative Robot Arm: Product Specification. https://www.ufactory.cc/xarm-collaborative-robot-arm, 2023. 7-DOF collaborative robot arm with 700 mm reach and 3.5 kg payload.
[47] AgileX Robotics. TRACER 2.0: Indoor Two-Wheel Differential AGV. https://global.agilex.ai/products/tracer-2-0, 2024. 150 kg payload, 2 m/s maximum speed, 400 W motor power.
[48] AgileX Robotics. PiPER: 6-DOF Lightweight Robotic Arm. https://global.agilex.ai/products/piper, 2024. 1.5 kg payload, 626 mm reach, 0.1 mm repeatability.

Supplementary Material

A More Detailed Task Definitions and Evaluation Protocol

A.1 Touchable and Air Points

Touchable points are surface-grounded 3D targets for direct physical interaction, while air points are free-space 3D targets specified by spatial language. Together, they provide a unified representation of action targets for both object contact and free-space interaction.

A valid air point must satisfy two constraints: it must match the instructed spatial relation, and it must lie in unoccupied free space rather than on or inside occupied objects.
This makes air-point prediction more challenging than direction-only grounding because the model must reason about both relative geometry and free-space validity.

A.2 Unified Output Representation

For both touchable and air points, the model predicts targets in the unified format (u, v, Z), where (u, v) denotes the image-plane location and Z denotes metric depth. This representation keeps the prediction visually anchored while remaining directly executable in 3D.

Compared with full camera-frame 3D coordinates, (u, v, Z) is more image-aligned: (u, v) specifies the visually grounded location, while Z provides the depth needed to recover the final 3D point.

A.3 Detailed Geometric Evaluation for Air Points

As stated in the main text, all distance-related metrics for air points are evaluated only when the predicted point already satisfies the queried spatial relation, since metric offsets are meaningful only for relation-valid predictions.

Object center. We use a unified object-center definition across all air-point categories. Each referenced object is lifted into 3D and represented by an object-level 3D proxy derived from its occupancy cue. The center of this proxy serves as the reference point for direction evaluation, between-object evaluation, and body-length-based metric computation.

Direction queries. For direction queries, we define a cone whose apex is the referenced object center and whose axis is the queried camera-frame direction. A prediction is considered relation-correct if it lies within 30° of the cone axis, corresponding to a full angular aperture of 60°. This tolerance allows moderate ambiguity between neighboring directions and avoids an overly brittle criterion.

Between-object queries. For between-object queries, relation correctness is evaluated with respect to the line segment connecting the two referenced object centers.
A prediction is considered relation-correct only if: (i) its projection onto the segment lies between 10% and 90% of the segment length; and (ii) its perpendicular distance to the segment is at most 10 cm. This criterion encourages predictions that are genuinely between the two objects rather than merely close to the connecting line.

Body-length queries. For body-length queries, one body length is approximated as half of the diagonal length of the target object's 3D proxy box. A query such as "two body lengths in front of the mug" is therefore converted into a target metric distance equal to twice this object-specific unit along the queried direction.

Occupancy validity. A valid air-point prediction must lie in free space. Predictions that fall inside occupied object volume are rejected, even if they satisfy the queried relation. This requires the model to reason about both spatial relations and basic scene occupancy.

B Zero-Shot Generalization to Real-World Robot Manipulation and Navigation

We provide additional real-world robot results to complement the main-text experiments on picking, placement, and navigation. We emphasize that in all demonstrations, SpatialPoint operates without any fine-tuning on the target scene, highlighting its cross-scene generalizability.

Pick-and-Place. We deploy SpatialPoint on a UFactory xArm 7 robotic arm [46] in a tabletop setting with multiple boxes. SpatialPoint serves two roles: (1) predicting the grasping contact point on the target box from a natural language instruction (touchable point, Figure 6(a)), and (2) predicting the target placement position for the grasped object from a natural language instruction (air point, Figure 6(b)). We refer the reader to our project page, where the complete manipulation sequence video (1-robotarm-pick-place.mp4) is provided; in this video, the robot arm localizes the target box and places it at the specified destination.

Figure 6: Zero-shot real-world results on robot pick-and-place. Panels: (a), (b). Instructions shown: "Locate some points on the blue-and-white medicine box on the right side of the image as grasping points."; "Locate some free-space points above the basket on the left side as grasping end points."

Mobile Manipulation. We deploy SpatialPoint on a mobile robot built upon the AgileX Tracer 2.0 [47] and the AgileX Piper [48]. SpatialPoint supports three sequential subtasks within a single scene: (1) predicting the approach position near the target object to enable grasping (touchable point, Figure 7(a)), (2) localizing the target object for interaction (touchable point, Figure 7(b)), and (3) predicting the placement position for the grasped object (air point, Figure 7(c)). We refer the reader to our project page, where the complete process video (2-mobile-navigation.mp4) is provided; in this video, the mobile robot navigates to the target location and retrieves the bottle.

Long-Horizon Task: Package Reordering. We apply SpatialPoint to a long-horizon manipulation task involving the reordering of multiple packages within a multi-compartment open shelf. The packages are placed in individual compartments, and a UFactory xArm 7 robotic arm [46] is instructed to reorder them according to human voice or text commands. SpatialPoint supports two sequential subtasks: (1) predicting the grasping point on the target package (touchable point, Figure 8(a)), and (2) predicting the placement destination within the specified compartment following the human instruction (air point, Figure 8(b)). We refer the reader to our project page, where the complete manipulation sequence video (3-longhorizon-pack-reranking.mp4) is provided.

C Additional Experimental Results

This section presents additional qualitative comparisons and ablation results.
We extend the qualitative comparison by adding RoboBrain2.5 [34] as an extra baseline alongside Qwen3-VL-4B [11] and our model, and we further report a supplementary air-point ablation under SpatialBot-style depth encoding.

C.1 More Qualitative Comparisons

Compared with the main text, we additionally include RoboBrain2.5 [34] as a baseline and present three-model qualitative comparisons under identical instructions. Figure 9 shows additional touchable-point examples, while Figure 10 shows additional air-point examples.

Figure 7: Zero-shot real-world robot results on mobile navigation and picking. Panels: (a), (b), (c). Instructions shown: "Locate some points on the ground within the vacant space near the green drink carton for the robot to approach before grasping."; "Locate some grasp points on the green drink carton for the robot to pick it up."; "Locate some free-space points within the robot's own left-side area for object placement."

C.2 Supplementary Ablations on Air Points

Owing to space limitations, the main text reports only the default air-point ablation setting. Here we include additional ablation results under SpatialBot-style depth encoding to separately show the effects of the dual-backbone design and the special depth tokens. All variants use the same SpatialBot-style depth representation and are trained for 1/2/3 epochs.

Table 7 summarizes the results. Compared with the condensed presentation in the main text, these additional results provide a more explicit breakdown of the two architectural choices, since the default setting there already includes both components.

Overall, the trends are consistent with those reported in the main paper and further clarify the contribution of the dual-backbone design and special depth tokens under SpatialBot-style depth encoding.
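The air-point columns used in these ablations (DirPt, MetPt@5cm, FullPt, MeanErr) follow the conditional protocol described in the main text. The snippet below is a minimal sketch of one way to aggregate them, assuming each predicted point has already been labeled with relation correctness and, for distance-constrained queries, an absolute distance error in cm. The names `PointResult` and `summarize` are hypothetical, and computing DirPt over all points (rather than per query type) is our reading of the protocol, not a confirmed implementation detail.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class PointResult:
    relation_ok: bool                    # passed the direction-cone or between check
    has_metric: bool                     # point comes from a distance-constrained query
    dist_err_cm: Optional[float] = None  # |achieved - required| offset in cm, if has_metric


def summarize(points, tol_cm=5.0):
    """Aggregate point-level micro metrics (illustrative, not the official evaluator)."""
    n = len(points)
    dir_pt = sum(p.relation_ok for p in points) / n if n else math.nan

    metric = [p for p in points if p.has_metric]       # all distance-constrained points
    cond = [p for p in metric if p.relation_ok]        # relation-correct subset
    hits = [p for p in cond if p.dist_err_cm <= tol_cm]

    # MetPt and MeanErr are conditional on relation correctness.
    met_pt = len(hits) / len(cond) if cond else math.nan
    mean_err = sum(p.dist_err_cm for p in cond) / len(cond) if cond else math.nan
    # FullPt is the joint success rate over all distance-constrained points.
    full_pt = len(hits) / len(metric) if metric else math.nan

    return {"DirPt": dir_pt, "MetPt": met_pt, "FullPt": full_pt, "MeanErr": mean_err}


example = [
    PointResult(True, True, 3.0),
    PointResult(True, True, 8.0),
    PointResult(False, True, 1.0),
    PointResult(True, False),
]
metrics = summarize(example)
```

Under this reading, FullPt can never exceed MetPt@5cm, because its denominator also counts relation-incorrect points. This matches the ordering of the two columns in every row of the reported tables.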
D Qualitative Analysis of Failure Cases

D.1 Failure Analysis on Touchable Points

We observe three common failure patterns in touchable-point prediction, corresponding to progressively more severe errors in localization, constraint satisfaction, and target grounding.

Fine-grained localization error. In some cases, the model identifies the correct target region but predicts a slightly shifted point, as illustrated in Figure 11(a) and (b). Such errors are common near object boundaries or thin structures, where even a small image-plane deviation can lead to a noticeable 2D mismatch and a larger 3D error after depth lookup. This also helps explain why in-mask depth errors are typically smaller than out-of-mask ones: small boundary shifts can already destabilize both image-plane alignment and the associated depth value.

Fine-grained constraint error. A second failure mode occurs when the model captures the coarse spatial relation but fails to satisfy the finer constraint in the instruction, as shown in Figure 11(c). For example, when the instruction refers to a vacant region on one side of an object, the prediction may fall on the correct side but not in a truly vacant or interaction-valid area. These cases suggest that touchable-point prediction requires not only coarse directional understanding, but also finer discrimination of locally valid target regions.

Relational grounding error. A third and more severe failure mode arises when the model misidentifies the referred target or misgrounds the underlying spatial relation, as shown in Figure 11(d). In such cases, the prediction is anchored to the wrong object or relational target rather than being a small local deviation.

Figure 8: Zero-shot real-world robot results on long-horizon task. Panels: (a), (b). Instructions shown: "Point to the center of the handle of the middle pink Luckin Coffee bag as the grasp point."; "Point to a free-space target near the center of the left empty compartment as the final gripper position for placement."

Table 7: Supplementary air-point ablations under SpatialBot-style depth encoding.

| Variant | Epoch | Dual Backbone | Special Tokens | DirPt ↑ | MetPt@5cm ↑ | FullPt ↑ | MeanErr (cm) ↓ |
|---|---|---|---|---|---|---|---|
| Ours1 | 1 | ✓ | ✓ | 0.4886 | 0.2587 | 0.1300 | 8.5008 |
| Ours2 | 2 | ✓ | ✓ | 0.5088 | 0.2907 | 0.1456 | 7.3034 |
| Ours3 | 3 | ✓ | ✓ | 0.5071 | 0.3347 | 0.1641 | 6.8084 |
| A10 | 1 | × | × | 0.4088 | 0.1885 | 0.0795 | 11.2143 |
| A11 | 2 | × | × | 0.4413 | 0.1916 | 0.0846 | 11.2934 |
| A12 | 3 | × | × | 0.4403 | 0.2130 | 0.0937 | 10.2762 |
| A13 | 1 | × | ✓ | 0.4315 | 0.1913 | 0.0854 | 11.5730 |
| A14 | 2 | × | ✓ | 0.4576 | 0.2453 | 0.1134 | 10.9660 |
| A15 | 3 | × | ✓ | 0.4650 | 0.2482 | 0.1164 | 9.7431 |
| A16 | 1 | ✓ | × | 0.4252 | 0.1911 | 0.0827 | 10.5795 |
| A17 | 2 | ✓ | × | 0.4543 | 0.2299 | 0.1043 | 10.8764 |
| A18 | 3 | ✓ | × | 0.4609 | 0.2454 | 0.1133 | 10.0685 |

D.2 Failure Analysis on Air Points

Air-point prediction is more challenging than touchable-point prediction because the target lies in free space and must satisfy both spatial and occupancy constraints. We therefore focus on representative relation-level failures and leave metric errors aside, as they are harder to interpret in isolation.

Relational direction misinterpretation. In this case, the model recognizes the referenced object but fails to place the target in the instructed relational direction, as shown in Figure 12(a). This indicates that air-point prediction requires not only identifying the correct reference object, but also accurately grounding directional relations in 3D.

Weak image support for free-space targets. In this case, the queried free-space target is geometrically well defined in camera-frame 3D space but only weakly supported by the visible 2D image, especially near image boundaries, as shown in Figure 12(b). This highlights a key difficulty of air-point prediction: a target may be clear in 3D yet hard to infer from the image plane alone.

Occupancy-awareness failure. In this case, the model captures the coarse relational cue but predicts a point that still falls inside or too close to occupied object space, as shown in Figure 12(c). This indicates that successful air-point prediction requires not only correct directional reasoning, but also stronger awareness of local 3D occupancy and free-space feasibility.

Figure 9: Additional qualitative comparisons on touchable-point prediction. We compare 3 models under the same instructions. Panels: (a) Ours, (b) Qwen3-VL (4B), (c) RoboBrain2.5 (8B). Instructions shown: "Locate several points within a vacant area on the front portion of the stove."; "Select the leftmost object in the image."

Figure 10: Additional qualitative comparisons on air-point prediction. We compare 3 models under the same instructions. Panels: (a) Ours, (b) Qwen3-VL (4B), (c) RoboBrain2.5 (8B). Instruction shown: "Point out some points that are to the lower front left of a yellow pencil, about 3 body lengths away. (camera frame)"

Figure 11: Failure cases on touchable-point prediction. (a) and (b) Fine-grained localization errors. (c) Fine-grained constraint error. (d) Relational grounding error. Instructions shown: "What part of a mug should be gripped to lift it?"; "Pinpoint several spots in the vacant area that lies to the right of the glass container."; "What container can be used to store and dispense liquids or chemicals, often featuring a handle for easy carrying?"; "Spot several points on the can right next to the apple in the image."

Figure 12: Failure cases on air-point prediction. (a) Relational direction misinterpretation. (b) Weak image support for free-space targets. (c) Occupancy-awareness failure. Instructions shown: "Point out 4 points that are between a white mug and a silver refrigerator. (camera frame)"; "Point out 6 points that are above a tissue box. (camera frame)"; "Point out 4 points that are to the lower front right of the sink. (camera frame)"
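The relation-level failures above are judged with the geometric criteria of Appendix A.3. The following is a minimal sketch of those checks, assuming a pinhole camera with known intrinsics and coordinates in meters. The function names, the intrinsics parameters (`fx`, `fy`, `cx`, `cy`), and the axis-aligned proxy box are illustrative assumptions, not the released evaluation code. The mapping from a phrase such as "front right" to a camera-frame axis is not specified in the text, so the axis is passed in by the caller.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _norm(a):
    return math.sqrt(_dot(a, a))


def backproject(u, v, z, fx, fy, cx, cy):
    """Lift a (u, v, Z) prediction to a camera-frame point (pinhole model, assumed)."""
    return ((u - cx) * z / fx, (v - cy) * z / fy, z)


def in_direction_cone(point, center, axis, half_angle_deg=30.0):
    """True if `point` lies within `half_angle_deg` of `axis`, with apex at `center`."""
    offset = _sub(point, center)
    denom = _norm(offset) * _norm(axis)
    if denom == 0.0:
        return False
    cos_angle = _dot(offset, axis) / denom
    return cos_angle >= math.cos(math.radians(half_angle_deg))


def is_between(point, center_a, center_b, lo=0.1, hi=0.9, max_perp_m=0.10):
    """Projection falls in [10%, 90%] of the segment and lies within 10 cm of it."""
    seg = _sub(center_b, center_a)
    seg_len_sq = _dot(seg, seg)
    if seg_len_sq == 0.0:
        return False
    t = _dot(_sub(point, center_a), seg) / seg_len_sq   # normalized projection
    foot = tuple(a + t * s for a, s in zip(center_a, seg))
    perp = _norm(_sub(point, foot))                     # perpendicular distance
    return lo <= t <= hi and perp <= max_perp_m


def body_length(box_min, box_max):
    """One body length: half the diagonal of the object's 3D proxy box."""
    return 0.5 * _norm(_sub(box_max, box_min))
```

A predicted (u, v, Z) would first be lifted with `backproject` and then passed to `in_direction_cone` or `is_between`, with the object centers taken from the 3D proxies. The occupancy check is omitted here because it depends on how occupied volume is represented, which the text does not detail.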
