Active Seriation: Efficient Ordering Recovery with Statistical Guarantees

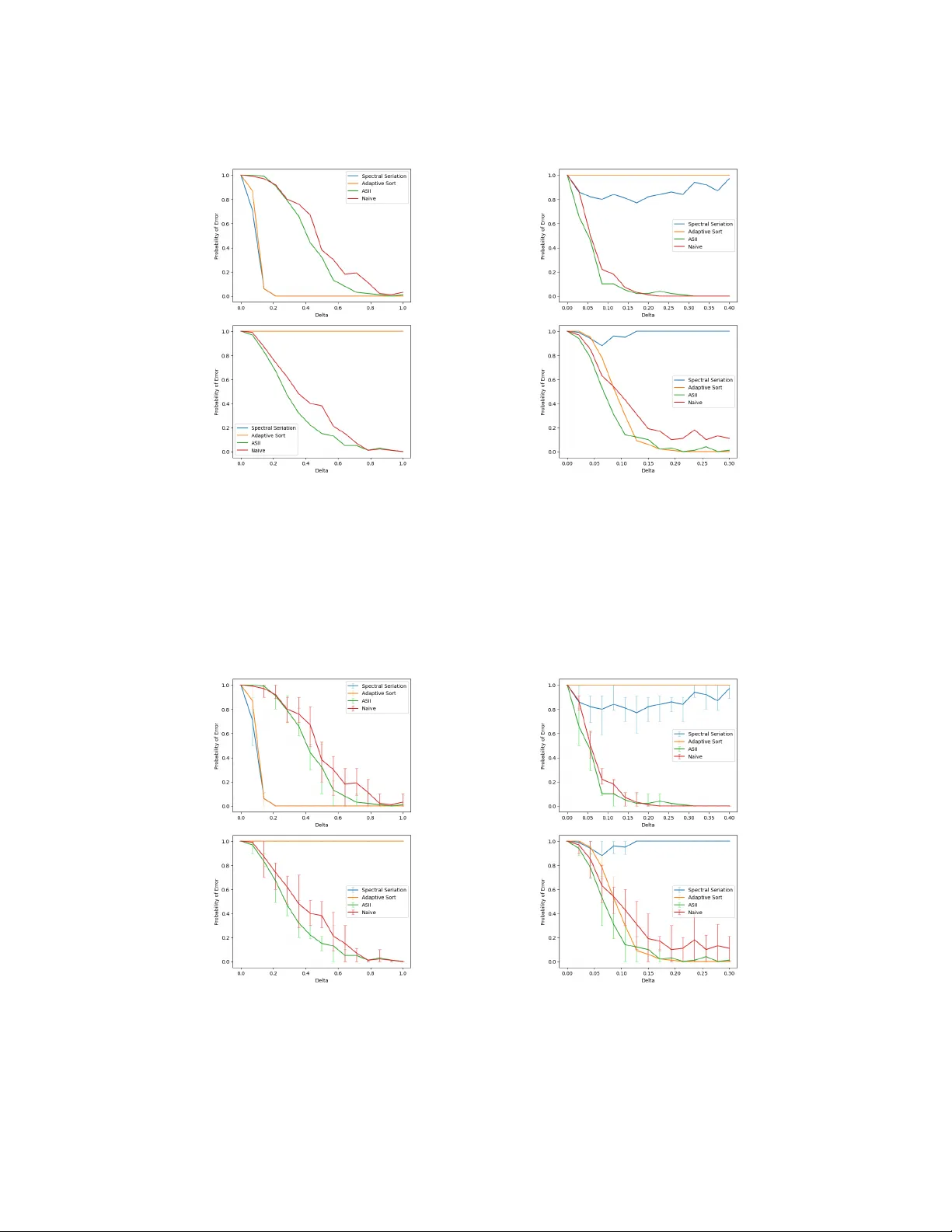

Active seriation aims at recovering an unknown ordering of $n$ items by adaptively querying pairwise similarities. The observations are noisy measurements of entries of an underlying $n \times n$ permuted Robinson matrix, whose permutation encodes the l…
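The abstract describes the observation model only in words. A Robinson matrix is a symmetric matrix whose entries never increase moving away from the diagonal, i.e. $R_{ik} \le \min(R_{ij}, R_{jk})$ for all $i < j < k$. The sketch below illustrates that model under two loudly stated assumptions that are ours, not the paper's: a Toeplitz similarity $R_{ij} = \exp(-|i-j|/\text{scale})$ and additive Gaussian noise on each query. All function names (`toeplitz_robinson`, `is_robinson`, `permute`, `reorder`, `noisy_query`) are hypothetical, and the code does not implement the authors' active seriation algorithm.

```python
import math
import random
from typing import List

Matrix = List[List[float]]


def toeplitz_robinson(n: int, scale: float = 2.0) -> Matrix:
    """Hypothetical example matrix R[i][j] = exp(-|i - j| / scale).

    Similarity decays with distance from the diagonal, so R is Robinson.
    """
    return [[math.exp(-abs(i - j) / scale) for j in range(n)] for i in range(n)]


def is_robinson(m: Matrix, tol: float = 1e-12) -> bool:
    """Check symmetry and m[i][k] <= min(m[i][j], m[j][k]) for all i < j < k."""
    n = len(m)
    for i in range(n):
        for j in range(i + 1, n):
            if abs(m[i][j] - m[j][i]) > tol:
                return False
    for i in range(n):
        for j in range(i + 1, n):
            for k in range(j + 1, n):
                if m[i][k] > min(m[i][j], m[j][k]) + tol:
                    return False
    return True


def permute(r: Matrix, order: List[int]) -> Matrix:
    """Hide the ordering: the item with true rank a receives label order[a]."""
    n = len(r)
    m = [[0.0] * n for _ in range(n)]
    for a in range(n):
        for b in range(n):
            m[order[a]][order[b]] = r[a][b]
    return m


def reorder(m: Matrix, order: List[int]) -> Matrix:
    """List the rows and columns of m in the given order of labels."""
    return [[m[p][q] for q in order] for p in order]


def noisy_query(m: Matrix, i: int, j: int, sigma: float, rng: random.Random) -> float:
    """One pairwise query: entry m[i][j] plus assumed Gaussian noise N(0, sigma^2)."""
    return m[i][j] + rng.gauss(0.0, sigma)


if __name__ == "__main__":
    rng = random.Random(0)
    n = 6
    R = toeplitz_robinson(n)
    order = list(range(n))
    rng.shuffle(order)                 # hidden permutation to be recovered
    M = permute(R, order)              # the matrix the learner can query
    print(is_robinson(R))              # True
    print(reorder(M, order) == R)      # True: the right ordering restores R
    print(noisy_query(M, 0, 1, sigma=0.1, rng=rng))
```

In this toy setting, seriation means recovering `order` (up to reversal, since reversing a Robinson matrix keeps it Robinson) from calls to `noisy_query`; an active strategy is one that chooses which pairs `(i, j)` to query next based on the answers seen so far.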

Authors: James Cheshire, Yann Issartel