Towards Balanced Multi-Modal Learning in 3D Human Pose Estimation

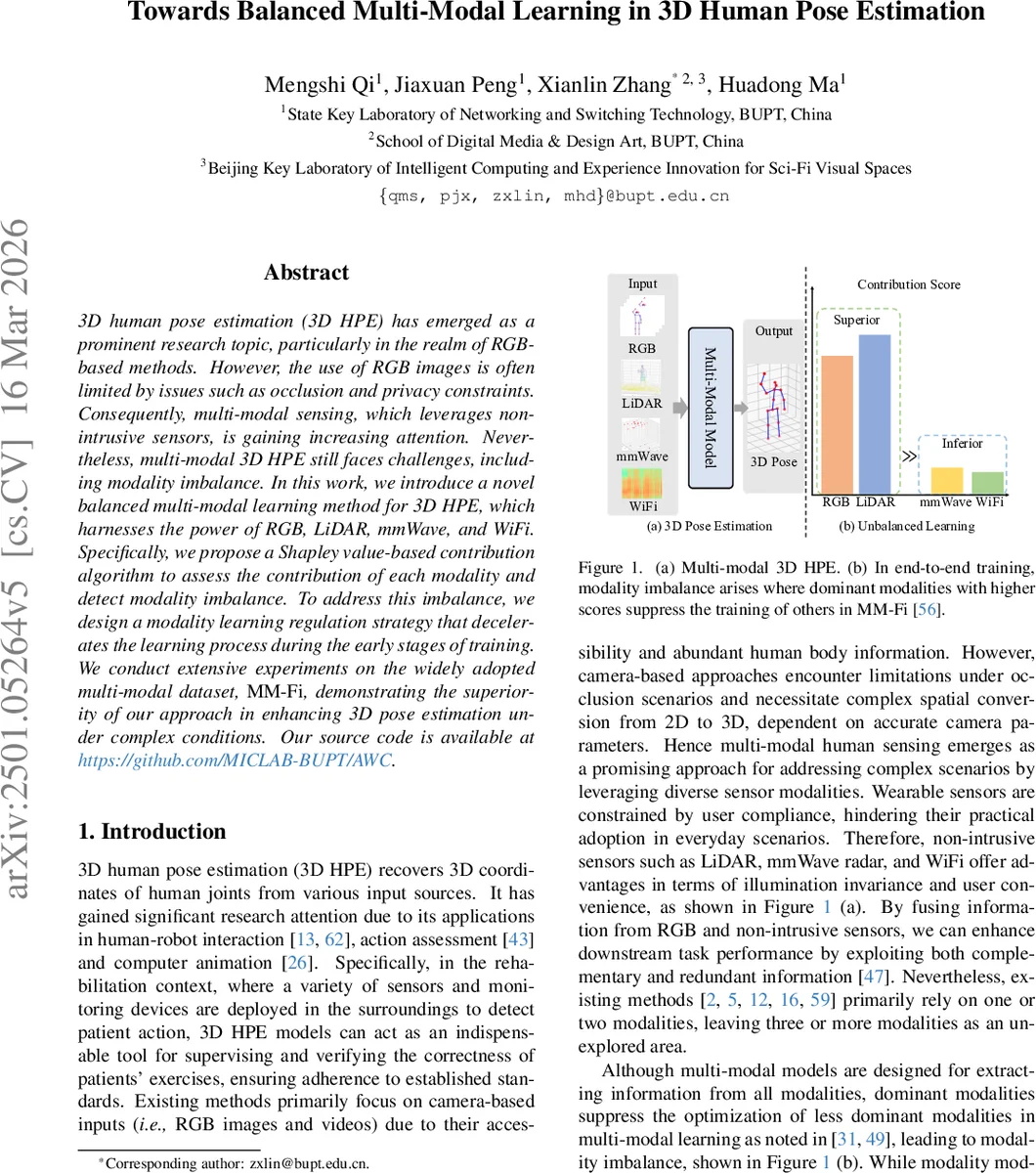

3D human pose estimation (3D HPE) has emerged as a prominent research topic, particularly in the realm of RGB-based methods. However, the use of RGB images is often limited by issues such as occlusion and privacy constraints. Consequently, multi-modal sensing, which leverages non-intrusive sensors, is gaining increasing attention. Nevertheless, multi-modal 3D HPE still faces challenges, including modality imbalance. In this work, we introduce a novel balanced multi-modal learning method for 3D HPE, which harnesses the power of RGB, LiDAR, mmWave, and WiFi. Specifically, we propose a Shapley value-based contribution algorithm to assess the contribution of each modality and detect modality imbalance. To address this imbalance, we design a modality learning regulation strategy that decelerates the learning process during the early stages of training. We conduct extensive experiments on the widely adopted multi-modal dataset, MM-Fi, demonstrating the superiority of our approach in enhancing 3D pose estimation under complex conditions. Our source code is available at https://github.com/MICLAB-BUPT/AWC.

💡 Research Summary

This paper tackles the persistent problem of modality imbalance in multi‑modal 3D human pose estimation (3D HPE). While RGB images dominate the literature, they suffer from occlusion, lighting variations, and privacy concerns. Non‑intrusive sensors such as LiDAR, millimeter‑wave (mmWave) radar, and WiFi channel state information (CSI) provide complementary information that is robust to illumination and does not require a camera. However, when these modalities are fused in an end‑to‑end network, dominant modalities (often RGB) can suppress the learning of weaker ones, leading to sub‑optimal performance. Existing balancing techniques are largely designed for classification tasks, rely on auxiliary heads, or introduce extra learnable parameters, making them unsuitable for regression‑oriented 3D pose estimation.

The authors propose a balanced multi‑modal learning framework that integrates four modalities—RGB, LiDAR, mmWave, and WiFi—without adding any extra parameters. The framework consists of two key components: (1) a Shapley‑value‑based contribution assessment and (2) an Adaptive Weight Constraint (AWC) regularization guided by the Fisher Information Matrix (FIM).

Shapley‑based contribution assessment

Shapley values, originating from cooperative game theory, fairly allocate the marginal contribution of each “player” (modality) to the overall performance. In classification, the profit function is typically cross‑entropy, but directly using mean‑squared error (MSE) or mean‑absolute error (MAE) for regression would bias the contribution toward modalities with larger output magnitudes. To avoid this, the authors replace the profit function with the average Pearson correlation coefficient between ground‑truth joint coordinates and predictions across the batch. For each modality m, they compute the Shapley value ϕ_m by enumerating (or approximating) all subsets S ⊆ M{m} and measuring the change in the Pearson‑based profit when m is added to S. This yields a dynamic, data‑driven score that reflects how informative each modality is during training.

Adaptive Weight Constraint (AWC) regularization

The Fisher Information Matrix approximates the expected squared gradient of each parameter, serving as a proxy for its sensitivity to the loss. Dominant modalities generate larger gradients early in training, resulting in higher FIM values, whereas weaker modalities have smaller FIMs. The AWC loss penalizes deviations from the initial parameters θ_0 proportionally to the FIM:

L_AWC = Σ_i λ_i · FIM_i · (θ_i − θ_{0,i})²

where λ_i is a modality‑specific regularization coefficient. To set λ_i, the authors cluster the four Shapley scores using K‑means into a “superior” set M_S and an “inferior” set M_I. Superior modalities receive a stronger coefficient α_S, while inferior ones receive a milder α_I. This adaptive scheme slows down aggressive updates from dominant modalities while allowing weaker modalities to continue learning, thereby mitigating the imbalance without suppressing any modality entirely.

Model architecture

Each modality is processed by a dedicated encoder (e.g., CNN for RGB, PointNet‑style networks for LiDAR and mmWave point clouds, 1‑D convolutions for WiFi CSI). The resulting feature vectors are concatenated and fed into a linear regression head that directly predicts 3D joint coordinates. No auxiliary uni‑modal heads or extra branches are introduced, keeping the parameter count comparable to a standard multi‑modal baseline.

Experiments

The approach is evaluated on MM‑Fi, the largest publicly available multi‑modal 3D HPE dataset containing synchronized RGB, LiDAR, mmWave, and WiFi recordings under diverse indoor and outdoor conditions. Baseline models use the same encoder‑fusion‑regression pipeline but lack the Shapley and AWC components. Results show a consistent reduction in Mean Per‑Joint Position Error (MPJPE): the Shapley‑only variant improves MPJPE by ~3 %, the AWC‑only variant by ~4 %, and the combined method by ~6 % relative to the baseline. Ablation studies confirm that the Shapley scores accurately identify dominant modalities early in training, and the AWC loss effectively regularizes their parameter updates. Training curves reveal smoother convergence and reduced variance when both components are employed.

Limitations and future work

Computing exact Shapley values scales exponentially with the number of modalities; the paper does not detail approximation strategies, which may be necessary for larger modality sets. Pearson correlation, while more robust than raw MSE, can still be noisy for small batch sizes or highly correlated errors. Estimating the FIM via squared gradients can be unstable under varying batch sizes or learning rates. Future research could explore Monte‑Carlo or sampling‑based Shapley approximations, more robust profit functions (e.g., rank‑based metrics), and stabilized FIM estimators (e.g., using exponential moving averages).

Conclusion

By introducing a principled, parameter‑free contribution metric and an adaptive regularization that leverages the Fisher Information Matrix, the authors provide a practical solution to modality imbalance in regression‑focused multi‑modal learning. Their method yields measurable performance gains on a challenging real‑world dataset without increasing model complexity, offering a promising direction for other sensor‑fusion tasks beyond 3D human pose estimation.

Comments & Academic Discussion

Loading comments...

Leave a Comment