A Hierarchical Gradient Tracking Algorithm for Mitigating Subnet-Drift in Fog Learning Networks

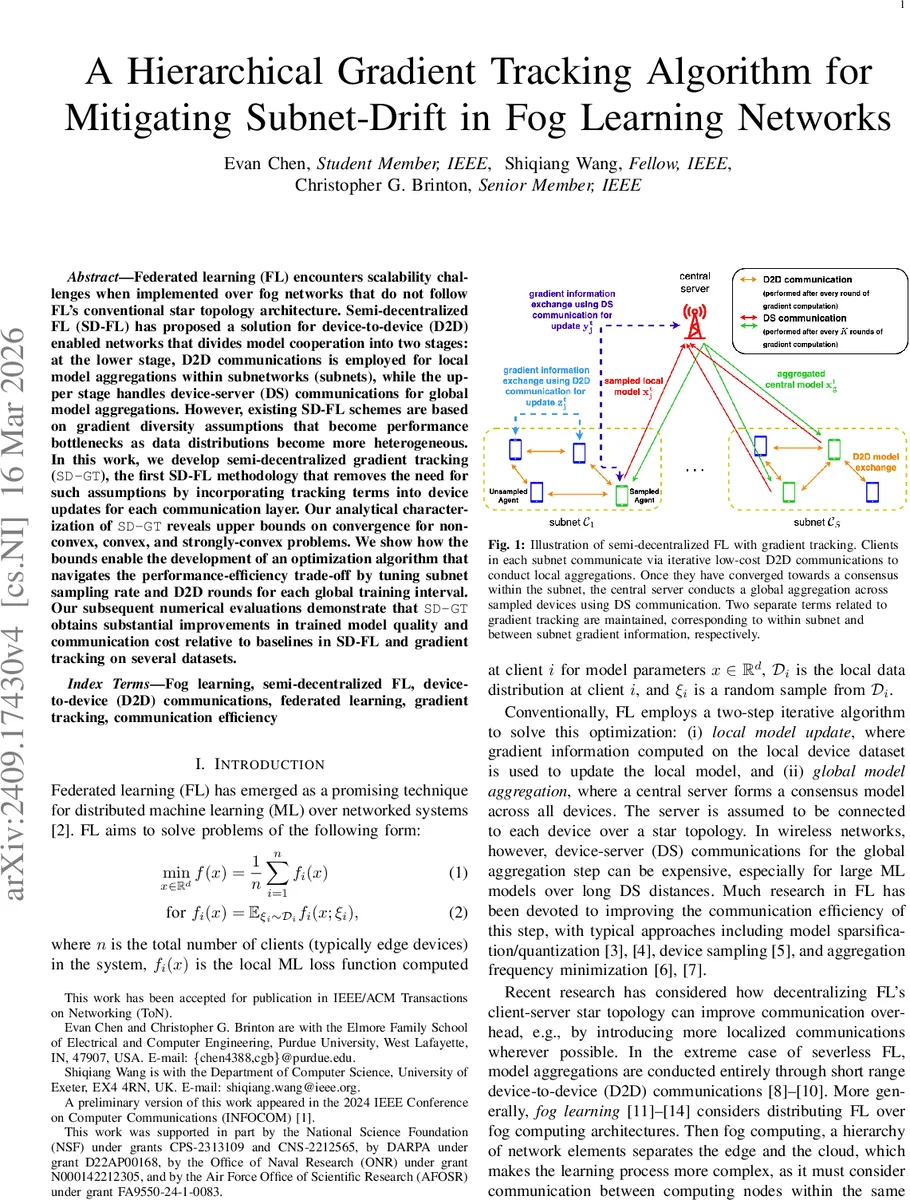

Federated learning (FL) encounters scalability challenges when implemented over fog networks that do not follow FL’s conventional star topology architecture. Semi-decentralized FL (SD-FL) has proposed a solution for device-to-device (D2D) enabled networks that divides model cooperation into two stages: at the lower stage, D2D communications is employed for local model aggregations within subnetworks (subnets), while the upper stage handles device-server (DS) communications for global model aggregations. However, existing SD-FL schemes are based on gradient diversity assumptions that become performance bottlenecks as data distributions become more heterogeneous. In this work, we develop semi-decentralized gradient tracking (SD-GT), the first SD-FL methodology that removes the need for such assumptions by incorporating tracking terms into device updates for each communication layer. Our analytical characterization of SD-GT reveals upper bounds on convergence for non-convex, convex, and strongly-convex problems. We show how the bounds enable the development of an optimization algorithm that navigates the performance-efficiency trade-off by tuning subnet sampling rate and D2D rounds for each global training interval. Our subsequent numerical evaluations demonstrate that SD-GT obtains substantial improvements in trained model quality and communication cost relative to baselines in SD-FL and gradient tracking on several datasets.

💡 Research Summary

This paper tackles a fundamental scalability problem in federated learning (FL) when deployed over fog networks that do not follow the classic star‑topology. In semi‑decentralized FL (SD‑FL), edge devices are grouped into subnets that can communicate via low‑cost device‑to‑device (D2D) links; each subnet performs multiple local consensus rounds before a central server samples a subset of devices for a global aggregation over expensive device‑to‑server (DS) links. While this hierarchy reduces DS traffic, it introduces “subnet‑drift”: repeated intra‑subnet aggregations cause the global model to deviate toward a weighted combination of the local optima of each subnet, especially when data distributions across subnets are heterogeneous.

Existing SD‑FL methods rely on gradient‑diversity or data‑heterogeneity constants to guarantee convergence, which limits performance in realistic non‑i.i.d. settings. The authors propose Semi‑Decentralized Gradient Tracking (SD‑GT), the first SD‑FL algorithm that eliminates these assumptions by embedding two separate gradient‑tracking variables into each device’s update: one for intra‑subnet information (tracked during D2D rounds) and another for inter‑subnet information (tracked during DS rounds). The intra‑subnet tracker aggregates gradients received from neighboring devices via a weighted matrix (W_s), while the inter‑subnet tracker incorporates the server‑sampled global gradient estimate. By maintaining both trackers, the algorithm compensates for the differing mixing speeds of D2D and DS communications and stabilizes the overall learning dynamics without increasing the frequency of costly DS exchanges.

Theoretical contributions include a Lyapunov‑based convergence analysis that yields upper bounds for non‑convex, weakly convex, and strongly convex objectives. For non‑convex problems the expected optimality gap decays as (\mathcal{O}(1/\sqrt{T})); for weakly convex problems it decays as (\mathcal{O}(1/T^{1/4})); and for strongly convex problems with deterministic gradients the method achieves linear convergence. Crucially, these bounds are free of any data‑heterogeneity constants, demonstrating that SD‑GT is robust to arbitrary distribution skew.

Leveraging the derived bounds, the authors formulate a joint optimization of the number of D2D rounds (K) and the subnet sampling rate (p) to balance convergence speed against communication cost. This co‑optimization is expressed as a geometric program that can be solved after each global aggregation, automatically adapting to changes in the relative cost of D2D versus DS links.

Extensive experiments on CIFAR‑10, FEMNIST, and Shakespeare datasets with varying Dirichlet concentration parameters (to control heterogeneity) and diverse subnet topologies (static, random, time‑varying) validate the theory. SD‑GT consistently outperforms baseline SD‑FL methods (e.g., FedAvg‑SD, FedProx‑SD) and conventional gradient‑tracking schemes in both model accuracy (10–25 % higher) and communication efficiency (30–45 % fewer DS transmissions). The co‑optimization controller successfully adjusts (K) and (p) in response to different cost regimes, preserving performance even when devices drop out or subnet connectivity changes.

In summary, the paper makes three key advances: (1) introduces a dual‑tracking mechanism that fundamentally mitigates subnet‑drift in hierarchical FL; (2) provides convergence guarantees that do not depend on data‑heterogeneity metrics, broadening applicability to highly non‑i.i.d. environments; and (3) offers a practical, analytically‑driven control framework that jointly optimizes communication resources and convergence speed. The work paves the way for more scalable, privacy‑preserving learning over fog architectures and suggests future directions such as compressed tracker communication, asynchronous updates, and real‑world 5G/6G D2D integration.

Comments & Academic Discussion

Loading comments...

Leave a Comment