On the (Generative) Linear Sketching Problem

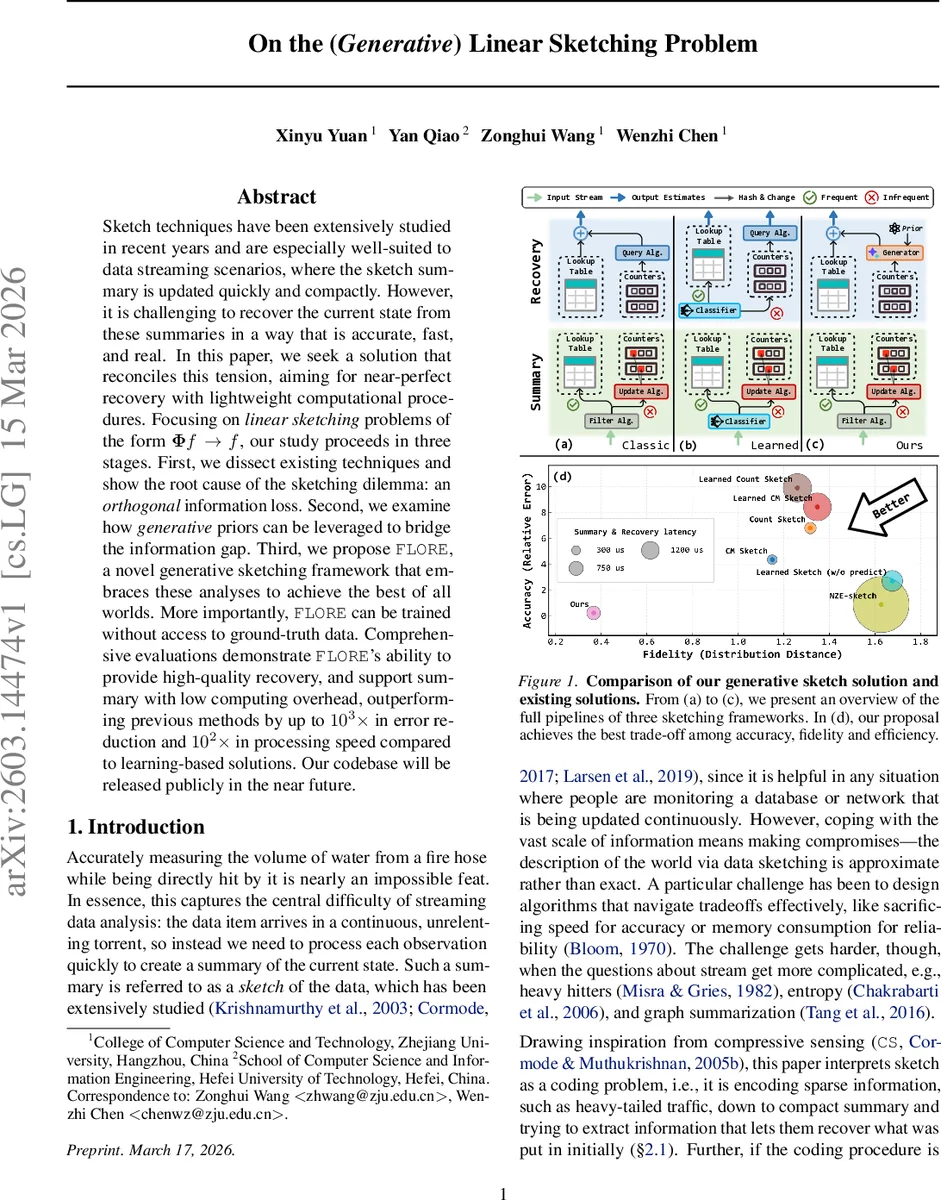

Sketching techniques have been extensively studied in recent years and are especially well-suited to data streaming scenarios, where the sketch summary is updated quickly and compactly. However, it is challenging to recover the current state from these summaries in a way that is accurate, fast, and real-time. In this paper, we seek a solution that reconciles this tension, aiming for near-perfect recovery with lightweight computational procedures. Focusing on linear sketching problems of the form $\boldsymbol{\Phi} f \rightarrow f$, our study proceeds in three stages. First, we dissect existing techniques and identify the root cause of the sketching dilemma: an orthogonal information loss. Second, we examine how generative priors can be leveraged to bridge the information gap. Third, we propose FLORE, a novel generative sketching framework that builds on these analyses to achieve the best of all worlds. More importantly, FLORE can be trained without access to ground-truth data. Comprehensive evaluations demonstrate FLORE’s ability to provide high-quality recovery and to support summarization with low computational overhead, reducing error by up to 1000 times compared with previous methods and processing up to 100 times faster than learning-based solutions.

💡 Research Summary

The paper addresses a fundamental limitation of linear sketching techniques used for streaming data, where a compact summary b = Φf is produced by a sparse, hash‑based matrix Φ. By analyzing existing sketches (Count‑Min, Count‑Sketch, Augmented Sketch, etc.) through the lens of compressive sensing, the authors show that these matrices satisfy the Restricted Isometry Property (RIP) poorly, leading to a large “RIP distance” and, more importantly, an irrecoverable null‑space component f_N. In other words, writing f = f_Φ + f_N, the sketch preserves only the range‑space component f_Φ, while the orthogonal part f_N (for which Φf_N = 0) is lost, making perfect recovery impossible unless f_N is negligible.
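
The decomposition can be made concrete with a small NumPy sketch. It assumes a Count‑Sketch‑style matrix built from random per‑row buckets and ±1 signs; the helper names `count_sketch_matrix` and `split_range_null` and all sizes are hypothetical. The sketch computes f_Φ = Φ⁺Φf by pseudo‑inverse projection and shows that adding f_N to f leaves the summary b unchanged, so no decoder that sees only b can tell the two vectors apart.

```python
import numpy as np

def count_sketch_matrix(n_keys, width, depth, seed=0):
    """Dense copy of a Count-Sketch-style matrix Phi with shape (depth*width, n_keys).

    Row block r holds one +/-1 entry per key j, at bucket h_r(j) with sign s_r(j).
    Hypothetical helper for illustration; real sketches never materialize Phi.
    """
    rng = np.random.default_rng(seed)
    phi = np.zeros((depth * width, n_keys))
    for r in range(depth):
        buckets = rng.integers(0, width, size=n_keys)  # h_r(j)
        signs = rng.choice([-1.0, 1.0], size=n_keys)    # s_r(j)
        phi[r * width + buckets, np.arange(n_keys)] = signs
    return phi

def split_range_null(phi, f):
    """Split f = f_Phi + f_N, with f_Phi in the row space of Phi and Phi @ f_N = 0."""
    f_phi = np.linalg.pinv(phi) @ (phi @ f)  # orthogonal projection onto the row space
    return f_phi, f - f_phi

n_keys, width, depth = 64, 8, 2
phi = count_sketch_matrix(n_keys, width, depth)
f = 1000.0 / np.arange(1, n_keys + 1)  # Zipf-like "true" frequencies
b = phi @ f                            # the sketch summary: 16 counters for 64 keys

f_phi, f_n = split_range_null(phi, f)
f_other = f + f_n                      # a different frequency vector ...
print(np.allclose(phi @ f_other, b))   # ... with exactly the same sketch
print("||f_N|| / ||f|| =", np.linalg.norm(f_n) / np.linalg.norm(f))
```

The dense pseudo‑inverse costs O(n³) and only serves to expose the geometry; it is not a recovery procedure.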

Traditional recovery methods fall into three categories: (1) randomized per‑key approximations that are fast but suffer high error under tight memory budgets; (2) heavy‑light decompositions that alleviate hash collisions but still leave the light part with large error; and (3) compressive‑sensing‑style global reconstruction that requires dense random matrices with good RIP, which are incompatible with the O(k) update constraint of streaming sketches. Consequently, none of these approaches can simultaneously achieve high accuracy, low latency, and modest memory usage.
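
Category (1) is easiest to see with the two classic per‑key estimators. The NumPy sketch below covers only that category and assumes random per‑row buckets and signs in place of real hash functions; `make_hashes`, `insert`, `query_cm`, `query_cs` and the sizes are hypothetical. With 1,000 keys sharing 50 counters per row, every estimate is assembled from colliding mass, the kind of error the summary attributes to tight memory budgets.

```python
import numpy as np

def make_hashes(n_keys, width, depth, seed=0):
    """Hypothetical stand-in for per-row hash functions: bucket h_r(j) and sign s_r(j)."""
    rng = np.random.default_rng(seed)
    buckets = rng.integers(0, width, size=(depth, n_keys))
    signs = rng.choice(np.array([-1, 1]), size=(depth, n_keys))
    return buckets, signs

def insert(cm, cs, buckets, signs, key, count):
    # O(depth) work per stream item: each row touches exactly one counter.
    for r in range(cm.shape[0]):
        cm[r, buckets[r, key]] += count                  # Count-Min: unsigned add
        cs[r, buckets[r, key]] += signs[r, key] * count  # Count-Sketch: signed add

def query_cm(cm, buckets, key):
    # Collisions only add mass, so the minimum over rows is the tightest upper bound.
    return min(cm[r, buckets[r, key]] for r in range(cm.shape[0]))

def query_cs(cs, buckets, signs, key):
    # Signed collisions cancel in expectation; the median damps outlier rows.
    return float(np.median([signs[r, key] * cs[r, buckets[r, key]] for r in range(cs.shape[0])]))

n_keys, width, depth = 1000, 50, 3
true_counts = (10_000 // np.arange(1, n_keys + 1)).astype(np.int64)  # Zipf-like, all >= 10
buckets, signs = make_hashes(n_keys, width, depth)
cm = np.zeros((depth, width), dtype=np.int64)
cs = np.zeros((depth, width), dtype=np.int64)
for key in range(n_keys):  # by linearity, one aggregated update per key suffices
    insert(cm, cs, buckets, signs, key, int(true_counts[key]))

cm_est = np.array([query_cm(cm, buckets, k) for k in range(n_keys)])
cs_est = np.array([query_cs(cs, buckets, signs, k) for k in range(n_keys)])
print("Count-Min mean abs error:   ", np.abs(cm_est - true_counts).mean())
print("Count-Sketch mean abs error:", np.abs(cs_est - true_counts).mean())
```

Count‑Min never underestimates nonnegative counts, while each Count‑Sketch row is an unbiased but noisier estimate; neither recovers the within‑bucket detail that the null‑space analysis identifies as lost.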

To bridge the information gap, the authors propose leveraging deep generative models (GMs) as external priors. A generative model can synthesize the missing null‑space component in a way that is consistent with the observed sketch (Φ f̂ = b) while remaining faithful to the true data distribution p(f). They adopt a flow‑based generative model (FGM) because it is invertible, allowing the sketch summary to be fed into the model, a latent variable to be sampled, and the full signal f̂ to be reconstructed by the inverse flow.
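
FLORE fills the null space with an invertible flow. A much simpler way to see why any prior‑driven completion stays consistent with the sketch is an orthogonal projection, shown here as a swapped‑in illustration rather than the paper's method. The sketch assumes a one‑row 0/1 hash matrix and a hypothetical prior sample g, which is simply the true f with 5% multiplicative noise standing in for a generative model's output; `complete_with_prior` is a hypothetical helper. The completion f̂ = Φ⁺b + P_N g satisfies Φf̂ = b for every g, and its error equals ‖P_N(g − f)‖, so recovery is only as good as the prior's null‑space part.

```python
import numpy as np

def complete_with_prior(phi, b, g):
    """Hypothetical helper: f_hat = pinv(Phi) b + P_N g.

    The first term is fixed by the sketch; the prior sample g only supplies the
    null-space part (P_N = I - pinv(Phi) Phi), so Phi @ f_hat == b for any g.
    """
    phi_pinv = np.linalg.pinv(phi)
    p_null = np.eye(phi.shape[1]) - phi_pinv @ phi
    return phi_pinv @ b + p_null @ g

rng = np.random.default_rng(0)
n_keys, width = 64, 16
phi = np.zeros((width, n_keys))
phi[rng.integers(0, width, size=n_keys), np.arange(n_keys)] = 1.0  # one-row hash sketch
f = 1000.0 / np.arange(1, n_keys + 1)
b = phi @ f

g_none = np.zeros(n_keys)                                 # no prior: minimum-norm answer
g_prior = f * (1.0 + 0.05 * rng.standard_normal(n_keys))  # stand-in for a good prior sample
for name, g in [("no prior", g_none), ("noisy prior", g_prior)]:
    f_hat = complete_with_prior(phi, b, g)
    print(name, "consistent:", np.allclose(phi @ f_hat, b),
          "error:", round(float(np.linalg.norm(f_hat - f)), 2))
```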

Training is performed without any ground‑truth frequency vectors. The authors devise an Expectation‑Maximization (EM) scheme: in the E‑step, the current model generates plausible null‑space samples given the current parameters; in the M‑step, the model parameters are updated to maximize the likelihood of the observed sketch counters. This unsupervised approach enables learning directly from aggregated counters, which are readily available in streaming systems.
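
The unsupervised EM idea can be seen in a deliberately tiny setting. The NumPy sketch below swaps FLORE's flow for a Gaussian prior f ~ N(μ, σ²I) with fixed variance and learns only μ from sketch counters b_i = Φ_i f_i; the helpers `hash_matrix` and `em_prior_mean`, the sizes, and the use of a fresh random hash for every window are all assumptions for illustration. The E‑step fills each window's null space from the current prior, mirroring the description above, and the M‑step averages the completed vectors. The fresh hash per window matters in this toy: with one fixed Φ the posterior mean is P_N μ + Φ⁺b_i, so the null‑space part of μ would never move from its initial value.

```python
import numpy as np

def hash_matrix(n_keys, width, rng):
    """One-row Count-Min-style 0/1 matrix with a fresh random hash per call (assumption)."""
    phi = np.zeros((width, n_keys))
    phi[rng.integers(0, width, size=n_keys), np.arange(n_keys)] = 1.0
    return phi

def em_prior_mean(sketches, n_keys, iters=100):
    """Toy EM for the mean mu of a Gaussian prior f ~ N(mu, s^2 I), from (Phi_i, b_i) only.

    E-step: with b_i = Phi_i f_i observed exactly, the posterior mean of f_i is
            mu + pinv(Phi_i) (b_i - Phi_i mu): the row-space part comes from b_i,
            the null-space part from the current prior.
    M-step: the mu maximizing the expected log-likelihood is the average posterior mean.
    """
    mu = np.zeros(n_keys)
    pinvs = [np.linalg.pinv(phi) for phi, _ in sketches]
    for _ in range(iters):
        post = [mu + p @ (b - phi @ mu) for (phi, b), p in zip(sketches, pinvs)]
        mu = np.mean(post, axis=0)
    return mu

rng = np.random.default_rng(0)
n_keys, width, n_windows = 20, 8, 200
mu_true = 100.0 / np.arange(1, n_keys + 1)       # hidden; never shown to the learner
sketches = []
for _ in range(n_windows):
    phi = hash_matrix(n_keys, width, rng)
    f_i = mu_true + rng.standard_normal(n_keys)  # per-window frequencies (unobserved)
    sketches.append((phi, phi @ f_i))            # only the counters are kept

mu_hat = em_prior_mean(sketches, n_keys)
print("initial error:", np.linalg.norm(mu_true))
print("EM error:     ", np.linalg.norm(mu_hat - mu_true))
```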

The resulting framework, named FLORE, incorporates three design principles: (i) it treats the analysis of orthogonal loss as a guide, constructing an invertible solver that explicitly separates and reconstructs range‑ and null‑space components; (ii) it can be trained solely on aggregated counters via the EM algorithm, eliminating the need for labeled data; (iii) it employs a stream‑filtering technique together with a scalable Invertible Neural Network (INN) architecture, keeping the memory footprint around 1 MB and achieving high‑throughput inference, especially when accelerated on GPUs.
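
The INN in (iii) is assembled from invertible layers. As a hedged illustration, the generic RealNVP‑style affine coupling layer below shows the two properties a flow needs: an exact, cheap inverse and a tractable log‑determinant. The class `AffineCoupling`, its sizes, and its random weights are assumptions and do not describe FLORE's published architecture.

```python
import numpy as np

class AffineCoupling:
    """RealNVP-style affine coupling layer (a generic INN block, not FLORE's exact layer).

    Splits x into halves (x1, x2); x1 passes through unchanged and parameterizes
    a scale and shift for x2, so the inverse is exact and cheap.
    """

    def __init__(self, dim, hidden=16, seed=0):
        rng = np.random.default_rng(seed)
        self.d = dim // 2
        self.w1 = rng.normal(0, 0.1, (self.d, hidden))
        self.w_s = rng.normal(0, 0.1, (hidden, dim - self.d))
        self.w_t = rng.normal(0, 0.1, (hidden, dim - self.d))

    def _scale_shift(self, x1):
        h = np.tanh(x1 @ self.w1)
        return np.tanh(h @ self.w_s), h @ self.w_t  # bounded log-scale keeps exp() stable

    def forward(self, x):
        x1, x2 = x[..., :self.d], x[..., self.d:]
        log_s, t = self._scale_shift(x1)
        y2 = x2 * np.exp(log_s) + t
        log_det = log_s.sum(axis=-1)  # log|det J|, needed for exact flow likelihoods
        return np.concatenate([x1, y2], axis=-1), log_det

    def inverse(self, y):
        y1, y2 = y[..., :self.d], y[..., self.d:]
        log_s, t = self._scale_shift(y1)  # y1 == x1, so the same scale/shift is recovered
        x2 = (y2 - t) * np.exp(-log_s)
        return np.concatenate([y1, x2], axis=-1)

layer = AffineCoupling(dim=8)
x = np.random.default_rng(1).standard_normal((5, 8))
y, log_det = layer.forward(x)
print("max reconstruction error:", np.abs(layer.inverse(y) - x).max())
```

Stacking several such layers, with the two halves swapped or permuted between them, yields an invertible network whose likelihood can be evaluated exactly.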

Extensive experiments on ten real‑world datasets, including CAIDA‑2018 network traces, ISP traffic logs, and web click streams, compare FLORE against nine state‑of‑the‑art baselines spanning traditional sketches, compressive‑sensing reconstructions, and recent learning‑augmented sketches. FLORE consistently outperforms all baselines: frequency estimation error is reduced by up to 1,000×, entropy and full‑distribution estimation improve by up to 100×, heavy‑hitter detection gains more than 80 points, and processing is 10–100× faster than with learning‑based methods. Moreover, FLORE remains stable under distribution shifts without requiring model retraining, demonstrating its practicality for long‑running streaming applications.

In summary, the paper makes three key contributions: (1) a rigorous matrix‑theoretic analysis exposing the orthogonal irrecoverability inherent in sparse sketch matrices; (2) a demonstration that deep generative priors can effectively fill the missing null‑space, enabling near‑perfect recovery without ground‑truth supervision; and (3) the design and implementation of FLORE, the first invertible, unsupervised generative sketching system that simultaneously achieves high accuracy, low latency, and low memory usage. This work opens a new research direction—generative stream processing—and has immediate implications for network monitoring, real‑time analytics, and any domain where ultra‑fast, memory‑constrained summarization is required.
