Survey on Neural Routing Solvers

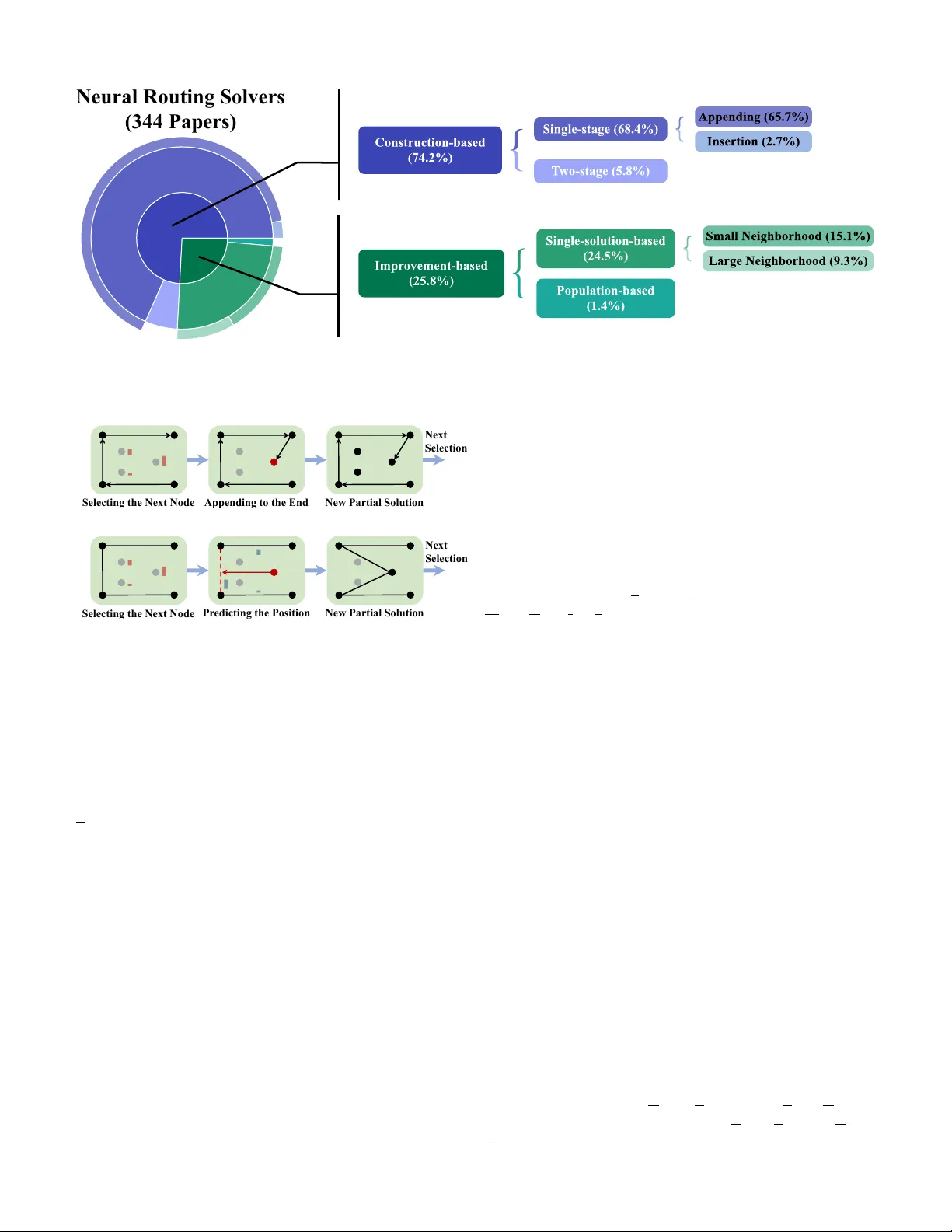

Neural routing solvers (NRSs), which leverage deep learning to tackle vehicle routing problems, have demonstrated notable potential for practical applications. By learning implicit heuristic rules from data, NRSs replace the handcrafted counterparts in …

Authors: Yunpeng Ba, Xi Lin, Changliang Zhou