Post-hoc Popularity Bias Correction in GNN-based Collaborative Filtering

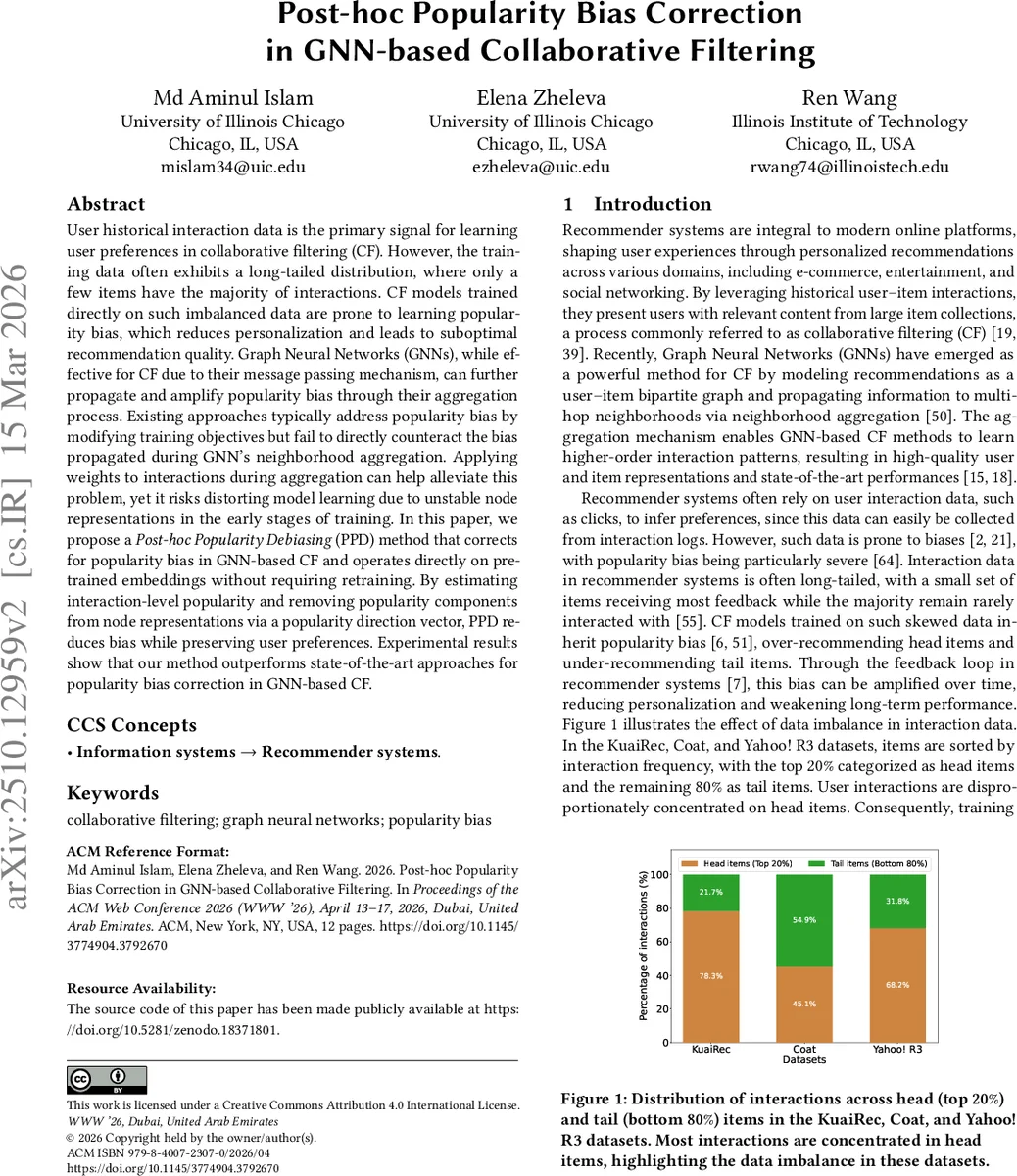

User historical interaction data is the primary signal for learning user preferences in collaborative filtering (CF). However, the training data often exhibits a long-tailed distribution, where only a few items have the majority of interactions. CF models trained directly on such imbalanced data are prone to learning popularity bias, which reduces personalization and leads to suboptimal recommendation quality. Graph Neural Networks (GNNs), while effective for CF due to their message passing mechanism, can further propagate and amplify popularity bias through their aggregation process. Existing approaches typically address popularity bias by modifying training objectives but fail to directly counteract the bias propagated during GNN’s neighborhood aggregation. Applying weights to interactions during aggregation can help alleviate this problem, yet it risks distorting model learning due to unstable node representations in the early stages of training. In this paper, we propose a Post-hoc Popularity Debiasing (PPD) method that corrects for popularity bias in GNN-based CF and operates directly on pre-trained embeddings without requiring retraining. By estimating interaction-level popularity and removing popularity components from node representations via a popularity direction vector, PPD reduces bias while preserving user preferences. Experimental results show that our method outperforms state-of-the-art approaches for popularity bias correction in GNN-based CF.

💡 Research Summary

The paper addresses popularity bias in graph neural network (GNN) based collaborative filtering (CF). Because user‑item interaction logs are typically long‑tailed, a small set of popular items dominates the graph structure. During message passing, high‑degree (popular) items propagate their signals more strongly, pulling user embeddings toward these items and leading to over‑recommendation of head items. Existing solutions mostly modify the training objective (e.g., inverse propensity weighting), which does not directly counteract the bias propagated during neighborhood aggregation. Weighting interactions during aggregation does target that propagation, but the weights depend on node representations that are still unstable early in training, which can produce unreliable popularity estimates and distort model learning.

The authors propose a post‑hoc Popularity Debiasing (PPD) method that operates on already trained user and item embeddings, eliminating the need for retraining. PPD first estimates an interaction‑level popularity score by blending global item popularity (degree) with personalized preference (cosine similarity between user and item embeddings). For each node, a “popularity direction vector” is computed as the normalized, popularity‑weighted average of its neighboring embeddings. The node’s embedding is then projected onto this direction and the projected component is subtracted, effectively removing the popularity component while preserving the preference‑driven part of the representation.
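The two steps described above (interaction‑level popularity scoring, then projection and subtraction) can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the `alpha` blending factor, the max‑degree normalization, and the mapping of cosine similarity to [0, 1] are all hypothetical choices, since this summary does not give the paper's exact formulas.

```python
import numpy as np


def _unit(x, eps=1e-12):
    """L2-normalize along the last axis; all-zero rows stay zero."""
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)


def interaction_popularity(user_emb, item_emb, edges, alpha=0.5):
    """Score each (user, item) edge by blending two signals.

    ASSUMPTION: a convex combination of max-normalized item degree and
    rescaled cosine similarity. The paper's actual blend may differ.
    """
    users, items = edges[:, 0], edges[:, 1]
    degree = np.bincount(items, minlength=item_emb.shape[0]).astype(float)
    global_pop = degree[items] / degree.max()          # in (0, 1]
    cos = np.sum(_unit(user_emb)[users] * _unit(item_emb)[items], axis=1)
    personalized = (1.0 + cos) / 2.0                   # map [-1, 1] to [0, 1]
    return alpha * global_pop + (1.0 - alpha) * personalized


def remove_popularity_component(node_emb, neighbor_emb, node_idx, neighbor_idx, weights):
    """Subtract each node's projection onto its popularity direction."""
    # Popularity-weighted sum of neighbor embeddings for every node.
    # After normalization this points the same way as the weighted average.
    agg = np.zeros_like(node_emb, dtype=float)
    np.add.at(agg, node_idx, weights[:, None] * neighbor_emb[neighbor_idx])
    direction = _unit(agg)  # isolated nodes get a zero direction
    coeff = np.sum(node_emb * direction, axis=1, keepdims=True)
    return node_emb - coeff * direction


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    U = rng.normal(size=(4, 8))   # stand-in for pre-trained user embeddings
    V = rng.normal(size=(5, 8))   # stand-in for pre-trained item embeddings
    edges = np.array([[0, 0], [0, 1], [1, 0], [2, 0], [2, 3], [3, 4]])
    w = interaction_popularity(U, V, edges)
    U_debiased = remove_popularity_component(U, V, edges[:, 0], edges[:, 1], w)
    V_debiased = remove_popularity_component(V, U, edges[:, 1], edges[:, 0], w)
    print(U_debiased.shape, V_debiased.shape)
```

Because the correction only reads the trained embeddings and the interaction list, it can run once as an offline step before scoring. Nodes without neighbors are left unchanged in this sketch.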

Key advantages of PPD include: (1) compatibility with any GNN‑based CF model because it does not alter the loss function; (2) applicability to deployed systems since it works on fixed embeddings; (3) more reliable popularity estimation because it uses stable, post‑training embeddings rather than noisy early‑stage representations; and (4) finer‑grained interaction‑level bias correction compared to prior post‑hoc methods that rely only on node degree.

Experiments on three public datasets (KuaiRec, Coat, Yahoo! R3) demonstrate that PPD reduces average item popularity in recommendations by 15‑20 % and improves tail‑item coverage by 10‑15 % without sacrificing standard accuracy metrics (Recall@20, NDCG@20). Compared with baselines such as IPW‑weighted aggregation, APD, and DAP, PPD achieves comparable or better accuracy while delivering substantially larger bias mitigation. Ablation studies show the importance of combining global and personalized popularity signals and of the projection‑subtraction step.
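For readers who want to check bias metrics of this kind on their own embeddings, the sketch below computes an average recommended‑item popularity and a tail‑item coverage score. The definitions are assumptions made for illustration: popularity is taken to be training‑set item degree, "tail" means every item outside the top `head_fraction` by degree, and the function names are hypothetical. The paper's exact metric definitions are not stated in this summary.

```python
import numpy as np


def top_k_items(user_emb, item_emb, k):
    """Rank items per user by inner-product score and keep the top k.

    Simplification: items already seen in training are not masked out.
    """
    scores = user_emb @ item_emb.T
    return np.argsort(-scores, axis=1)[:, :k]


def average_recommended_popularity(top_k, item_degree):
    """Mean training degree over all recommended (user, item) slots."""
    return float(item_degree[top_k].mean())


def tail_coverage(top_k, item_degree, head_fraction=0.2):
    """Fraction of tail items that appear in at least one top-k list.

    ASSUMPTION: the head is the top `head_fraction` of items by degree.
    """
    n_head = int(np.ceil(head_fraction * item_degree.shape[0]))
    order = np.argsort(-item_degree, kind="stable")
    tail_items = order[n_head:]
    if tail_items.size == 0:
        return 0.0
    recommended = np.unique(top_k)
    return float(np.isin(tail_items, recommended).mean())
```

Running both functions on the top‑k lists produced before and after debiasing gives a direct before/after comparison, alongside the usual Recall@20 and NDCG@20.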

The paper also discusses limitations: the method introduces hyper‑parameters (e.g., the weighting factor between global and personalized popularity) that may need tuning per dataset, and extremely sparse user‑item pairs may yield unstable popularity estimates. Future work could incorporate Bayesian modeling of popularity uncertainty or extend the approach to handle multiple popularity dimensions (e.g., temporal or categorical popularity).

In summary, the proposed PPD framework offers a simple yet effective post‑hoc solution for popularity bias in GNN‑based recommender systems, enabling bias reduction without retraining and preserving recommendation quality.
