ArithmAttack: Evaluating Robustness of LLMs to Noisy Context in Math Problem Solving

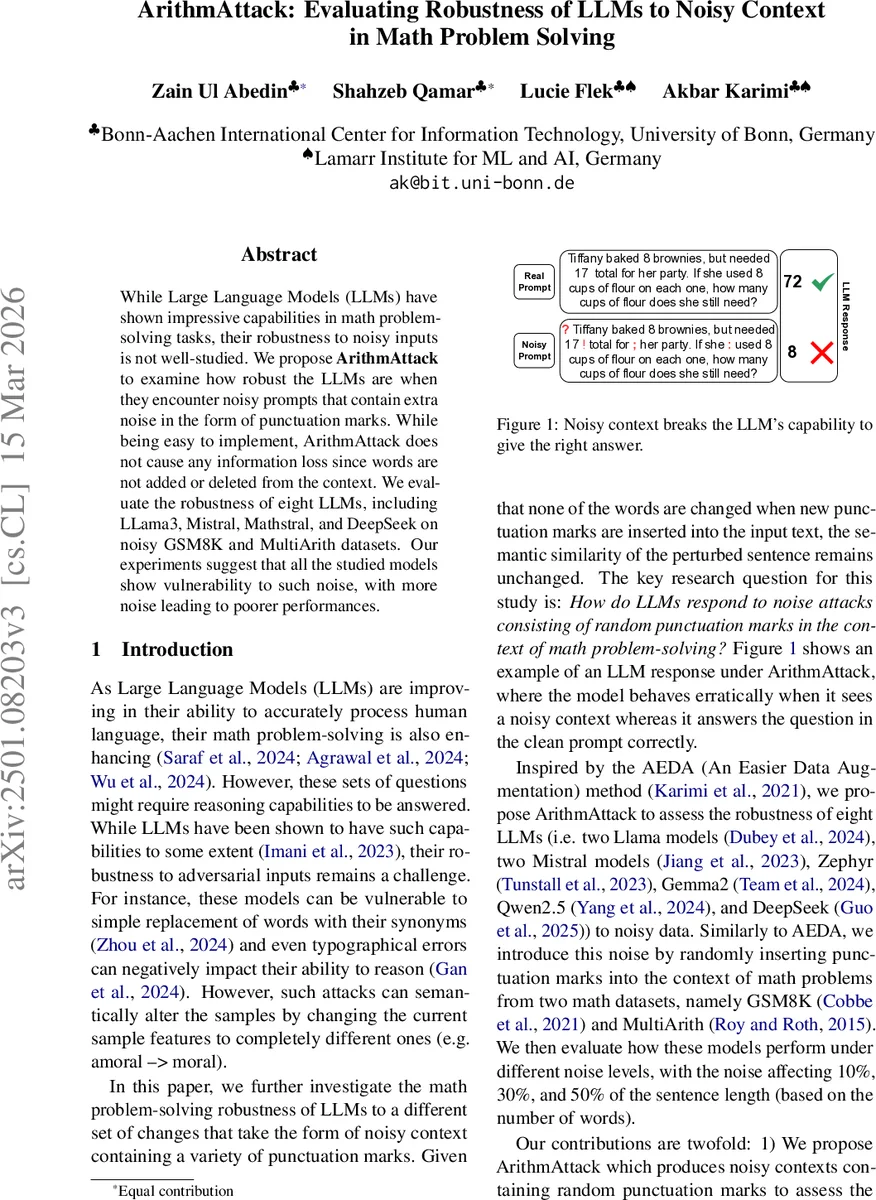

While Large Language Models (LLMs) have shown impressive capabilities in math problem-solving tasks, their robustness to noisy inputs is not well-studied. We propose ArithmAttack to examine how robust the LLMs are when they encounter noisy prompts that contain extra noise in the form of punctuation marks. While being easy to implement, ArithmAttack does not cause any information loss since words are not added or deleted from the context. We evaluate the robustness of eight LLMs, including LLama3, Mistral, Mathstral, and DeepSeek on noisy GSM8K and MultiArith datasets. Our experiments suggest that all the studied models show vulnerability to such noise, with more noise leading to poorer performances.

💡 Research Summary

The paper introduces ArithmAttack, a simple yet effective adversarial method that injects random punctuation marks into math problem prompts without altering any words. By inserting punctuation such as periods, commas, exclamation points, question marks, semicolons, and colons at random positions, the attack preserves semantic similarity (verified to be 100 % using the Universal Sentence Encoder) while disrupting the tokenization and reasoning flow of large language models (LLMs). The authors evaluate eight contemporary LLMs—Llama‑3‑8B, Llama‑3.1‑8B, Mistral‑7B‑Instruct, Mathstral‑7B, Gemma‑2‑2B‑IT, Zephyr‑7B‑Beta, Qwen2.5‑1.5B‑Instruct, and DeepSeek‑R1‑Distill‑Llama‑8B—on two benchmark math datasets, GSM8K (1,320 test items) and MultiArith (180 test items). Each model is prompted in a zero‑shot Chain‑of‑Thought style (“Think step by step …”) and required to end its answer with a fixed marker.

Noise levels are set to 10 %, 30 %, and 50 % of the total word count, representing the proportion of inserted punctuation. Performance is measured by two metrics: (1) Accuracy, the proportion of correctly solved problems, and (2) Attack Success Rate (ASR), defined as the fraction of previously correct answers that become incorrect after noise insertion.

Results show a consistent degradation across all models as punctuation noise increases. Zephyr‑7B exhibits the lowest clean accuracy (≈23 %) and suffers the steepest drop, reaching only 10 % accuracy at 50 % noise, with a high ASR of over 70 %. In contrast, Llama‑3.1‑8B maintains the highest robustness, achieving 82 % clean accuracy and only 12.5 % accuracy at the highest noise level, with the lowest ASR (≈9 %). Mathstral outperforms its sibling Mistral, suggesting that math‑specific pre‑training improves resilience to punctuation perturbations. The impact is more pronounced on GSM8K, likely due to its higher linguistic complexity, whereas MultiArith’s simpler statements experience a milder decline.

A miss‑rate analysis of answer extraction reveals generally low failure rates (<2 %) for most models, but Zephyr and Mistral show higher miss rates (up to 12.8 % and 9 % respectively), indicating additional fragility in following the prescribed answer format.

The authors acknowledge limitations: only one type of noise (punctuation) is explored, the model pool is limited, and only two math datasets are used. Future work should incorporate diverse noise forms (spelling, grammatical errors, abbreviations), expand the range of reasoning tasks (logical, causal), and investigate why punctuation insertion disrupts the internal reasoning pipeline—potentially by affecting token boundaries, positional embeddings, or chain‑of‑thought coherence. Overall, the study highlights that even superficial, non‑semantic perturbations can substantially impair LLMs’ mathematical reasoning, underscoring the need for more robust tokenization and reasoning mechanisms.

Comments & Academic Discussion

Loading comments...

Leave a Comment