Snowflake: A Distributed Streaming Decoder

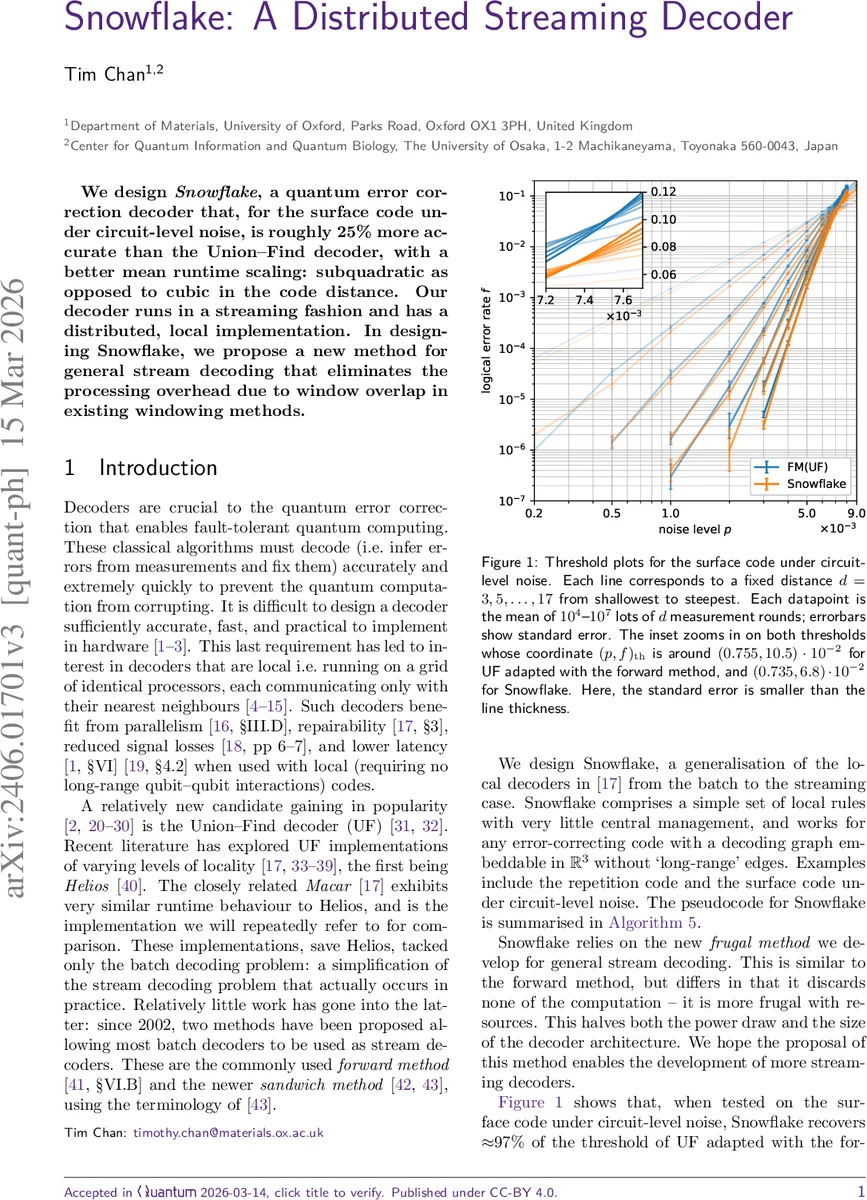

We design Snowflake, a quantum error correction decoder that, for the surface code under circuit-level noise, is roughly 25% more accurate than the Union-Find decoder, with a better mean runtime scaling: subquadratic as opposed to cubic in the code distance. Our decoder runs in a streaming fashion and has a distributed, local implementation. In designing Snowflake, we propose a new method for general stream decoding that eliminates the processing overhead due to window overlap in existing windowing methods.

💡 Research Summary

The paper introduces Snowflake, a novel quantum error‑correction decoder designed for streaming operation on the surface code under realistic circuit‑level noise. Compared with the widely used Union‑Find (UF) decoder, Snowflake achieves a logical error rate roughly 25% lower while reducing the mean runtime scaling from cubic to sub‑quadratic in the code distance d. The key innovation is a new “frugal” decoding method that eliminates the computational overhead associated with overlapping windows in existing streaming approaches (the forward and sandwich methods).

In the traditional forward method, a decoding window of c + b layers is shifted upward by c layers each cycle; the lower c layers form the commit region, and the upper b layers form a buffer. After each window is decoded as a batch, only the corrections within the commit region are kept, while the buffer’s intermediate results are discarded. To avoid loss of accuracy, the buffer size must satisfy b ≳ d, which leads to substantial memory, power, and latency penalties because a large fraction of the computation is repeatedly thrown away.
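The forward schedule can be sketched as a generator of (window, commit) layer ranges. This is a minimal sketch, assuming time layers are indexed by consecutive integers and that the final window, once it reaches the end of the stream, commits everything it contains; the name `forward_windows` is hypothetical and not taken from the paper.

```python
from collections.abc import Iterator


def forward_windows(total_layers: int, c: int, b: int) -> Iterator[tuple[range, range]]:
    """Yield (window, commit) layer ranges for the forward method (assumes c >= 1).

    Each window spans c + b layers; only its lowest c layers are committed
    before the window is raised by c, so the b buffer layers are decoded
    again as part of the next window.
    """
    start = 0
    while True:
        end = start + c + b
        if end >= total_layers:
            # The final window reaches the end of the stream: commit all of it.
            yield range(start, total_layers), range(start, total_layers)
            return
        yield range(start, end), range(start, start + c)
        start += c
```

For 12 layers with c = 2 and b = 4, the four windows decode 24 layers in total to commit 12, because each buffer is decoded again as part of the next window.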

The frugal method rethinks this process. It still raises the window by c layers each cycle, but it only requires the tentative correction T to annihilate defects inside the commit region (Condition 1). After finding T, Snowflake adds T∩E_C to the permanent correction set C and preserves the state associated with the buffer region (T∩E_B) for the next cycle, where E_C and E_B denote the edges lying in the commit and buffer regions respectively. Consequently, the same buffer computation is never repeated. Because the forward method decodes each layer about (c + b)/c times, roughly twice when b ≈ c, this cuts the total number of operations roughly in half and lowers power and area requirements accordingly.
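To make the claimed saving concrete, the sketch below uses a deliberately simple cost model (an assumption, not the paper’s measurements): decoding a window costs one unit per layer it contains, and the frugal method pays only for layers it has not already processed, since the buffer state T∩E_B is carried over. Edges are encoded as hypothetical `(layer, edge_id)` pairs so that splitting T into T∩E_C and T∩E_B is a filter on the layer index; both function names are illustrative.

```python
Edge = tuple[int, int]  # hypothetical encoding: (time layer, edge id)


def split_tentative(tentative: set[Edge], commit_end: int) -> tuple[set[Edge], set[Edge]]:
    """Split a tentative correction T into (T ∩ E_C, T ∩ E_B).

    An edge is in the commit region E_C if its layer is below commit_end;
    every other edge lies in the buffer region E_B.
    """
    committed = {e for e in tentative if e[0] < commit_end}
    return committed, tentative - committed


def decoding_work(total_layers: int, c: int, b: int, frugal: bool) -> int:
    """Count layer-decodings under a toy cost model (assumes c >= 1).

    Forward method: every window of up to c + b layers is decoded from scratch.
    Frugal method: only layers above the previous window's top are new work.
    """
    work, start, seen_top = 0, 0, 0
    while True:
        top = min(start + c + b, total_layers)
        work += top - (seen_top if frugal else start)
        seen_top = top
        if top == total_layers:
            return work
        start += c
```

With c = b = 5 over 100 layers, this model gives 190 layer-decodings for the forward method and 100 for the frugal one; as the stream grows, the ratio approaches (c + b)/c = 2.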

Snowflake’s algorithmic structure is deliberately local. The decoding graph G, built from the detector error model, is embedded in three‑dimensional Euclidean space without long‑range edges. Each vertex of G corresponds to a processor that communicates only with its nearest neighbors, enabling a fully distributed implementation on a 3‑D lattice of identical cores. The authors note that a “flattened” 2‑D version could be realized on current chip technologies with only marginal speed loss, further enhancing practical feasibility.
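Locality means each processor updates its state using only its nearest neighbors, so information travels at most one hop per clock tick. The sketch below illustrates this on a hypothetical cubic lattice, a stand‑in rather than the actual surface‑code decoding graph G, with a toy synchronous rule in which a node becomes active if it or any neighbor was active on the previous tick; the function names are illustrative only.

```python
from itertools import product

Node = tuple[int, int, int]
OFFSETS = ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1))


def neighbors(v: Node, shape: Node):
    """Yield the in-bounds nearest neighbors of v on a cubic lattice."""
    for dx, dy, dz in OFFSETS:
        u = (v[0] + dx, v[1] + dy, v[2] + dz)
        if all(0 <= u[i] < shape[i] for i in range(3)):
            yield u


def synchronous_step(active: set[Node], shape: Node) -> set[Node]:
    """One clock tick: every processor reads only its own and its neighbors' state."""
    nodes = product(*(range(n) for n in shape))
    return {v for v in nodes if v in active or any(u in active for u in neighbors(v, shape))}


def ticks_until_all_active(shape: Node, seed: Node) -> int:
    """Count ticks until activity started at seed has reached every processor."""
    active = {seed}
    total = shape[0] * shape[1] * shape[2]
    ticks = 0
    while len(active) < total:
        active = synchronous_step(active, shape)
        ticks += 1
    return ticks
```

Starting from one corner of a 5 × 5 × 5 lattice, the activity needs 12 ticks, the largest Manhattan distance in the lattice, to reach every node, and no node ever reads state from beyond its immediate neighbors.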

Performance evaluation focuses on the surface code with circuit‑level noise. Threshold plots show that Snowflake reaches about 97% of the threshold of UF adapted with the forward method, while its logical error rate is 24.9% (±0.5%) lower than UF’s. Runtime measurements show that the mean time per decoding cycle scales sub‑quadratically in d, whereas UF’s scales cubically, confirming the claimed improvement. The authors also discuss hard‑real‑time constraints (the decoder must finish within a measurement interval) and show that the frugal method meets these limits even for large d.
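A common way to read runtime‑scaling claims is to fit the exponent k in runtime ≈ A·d^k by least squares on log–log axes: sub‑quadratic scaling means k < 2, and cubic scaling means k ≈ 3. The helper below is a generic sketch with a hypothetical name; it is not the authors’ analysis code, and the inputs in the usage note are synthetic.

```python
import math


def scaling_exponent(distances: list[float], runtimes: list[float]) -> float:
    """Least-squares slope k of log(runtime) against log(d), so runtime ~ A * d**k.

    Requires at least two pairs with distinct distances (otherwise the slope is undefined).
    """
    if len(distances) != len(runtimes) or len(distances) < 2:
        raise ValueError("need at least two (distance, runtime) pairs")
    xs = [math.log(d) for d in distances]
    ys = [math.log(t) for t in runtimes]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx
```

For example, synthetic runtimes proportional to d**3 at d = 3, 5, 7, 9 yield an exponent of 3 up to floating‑point rounding.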

Beyond raw performance, the paper emphasizes the broader impact of eliminating window overlap overhead. By reducing the required buffer size, Snowflake cuts the memory bandwidth and storage needed for streaming syndrome data, which is critical for long‑duration quantum memory experiments and large‑scale fault‑tolerant processors. The distributed, local nature of the decoder also simplifies hardware routing and improves fault‑tolerance of the decoder itself.

In summary, Snowflake presents a comprehensive solution to the streaming decoding problem: a frugal algorithm that retains all useful intermediate information, a local graph‑based architecture that scales naturally with code distance, and empirical evidence of superior accuracy and speed over the state‑of‑the‑art Union‑Find decoder. These advances make Snowflake a strong candidate for integration into next‑generation quantum computing platforms that require real‑time, low‑latency error correction.
