Estimating Causal Effects of Text Interventions Leveraging LLMs

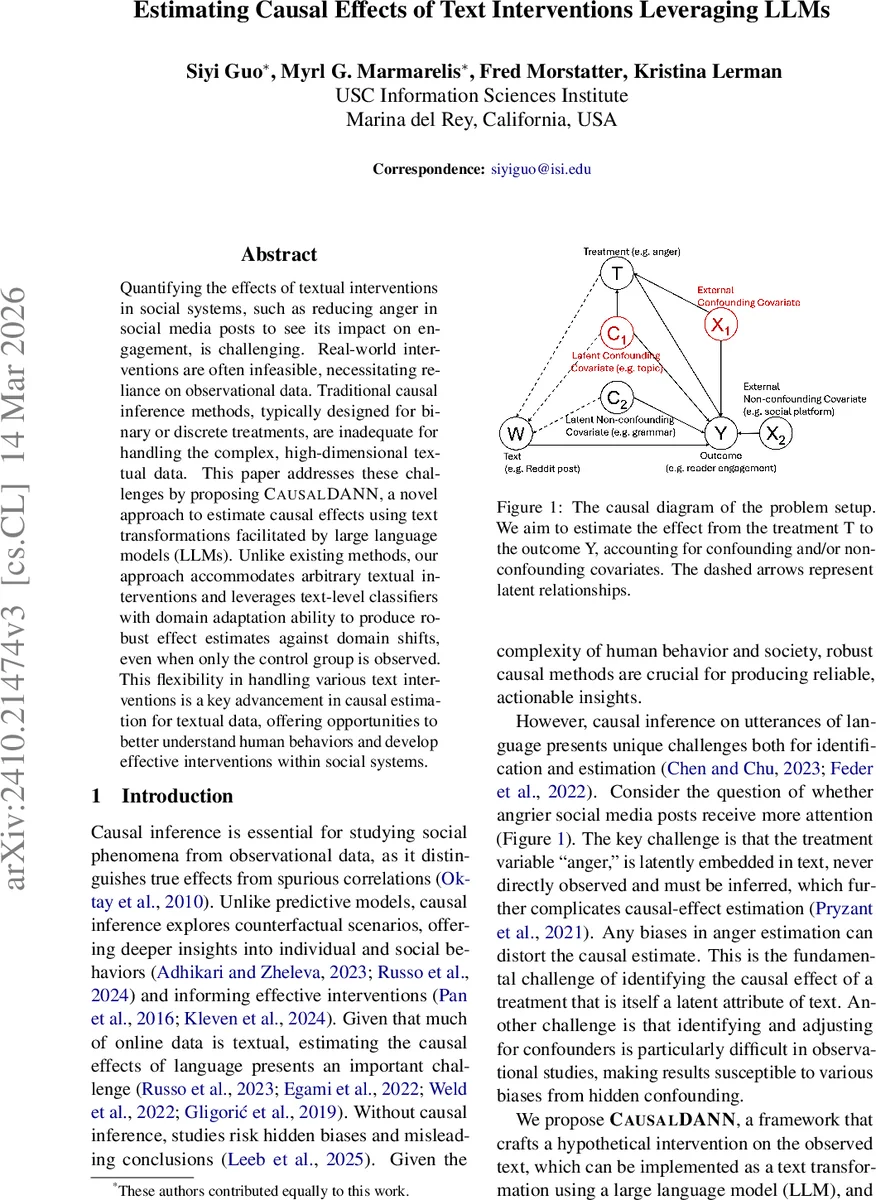

Quantifying the effects of textual interventions in social systems, such as reducing anger in social media posts to see its impact on engagement, is challenging. Real-world interventions are often infeasible, necessitating reliance on observational data. Traditional causal inference methods, typically designed for binary or discrete treatments, are inadequate for handling the complex, high-dimensional textual data. This paper addresses these challenges by proposing CausalDANN, a novel approach to estimate causal effects using text transformations facilitated by large language models (LLMs). Unlike existing methods, our approach accommodates arbitrary textual interventions and leverages text-level classifiers with domain adaptation ability to produce robust effect estimates against domain shifts, even when only the control group is observed. This flexibility in handling various text interventions is a key advancement in causal estimation for textual data, offering opportunities to better understand human behaviors and develop effective interventions within social systems.

💡 Research Summary

The paper tackles the problem of estimating causal effects when the treatment itself is embedded in text, a scenario common in social media and online communication but poorly served by traditional causal inference methods that assume binary or discrete treatments. The authors introduce CausalDANN, a framework that (1) creates a hypothetical intervention on observed text by applying a transformation g(·) using a large language model (LLM), (2) predicts outcomes for both the original and transformed texts with a domain‑adversarial neural network (DANN) that learns domain‑invariant representations, and (3) computes average treatment effects (ATE) and conditional average treatment effects (CATE) from the predicted outcomes.

Methodologically, the authors formalize the problem within the potential‑outcomes framework, retaining the classic causal assumptions of SUTVA, overlap, and ignorability. Overlap is ensured by designing LLM prompts that modify only a targeted attribute (e.g., anger intensity) while preserving other linguistic dimensions, so the transformed text remains within the support of the observed data. Ignorability holds because the model conditions on the full text, which implicitly contains latent covariates (grammar, style) that would otherwise need explicit control.

The intervention step leverages LLMs (e.g., GPT‑4) to re‑phrase or adjust sentiment, or, in structured datasets, to select alternative observed examples (e.g., 5‑star vs. 1‑star reviews). The outcome prediction component compares two approaches: a vanilla BERT classifier trained only on control texts, and the proposed CausalDANN that augments BERT with a domain classifier trained adversarially to make the learned features indistinguishable between control and transformed domains. This adversarial training mitigates the distribution shift that typically degrades predictions on counterfactual text.

Experiments are conducted on three semi‑synthetic datasets: (i) anger‑intensified Reddit posts, (ii) product reviews with opposite star ratings, and (iii) Reddit’s “AmITheAsshole” posts where LLMs generate moral judgments as outcomes. For each scenario, the authors synthesize counterfactual outcomes using LLMs, then evaluate bias and mean‑squared error of ATE estimates produced by CausalDANN, inverse propensity weighting (IPW), doubly robust (DR) methods, and the BERT baseline. Across all settings, CausalDANN consistently yields lower bias—approximately 15‑20 % improvement over IPW/DR—and better MSE, especially when the transformation induces a large semantic shift (e.g., strong anger amplification).

The paper acknowledges several limitations. First, ensuring that LLM transformations affect only the intended attribute is non‑trivial; residual changes could introduce hidden confounding. Second, LLM‑generated data may inherit societal biases, potentially contaminating both the intervention and the synthetic outcomes. Third, the evaluation relies on semi‑synthetic data; real‑world validation with actual interventions or A/B tests remains an open challenge. The authors suggest future work involving human‑in‑the‑loop verification of transformation fidelity, bias mitigation techniques for LLM outputs, and deployment in live platforms to assess external validity.

In summary, CausalDANN represents a novel contribution at the intersection of causal inference and natural language processing. By treating text as the treatment itself and employing domain‑adversarial learning to bridge the gap between observed and counterfactual text distributions, the framework enables robust causal effect estimation even when only control data are available. This opens new avenues for studying the impact of linguistic interventions on engagement, sentiment spread, and social outcomes, while also highlighting the need for careful handling of LLM‑induced biases and thorough real‑world testing.

Comments & Academic Discussion

Loading comments...

Leave a Comment