Learning Energy-Efficient Air--Ground Actuation for Hybrid Robots on Stair-Like Terrain

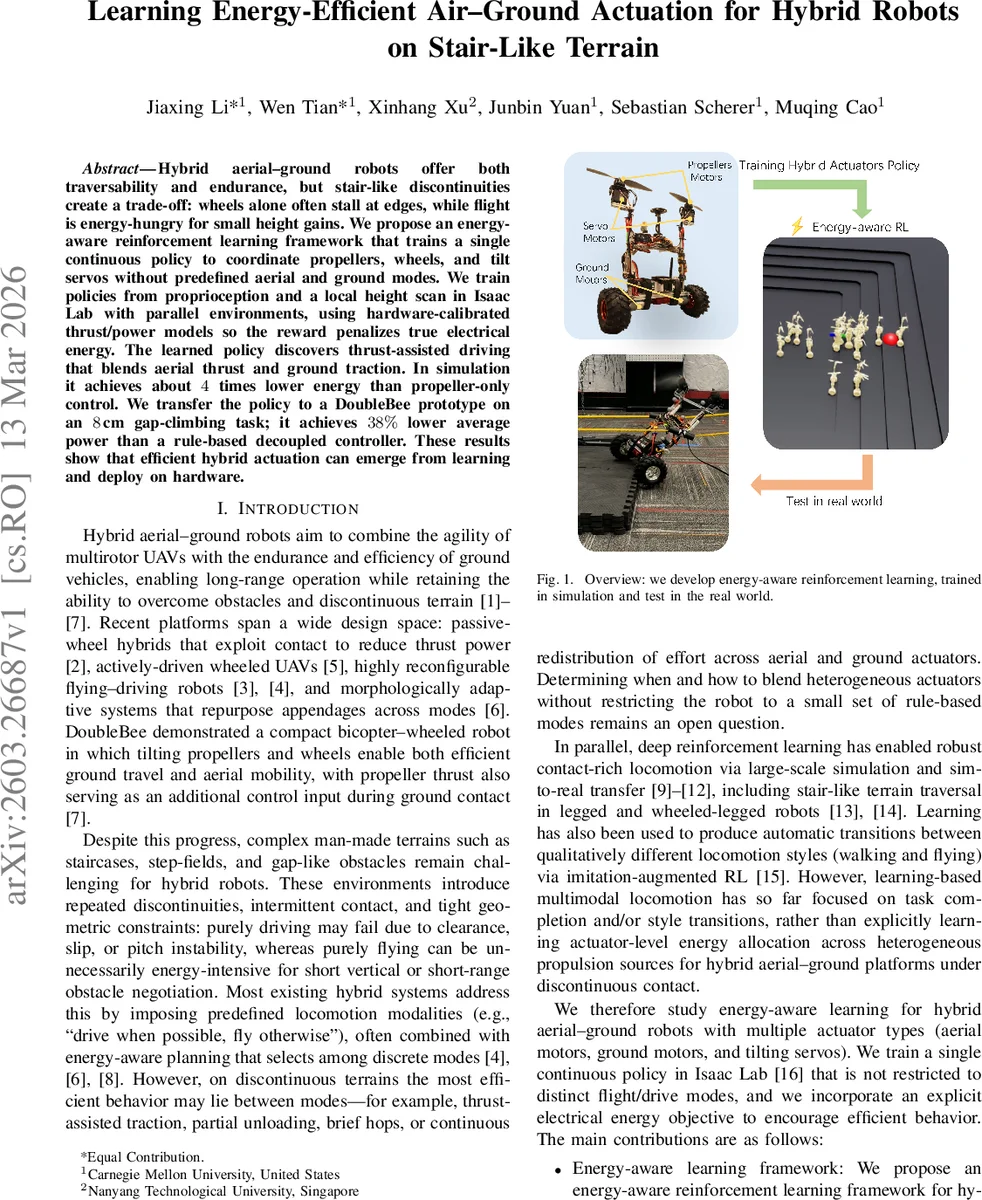

Hybrid aerial–ground robots offer both traversability and endurance, but stair-like discontinuities create a trade-off: wheels alone often stall at edges, while flight is energy-hungry for small height gains. We propose an energy-aware reinforcement learning framework that trains a single continuous policy to coordinate propellers, wheels, and tilt servos without predefined aerial and ground modes. We train policies from proprioception and a local height scan in Isaac Lab with parallel environments, using hardware-calibrated thrust/power models so the reward penalizes true electrical energy. The learned policy discovers thrust-assisted driving that blends aerial thrust and ground traction. In simulation it achieves about 4 times lower energy than propeller-only control. We transfer the policy to a DoubleBee prototype on an 8cm gap-climbing task; it achieves 38% lower average power than a rule-based decoupled controller. These results show that efficient hybrid actuation can emerge from learning and deploy on hardware.

💡 Research Summary

This paper tackles the problem of efficiently traversing stair‑like discontinuous terrain with a hybrid aerial‑ground robot that combines bicopter propellers, tilting servos, and driven wheels. Traditional hybrid platforms typically rely on a set of predefined locomotion modes—“drive when possible, fly otherwise”—and use energy‑aware planning to select among these discrete modes. Such approaches, however, restrict the robot to a limited set of behaviors and cannot exploit intermediate actuation strategies that may be more energy‑optimal on terrains where contact is intermittent and geometry is tight.

The authors propose an energy‑aware reinforcement learning (RL) framework that learns a single continuous policy capable of coordinating all three actuator families without any hard‑coded mode switches. Crucially, the RL reward incorporates a true electrical power consumption term derived from calibrated thrust‑to‑PWM‑to‑power models of the propellers, ensuring that the learned behavior directly minimizes the robot’s energy usage rather than a proxy such as thrust magnitude.

Simulation and training are performed in NVIDIA Isaac Lab, where a high‑fidelity USD model of the DoubleBee robot is built from CAD data, preserving measured masses and inertia tensors. Propeller dynamics are modeled by a two‑stage mapping: motor speed → PWM → thrust, with a fourth‑order polynomial fit to bench‑test data (RMSE ≈ 4 × 10⁻³ N). The same polynomial is used to compute instantaneous electrical power for the energy penalty. Wheels and servos are controlled via PD loops with realistic saturation and first‑order lag, mirroring the real‑world low‑level controllers.

The task environment consists of a procedurally generated inverted‑pyramid staircase on a 10 × 10 m tile. A difficulty scalar d interpolates step heights from 0.01 m (almost flat) to 0.126 m (challenging for wheeled locomotion). A curriculum gradually increases the maximum target distance, encouraging the policy to master increasingly difficult gaps. Observations supplied to the policy at each 20 ms control step include: wheel angular velocities, body linear and angular velocities, gravity direction (projected), binary wheel‑ground contact flags, a 6 × 6 local height scan (36 values), a normalized goal‑direction command (body‑frame x, y, and heading error), and the previous action vector, totaling a 23‑dimensional observation.

The action space is six‑dimensional and continuous: normalized commands for left/right wheel speeds, left/right servo tilt angles, and left/right propeller throttles. These are linearly scaled to physical references (e.g., wheel speed 500 rad/s · action, servo angle π/2 · action, propeller speed ±500 rad/s · action). The policy is a standard feed‑forward neural network trained with Proximal Policy Optimization (PPO).

Reward composition: (i) task progress and sparse terminal bonuses for reaching the goal, (ii) stability terms penalizing excessive tilt, heading error, and wheel speed deviation, and (iii) an energy term proportional to instantaneous electrical power consumption. Weighting coefficients are tuned so that energy minimization does not compromise task success.

Training results in simulation reveal an emergent “thrust‑assisted driving” behavior. Rather than fully switching to flight when a step is encountered, the policy applies a modest upward thrust while maintaining wheel contact, effectively increasing normal force and traction. This hybrid actuation reduces slip and prevents the robot from stalling at stair edges. Quantitatively, the learned policy consumes roughly one‑quarter of the power used by a baseline controller that relies solely on propeller thrust for vertical motion (i.e., ~4× energy savings) while achieving comparable success rates across the full difficulty range.

For sim‑to‑real transfer, the authors deploy the learned policy on a physical DoubleBee prototype to climb an 8 cm gap. They incorporate domain randomization (mass, friction, motor gains) and sensor noise during training to bridge the reality gap. The baseline for hardware experiments is the “decoupled” rule‑based controller from the original DoubleBee work, which keeps pitch control primarily with propellers and uses wheels for translation, but treats flight and ground modes as separate. In real‑world trials, the learned policy reduces average electrical power consumption by 38 % relative to the decoupled controller while successfully crossing the gap in all trials. No significant degradation in stability or tracking accuracy is observed.

The paper’s contributions are threefold: (1) an RL framework that directly penalizes true electrical energy, enabling the policy to discover energy‑optimal hybrid actuation; (2) demonstration that a single continuous policy can autonomously learn non‑intuitive blending of aerial thrust and ground traction without any predefined mode logic; (3) successful transfer of the policy from high‑fidelity simulation to a real hybrid robot, validating the approach on a representative discontinuous‑terrain task.

Overall, this work advances the state of the art in hybrid aerial‑ground locomotion by moving beyond discrete mode selection toward continuous, energy‑aware actuation synthesis. It opens pathways for deploying hybrid robots in energy‑constrained settings such as disaster response, infrastructure inspection, or long‑duration exploration where frequent small obstacles demand frequent mode blending. Future extensions could explore richer perception (e.g., vision‑based terrain mapping), multi‑robot coordination, and online adaptation of the energy model to account for battery health or varying environmental conditions.

Comments & Academic Discussion

Loading comments...

Leave a Comment