Stake the Points: Structure-Faithful Instance Unlearning

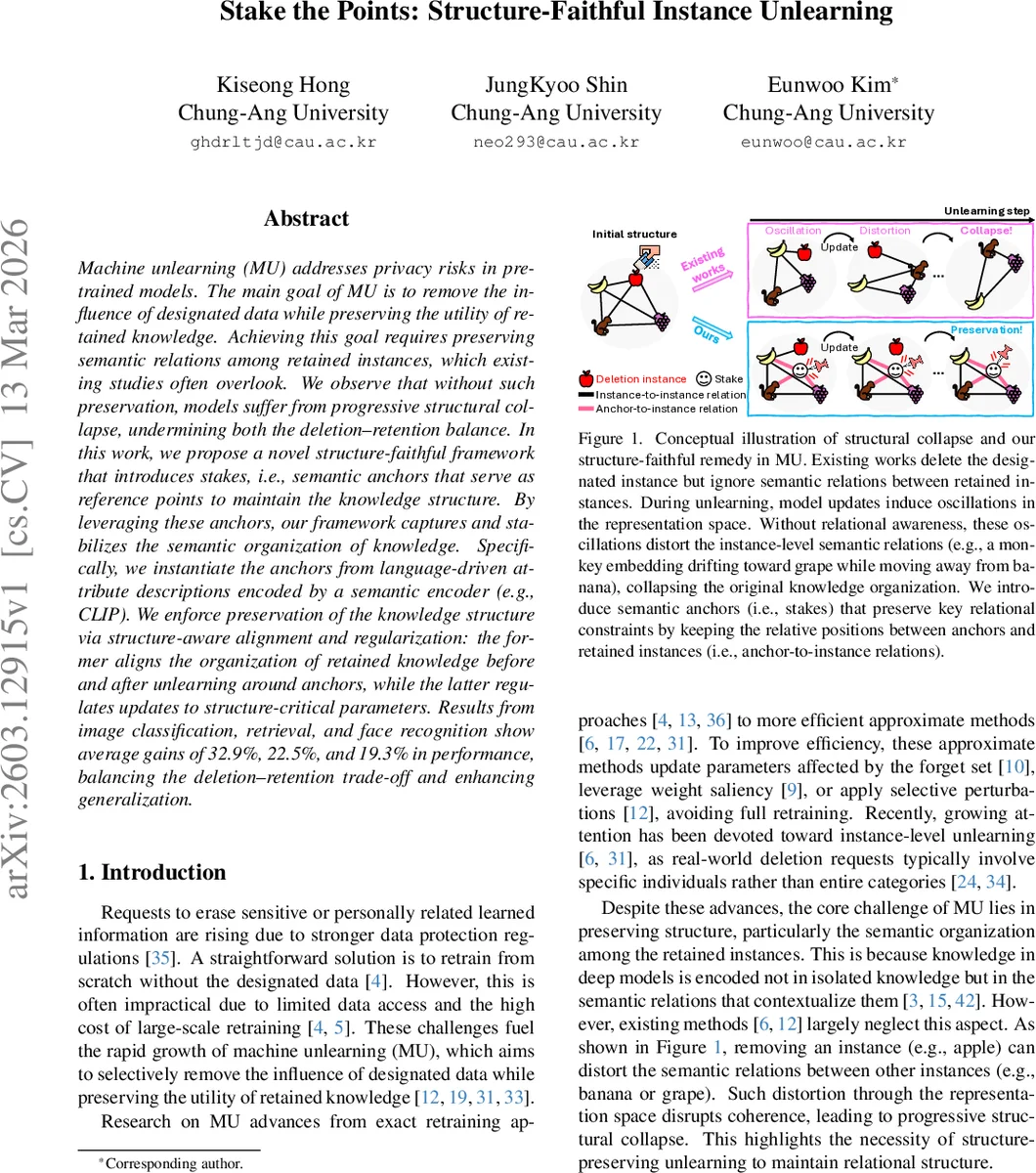

Machine unlearning (MU) addresses privacy risks in pretrained models. The main goal of MU is to remove the influence of designated data while preserving the utility of retained knowledge. Achieving this goal requires preserving semantic relations among retained instances, which existing studies often overlook. We observe that without such preservation, models suffer from progressive structural collapse, undermining both the deletion-retention balance. In this work, we propose a novel structure-faithful framework that introduces stakes, i.e., semantic anchors that serve as reference points to maintain the knowledge structure. By leveraging these anchors, our framework captures and stabilizes the semantic organization of knowledge. Specifically, we instantiate the anchors from language-driven attribute descriptions encoded by a semantic encoder (e.g., CLIP). We enforce preservation of the knowledge structure via structure-aware alignment and regularization: the former aligns the organization of retained knowledge before and after unlearning around anchors, while the latter regulates updates to structure-critical parameters. Results from image classification, retrieval, and face recognition show average gains of 32.9%, 22.5%, and 19.3% in performance, balancing the deletion-retention trade-off and enhancing generalization.

💡 Research Summary

Machine unlearning (MU) aims to erase the influence of specific data points from a pretrained model while keeping the model useful for the remaining data. Existing exact or approximate MU methods focus primarily on removing the targeted information, but they largely ignore the relational structure among the retained instances. This oversight leads to a phenomenon the authors call “structural collapse”: as the model updates to forget the designated samples, the embeddings of the retained data drift, causing previously meaningful semantic relationships (e.g., “banana is closer to apple than to grape”) to deteriorate. The authors empirically demonstrate that the degree of structural collapse correlates negatively with the deletion‑retention trade‑off, i.e., larger collapse results in a bigger gap between performance on retained data and the ability to delete the forget set.

To address this, the paper introduces a structure‑faithful unlearning framework built around semantic anchors (referred to as “stakes”). For each class, a large language model is prompted to generate a set of human‑readable attribute descriptions (e.g., texture, shape, typical context). These textual attributes are encoded by a frozen multimodal encoder such as CLIP, producing a fixed‑dimensional anchor vector for that class. Anchors are data‑free and remain unchanged throughout the unlearning process, providing a stable reference even when the retention set is unavailable.

The authors define structure as the matrix of affinities (inner products) between instance embeddings and the anchors. The original structure (S_{ori}=V_{ori}A^\top) is computed from the pretrained model’s embeddings (V_{ori}). During unlearning, a learnable projector (p_\omega) maps the current feature extractor’s outputs to the same semantic space, yielding (V_{unl}) and the updated structure (S_{unl}=V_{unl}A^\top).

Two complementary constraints are proposed to preserve this structure:

- Structure‑aware alignment: maximize the average cosine similarity between the rows of (S_{ori}) and (S_{unl}). This loss, \

Comments & Academic Discussion

Loading comments...

Leave a Comment