Hierarchical Reference Sets for Robust Unsupervised Detection of Scattered and Clustered Outliers

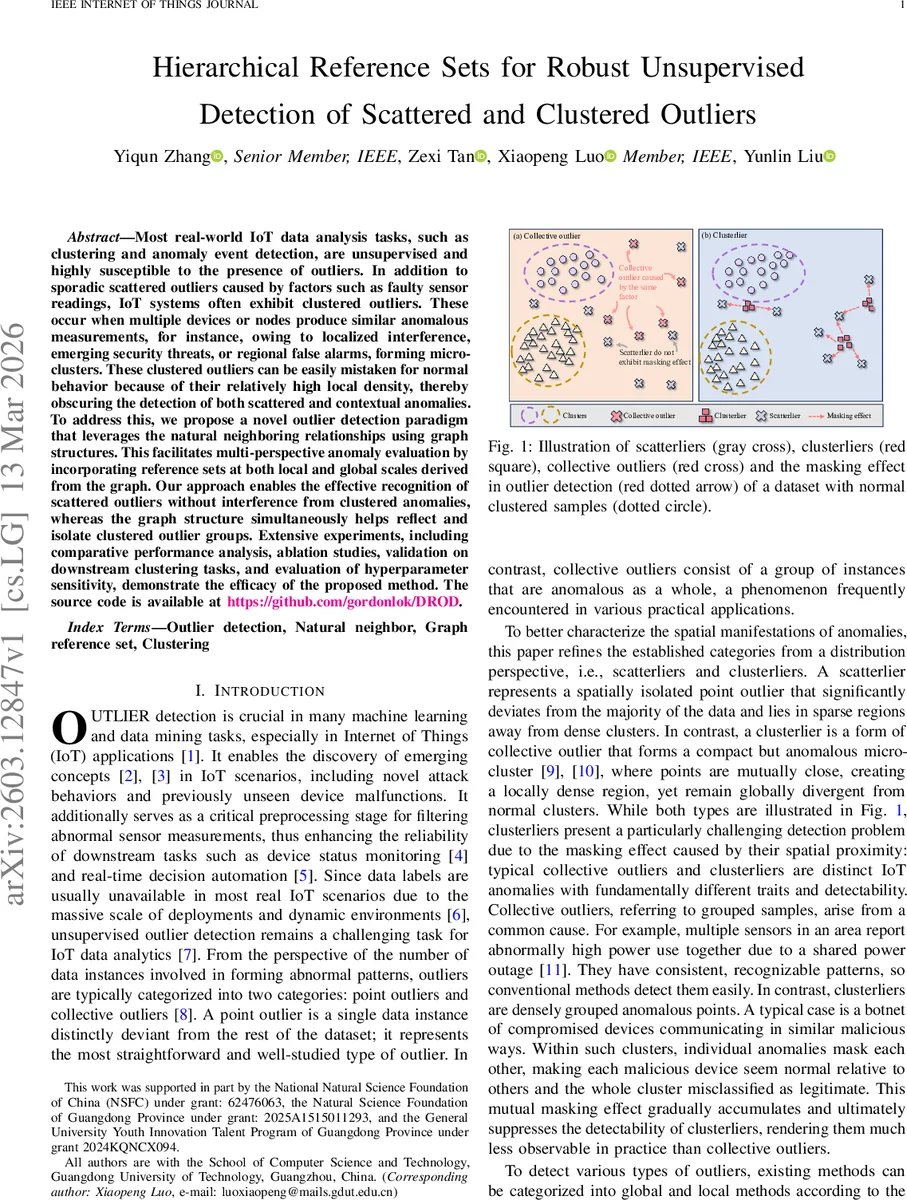

Most real-world IoT data analysis tasks, such as clustering and anomaly event detection, are unsupervised and highly susceptible to the presence of outliers. In addition to sporadic scattered outliers caused by factors such as faulty sensor readings, IoT systems often exhibit clustered outliers. These occur when multiple devices or nodes produce similar anomalous measurements, for instance, owing to localized interference, emerging security threats, or regional false alarms, forming micro-clusters. These clustered outliers can be easily mistaken for normal behavior because of their relatively high local density, thereby obscuring the detection of both scattered and contextual anomalies. To address this, we propose a novel outlier detection paradigm that leverages the natural neighboring relationships using graph structures. This facilitates multi-perspective anomaly evaluation by incorporating reference sets at both local and global scales derived from the graph. Our approach enables the effective recognition of scattered outliers without interference from clustered anomalies, whereas the graph structure simultaneously helps reflect and isolate clustered outlier groups. Extensive experiments, including comparative performance analysis, ablation studies, validation on downstream clustering tasks, and evaluation of hyperparameter sensitivity, demonstrate the efficacy of the proposed method. The source code is available at https://github.com/gordonlok/DROD.

💡 Research Summary

The paper addresses a critical challenge in Internet‑of‑Things (IoT) analytics: the simultaneous detection of two fundamentally different types of anomalies—isolated “scatterliers” and densely packed but globally abnormal “clusterliers.” Scatterliers are individual points that lie far from any dense region, while clusterliers form compact micro‑clusters whose local density is high enough to mask them from conventional local outlier detectors. Existing unsupervised methods fall into two categories. Global approaches assume a homogeneous data distribution and therefore fail when the real data contain mixed densities or clustered anomalies. Local k‑Nearest‑Neighbor (k‑NN) methods, even those enhanced with adaptive neighbor selection such as Natural Neighbor (NN), treat each point in isolation and cannot capture the collective abnormality of a micro‑cluster; moreover, the presence of clusterliers can inflate the neighbor set of nearby scatterliers, causing a masking effect that hides the scatterliers.

To overcome these limitations, the authors propose a novel paradigm built on the concept of Natural Neighbors—a symmetric mutual‑nearest‑neighbor relationship where two samples are natural neighbors if each appears in the other’s k‑NN list. The search radius λ is automatically determined by a data‑driven algorithm, allowing each point to have a variable number of natural neighbors that adapts to local density.

The method constructs two hierarchical reference sets:

-

Natural Neighbor Subsets (NRS) – The dataset is partitioned into subsets of mutually connected natural neighbors. Each NRS is a tightly knit group of points sharing high intra‑subset similarity. Within an NRS, the Local Anomaly Index (LAI) is computed for each sample based on its local natural density ρ (the cardinality of its natural neighbor set) relative to the average density of the subset. Because LAI only considers intra‑subset information, the high density of a clusterlier does not dominate the score of surrounding scatterliers, mitigating the masking effect.

-

Graph Reference Set (GRS) – The NRSs become vertices of a graph. Edges are formed between NRSs that contain natural neighbor pairs across subsets; the Link Strength (LS) quantifies how many such cross‑subset natural neighbor pairs exist. The Subset Anomaly Index (SAI) of an NRS is derived from its LS with other NRSs. Normal large clusters generate many connections and thus low SAI, whereas a clusterlier, represented by a small isolated group of NRSs, exhibits weak connectivity and consequently high SAI.

The final anomaly score for a sample is a weighted combination of its LAI and the SAI of its containing NRS. This dual‑reference scoring captures both point‑wise deviation (effective for scatterliers) and group‑wise isolation (effective for clusterliers). The weighting factor β is automatically adjusted based on subset size and density, eliminating the need for manual hyper‑parameter tuning aside from the intrinsic λ, which itself is data‑adaptive.

Algorithmic pipeline:

- Perform natural neighbor search to obtain NB(·) for all points.

- Partition the data into NRSs using NB relationships, prioritizing dense points for subset seeding.

- Compute LAI for each point within its NRS.

- Build the GRS by linking NRSs via cross‑subset natural neighbor pairs and compute LS and SAI.

- Combine LAI and SAI to produce the final anomaly score.

Experimental evaluation spans 32 benchmark datasets of varying dimensionality, density heterogeneity, and anomaly composition, as well as real‑world IoT sensor streams. The proposed method (named DROD) is compared against a broad spectrum of baselines: global density methods (e.g., Isolation Forest), local density methods (LOF, COF), clustering‑based detectors (CBLOF), and recent graph‑enhanced approaches (GNAN, HDIOD). Performance metrics include AUC, F1‑score, and PR‑AUC. DROD consistently outperforms all baselines, with especially large margins on datasets where clusterliers constitute a significant portion (average AUC improvement of 0.07 over the second‑best method). Ablation studies demonstrate that removing either the NRS (LAI) or GRS (SAI) component degrades performance, confirming their complementary roles.

A downstream clustering experiment further validates practical utility: after removing points flagged as outliers by each method, standard clustering algorithms (K‑means, Spectral Clustering) achieve higher Adjusted Rand Index (ARI) and Normalized Mutual Information (NMI), indicating that DROD’s outlier removal yields cleaner cluster structures.

Complexity analysis shows that natural neighbor search can be implemented with KD‑Tree or Ball‑Tree structures in O(n log n) time, while subset formation and graph construction are linear in the number of points. Consequently, the approach scales to hundreds of thousands of IoT measurements and can be integrated into streaming pipelines.

The authors release the full source code on GitHub (https://github.com/gordonlok/DROD), ensuring reproducibility. Hyper‑parameter sensitivity experiments reveal that the method is robust to variations in sampling rate and the number of repetitions used for stochastic stability, further supporting its applicability in diverse IoT settings.

In summary, the paper introduces a robust unsupervised outlier detection framework that simultaneously addresses isolated and clustered anomalies by leveraging hierarchical natural‑neighbor‑based reference sets. The dual anomaly indices effectively neutralize the masking effect of dense micro‑clusters, require minimal parameter tuning, and demonstrate superior performance across extensive benchmarks and real IoT scenarios, making it a valuable contribution to the field of anomaly detection in heterogeneous, large‑scale sensor data.

Comments & Academic Discussion

Loading comments...

Leave a Comment