Mask2Flow-TSE: Two-Stage Target Speaker Extraction with Masking and Flow Matching

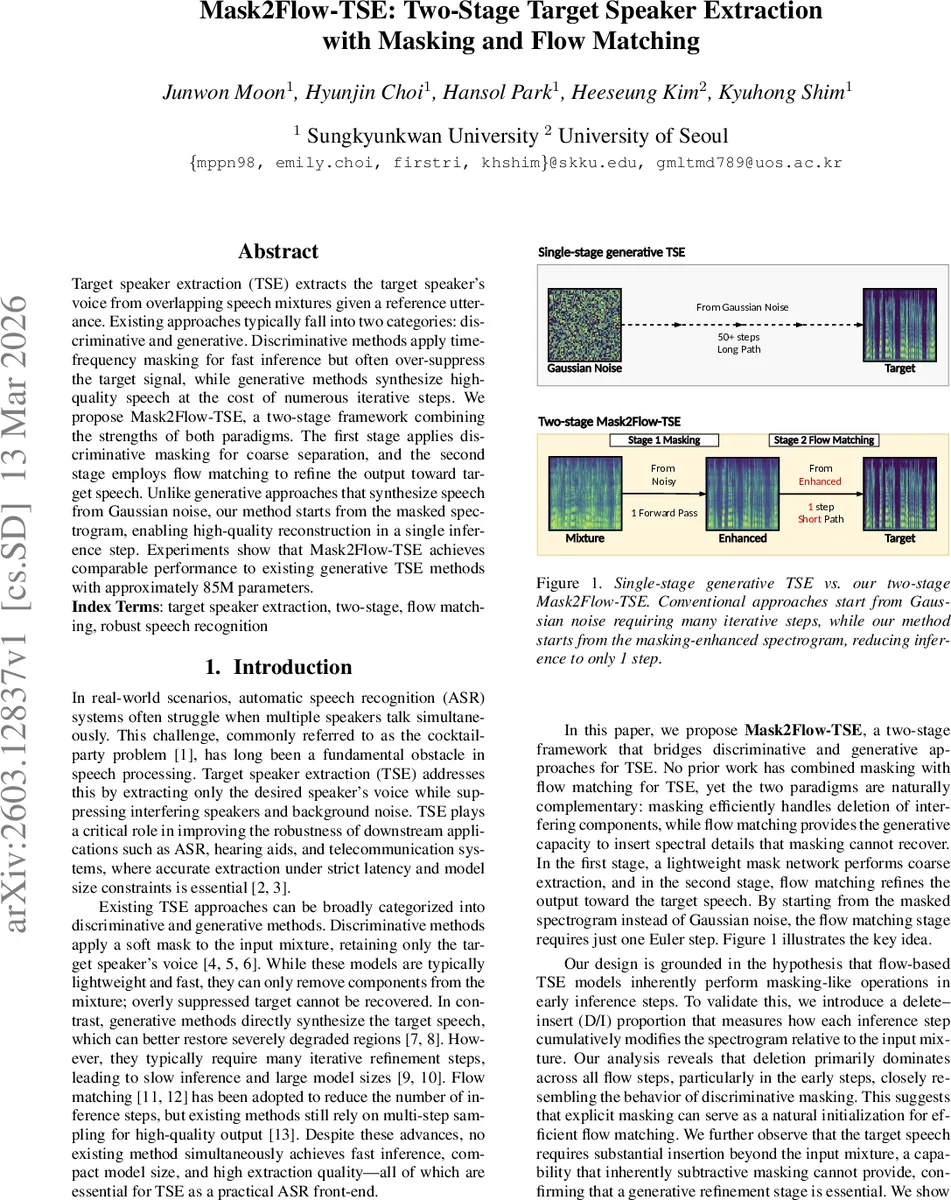

Target speaker extraction (TSE) extracts the target speaker’s voice from overlapping speech mixtures given a reference utterance. Existing approaches typically fall into two categories: discriminative and generative. Discriminative methods apply time-frequency masking for fast inference but often over-suppress the target signal, while generative methods synthesize high-quality speech at the cost of numerous iterative steps. We propose Mask2Flow-TSE, a two-stage framework combining the strengths of both paradigms. The first stage applies discriminative masking for coarse separation, and the second stage employs flow matching to refine the output toward target speech. Unlike generative approaches that synthesize speech from Gaussian noise, our method starts from the masked spectrogram, enabling high-quality reconstruction in a single inference step. Experiments show that Mask2Flow-TSE achieves comparable performance to existing generative TSE methods with approximately 85M parameters.

💡 Research Summary

Mask2Flow‑TSE addresses the long‑standing trade‑off in target speaker extraction (TSE) between fast, lightweight discriminative masking and high‑quality but computationally heavy generative synthesis. The authors first observe that flow‑based generative TSE models, when examined step by step, are heavily “deletion‑dominant” in their early inference stages. To quantify this, they introduce a Delete‑Insert (D/I) proportion metric that measures how much energy is removed (Delete) versus added (Insert) relative to the input mixture at each intermediate step. An 8‑step flow model on Libri2Mix shows deletion rates of ~94 % at step 1 and still ~71 % at step 8, while the clean target requires roughly 25‑28 % insertion energy that a pure mask cannot provide. This analysis leads to the central hypothesis: the early deletion work of a flow model can be replaced by an explicit masking stage, freeing the flow stage to focus on the insertion gap.

The proposed two‑stage architecture consists of:

- Stage 1 – Discriminative Masking

- A pretrained WaveLM speaker encoder extracts a d‑vector from a short reference utterance.

- The masking network combines the log‑mel spectrogram of the mixture (X) with the speaker embedding (d) via convolutional layers followed by bidirectional LSTMs with residual connections.

- A sigmoid‑activated linear layer outputs a soft mask M∈

Comments & Academic Discussion

Loading comments...

Leave a Comment