Fourier Angle Alignment for Oriented Object Detection in Remote Sensing

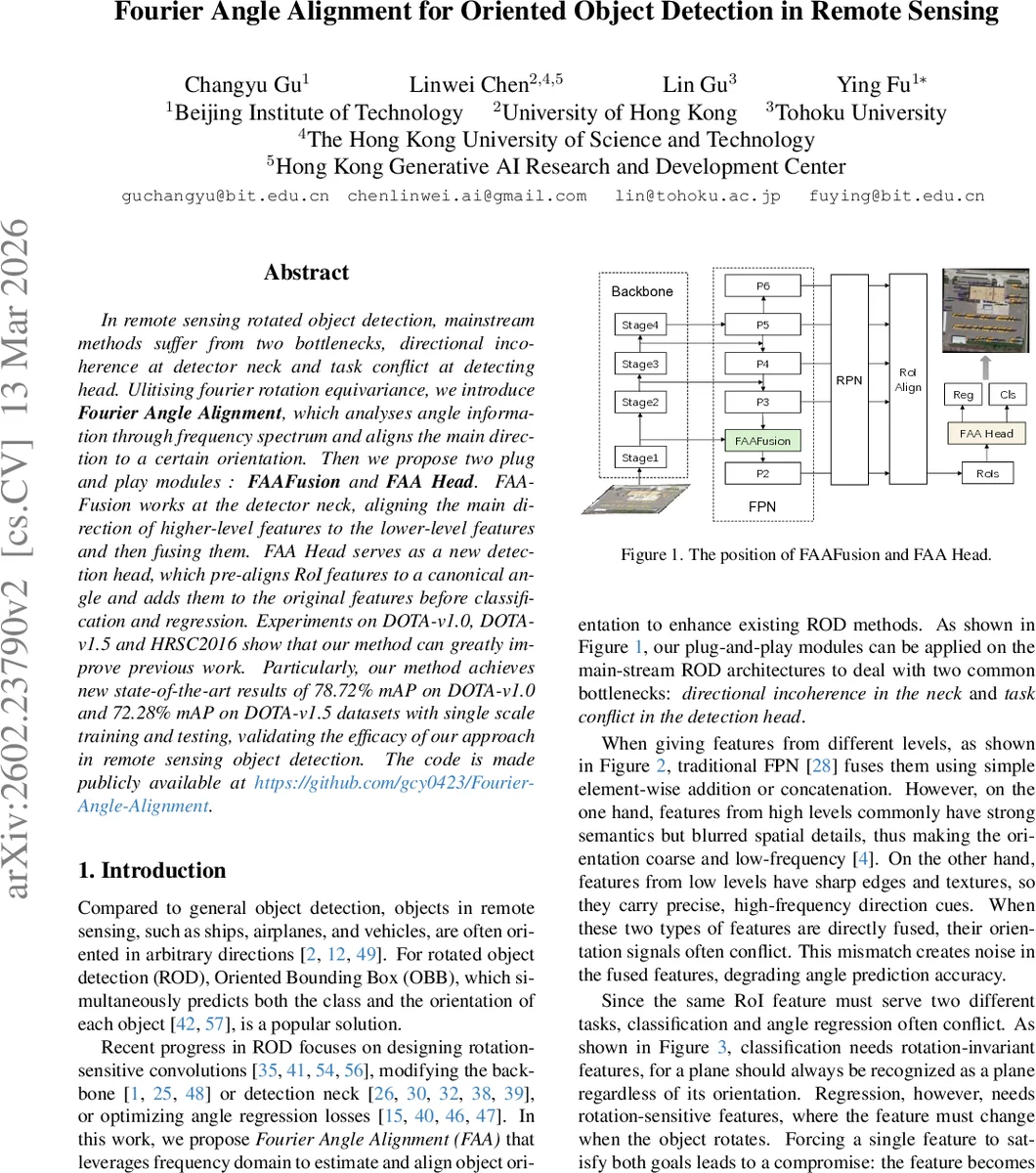

In remote sensing rotated object detection, mainstream methods suffer from two bottlenecks: directional incoherence at the detector neck and task conflict at the detection head. Utilising Fourier rotation equivariance, we introduce Fourier Angle Alignment, which analyses angle information through the frequency spectrum and aligns the main direction to a chosen orientation. We then propose two plug-and-play modules: FAAFusion and FAA Head. FAAFusion works at the detector neck, aligning the main direction of higher-level features to that of the lower-level features and then fusing them. FAA Head serves as a new detection head, which pre-aligns RoI features to a canonical angle and adds them to the original features before classification and regression. Experiments on DOTA-v1.0, DOTA-v1.5 and HRSC2016 show that our method greatly improves on previous work. In particular, our method achieves new state-of-the-art results of 78.72% mAP on DOTA-v1.0 and 72.28% mAP on DOTA-v1.5 with single-scale training and testing, validating the efficacy of our approach in remote sensing object detection. The code is publicly available at https://github.com/gcy0423/Fourier-Angle-Alignment .

💡 Research Summary

The paper “Fourier Angle Alignment for Oriented Object Detection in Remote Sensing” tackles two persistent challenges in rotated object detection: (1) directional incoherence when fusing multi‑scale features in the detector neck, and (2) the inherent conflict between classification (which prefers rotation‑invariant features) and angle regression (which requires rotation‑sensitive features) in the detection head. The authors propose a unified framework called Fourier Angle Alignment (FAA) that leverages the rotation equivariance of the Fourier transform to estimate a dominant orientation directly from the frequency domain and then explicitly align feature maps to a chosen reference angle.

Fourier Angle Estimation

Given a square feature map, its 2‑D discrete Fourier transform is computed with the FFT, and the spectrum is then shifted so that the zero‑frequency component sits at the center. The magnitude spectrum is converted from Cartesian to polar coordinates (ρ, θ). For each angle θ, the magnitude is summed over ρ to obtain a one‑dimensional angular energy distribution E(θ). The angle that maximizes this distribution, θ̂, is taken as the dominant orientation of the underlying object. The authors provide a mathematical justification using the sinc‑based spectrum of a rectangular function: the spectrum is narrow along the rectangle’s long side and wide along its short side, so spectral energy extends farthest along the axis perpendicular to the long side. The spectral peak direction therefore fixes the object’s orientation up to a constant 90° offset.
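The estimation steps can be sketched in a few lines of NumPy. This is a minimal illustration and not the authors' implementation: the function name `dominant_spectral_angle`, the 1° angular resolution, the nearest‑neighbour polar sampling, and the choice to skip the zero‑frequency bin are all assumptions made for the example. The returned angle is the direction of peak spectral energy, measured from the column axis toward the row axis.

```python
import numpy as np


def dominant_spectral_angle(feat, n_angles=180):
    """Return (angle_deg, energy) for a square 2-D map.

    angle_deg lies in [0, 180) and is measured from the +column axis toward
    the +row axis of the centred magnitude spectrum. A half circle is enough
    because the magnitude spectrum of a real input is point-symmetric.
    """
    h, w = feat.shape
    # Magnitude spectrum with the zero-frequency bin moved to the centre.
    spec = np.fft.fftshift(np.abs(np.fft.fft2(feat - feat.mean())))
    cy, cx = h // 2, w // 2
    radii = np.arange(1, min(cy, cx))  # skip the zero-frequency bin
    thetas = np.deg2rad(np.arange(n_angles) * (180.0 / n_angles))
    # Nearest-neighbour polar sampling (an assumption; interpolation also works).
    rows = np.rint(cy + np.outer(np.sin(thetas), radii)).astype(int)
    cols = np.rint(cx + np.outer(np.cos(thetas), radii)).astype(int)
    energy = spec[rows, cols].sum(axis=1)  # angular energy: sum over rho
    return float(np.rad2deg(thetas[np.argmax(energy)])), energy


# Toy input: a bar whose long side runs along the columns.
bar = np.zeros((64, 64))
bar[30:34, 12:52] = 1.0
angle, _ = dominant_spectral_angle(bar)
angle_t, _ = dominant_spectral_angle(bar.T)
```

For the horizontal bar the peak lies along the row‑frequency axis (about 90° in this convention), perpendicular to the bar's long side; transposing the bar moves the peak to about 0°.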

Angle Alignment

With the estimated θ̂, the original spatial feature map is rotated by Δθ = θ₀ − θ̂, where θ₀ is a predefined reference (e.g., 0°). This produces an “aligned” feature map whose dominant direction now matches the reference. The alignment operation is implemented as a standard 2‑D rotation around the feature map center, which can be efficiently realized with bilinear sampling on GPUs.
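A rotation about the map center with bilinear sampling can be sketched as follows. This is an illustrative NumPy version under stated assumptions, not the paper's code: `rotate_bilinear` is a hypothetical name, samples that fall outside the map are treated as zero, and a positive angle turns content from the column axis toward the row axis. The value `theta_hat = 90.0` is a made‑up estimate used only to show how Δθ = θ₀ − θ̂ is applied.

```python
import numpy as np


def rotate_bilinear(feat, angle_deg):
    """Rotate a 2-D map about its centre by angle_deg using bilinear sampling.

    Positive angles move content from the +column axis toward the +row axis.
    Samples outside the input are treated as zero (an assumption).
    """
    h, w = feat.shape
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    a = np.deg2rad(angle_deg)
    ys, xs = np.mgrid[0:h, 0:w].astype(float)
    dx, dy = xs - cx, ys - cy
    # Inverse mapping: each output pixel reads from the input at R(-angle) * p.
    sx = cx + dx * np.cos(a) + dy * np.sin(a)
    sy = cy - dx * np.sin(a) + dy * np.cos(a)
    # One-pixel zero border so edge and out-of-range samples blend into zeros.
    padded = np.pad(feat, 1)
    sx = np.clip(sx + 1.0, 0.0, w + 1.0)
    sy = np.clip(sy + 1.0, 0.0, h + 1.0)
    x0 = np.minimum(np.floor(sx).astype(int), w)
    y0 = np.minimum(np.floor(sy).astype(int), h)
    fx, fy = sx - x0, sy - y0
    top = padded[y0, x0] * (1 - fx) + padded[y0, x0 + 1] * fx
    bot = padded[y0 + 1, x0] * (1 - fx) + padded[y0 + 1, x0 + 1] * fx
    return top * (1 - fy) + bot * fy


bar = np.zeros((64, 64))
bar[30:34, 12:52] = 1.0
theta0, theta_hat = 0.0, 90.0  # hypothetical reference and estimated angles
aligned = rotate_bilinear(bar, theta0 - theta_hat)
```

Inverse mapping (looping over output pixels and sampling the input) avoids the holes that forward mapping would leave in the output grid.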

FAAFusion (Neck Module)

In typical Feature Pyramid Networks (FPN), high‑level semantic features (large receptive field, low spatial resolution) are simply added to low‑level detailed features (high spatial resolution, rich edges). The authors argue that high‑level features encode orientation in low‑frequency components, while low‑level features preserve high‑frequency directional cues. Direct addition mixes inconsistent orientation signals, degrading angle regression. FAAFusion first estimates θ̂ from the low‑level feature using the Fourier pipeline, then rotates the high‑level feature to align with this angle before fusion. The aligned high‑level and original low‑level features are then combined (addition or concatenation). This process enforces directional consistency across scales, improving the quality of fused representations for rotated bounding‑box regression.
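The fusion order described above can be sketched as below. The sketch makes several assumptions that the summary does not confirm: single‑channel maps, 2× nearest‑neighbour upsampling, a rotation amount equal to the difference between the two estimated angles, and fusion by addition. `faa_fusion_sketch`, `estimate_angle`, and `rotate` are hypothetical names; the last two are callables supplied by the caller (any angle estimator and any rotation routine with these signatures).

```python
import numpy as np


def faa_fusion_sketch(low, high, estimate_angle, rotate):
    """Align a coarse map to a fine map's dominant angle, then add them.

    low:  (H, W) fine-resolution map.
    high: (H/2, W/2) coarse-resolution map.
    estimate_angle(map) -> angle in degrees (caller-supplied placeholder).
    rotate(map, degrees) -> rotated map   (caller-supplied placeholder).
    Returns (fused_map, applied_rotation_in_degrees).
    """
    # Nearest-neighbour 2x upsampling so both maps share one resolution.
    up = np.repeat(np.repeat(high, 2, axis=0), 2, axis=1)
    # Rotation needed to bring the coarse map's angle onto the fine map's.
    delta = estimate_angle(low) - estimate_angle(up)
    # Orientations repeat every 180 degrees, so wrap into [-90, 90).
    delta = (delta + 90.0) % 180.0 - 90.0
    return low + rotate(up, delta), delta
```

Passing the estimator and the rotation in as arguments keeps the fusion logic independent of how the angle is measured, which makes it easy to swap in a different sampler or estimator.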

FAA‑Head (Detection Head Module)

A Region of Interest (RoI) feature extracted by RoI pooling also suffers from the classification‑regression conflict: classification benefits from rotation‑invariant descriptors, while regression needs rotation‑sensitive cues. FAA‑Head applies the same Fourier‑based orientation estimator to each RoI, rotates the RoI feature to a canonical orientation (θ₀ = 0°), and then adds the aligned feature back to the original RoI feature. The resulting composite feature contains a rotation‑invariant component (useful for the classifier) and a rotation‑sensitive component (useful for the regressor), effectively decoupling the two tasks without adding extra parameters.
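A parameter‑free version of this head‑side step might look like the sketch below. It assumes that a single angle is estimated per RoI from the channel‑mean map and that every channel is rotated by the same amount; the summary does not specify either detail. `faa_head_sketch`, `estimate_angle`, and `rotate` are hypothetical names, and the two callables are supplied by the caller.

```python
import numpy as np


def faa_head_sketch(roi, estimate_angle, rotate, theta0=0.0):
    """Add a canonically oriented copy of an RoI feature to the original.

    roi: (C, H, W) RoI feature.
    estimate_angle(map) -> angle in degrees (caller-supplied placeholder).
    rotate(map, degrees) -> rotated map   (caller-supplied placeholder).
    Returns (roi + aligned_roi, estimated_angle).
    """
    # One angle per RoI, taken from the channel-mean map (an assumption).
    theta_hat = estimate_angle(roi.mean(axis=0))
    delta = theta0 - theta_hat
    # Rotate every channel by the same amount so channels stay registered.
    aligned = np.stack([rotate(channel, delta) for channel in roi])
    # Residual-style sum: the original keeps the angle cue, the aligned copy
    # is orientation-normalised. No learnable parameters are involved.
    return roi + aligned, theta_hat
```

The output has the same shape as the input, so a classifier and regressor that consumed the original RoI feature can consume the sum unchanged.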

Experimental Validation

The authors integrate FAAFusion and FAA‑Head into the Oriented R‑CNN framework with three backbones: ResNet‑50, LSKNet‑S, and StripNet‑S. They evaluate on three benchmark datasets: DOTA‑v1.0, DOTA‑v1.5, and HRSC2016. Results show consistent mAP improvements across all settings:

- DOTA‑v1.0: +0.68 % (ResNet‑50), +1.00 % (LSKNet‑S), +0.63 % (StripNet‑S)

- DOTA‑v1.5: +0.37 % (ResNet‑50), +2.02 % (LSKNet‑S), +1.73 % (StripNet‑S)

- HRSC2016: +2.17 % (ResNet‑50), +1.81 % (LSKNet‑S), +1.23 % (StripNet‑S)

With single‑scale training and testing, the method reaches 78.72 % mAP on DOTA‑v1.0 and 72.28 % mAP on DOTA‑v1.5, which the authors report as new state‑of‑the‑art results.

Strengths and Limitations

The primary strengths are: (1) a principled, mathematically grounded way to extract orientation from the frequency domain, eliminating the need for handcrafted rotation‑aware convolutions; (2) plug‑and‑play modules that require only minor code changes, making the approach widely applicable; (3) modest computational overhead, as FFT and rotation are highly optimized on modern GPUs. Potential limitations include increased memory consumption due to storing complex spectra, sensitivity of the polar‑sampling step to resolution (which may affect robustness for very small or low‑contrast objects), and the reliance on a single dominant orientation estimate, which could be ambiguous for heavily occluded or multi‑part objects.

Future Directions

The authors suggest extending the framework to multi‑scale spectral aggregation, learning adaptive frequency filters, and integrating the method with transformer‑based backbones that already model global context. Additionally, exploring non‑linear rotation compensation and more sophisticated angle‑aware attention mechanisms could further improve performance under challenging imaging conditions (e.g., severe illumination changes, dense clutter).

In summary, Fourier Angle Alignment introduces a novel frequency‑domain perspective to rotated object detection, effectively addressing both neck‑level directional incoherence and head‑level task conflict, and demonstrates substantial empirical gains on leading remote‑sensing benchmarks.
