When Pretty Isn't Useful: Investigating Why Modern Text-to-Image Models Fail as Reliable Training Data Generators

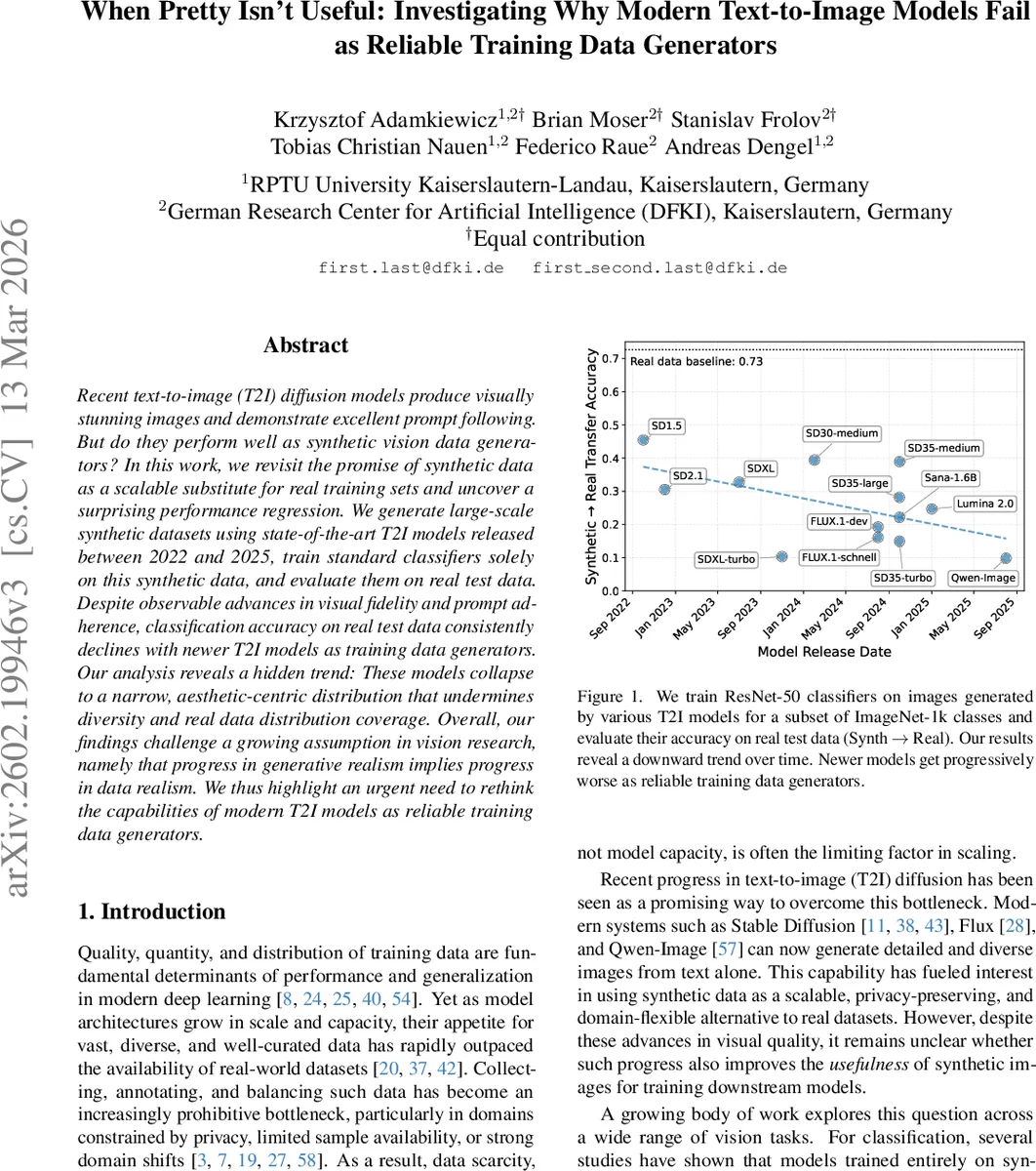

Recent text-to-image (T2I) diffusion models produce visually stunning images and demonstrate excellent prompt following. But do they perform well as synthetic vision data generators? In this work, we revisit the promise of synthetic data as a scalable substitute for real training sets and uncover a surprising performance regression. We generate large-scale synthetic datasets using state-of-the-art T2I models released between 2022 and 2025, train standard classifiers solely on this synthetic data, and evaluate them on real test data. Despite observable advances in visual fidelity and prompt adherence, classification accuracy on real test data consistently declines with newer T2I models as training data generators. Our analysis reveals a hidden trend: These models collapse to a narrow, aesthetic-centric distribution that undermines diversity and real data distribution coverage. Overall, our findings challenge a growing assumption in vision research, namely that progress in generative realism implies progress in data realism. We thus highlight an urgent need to rethink the capabilities of modern T2I models as reliable training data generators.

💡 Research Summary

This paper conducts a comprehensive evaluation of modern text‑to‑image (T2I) diffusion models as generators of synthetic training data for vision tasks. The authors select thirteen open‑source T2I models released between 2022 and 2025, spanning early Stable Diffusion versions, SDXL, SD‑3.5 (medium, large, turbo), Flux‑Dev, Flux‑Schnell, Qwen‑Image, and Lumina 2.0. For each model they generate a large‑scale synthetic dataset covering a subset of ImageNet‑1K classes (approximately 100 k images per model) and train a standard ResNet‑50 classifier solely on these synthetic images. The classifiers are then evaluated on the real ImageNet‑validation set, a protocol referred to as “Synth → Real” transfer.

Despite clear progress in visual fidelity, resolution, and prompt adherence, the authors observe a monotonic decline in real‑world classification accuracy as the T2I models become newer. The baseline real‑data model achieves 73 % top‑1 accuracy, while the most recent models drop to around 42 % under the same training regime. This paradox motivates a detailed analysis of three hypothesized failure modes: (i) texture and structure distortion, (ii) high‑frequency spectral mismatch, and (iii) distributional drift coupled with diversity collapse.

To isolate texture vs. structure effects, the authors train two auxiliary classifiers. A depth‑based ResNet‑50 operates on monocular depth maps (produced by the Depth Anything V2 model) and therefore relies only on coarse shape information, while a BagNet‑9 classifier processes 9 × 9 pixel patches and is highly sensitive to texture. Results show that newer T2I models preserve global structure (depth‑based accuracy remains relatively stable) but suffer severe texture degradation (BagNet accuracy collapses sharply), indicating that fine‑grained visual details are not faithfully reproduced.

For high‑frequency analysis, synthetic and real images are filtered with low‑pass and high‑pass kernels. Training on low‑pass filtered data reduces the Synth → Real gap, whereas high‑pass filtered training amplifies it. This demonstrates that the high‑frequency components of modern synthetic images deviate from the natural power‑law spectrum, and that CNNs, which are known to rely on high‑frequency cues, are penalized by this mismatch.

Distributional drift is quantified using density and coverage metrics introduced by Naeem et al. (2023). Feature embeddings are extracted with CLIP‑ViT‑L, and the authors compute how many generated samples fall within local neighborhoods of real images (density) and what fraction of real images have at least one generated neighbor (coverage). Newer models exhibit high density but low coverage, a classic sign of mode collapse: the synthetic manifold becomes overly concentrated, losing the breadth of the real data distribution. Complementary Real → Synth transfer experiments reveal a pronounced asymmetry: models trained on real data classify synthetic test images well, while models trained on synthetic data struggle on real test images, confirming that synthetic datasets form overly separable clusters that do not capture the complex decision boundaries of real data.

The authors also explore prompt engineering. Adding detailed captions (Caption‑in‑Prompt) improves Synth → Real performance modestly, but the benefit comes at the cost of an even tighter synthetic manifold, further reducing diversity. Thus, richer prompts cannot fully compensate for the fundamental distributional shortcomings of the generators.

In summary, the paper provides strong empirical evidence that advances in perceptual realism of T2I models do not translate into better utility as training data generators. The key limiting factors are loss of texture and high‑frequency detail, and a systematic drift toward an aesthetic‑centric, low‑diversity distribution. The work calls for a shift in evaluation criteria for generative models: beyond sample realism, future research must explicitly measure and optimize distributional realism and diversity if synthetic data are to replace real datasets in large‑scale vision training.

Comments & Academic Discussion

Loading comments...

Leave a Comment