A Photorealistic Dataset and Vision-Based Algorithm for Anomaly Detection During Proximity Operations in Lunar Orbit

NASA’s forthcoming Lunar Gateway space station, which will be uncrewed most of the time, will need to operate with an unprecedented level of autonomy. One key challenge is enabling the Canadarm3, the Gateway’s external robotic system, to detect hazards in its environment using its onboard inspection cameras. This task is complicated by the extreme and variable lighting conditions in space. In this paper, we introduce the visual anomaly detection and localization task for the space domain and establish a benchmark based on a synthetic dataset called ALLO (Anomaly Localization in Lunar Orbit). We show that state-of-the-art visual anomaly detection methods often fail in the space domain, motivating the need for new approaches. To address this, we propose MRAD (Model Reference Anomaly Detection), a statistical algorithm that leverages the known pose of the Canadarm3 and a CAD model of the Gateway to generate reference images of the expected scene appearance. Anomalies are then identified as deviations from this model-generated reference. On the ALLO dataset, MRAD surpasses state-of-the-art anomaly detection algorithms, achieving an AP score of 62.9% at the pixel level and an AUROC score of 75.0% at the image level. Given the low tolerance for risk in space operations and the lack of domain-specific data, we emphasize the need for novel, robust, and accurate anomaly detection methods to handle the challenging visual conditions found in lunar orbit and beyond.

💡 Research Summary

The paper addresses a critical need for autonomous visual hazard detection on NASA’s upcoming Lunar Gateway, specifically for the external robotic arm Canadarm3. Because the station will operate largely uncrewed, the arm must be able to identify and localize foreign objects (e.g., loose tools, debris) using its inspection cameras under the extreme lighting conditions of lunar orbit. The authors first formalize the problem as “visual anomaly detection and localization” in the space domain and then create a benchmark dataset, ALLO (Anomaly Localization in Lunar Orbit), to evaluate existing methods and their own solution.

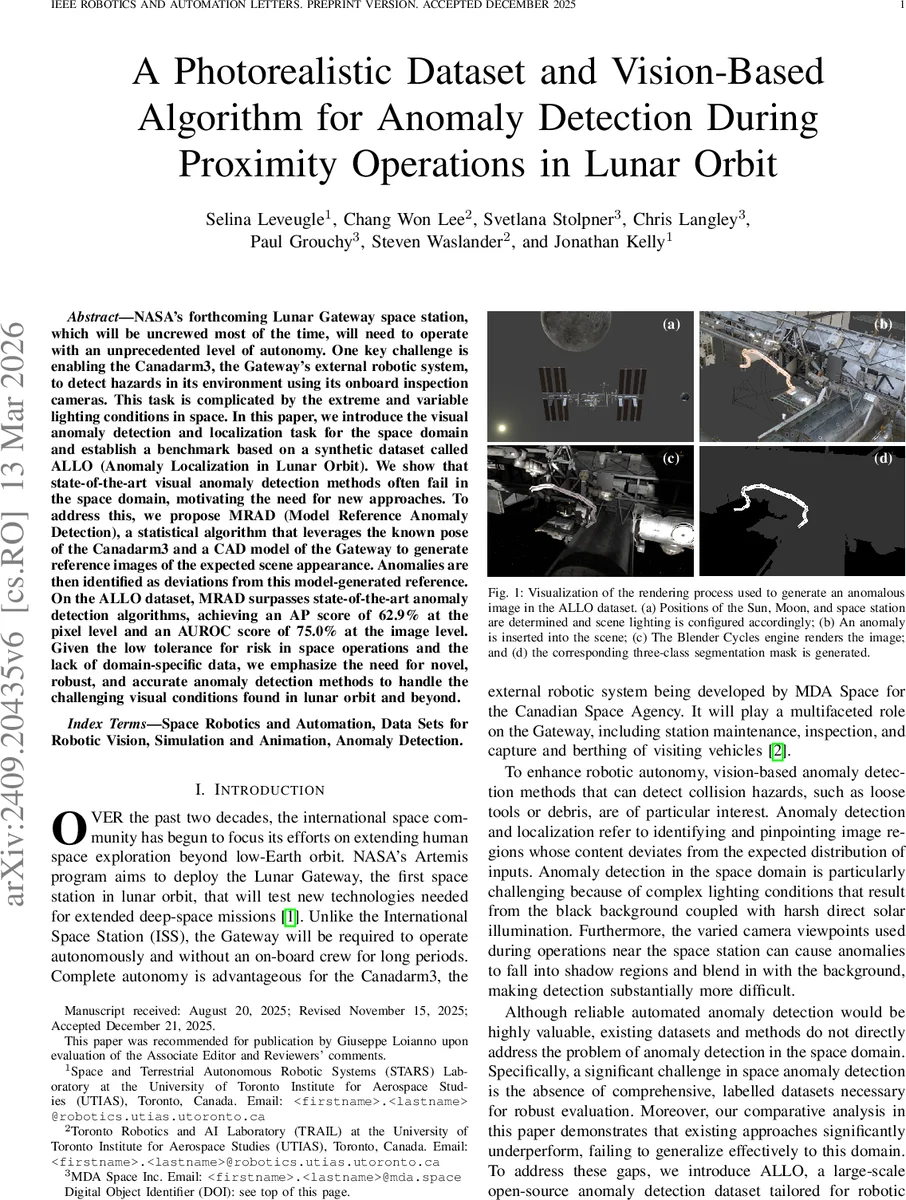

ALLO is a large‑scale synthetic dataset comprising 51,409 high‑resolution (1920×1080) images rendered with Blender’s Cycles path‑tracing engine. The authors model the ISS as a stand‑in for the Gateway, position Earth, Moon, and Sun using 2030 ephemeris data, and generate realistic lunar‑orbit illumination (deep‑space black background, direct solar glare, and spotlights). Fifty base camera poses are defined around the station; each pose is perturbed with Gaussian noise (1 m position, 0.2 rad orientation) to emulate arm pose uncertainty. Sixteen distinct anomalous objects (thermal blankets, cables, drills, etc.) are inserted one per scene at five depth variations, with each object occupying at least 0.1% of the image. Every image is paired with a three‑class segmentation mask (background, normal foreground, anomaly). Validation against real ISS photographs indicates strong photorealism: LPIPS averages 0.58 and FID is 3.61, comparable to or better than state‑of‑the‑art image synthesis models.
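The pose perturbation described above can be illustrated with a minimal sketch. It assumes the quoted values (1 m, 0.2 rad) are per-axis standard deviations of zero-mean Gaussian noise and that orientation is stored as roll-pitch-yaw angles; the summary confirms neither, and the function name `perturb_pose` is hypothetical.

```python
import random


def perturb_pose(position, rpy, pos_sigma=1.0, rot_sigma=0.2, rng=random):
    """Add zero-mean Gaussian noise to a camera pose.

    position: (x, y, z) in metres; rpy: (roll, pitch, yaw) in radians.
    Assumption: the sigmas apply independently to each axis.
    """
    noisy_position = tuple(p + rng.gauss(0.0, pos_sigma) for p in position)
    noisy_rpy = tuple(a + rng.gauss(0.0, rot_sigma) for a in rpy)
    return noisy_position, noisy_rpy


# Usage: draw one perturbed pose around a hypothetical base pose.
base_position, base_rpy = (10.0, 0.0, 5.0), (0.0, 0.0, 1.57)
print(perturb_pose(base_position, base_rpy, rng=random.Random(42)))
```

Passing a seeded `random.Random` instance keeps the sampled poses reproducible across runs.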

To tackle the detection problem, the authors propose Model Reference Anomaly Detection (MRAD). MRAD exploits the known camera pose and a CAD model of the station to render a “reference” image that represents the expected appearance of the scene. The algorithm proceeds in three steps: (1) Compute pixel‑wise anomaly scores by applying an extended Reed‑Xiaoli Detector (RXD) to the query–reference pair. The extension adapts RXD to multi‑channel space imagery and uses the reference image to provide an accurate background model, overcoming the classic RXD assumption of a homogeneous Gaussian background. (2) Perform region‑growing on high‑score pixels to form candidate anomaly blobs, applying a minimum area threshold to suppress noise. (3) Classify the whole image as anomalous if the total detected area exceeds a preset proportion. In the experiments, the reference image is deliberately perturbed in lighting and pose to simulate the real‑to‑synthetic domain gap, which tests MRAD’s robustness to modest modeling errors.
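The three steps can be sketched as follows. This is an illustrative reading, not the authors' implementation: it assumes the RX score is the Mahalanobis distance of the per-pixel query-minus-reference residual under a single global covariance, uses 4-connected flood fill as the region-growing step, and all function names and threshold values are hypothetical.

```python
from collections import deque

import numpy as np


def rx_scores(query, reference, eps=1e-6):
    """Step 1: Mahalanobis score of each pixel's residual, shape (H, W)."""
    h, w, c = query.shape
    resid = (query - reference).reshape(-1, c)
    centered = resid - resid.mean(axis=0)
    cov = centered.T @ centered / max(len(resid) - 1, 1)
    cov += eps * np.eye(c)  # regularize so the inverse exists
    inv = np.linalg.inv(cov)
    return np.einsum("ij,jk,ik->i", centered, inv, centered).reshape(h, w)


def grow_regions(mask, min_area):
    """Step 2: group 4-connected pixels, drop blobs below min_area."""
    h, w = mask.shape
    kept = np.zeros((h, w), dtype=bool)
    seen = np.zeros((h, w), dtype=bool)
    for sy, sx in zip(*np.nonzero(mask)):
        if seen[sy, sx]:
            continue
        seen[sy, sx] = True
        blob, queue = [], deque([(sy, sx)])
        while queue:
            y, x = queue.popleft()
            blob.append((y, x))
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                if 0 <= ny < h and 0 <= nx < w and mask[ny, nx] and not seen[ny, nx]:
                    seen[ny, nx] = True
                    queue.append((ny, nx))
        if len(blob) >= min_area:
            ys, xs = zip(*blob)
            kept[list(ys), list(xs)] = True
    return kept


def detect(query, reference, score_thresh=25.0, min_area=4, area_frac=0.001):
    """Step 3: flag the image if the kept blobs cover more than area_frac."""
    blobs = grow_regions(rx_scores(query, reference) > score_thresh, min_area)
    return blobs, bool(blobs.mean() > area_frac)


# Usage on a toy scene: a 5x5 patch that is absent from the reference.
rng = np.random.default_rng(0)
reference = rng.random((32, 32, 3))
query = reference + rng.normal(0.0, 0.01, reference.shape)
query[10:15, 20:25, :] += 0.5
mask, is_anomalous = detect(query, reference)
print(mask.sum(), is_anomalous)
```

Because the covariance is estimated from the residual rather than from the query alone, structure that the reference already explains contributes little to the score; only unexplained deviations stand out.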

Experimental evaluation on the ALLO benchmark compares MRAD against a suite of recent unsupervised and self‑supervised deep‑learning anomaly detectors (PatchCore, PaDiM, Student‑Teacher, etc.) as well as classical statistical methods (OC‑SVM, original RXD variants). MRAD achieves a pixel‑level Average Precision of 62.9 % and an image‑level AUROC of 75.0 %, substantially outperforming all baselines. Ablation studies reveal that (i) adding realistic lighting/pose noise to the reference image degrades performance by less than 3 %, confirming MRAD’s tolerance to modeling inaccuracies, and (ii) replacing the RXD score with a simple L2 difference drops AP by about 12 %, highlighting the importance of the statistical formulation.
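For readers unfamiliar with the two reported metrics, the sketch below shows how Average Precision and AUROC are commonly computed from binary labels and anomaly scores. It is a simplified illustration, not the paper's evaluation code: the AP routine ignores tied scores, the AUROC routine uses the pairwise (Mann-Whitney) definition, and both function names are hypothetical.

```python
def average_precision(labels, scores):
    """Mean of the precision values at the rank of each positive sample."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    hits, total = 0, 0.0
    for rank, i in enumerate(order, start=1):
        if labels[i]:
            hits += 1
            total += hits / rank  # precision at this recall step
    return total / hits if hits else 0.0


def auroc(labels, scores):
    """Probability that a random positive outscores a random negative."""
    pos = [s for l, s in zip(labels, scores) if l]
    neg = [s for l, s in zip(labels, scores) if not l]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Usage: pixel-level AP takes one label and score per pixel,
# image-level AUROC takes one label and score per image.
labels = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.1]
print(average_precision(labels, scores), auroc(labels, scores))
```

AP is the usual choice at the pixel level because anomalous pixels are a small minority of each image, and AUROC can look optimistic under that kind of class imbalance.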

The authors acknowledge limitations: the reference images are fully synthetic, and the study does not incorporate real sensor noise, compression artifacts, or on‑orbit radiometric variations. Future work is outlined to include domain‑adaptation with actual lunar‑orbit imagery, real‑time GPU implementation, multi‑camera fusion for 3D anomaly localization, and integration with the arm’s motion‑planning pipeline.

In summary, the paper makes three major contributions: (1) the ALLO dataset, a publicly released, photorealistic benchmark for space‑domain visual anomaly detection; (2) a thorough benchmark that demonstrates the inadequacy of existing industrial‑focused methods for lunar‑orbit conditions; and (3) MRAD, a model‑reference statistical detector that leverages known geometry and lighting to achieve state‑of‑the‑art performance. This work provides a concrete foundation for safe, autonomous proximity operations in lunar orbit and sets a precedent for vision‑based safety systems in future deep‑space missions.
