Beyond Motion Imitation: Is Human Motion Data Alone Sufficient to Explain Gait Control and Biomechanics?

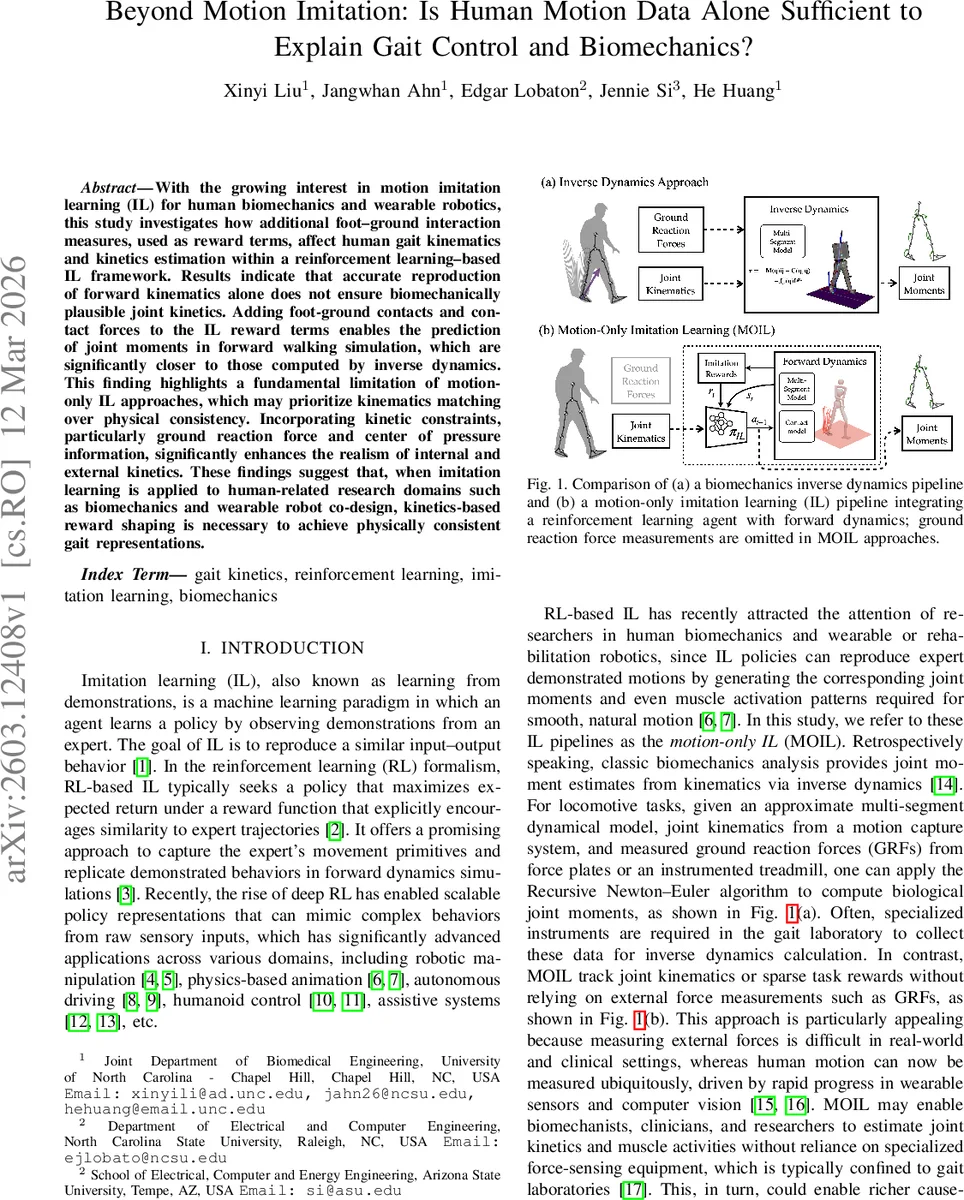

With the growing interest in motion imitation learning (IL) for human biomechanics and wearable robotics, this study investigates how additional foot-ground interaction measures, used as reward terms, affect human gait kinematics and kinetics estimation within a reinforcement learning-based IL framework. Results indicate that accurate reproduction of forward kinematics alone does not ensure biomechanically plausible joint kinetics. Adding foot-ground contacts and contact forces to the IL reward terms enables the prediction of joint moments in forward walking simulation, which are significantly closer to those computed by inverse dynamics. This finding highlights a fundamental limitation of motion-only IL approaches, which may prioritize kinematics matching over physical consistency. Incorporating kinetic constraints, particularly ground reaction force and center of pressure information, significantly enhances the realism of internal and external kinetics. These findings suggest that, when imitation learning is applied to human-related research domains such as biomechanics and wearable robot co-design, kinetics-based reward shaping is necessary to achieve physically consistent gait representations.

💡 Research Summary

This paper investigates whether human motion data alone are sufficient to generate biomechanically plausible gait control and kinetics when used within a reinforcement‑learning (RL) based imitation learning (IL) framework. The authors compare a conventional “motion‑only imitation learning” (MOIL) pipeline, which optimizes only kinematic objectives (joint angles, end‑effector positions, root orientation and velocities), with an extended “kinetics‑aware imitation learning” (KAIL) pipeline that adds explicit rewards for matching ground‑reaction forces (GRFs) and center‑of‑pressure (CoP) trajectories measured from a treadmill.

A floating‑base 17‑degree‑of‑freedom human model is built in MuJoCo, with each foot represented by a box containing four contact points. The dynamics follow the standard equation M(q)¨q + C(q, ˙q) = τ + Jc(q)ᵀf_grf, and a residual wrench ξ is applied to the root to improve stability. Policies are trained with Proximal Policy Optimization (PPO). The action vector consists of target joint equilibrium angles ψ_eq and the residual wrench ξ; joint torques are generated by an impedance controller τ = –Kp(ψ – ψ_eq) – Kd·ψ̇.

The reward function is split into a kinematic part R_k (weighted sum of pose, end‑effector, root pose, root velocity, and residual‑wrench regularization terms) and a dynamics part R_dyn = w_grf·R_grf + w_cop·R_cop. R_grf penalizes the squared error between simulated and measured sagittal‑plane GRF components (anterior‑posterior and vertical for each foot). R_cop penalizes the squared error of the anterior‑posterior CoP positions.

Data were collected from a single healthy male (29 y, 176 cm, 70 kg) walking at 1.2 m/s on an instrumented treadmill. Motion capture (120 Hz) and force‑plate data (1000 Hz) were filtered and time‑normalized. Inverse dynamics were computed in Visual3D to obtain reference joint moments. The same anthropometric parameters were used in both the MuJoCo forward model and the Visual3D inverse‑dynamics model to ensure comparability.

An ablation study was performed: after 700 training episodes with only R_k (pre‑training), policies were fine‑tuned for an additional 200 episodes under four conditions: (i) R_k only (baseline MOIL), (ii) R_k + R_grf, (iii) R_k + R_cop, and (iv) R_k + R_grf + R_cop (ALL). All policies completed full gait cycles without falling. Kinematic reward remained high (≈0.94) across conditions, indicating that motion imitation is achievable regardless of kinetic supervision. However, kinetic rewards improved markedly when their respective terms were activated (e.g., R_grf rose from 0.55 to 0.71 in the R_k+R_grf condition).

Kinematic fidelity was assessed by root‑mean‑square error (RMSE) of joint angles against experimental data; the ALL condition achieved the lowest errors (maximum 6.56° at the knee). External kinetics were evaluated by comparing simulated GRF waveforms to measured ones using complex Pearson correlation coefficients (CPCC). The ALL condition showed the highest CPCC values, statistically significant improvements over the baseline (p < 0.01). CoP trajectories also aligned closely with experimental data only when the CoP reward was present. Consequently, joint moments derived from the forward simulations in the ALL condition matched inverse‑dynamics moments far better than the MOIL baseline.

The key insight is that pure motion imitation can produce accurate joint trajectories while exploiting unrealistic foot‑ground interaction forces, leading to implausible joint torques. Incorporating GRF and CoP information forces the policy to respect physical contact constraints, yielding both kinematic and kinetic realism. This suggests that for applications in biomechanics, clinical gait analysis, and wearable‑robot co‑design, kinetic‑aware reward shaping is essential.

Limitations include the use of a single subject, a single walking speed, and only sagittal‑plane GRF components. Muscle activation patterns and electromyographic data were not modeled. Future work should extend the framework to diverse populations, multiple gait tasks, and multimodal sensory inputs (e.g., EMG) to further improve the physiological fidelity and to enable real‑time deployment in wearable robotic controllers.

Comments & Academic Discussion

Loading comments...

Leave a Comment