Chem4DLLM: 4D Multimodal LLMs for Chemical Dynamics Understanding

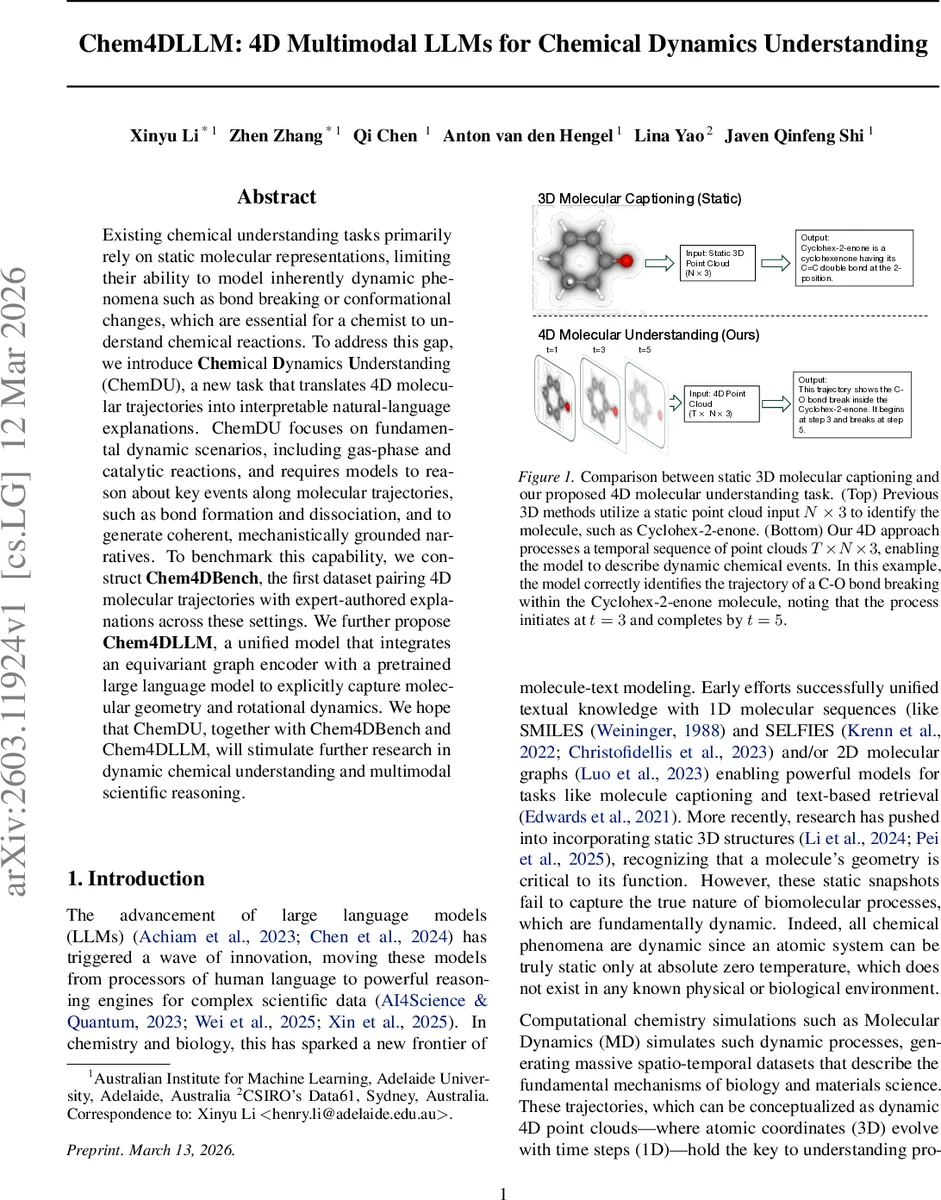

Existing chemical understanding tasks primarily rely on static molecular representations, limiting their ability to model inherently dynamic phenomena such as bond breaking or conformational changes, which are essential for a chemist to understand chemical reactions. To address this gap, we introduce Chemical Dynamics Understanding (ChemDU), a new task that translates 4D molecular trajectories into interpretable natural-language explanations. ChemDU focuses on fundamental dynamic scenarios, including gas-phase and catalytic reactions, and requires models to reason about key events along molecular trajectories, such as bond formation and dissociation, and to generate coherent, mechanistically grounded narratives. To benchmark this capability, we construct Chem4DBench, the first dataset pairing 4D molecular trajectories with expert-authored explanations across these settings. We further propose Chem4DLLM, a unified model that integrates an equivariant graph encoder with a pretrained large language model to explicitly capture molecular geometry and rotational dynamics. We hope that ChemDU, together with Chem4DBench and Chem4DLLM, will stimulate further research in dynamic chemical understanding and multimodal scientific reasoning.

💡 Research Summary

The paper addresses a critical gap in AI‑driven chemistry: existing large language models (LLMs) and multimodal frameworks are built around static molecular representations (SMILES strings, 2‑D graphs, or single‑frame 3‑D structures) and therefore cannot capture the inherently dynamic nature of chemical processes such as bond breaking, conformational changes, or catalytic surface events. To fill this void, the authors introduce a new task called Chemical Dynamics Understanding (ChemDU). ChemDU requires a model to ingest a full 4‑dimensional molecular trajectory—i.e., a sequence of atomic coordinate frames (T × N × 3)—and produce a concise, scientifically accurate natural‑language description that identifies key events (bond formation/breakage, transition‑state appearance, adsorption/desorption, rotational motions) together with their temporal ordering and causal relationships.
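To make the ChemDU input concrete, here is a sketch that treats a trajectory as a NumPy array of shape (T, N, 3). It lists bond formation and breakage events in temporal order. This is a minimal illustration under stated assumptions, not the authors' pipeline. The helpers `connectivity` and `bond_events` are hypothetical. A single distance cutoff (1.6 Å, also an assumption) stands in for the element-specific covalent radii a real analysis would use.

```python
import numpy as np


def connectivity(frame: np.ndarray, cutoff: float) -> np.ndarray:
    """Boolean (N, N) matrix: atoms i and j count as bonded if closer than `cutoff` (Å)."""
    dist = np.linalg.norm(frame[:, None, :] - frame[None, :, :], axis=-1)
    bonded = dist < cutoff
    np.fill_diagonal(bonded, False)  # an atom is not bonded to itself
    return bonded


def bond_events(traj: np.ndarray, cutoff: float = 1.6) -> list[tuple[int, str, int, int]]:
    """Time-ordered (frame, 'form' | 'break', i, j) events for a (T, N, 3) trajectory.

    Hypothetical helper; the 1.6 Å default cutoff is an illustrative assumption.
    """
    if traj.ndim != 3 or traj.shape[2] != 3:
        raise ValueError("expected a (T, N, 3) coordinate array")
    events = []
    prev = connectivity(traj[0], cutoff)
    for t in range(1, traj.shape[0]):
        cur = connectivity(traj[t], cutoff)
        # Upper triangle only, so each pair (i < j) is reported once per change.
        for i, j in np.argwhere(np.triu(cur != prev, k=1)):
            events.append((t, "form" if cur[i, j] else "break", int(i), int(j)))
        prev = cur
    return events


# Toy atom transfer A-B + C -> A + B-C along the x axis (y = z = 0), 5 frames.
xs = [[0.0, 1.0, 4.0], [0.0, 1.0, 3.0], [-0.5, 1.0, 2.4], [-1.0, 1.0, 2.2], [-2.0, 1.0, 2.1]]
traj = np.zeros((5, 3, 3))
traj[:, :, 0] = xs
events = bond_events(traj)
print(events)  # [(2, 'form', 1, 2), (3, 'break', 0, 1)]
```

The output captures the ordering that ChemDU asks a model to narrate. The B-C bond forms at frame 2, before the A-B bond breaks at frame 3.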

To evaluate models on ChemDU, the authors construct Chem4DBench, the first benchmark that pairs high‑quality 4‑D trajectories from molecular dynamics or reaction‑path simulations with expert‑written explanations. Chem4DBench contains two complementary categories: (1) Gas‑phase Reaction Product Prediction, where models see reactant and transition‑state geometries and must predict product geometry and narrate the reaction pathway; (2) Catalytic Reaction, which includes periodic‑boundary‑condition (PBC) surface simulations (e.g., adsorption, desorption, surface diffusion) and asks models to describe the full catalytic event. Each sample provides the atom set A, the time‑indexed coordinates C_t, and a detailed textual annotation, thereby enabling quantitative assessment of both event‑level accuracy and linguistic quality.
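One way to hold a sample with the fields listed above (atom set A, coordinates C_t, annotation) is sketched below. It also includes the periodic cell that catalytic PBC samples need. The class name `TrajectorySample` and its fields are hypothetical and do not reflect Chem4DBench's actual file format. The distance method uses the standard minimum-image convention. That convention is exact for orthogonal cells and only an approximation for strongly skewed ones.

```python
from dataclasses import dataclass
from typing import Optional

import numpy as np


@dataclass
class TrajectorySample:
    """Hypothetical container for one benchmark sample: atom set A, coordinates C_t, text."""

    atoms: list[str]                    # atom set A as element symbols
    coords: np.ndarray                  # C_t stacked over time, shape (T, N, 3), in Å
    annotation: str                     # expert-written explanation
    cell: Optional[np.ndarray] = None   # (3, 3) lattice vectors as rows; None for gas phase

    def __post_init__(self) -> None:
        self.coords = np.asarray(self.coords, dtype=float)
        if self.coords.ndim != 3 or self.coords.shape[1:] != (len(self.atoms), 3):
            raise ValueError("coords must have shape (T, len(atoms), 3)")
        if self.cell is not None:
            self.cell = np.asarray(self.cell, dtype=float)
            if self.cell.shape != (3, 3):
                raise ValueError("cell must be a (3, 3) matrix")

    @property
    def num_frames(self) -> int:
        return self.coords.shape[0]

    def distance(self, t: int, i: int, j: int) -> float:
        """Distance between atoms i and j at frame t, using the minimum image under PBC."""
        d = self.coords[t, j] - self.coords[t, i]
        if self.cell is not None:
            frac = np.linalg.solve(self.cell.T, d)  # Cartesian -> fractional coordinates
            frac -= np.round(frac)                  # wrap each component into [-0.5, 0.5]
            d = self.cell.T @ frac                  # back to Cartesian
        return float(np.linalg.norm(d))


# Two atoms near opposite faces of a 10 Å cubic cell are actually about 1 Å apart.
surface = TrajectorySample(
    atoms=["Pt", "O"],
    coords=[[[0.5, 0.0, 0.0], [9.5, 0.0, 0.0]]],
    annotation="(placeholder text)",
    cell=np.eye(3) * 10.0,
)
gas = TrajectorySample(atoms=["Pt", "O"], coords=surface.coords, annotation="(placeholder text)")
print(surface.distance(0, 0, 1), gas.distance(0, 0, 1))  # approximately 1.0 and 9.0
```

Without the cell, the same coordinates look 9 Å apart. This is why surface events such as adsorption can be misread if periodicity is ignored.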

The core model, Chem4DLLM, is a unified architecture that couples an equivariant graph neural network (GNN) encoder with a pretrained LLM. The encoder (based on state‑of‑the‑art equivariant models such as NequIP or MACE) processes each frame. Its features are invariant to translations and equivariant to rotations, with the l ≥ 1 components transforming as vectors and higher‑order tensors. It produces a compact embedding for every atom at every time step. These embeddings are projected into the LLM's token‑embedding space and passed to the language model (e.g., an open‑weight model such as Llama‑2, whose input embedding layer can accept such projected features), which then generates the final narrative. Geometry processing stays outside the LLM. This avoids the prohibitive token explosion that would result from naïvely flattening thousands of coordinates into a text stream.
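The symmetry property described above can be checked numerically on a toy feature map. The sketch below is not NequIP or MACE. It builds one invariant (l = 0) and one vector (l = 1) feature per atom from relative positions with a cosine cutoff weight. It then verifies that a rotation plus translation of the input leaves the scalars unchanged and rotates the vectors. The helpers `radial`, `toy_features`, and `project_to_llm` are hypothetical. So are the projector and the LLM width of 4096. The paper's actual encoder-to-LLM interface may differ.

```python
import numpy as np


def radial(r: np.ndarray, cutoff: float = 5.0) -> np.ndarray:
    """Cosine cutoff weight phi(r): 1 at r = 0, decaying smoothly to 0 at `cutoff`."""
    return np.where(r < cutoff, 0.5 * (np.cos(np.pi * r / cutoff) + 1.0), 0.0)


def toy_features(pos: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Per-atom l = 0 (invariant) and l = 1 (vector) features from relative positions."""
    rel = pos[None, :, :] - pos[:, None, :]      # r_ij = x_j - x_i, shape (N, N, 3)
    w = radial(np.linalg.norm(rel, axis=-1))     # phi(|r_ij|), shape (N, N)
    np.fill_diagonal(w, 0.0)                     # drop self-interaction
    scalars = w.sum(axis=1)                      # sum_j phi(|r_ij|): rotation-invariant
    vectors = np.einsum("ij,ijk->ik", w, rel)    # sum_j phi(|r_ij|) r_ij: rotates with input
    return scalars, vectors


def project_to_llm(scalars, vectors, weight):
    """Map invariant per-atom features to hypothetical LLM-width 'atom tokens'."""
    feats = np.stack([scalars, np.linalg.norm(vectors, axis=-1)], axis=-1)  # (N, 2)
    return feats @ weight                                                   # (N, d_model)


rng = np.random.default_rng(0)
pos = rng.normal(size=(6, 3))

# Random proper rotation Q (det = +1) and translation t.
q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
if np.linalg.det(q) < 0:
    q[:, 0] = -q[:, 0]
shift = rng.normal(size=3)
pos_moved = pos @ q.T + shift                    # x_i -> Q x_i + t for every atom

s1, v1 = toy_features(pos)
s2, v2 = toy_features(pos_moved)
print(np.allclose(s1, s2), np.allclose(v2, v1 @ q.T))  # True True

weight = rng.normal(size=(2, 4096)) * 0.02       # hypothetical projector, d_model = 4096
tokens = project_to_llm(s1, v1, weight)
print(tokens.shape)  # (6, 4096)
```

The sketch feeds only invariant quantities to the projector. As a result, the resulting atom tokens do not change when the molecule is rotated or shifted.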

To cope with long trajectories, the authors introduce two efficiency mechanisms. First, a frame‑wise summarization step extracts “key atoms” and “key events” (e.g., bonds whose length crosses a threshold) to drastically reduce the number of tokens per frame. Second, a temporal attention module allows the LLM to focus on salient frames while still respecting the overall sequence, mitigating the limited context window of current LLMs. Prompt engineering is also employed so that users can ask specific questions (e.g., “What is the reaction barrier?” or “Describe the adsorption mechanism”).
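The two efficiency ideas above can be illustrated with generic techniques. Key-frame and key-atom selection uses a distance threshold on watched atom pairs. Temporal attention is shown as single-head scaled dot-product pooling over frame embeddings. This is a sketch under stated assumptions, not the paper's modules. The helpers `key_frames_and_atoms` and `temporal_attention`, the 1.6 Å threshold, the watched pairs, and the cost of about 4 text tokens per printed coordinate are all hypothetical.

```python
import numpy as np


def key_frames_and_atoms(traj: np.ndarray, pairs, threshold: float = 1.6):
    """Frames where any watched pair's distance crosses `threshold` (plus the first and
    last frame), and the atoms belonging to pairs that crossed."""
    pairs = np.asarray(pairs)
    d = np.linalg.norm(traj[:, pairs[:, 0]] - traj[:, pairs[:, 1]], axis=-1)  # (T, P)
    below = d < threshold
    flipped = below[1:] != below[:-1]   # flipped[t, p]: pair p changed between t and t + 1
    frames = {0, traj.shape[0] - 1} | {int(t) + 1 for t in np.flatnonzero(flipped.any(axis=1))}
    atoms = {int(a) for a in pairs[flipped.any(axis=0)].ravel()}
    return sorted(frames), sorted(atoms)


def temporal_attention(frame_emb: np.ndarray, query: np.ndarray):
    """Single-head scaled dot-product attention pooling over a (F, d) stack of frames."""
    scores = frame_emb @ query / np.sqrt(query.shape[0])
    weights = np.exp(scores - scores.max())   # subtract max for numerical stability
    weights /= weights.sum()
    return weights @ frame_emb, weights


# Toy trajectory: 1000 frames, atom 2 approaches atom 1 while atom 0 stays bonded to atom 1.
T, N = 1000, 3
traj = np.zeros((T, N, 3))
traj[:, 1, 0] = 1.0
traj[:, 2, 0] = np.linspace(4.0, 2.0, T)
frames, atoms = key_frames_and_atoms(traj, pairs=[(0, 1), (1, 2)])
print(frames, atoms)  # [0, 700, 999] [1, 2]

tokens_per_number = 4                           # assumed cost of one printed coordinate
naive_tokens = T * N * 3 * tokens_per_number    # every coordinate written out as text
compressed_tokens = len(frames) * len(atoms)    # one embedding per key atom per key frame
print(naive_tokens, compressed_tokens)          # 36000 6

rng = np.random.default_rng(0)
pooled, weights = temporal_attention(rng.normal(size=(len(frames), 8)), rng.normal(size=8))
```

Even for this three-atom toy, writing coordinates out as text costs tens of thousands of tokens. The compressed view needs a handful of embeddings. The prompt text and any context frames a real system would keep are ignored here.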

Experimental results show that Chem4DLLM outperforms several strong baselines that rely on static 3‑D encoders (3D‑MoLM, 3D‑MolT5, Chem3DLLM) across multiple metrics: (a) event‑time accuracy (correct identification of when bonds break/form), (b) chemical consistency (correct transition‑state description, plausible reaction barriers), and (c) language quality (BLEU, ROUGE). The gains are especially pronounced in the catalytic category, where the model correctly narrates surface adsorption, rotation of adsorbates, and desorption steps. However, performance degrades on very long simulations (thousands of steps) and on cases with multiple competing reaction pathways, highlighting the current limitations of LLM context windows and the need for more sophisticated multi‑modal attention mechanisms.
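The summary does not define how event-time accuracy is computed. One plausible definition, offered purely as an assumption, is sketched below. It counts a reference event as correct if an unused predicted event of the same kind and atom pair falls within a tolerance window of frames. The function name, the event tuple layout, and the default tolerance of 5 frames are hypothetical. Matching is greedy in reference order, which is simple but not guaranteed to be globally optimal.

```python
def event_time_accuracy(predicted, reference, tolerance: int = 5) -> float:
    """Fraction of reference events (frame, kind, i, j) matched by an unused predicted event
    of the same kind and atom pair within +/- `tolerance` frames (greedy, nearest first)."""
    if not reference:
        return 1.0 if not predicted else 0.0
    unused = list(predicted)
    hits = 0
    for t_ref, kind, i, j in reference:
        candidates = [
            k for k, (t_pred, kind_p, a, b) in enumerate(unused)
            if kind_p == kind and {a, b} == {i, j} and abs(t_pred - t_ref) <= tolerance
        ]
        if candidates:
            best = min(candidates, key=lambda k: abs(unused[k][0] - t_ref))
            unused.pop(best)   # each prediction can match only one reference event
            hits += 1
    return hits / len(reference)


reference = [(700, "form", 1, 2), (850, "break", 0, 1)]
predicted = [(703, "form", 2, 1), (900, "break", 0, 1)]
print(event_time_accuracy(predicted, reference))                 # 0.5
print(event_time_accuracy(predicted, reference, tolerance=60))   # 1.0
```

The tolerance matters. A correctly identified bond break placed 50 frames late counts as a miss at a tight window and as a hit at a loose one.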

The paper’s contributions are fourfold: (1) definition of the ChemDU task, emphasizing temporal grounding and causal event abstraction; (2) creation of the Chem4DBench benchmark with both gas‑phase and PBC catalytic scenarios; (3) design of an equivariant‑graph‑encoder‑plus‑LLM architecture that respects physical symmetries while leveraging the generative power of LLMs; (4) demonstration of effective token‑compression and temporal‑attention strategies that enable practical handling of 4‑D data. The authors also discuss future directions, including scaling to longer trajectories, integrating uncertainty quantification for reaction barriers, and coupling the system with active‑learning loops for autonomous simulation‑experiment pipelines.

In summary, this work pioneers the integration of high‑dimensional, time‑resolved molecular data with large language models, moving AI‑assisted chemistry beyond static property prediction toward true dynamic understanding. It opens pathways for automated scientific narration, AI‑driven hypothesis generation, and more transparent interpretation of complex simulation outputs, thereby laying a foundation for the next generation of agentic scientific discovery tools.
