Stage-Adaptive Reliability Modeling for Continuous Valence-Arousal Estimation

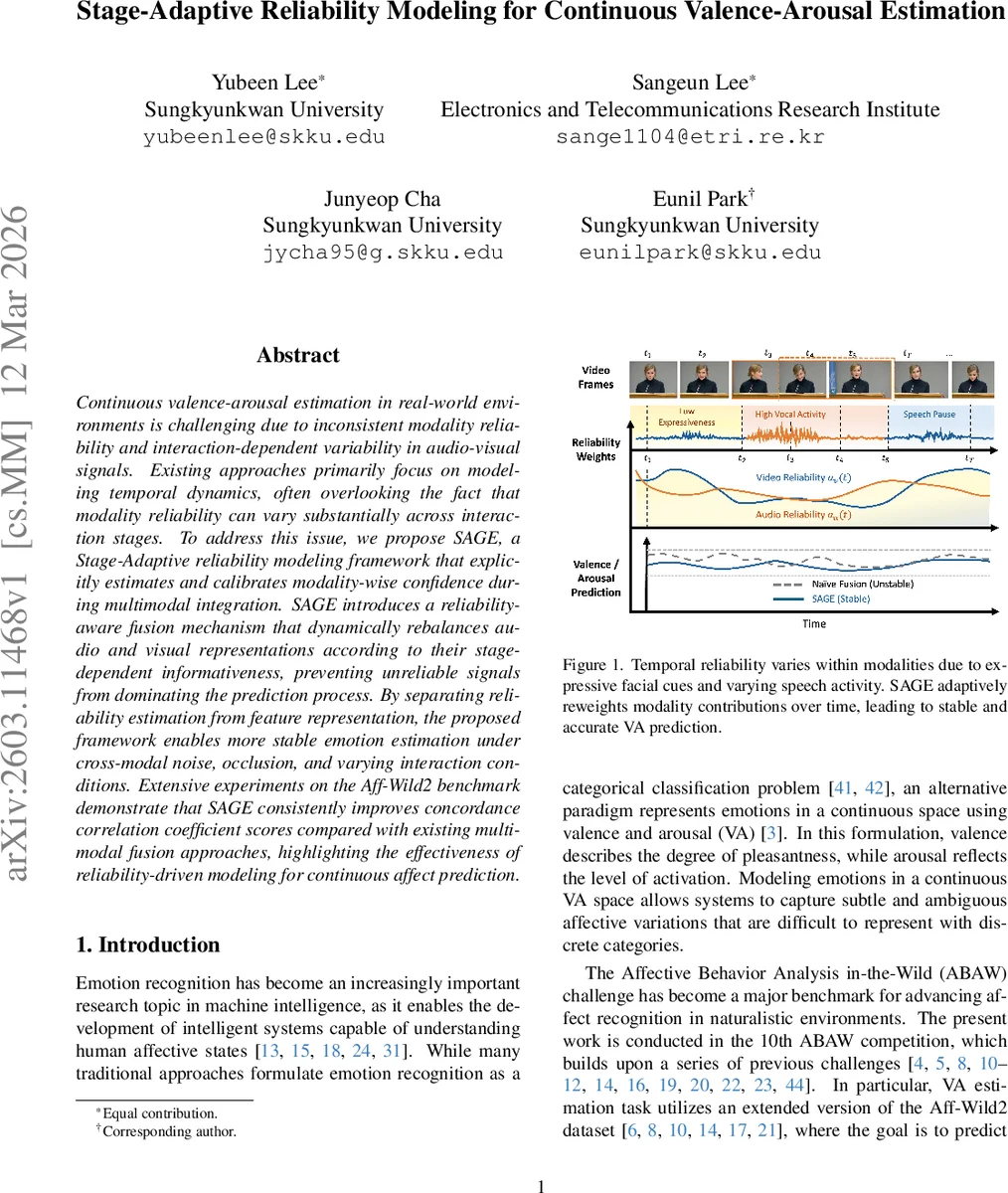

Continuous valence-arousal estimation in real-world environments is challenging due to inconsistent modality reliability and interaction-dependent variability in audio-visual signals. Existing approaches primarily focus on modeling temporal dynamics, often overlooking the fact that modality reliability can vary substantially across interaction stages. To address this issue, we propose SAGE, a Stage-Adaptive reliability modeling framework that explicitly estimates and calibrates modality-wise confidence during multimodal integration. SAGE introduces a reliability-aware fusion mechanism that dynamically rebalances audio and visual representations according to their stage-dependent informativeness, preventing unreliable signals from dominating the prediction process. By separating reliability estimation from feature representation, the proposed framework enables more stable emotion estimation under cross-modal noise, occlusion, and varying interaction conditions. Extensive experiments on the Aff-Wild2 benchmark demonstrate that SAGE consistently improves concordance correlation coefficient scores compared with existing multimodal fusion approaches, highlighting the effectiveness of reliability-driven modeling for continuous affect prediction.

💡 Research Summary

The paper tackles the problem of continuous valence‑arousal (VA) estimation in real‑world audio‑visual settings, where the reliability of each modality can fluctuate dramatically over time due to noise, occlusion, or varying speech activity. Existing multimodal fusion approaches mainly focus on modeling temporal dynamics or cross‑modal interactions, but they rarely account for the time‑varying confidence of each signal. To fill this gap, the authors propose SAGE (Stage‑Adaptive Reliability Modeling), a framework that explicitly estimates a per‑time‑step reliability score for the combined audio‑visual representation and uses it to re‑weight the modalities before fusion.

The architecture consists of four stages. First, pretrained encoders extract frame‑level visual features (ResNet‑50) and raw‑waveform acoustic embeddings (WavLM‑base). Second, Temporal Convolutional Networks (TCNs) capture short‑term dependencies for each modality separately. Third, the core Stage‑Adaptive Reliability Modeling module processes the concatenated features. Within this module, a lightweight linear layer produces a scalar logit gₜ for each time step; a softmax across the whole sequence yields a normalized reliability vector αₜ that sums to one. The original multimodal feature Xₜ is multiplied by αₜ, producing a reliability‑adjusted representation Zₜ. This “Reliability‑Guided Fusion” (RGF) dynamically suppresses unreliable modalities (e.g., noisy audio or occluded face) and amplifies the more trustworthy one. The adjusted sequence Z is then fed into a Transformer‑based Temporal Refinement block, which applies multi‑head self‑attention to capture long‑range temporal patterns while operating on already confidence‑weighted inputs. Finally, a frame‑wise MLP regression head predicts the two continuous VA values.

Training optimizes a concordance correlation coefficient (CCC) loss (L_CCC = 1 − CCC), aligning the objective directly with the evaluation metric. The authors use the Aff‑Wild2 dataset from the 10th ABAW competition, segmenting videos into 300‑frame clips with overlapping windows, discarding frames with invalid annotations or failed face detection. Visual inputs are resized to 48 × 48, augmented with random flips and crops; audio is resampled to 16 kHz and synchronized with video frames.

Experimental results show that SAGE achieves CCC scores of 0.509 (valence) and 0.674 (arousal) on the official validation split, yielding an average CCC of 0.591. While some top‑ranking challenge entries obtain slightly higher numbers by employing larger ensembles or additional modalities, SAGE’s performance is competitive given its relatively streamlined design. On the test set, the submitted model reaches an average CCC of 0.58, outperforming several prior multimodal baselines (e.g., MM‑CV‑LC, HFUT‑MAC) and matching the performance of more complex approaches such as GRJCA and HGRJCA.

The key contribution lies in the explicit, stage‑wise reliability estimation and its integration into the fusion pipeline. By separating reliability modeling from feature extraction, SAGE can adapt to varying signal quality without redesigning the encoders. The simplicity of the linear reliability estimator makes the method lightweight, yet the empirical gains demonstrate that even a modest confidence model can substantially improve robustness. Limitations include the reliance on a single linear layer for reliability scoring, which may be insufficient for highly complex acoustic‑visual interactions (e.g., multiple speakers, background music). Future work could explore non‑linear or Bayesian reliability estimators, incorporate textual cues, and extend the approach to handle multi‑speaker diarization scenarios. Visualization of the αₜ weights also offers interpretability, showing when the model leans on audio versus visual cues.

In summary, SAGE introduces a novel reliability‑aware fusion strategy that dynamically rebalances audio and visual contributions across interaction stages, leading to more stable and accurate continuous affect prediction in uncontrolled environments. The work demonstrates that modeling modality confidence is a powerful and under‑exploited direction for multimodal emotion recognition.

Comments & Academic Discussion

Loading comments...

Leave a Comment