OrchMLLM: Orchestrate Multimodal Data with Batch Post-Balancing to Accelerate Multimodal Large Language Model Training

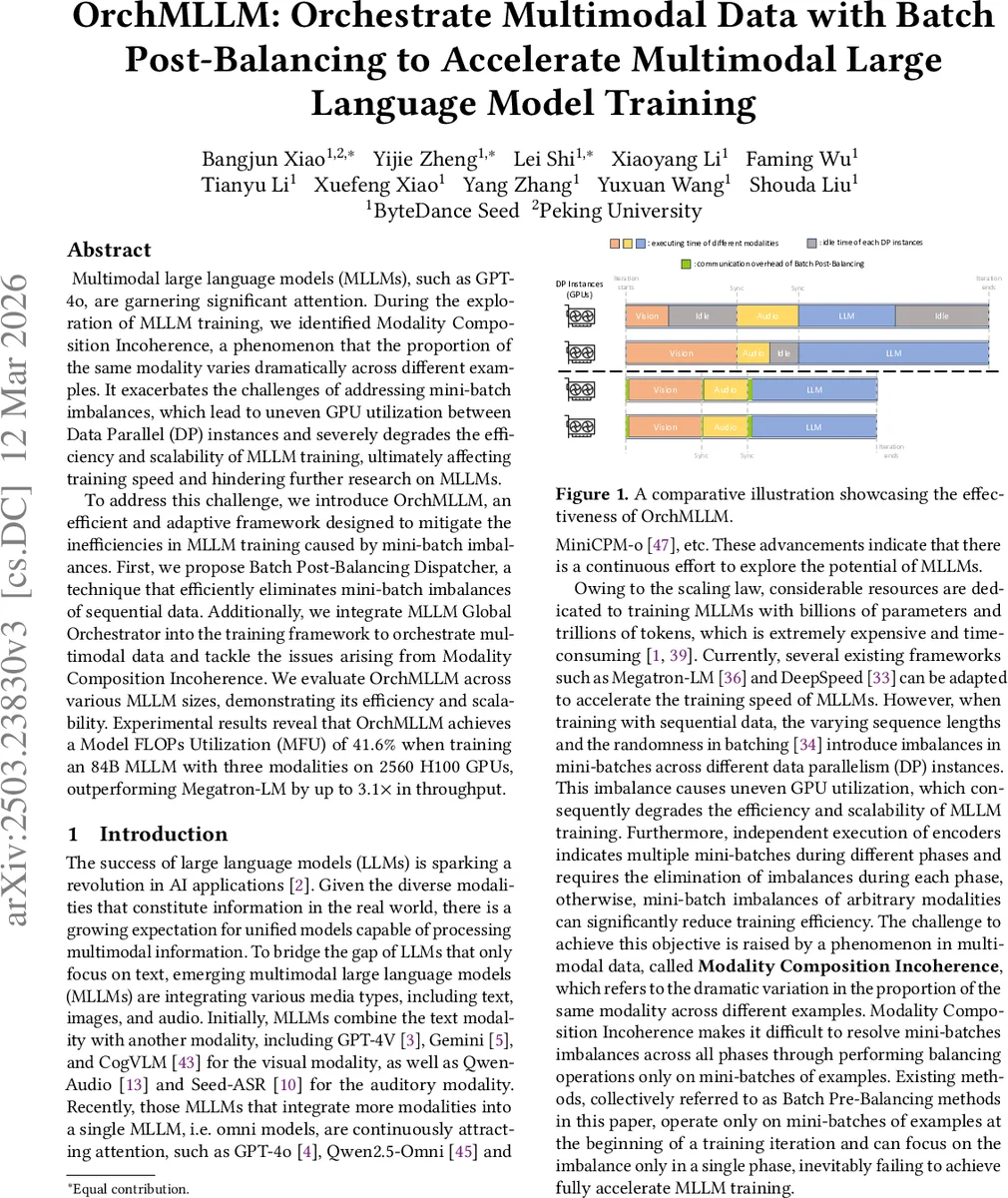

Multimodal large language models (MLLMs), such as GPT-4o, are garnering significant attention. During the exploration of MLLM training, we identified Modality Composition Incoherence, a phenomenon that the proportion of a certain modality varies dramatically across different examples. It exacerbates the challenges of addressing mini-batch imbalances, which lead to uneven GPU utilization between Data Parallel (DP) instances and severely degrades the efficiency and scalability of MLLM training, ultimately affecting training speed and hindering further research on MLLMs. To address these challenges, we introduce OrchMLLM, a comprehensive framework designed to mitigate the inefficiencies in MLLM training caused by Modality Composition Incoherence. First, we propose Batch Post-Balancing Dispatcher, a technique that efficiently eliminates mini-batch imbalances in sequential data. Additionally, we integrate MLLM Global Orchestrator into the training framework to orchestrate multimodal data and tackle the issues arising from Modality Composition Incoherence. We evaluate OrchMLLM across various MLLM sizes, demonstrating its efficiency and scalability. Experimental results reveal that OrchMLLM achieves a Model FLOPs Utilization (MFU) of $41.6%$ when training an 84B MLLM with three modalities on $2560$ H100 GPUs, outperforming Megatron-LM by up to $3.1\times$ in throughput.

💡 Research Summary

The paper addresses a critical bottleneck in training multimodal large language models (MLLMs), namely the “Modality Composition Incoherence” problem, where the proportion of each modality varies dramatically across training examples. This variability leads to severe mini‑batch imbalances across data‑parallel (DP) instances, causing uneven GPU utilization, long idle times, and high communication overhead. Existing pre‑balancing methods attempt to equalize token counts at the example‑level before the forward pass, but they cannot simultaneously balance all phases (vision encoder, audio encoder, LLM backbone) in a multimodal pipeline, making them ineffective for omni‑modal models.

OrchMLLM introduces a two‑stage solution. First, the Batch Post‑Balancing Dispatcher rearranges mini‑batches after they have been sampled, grouping data by modality and re‑assigning them across DP instances so that each phase receives batches with equal token counts. This post‑balancing leverages the insight that rearranging batches does not affect training outcomes (consequence‑invariance). The paper proposes several algorithms (greedy, dynamic programming, histogram‑based) to compute the optimal rearrangement under different workload scenarios.

Second, the MLLM Global Orchestrator integrates with the training loop to monitor per‑phase token distributions and trigger post‑balancing dynamically. A Node‑wise All‑to‑All Communicator implements the actual data exchange, exploiting fast intra‑node links (NVLink) and optimized inter‑node NCCL All‑to‑All patterns to keep communication overhead low. Memory usage is minimized by streaming batches and eliminating duplicate data during the exchange.

Experiments were conducted on a 2560‑GPU H100 cluster, training an 84‑billion‑parameter model with vision, audio, and text modalities. OrchMLLM achieved a Model FLOPs Utilization (MFU) of 41.6 %, representing up to a 3.1× throughput improvement over Megatron‑LM and significantly reducing idle time (by ~65 %) and communication overhead (to <5 % of total time). The approach also scaled well to smaller models (7 B, 13 B) and other modality combinations, with no degradation in convergence or final evaluation metrics (e.g., VQA accuracy, ASR WER).

The authors acknowledge that the current implementation relies heavily on abundant GPU memory and high‑bandwidth networking; very large batches may increase the cost of the post‑balancing computation. Future work includes developing lighter‑weight approximation algorithms and extending the framework to heterogeneous hardware environments.

In summary, OrchMLLM provides a practical, architecture‑agnostic framework that resolves the multi‑phase imbalance caused by Modality Composition Incoherence, dramatically improving training efficiency for current and future multimodal omni‑models.

Comments & Academic Discussion

Loading comments...

Leave a Comment