FedSKD: Aggregation-free Model-heterogeneous Federated Learning via Multi-dimensional Similarity Knowledge Distillation for Medical Image Classification

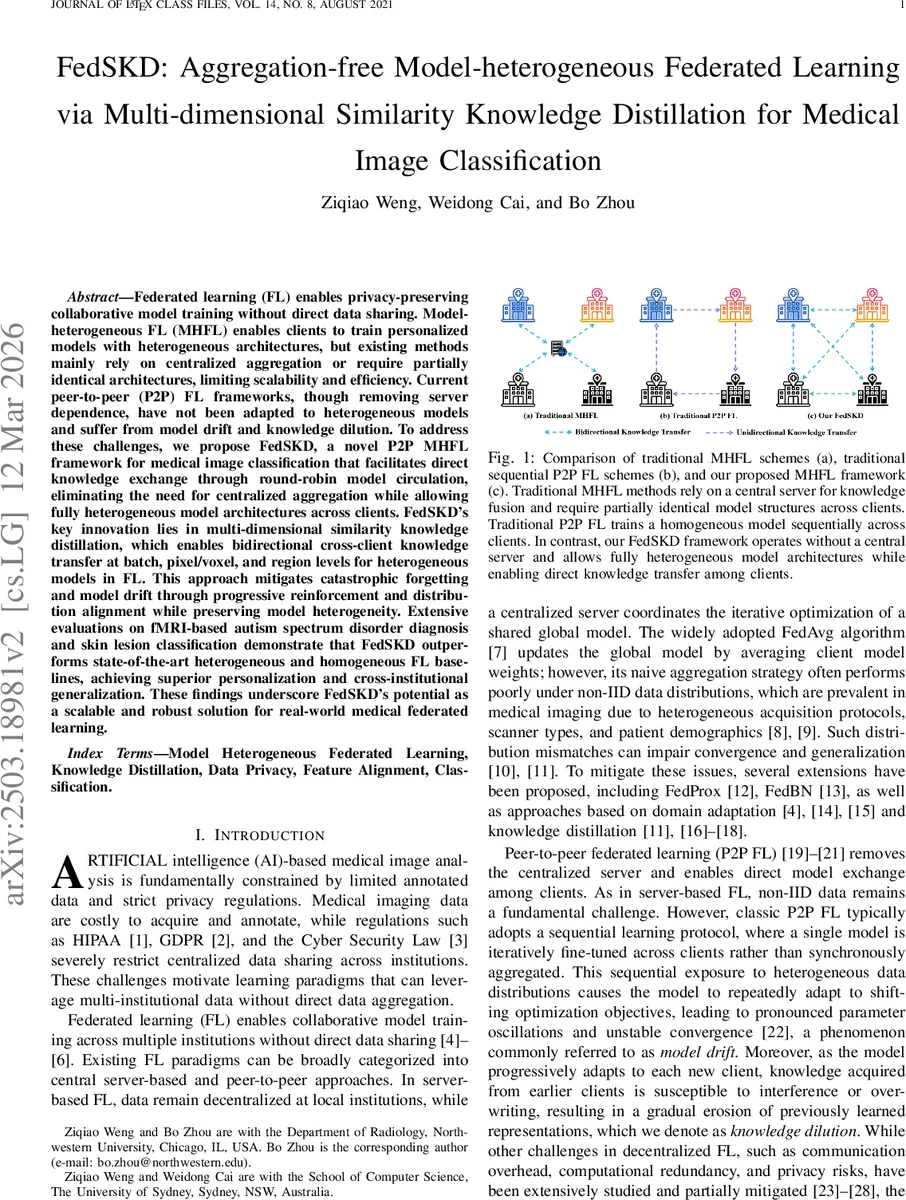

Federated learning (FL) enables privacy-preserving collaborative model training without direct data sharing. Model-heterogeneous FL (MHFL) extends this paradigm by allowing clients to train personalized models with heterogeneous architectures tailored to their computational resources and application-specific needs. However, existing MHFL methods predominantly rely on centralized aggregation, which introduces scalability and efficiency bottlenecks, or impose restrictions requiring partially identical model architectures across clients. While peer-to-peer (P2P) FL removes server dependence, it suffers from model drift and knowledge dilution, limiting its effectiveness in heterogeneous settings. To address these challenges, we propose FedSKD, a novel MHFL framework that facilitates direct knowledge exchange through round-robin model circulation, eliminating the need for centralized aggregation while allowing fully heterogeneous model architectures across clients. FedSKD’s key innovation lies in multi-dimensional similarity knowledge distillation, which enables bidirectional cross-client knowledge transfer at batch, pixel/voxel, and region levels for heterogeneous models in FL. This approach mitigates catastrophic forgetting and model drift through progressive reinforcement and distribution alignment while preserving model heterogeneity. Extensive evaluations on fMRI-based autism spectrum disorder diagnosis and skin lesion classification demonstrate that FedSKD outperforms state-of-the-art heterogeneous and homogeneous FL baselines, achieving superior personalization (client-specific accuracy) and generalization (cross-institutional adaptability). These findings underscore FedSKD’s potential as a scalable and robust solution for real-world medical federated learning applications.

💡 Research Summary

The paper introduces FedSKD, a novel peer‑to‑peer (P2P) federated learning framework designed for medical image classification tasks where participating institutions may use heterogeneous model architectures and hold non‑IID data. Traditional model‑heterogeneous federated learning (MHFL) relies on a central server to aggregate weights or share partial subnetworks, which creates scalability bottlenecks and limits architectural freedom. Existing P2P FL methods, while removing the server, typically train a single homogeneous model sequentially across clients, leading to model drift and knowledge dilution when data distributions differ.

FedSKD addresses these issues by (1) circulating locally trained models among clients in a round‑robin fashion without any central coordinator, and (2) performing bidirectional knowledge distillation between the receiving client’s “Domain‑Adaptive Model” (DAM) and the incoming “Knowledge‑Transit Model” (KTM). Each communication round begins with a random permutation Oₜ that defines a one‑to‑one mapping of models to clients. A client either refines its own DAM (if Oₜᵢ = i) or engages in simultaneous knowledge exchange with a KTM (if Oₜᵢ ≠ i).

The core technical contribution is Multi‑Dimensional Similarity Knowledge Distillation (SKD), which aligns feature representations at three complementary granularities:

-

Batch‑wise SKD (B‑SKD) – aligns the statistical distribution of batch‑level activations across clients using KL‑divergence or Maximum Mean Discrepancy, ensuring that global learning signals remain consistent despite heterogeneous data.

-

Pixel/voxel‑wise SKD (P‑SKD) – matches spatial (2‑D) or volumetric (3‑D) feature maps on a per‑pixel/voxel basis via a weighted combination of cosine similarity and L₂ distance, encouraging different architectures to produce similar localized responses for the same anatomical structures.

-

Region‑wise SKD (R‑SKD) – operates on predefined anatomical regions of interest (ROIs). For each ROI, class‑wise mean embeddings are extracted and their Euclidean distances are minimized, thereby transferring clinically relevant regional knowledge.

The total loss for a client i combines standard cross‑entropy on its local labels with the three SKD terms, each weighted by hyper‑parameters (α, β, γ, δ). This formulation yields two simultaneous knowledge flows: (i) DAM → KTM injects domain‑specific expertise into the transit model, and (ii) KTM → DAM supplies cross‑client insights that improve generalization. By keeping both models active during each round, FedSKD mitigates catastrophic forgetting and prevents the progressive erosion of previously learned representations.

Communication overhead is modest: each round requires one model transfer per client (size equal to the model) and optional transmission of compressed feature maps for SKD calculations. Because aggregation is avoided, the method scales linearly with the number of participants and eliminates the central server’s computational and storage bottlenecks.

Experiments were conducted on two clinically relevant benchmarks: (1) a federated fMRI dataset derived from ABIDE for autism spectrum disorder (ASD) diagnosis (named FedASD), and (2) a skin‑lesion classification dataset (FedSkin) built from ISIC images. In both settings, five to eight institutions participated, each employing a distinct architecture (e.g., ResNet‑18, EfficientNet‑B0, 3‑D ConvNet). FedSKD was compared against a wide range of baselines: central‑server MHFL methods (FedAvg, FedProx, FedBN, FedMD, AlFeCo, FedMoE), traditional P2P schemes (Sequential, Gossip), and recent decentralized approaches.

Key findings include:

-

Personalization performance – FedSKD achieved 3–5 % higher client‑specific test accuracy than the best MHFL baseline, with the largest gains observed on sites with the most skewed data distributions.

-

Cross‑institution generalization – When models trained on one institution were evaluated on others, FedSKD outperformed baselines by 2–4 % absolute accuracy, demonstrating superior knowledge transfer.

-

Stability metrics – Model drift (measured by parameter variance across rounds) decreased by roughly 40 %, and knowledge dilution (performance drop after successive transfers) was reduced by about 35 % compared to naïve sequential P2P training.

-

Ablation study – Removing any SKD component degraded performance; the full three‑level SKD yielded the highest gains, confirming that batch, spatial, and regional alignments each contribute uniquely.

The authors acknowledge limitations: transmitting high‑dimensional feature maps for 3‑D medical volumes can increase bandwidth usage, and the current round‑robin schedule assumes a static set of participants. Future work will explore compressed feature communication, adaptive topologies that handle client churn, and formal convergence analysis under non‑IID heterogeneous settings.

In summary, FedSKD provides a practical, server‑free solution for model‑heterogeneous federated learning in privacy‑sensitive medical imaging. By leveraging multi‑dimensional similarity knowledge distillation, it simultaneously enhances personalization, improves cross‑institution generalization, and stabilizes training dynamics, marking a significant step forward for decentralized AI in healthcare.

Comments & Academic Discussion

Loading comments...

Leave a Comment