P-GSVC: Layered Progressive 2D Gaussian Splatting for Scalable Image and Video

Gaussian splatting has emerged as a competitive explicit representation for image and video reconstruction. In this work, we present P-GSVC, the first layered progressive 2D Gaussian splatting framework that provides a unified solution for scalable Gaussian representation in both images and videos. P-GSVC organizes 2D Gaussian splats into a base layer and successive enhancement layers, enabling coarse-to-fine reconstructions. To effectively optimize this layered representation, we propose a joint training strategy that simultaneously updates Gaussians across layers, aligning their optimization trajectories to ensure inter-layer compatibility and a stable progressive reconstruction. P-GSVC supports scalability in terms of both quality and resolution. Our experiments show that the joint training strategy can gain up to 1.9 dB improvement in PSNR for video and 2.6 dB improvement in PSNR for image when compared to methods that perform sequential layer-wise training. Project page: https://longanwang-cs.github.io/PGSVC-webpage/

💡 Research Summary

The paper introduces P‑GSVC (Progressive Gaussian Splat Video Coding), the first layered progressive 2‑D Gaussian splatting framework that unifies scalable coding for both images and videos. The authors observe that while Gaussian splatting (GS) has become a competitive explicit representation for high‑fidelity reconstruction, extending it to scalable coding is non‑trivial because Gaussian splats are highly inter‑dependent; removing any subset—even those with low visual contribution—can severely degrade the overall rendering. Traditional scalable codecs (e.g., JPEG2000, HEVC‑SHVC) rely on wavelet or block‑based hierarchies, and recent learning‑based approaches use implicit latent representations, which are computationally heavy and difficult to edit.

P‑GSVC addresses these issues by organizing 2‑D Gaussian splats into a base layer (L₀) and multiple enhancement layers (ΔL₁, ΔL₂, …). Decoding only the base layer yields a coarse preview; each subsequent layer adds new splats that capture higher‑frequency details, enabling both quality and resolution scalability. The key technical contribution is a joint training strategy that simultaneously optimizes two objectives at each iteration: (i) a loss computed using all splats up to the highest layer (high‑fidelity reconstruction) and (ii) a loss computed using splats only up to a current target layer i (low‑fidelity reconstruction). By cycling the target layer i throughout training, the gradients for all layers remain aligned, preventing the instability and sub‑optimal local minima observed in sequential layer‑wise training. This approach stabilizes the optimization trajectory, ensures that intermediate reconstructions are of acceptable quality, and preserves inter‑layer compatibility.

The framework also inherits several mechanisms from the earlier GSVC system: Gaussian Splat Pruning (GSP) removes splats with negligible contribution to reduce bitrate, Gaussian Splat Augmentation (GSA) injects new splats to handle fast motion or newly appearing objects, and Dynamic Key‑frame Selection (DKS) detects scene changes and inserts new I‑frames when necessary. These components together allow P‑GSVC to adapt to temporal dynamics in video while maintaining a compact representation.

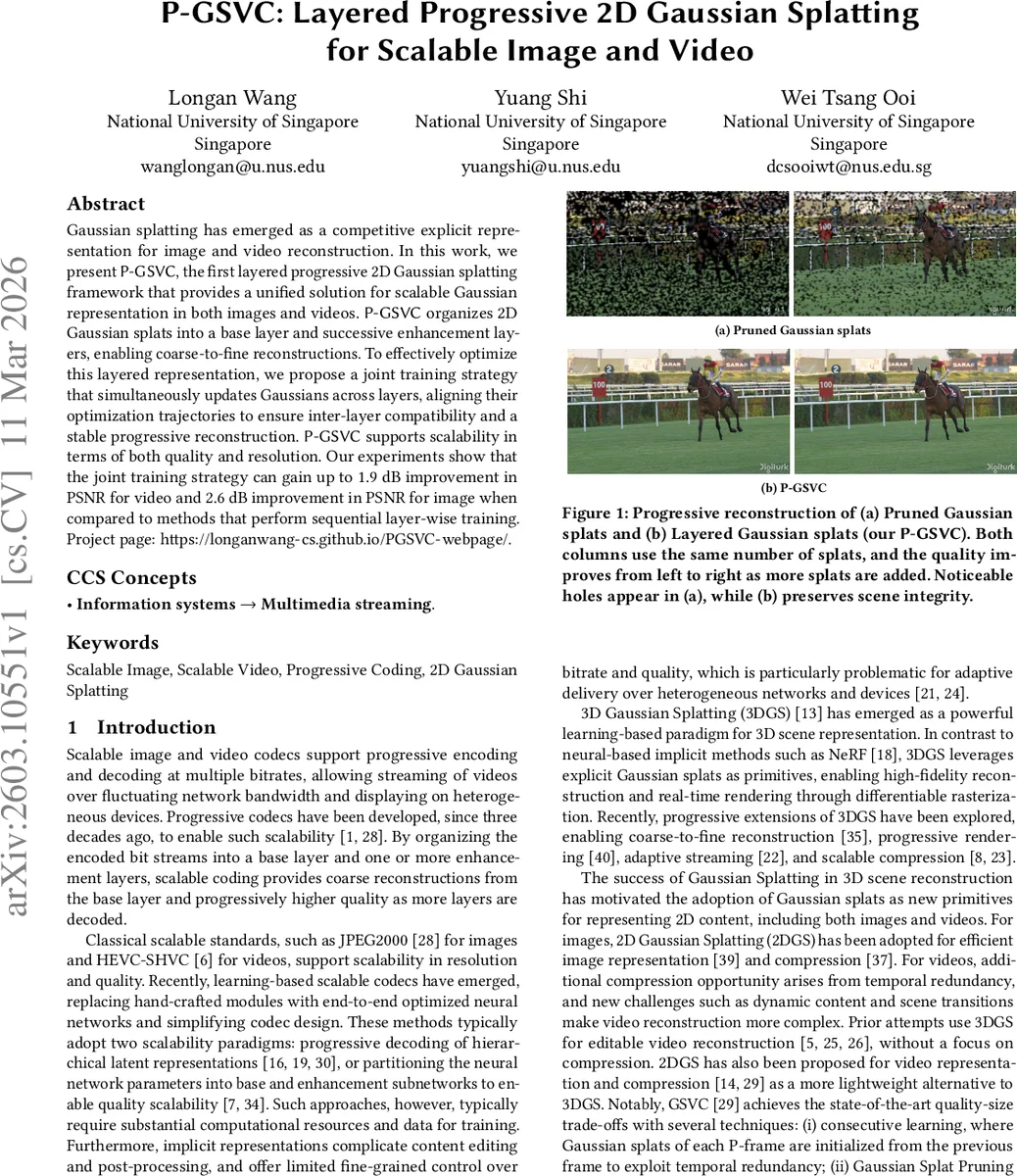

Experiments are conducted on the UVG video dataset and the DIV2K‑HR image dataset. Compared with methods that train layers sequentially, P‑GSVC achieves up to 1.9 dB higher PSNR on video and up to 2.6 dB higher PSNR on images, using the same number of splats. Qualitative results (Figure 1) show that naïve pruning of splats leads to noticeable holes, whereas the layered approach preserves scene integrity. Training curves (Figure 2) demonstrate that joint training yields lower final loss and smoother gradient evolution than single‑layer baselines.

In summary, P‑GSVC makes four major contributions: (1) a scalable progressive representation for images and videos based on explicit 2‑D Gaussian splats, (2) a joint training methodology that aligns optimization across layers and guarantees stable progressive reconstruction, (3) an extensive evaluation showing significant PSNR gains over sequential training baselines, and (4) a practical codec that combines pruning, augmentation, and dynamic key‑frame selection to handle both static and highly dynamic content. By leveraging the lightweight nature of 2‑D Gaussian splats and a carefully designed training regime, P‑GSVC delivers high compression efficiency, fast decoding, and flexible quality adaptation, positioning it as a compelling alternative to both traditional wavelet‑based scalable codecs and modern implicit neural codecs.

Comments & Academic Discussion

Loading comments...

Leave a Comment