UltraGen: Efficient Ultra-High-Resolution Image Generation with Hierarchical Local Attention

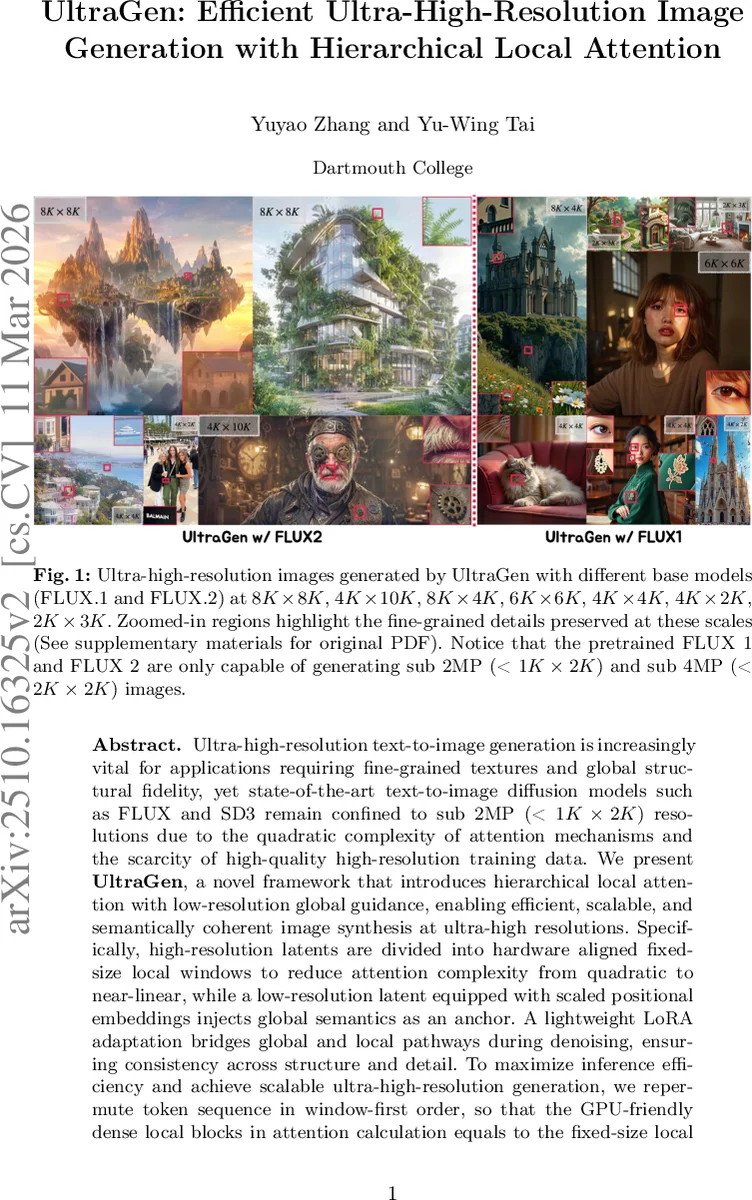

Ultra-high-resolution text-to-image generation is increasingly vital for applications requiring fine-grained textures and global structural fidelity, yet state-of-the-art text-to-image diffusion models such as FLUX and SD3 remain confined to sub 2MP (< $1K\times2K$) resolutions due to the quadratic complexity of attention mechanisms and the scarcity of high-quality high-resolution training data. We present \textbf{\ourwork}, a novel framework that introduces hierarchical local attention with low-resolution global guidance, enabling efficient, scalable, and semantically coherent image synthesis at ultra-high resolutions. Specifically, high-resolution latents are divided into hardware aligned fixed-size local windows to reduce attention complexity from quadratic to near-linear, while a low-resolution latent equipped with scaled positional embeddings injects global semantics as an anchor. A lightweight LoRA adaptation bridges global and local pathways during denoising, ensuring consistency across structure and detail. To maximize inference efficiency and achieve scalable ultra-high-resolution generation, we repermute token sequence in window-first order, so that the GPU-friendly dense local blocks in attention calculation equals to the fixed-size local window in 2D regardless of resolution. Together~\ourworkreliably scales the pretrained model to resolutions higher than $8K$ with more than $10\times$ speed up and significantly lower memory usage. Extensive experiments demonstrate that\ourwork~achieves superior quality while maintaining computational efficiency, establishing a practical paradigm for advancing ultra-high-resolution image generation.

💡 Research Summary

UltraGen tackles the long‑standing bottleneck of scaling text‑to‑image diffusion models to ultra‑high resolutions (4K, 8K, and beyond) without the need for massive high‑resolution training data. The authors observe that the quadratic cost of self‑attention makes naïve scaling infeasible and that existing models such as FLUX or Stable Diffusion 3 are limited to sub‑2 MP images. To overcome this, UltraGen introduces three tightly coupled innovations.

First, hierarchical local attention partitions the high‑resolution latent map into fixed‑size, hardware‑aligned windows (e.g., 16 × 16 tokens). Attention is computed independently inside each window, reducing the complexity from O(N²) to roughly O(N · l²), which is near‑linear in the total number of tokens. A crucial engineering detail is the “window‑first token permutation”: tokens are reordered so that each contiguous block in the attention matrix corresponds exactly to a spatial window. This alignment lets the model reuse highly optimized dense‑attention kernels (e.g., FlashAttention) without modification, achieving massive speed‑ups and memory savings. Limited cross‑window interaction is allowed along window borders to preserve continuity.

Second, a low‑resolution guidance latent (X_lr) provides global semantics. X_lr is generated at a much coarser scale (typically ¼ of the high‑resolution dimensions) and its positional embeddings are scaled (using RoPE) to match the high‑resolution coordinate system. In the attention mask, X_lr tokens attend globally to each other, while each high‑resolution window attends to its own local tokens, adjacent tokens, and the corresponding region of X_lr. This design ensures that the global layout, composition, and long‑range consistency are dictated by the low‑resolution guide, while fine‑grained texture is produced locally. The approach naturally supports recursive generation: the output of one stage can serve as the guidance for an even higher‑resolution stage.

Third, a parameter‑efficient joint denoising scheme integrates the two pathways via LoRA (Low‑Rank Adaptation). The pretrained Multi‑Modal Diffusion Transformer (MMDiT) weights are kept frozen; only lightweight LoRA adapters are inserted on the X_lr branch. This adds only a few hundred thousand trainable parameters, allowing the model to learn how to fuse global guidance with local detail while preserving the original model’s knowledge. Training is performed solely on standard 256 × 256 – 1K × 1K datasets, eliminating the need for any native ultra‑high‑resolution data.

Extensive experiments demonstrate that UltraGen can upscale FLUX‑1 and FLUX‑2 to resolutions up to 8K × 8K (and non‑square sizes such as 4K × 10K) with over 10× faster inference and more than 10 GB reduced memory consumption compared to dense‑attention baselines. Quantitatively, UltraGen matches or surpasses state‑of‑the‑art methods that are trained on native 4K data across FID, IS, and CLIP‑Score. Qualitatively, the generated images retain coherent global structures while exhibiting sharp, detailed textures even at extreme scales.

The paper also discusses limitations: window borders can still exhibit subtle artifacts, and an overly dominant low‑resolution guide may suppress creative variation. Future work may explore adaptive window sizes, dynamic scaling of the guidance latent, or multi‑scale window mixtures to further improve fidelity.

In summary, UltraGen offers a practical paradigm for ultra‑high‑resolution text‑to‑image generation: hierarchical local attention for computational tractability, low‑resolution global guidance for semantic consistency, and lightweight LoRA adaptation for seamless integration with existing pretrained diffusion models. This combination enables high‑quality, scalable image synthesis without the prohibitive data or compute costs that have previously hampered progress in this domain, opening the door to real‑world applications in digital art, advertising, scientific visualization, and beyond.

Comments & Academic Discussion

Loading comments...

Leave a Comment