TIMID: Time-Dependent Mistake Detection in Videos of Robot Executions

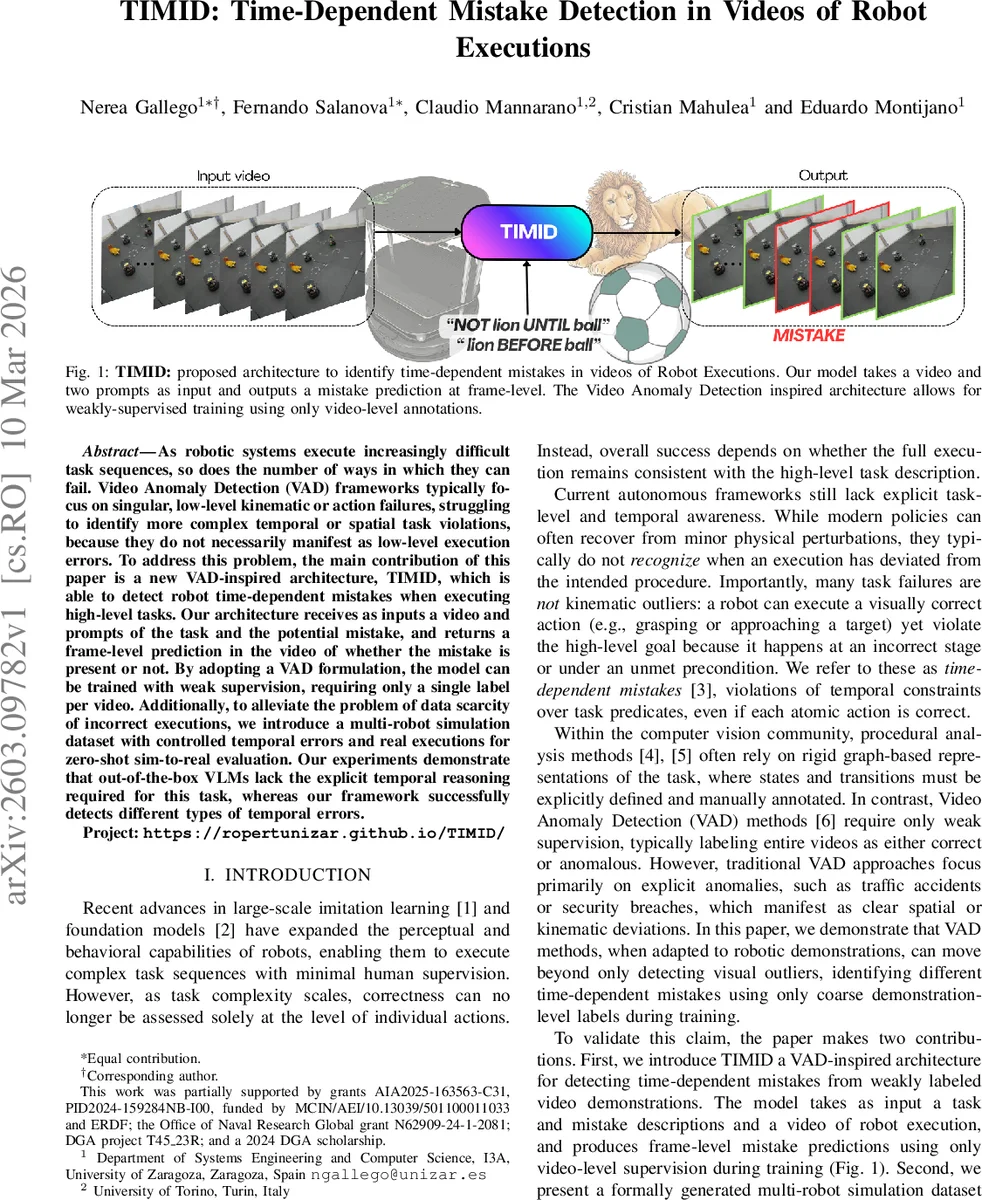

As robotic systems execute increasingly difficult task sequences, so does the number of ways in which they can fail. Video Anomaly Detection (VAD) frameworks typically focus on singular, low-level kinematic or action failures, struggling to identify more complex temporal or spatial task violations, because they do not necessarily manifest as low-level execution errors. To address this problem, the main contribution of this paper is a new VAD-inspired architecture, TIMID, which is able to detect robot time-dependent mistakes when executing high-level tasks. Our architecture receives as inputs a video and prompts of the task and the potential mistake, and returns a frame-level prediction in the video of whether the mistake is present or not. By adopting a VAD formulation, the model can be trained with weak supervision, requiring only a single label per video. Additionally, to alleviate the problem of data scarcity of incorrect executions, we introduce a multi-robot simulation dataset with controlled temporal errors and real executions for zero-shot sim-to-real evaluation. Our experiments demonstrate that out-of-the-box VLMs lack the explicit temporal reasoning required for this task, whereas our framework successfully detects different types of temporal errors. Project: https://ropertunizar.github.io/TIMID/

💡 Research Summary

The paper introduces TIMID, a novel framework for detecting time‑dependent mistakes in robot execution videos. Traditional video anomaly detection (VAD) methods focus on obvious visual anomalies and are ill‑suited for high‑level procedural errors where each atomic action may be correct but the overall temporal logic is violated. TIMID adapts the VAD paradigm to robotics by taking three inputs: (1) a video of the robot’s execution, (2) a textual description of the intended task (P), and (3) a textual description of the potential mistake (M). The model is trained with only video‑level supervision (a single binary label indicating whether the mistake occurs somewhere in the video) yet can output frame‑level predictions at inference time.

The architecture consists of a video encoder that processes non‑overlapping fragments of the video into high‑level feature vectors, followed by two attention modules. The first “temporal context” module combines sinusoidal positional encodings with a Gaussian‑like dynamic prior to produce a similarity matrix that incorporates both absolute and relative temporal information. It then splits into a global stream (unmasked) and a causal local stream (masked to prevent attention to future frames). A learnable scalar balances these streams into a unified temporal representation Z_time.

The second “semantic alignment” module bridges visual features with language. Using a pretrained CLIP text encoder, the task and mistake prompts are embedded as Z_task. Cross‑attention projects Z_time into queries and Z_task into keys/values, allowing the model to attend to video regions that correspond to the textual temporal constraints (e.g., “robot must visit the ball before the lion”). A residual connection and layer normalization produce Z_sem, which is finally linearly projected to per‑frame mistake scores ŷ_t.

Training follows a Multiple‑Instance Learning (MIL) scheme. Frame scores S are pooled into a video‑level score s_pool: for normal videos the maximum frame score is used (penalizing any false alarm), while for anomalous videos the average of the top‑k scores (k≈T/32) is taken to focus on the region where the mistake occurs. This pooled score is optimized with a binary cross‑entropy loss (L_bce). Additionally, a supervised contrastive loss (L_con) is applied to global video embeddings to separate normal and anomalous representations in feature space.

To address the scarcity of labeled erroneous demonstrations, the authors create a new multi‑robot simulation dataset. In a Gazebo arena, three TurtleBot3 robots interact with two objects (a lion plush and a green ball). Two tasks are defined using Linear Temporal Logic (LTL): (1) Mutual exclusion – robots must never be in both object zones simultaneously, and (2) Sequential ordering – robots must visit the ball before the lion. Mistakes are expressed as conflicting LTL formulas. Action plans are generated automatically by converting LTL specifications into Büchi automata and sampling compliant sequences with the Renew simulator. The low‑level motion is executed using ROS2 Nav2, and each episode is recorded from three camera viewpoints. Real‑world robot executions are also included for zero‑shot sim‑to‑real evaluation.

Experiments compare TIMID against baseline VLM‑based VAD models (e.g., PEL4VAD). Baselines can align text and video but fail to capture temporal logic, resulting in near‑random AUC. TIMID achieves substantially higher frame‑level accuracy and AUC on both simulated and real videos, demonstrating effective temporal reasoning and successful zero‑shot transfer from simulation to real robots. Limitations include dependence on relatively short video clips, the need for LTL‑style textual prompts, and potential scalability issues for very long task sequences. Future work is suggested on integrating multimodal sensors (force/torque), automatic prompt generation, and more efficient attention mechanisms for longer horizons.

Comments & Academic Discussion

Loading comments...

Leave a Comment