Contrastive Bayesian Inference for Unnormalized Models

Unnormalized (or energy-based) models provide a flexible framework for capturing the characteristics of data with complex dependency structures. However, the application of standard Bayesian inference methods has been severely limited because the par…

Authors: Naruki Sonobe, Shonosuke Sugasawa, Daichi Mochihashi

Contrastiv e Bayesian Infer ence f or Unnormalized Models * Naruki Sonobe 1 , Shonosuke Sugasa wa 2 , Daichi Mochihashi 3 and T akeru Matsuda 4 , 5 1 Department of Information Sciences, T okyo Uni versity of Science 2 Faculty of Economics, K eio Univ ersity 3 The Institute of Statistical Mathematics 4 Graduate School of Information Science and T echnology , The Univ ersity of T ok yo 5 RIKEN Center for Brain Science Abstract Unnormalized (or energy-based) models pro vide a flexible frame work for capturing the characteristics of data with complex dependency structures. Ho wev er , the application of standard Bayesian inference methods has been se verely limited because the parameter - dependent normalizing constant is either analytically intractable or computationally pro- hibiti ve to ev aluate. A promising approach is score-based generalized Bayesian inference, which a voids ev aluating the normalizing constant by replacing the likelihood with a scor - ing rule. Ho wev er , this approach requires careful tuning of the likelihood information, and it may fail to yield valid inference without appropriate control. T o ov ercome this dif ficulty , we propose a fully Bayesian framework for inference on unnormalized models that does not require such tuning. W e build on noise contrasti ve estimation, which recasts inference as a binary classification problem between observed and noise samples, and treat the normalizing constant as an additional unkno wn parameter within the resulting * March 11, 2026 1 likelihood. For exponential families, the classification likelihood becomes conditionally Gaussian via P ´ olya–Gamma data augmentation, leading to a simple Gibbs sampler . W e demonstrate the proposed approach through two models: time-v arying density models for temporal point process data and sparse torus graph models for multi variate circular data. Through simulation studies and real-data analyses, the proposed method provides accurate point estimation and enables principled uncertainty quantification. K ey words : Bayesian computation; Intractable normalizing constants; Generalized Bayesian inference; Shrinkage priors 1 Intr oduction Bayesian inference for unnormalized models, i.e. statistical models whose likelihoods contain intractable normalizing constants, remains a persistent challenge. The core ob- stacle is ev aluating the parameter -dependent normalizing constant, which requires inte- grating ov er the sample space and is often analytically intractable. Unnormalized models arise in a range of applications, for instance, Ising models (Besag, 1974), exponential random graph models (Robins et al., 2007; Hunter and Handcock, 2012), non-Gaussian graphical models (Lin et al., 2016; Inouye et al., 2017), nonlinear independent compo- nent analysis (Hyv ¨ arinen et al., 2024; Matsuda and Hyv ¨ arinen, 2019), torus graph models (Klein et al., 2020), and time-v arying density estimation based on logistic Gaussian pro- cesses (Lenk, 1988; T okdar and Ghosh, 2007). Despite wide range of applications, the intractable likelihood implies that both the likelihood and the resulting posterior distri- bution are only known up to parameter -dependent normalizing constants that cannot be e valuated, thereby rendering standard Bayesian inference methods inapplicable. A wide range of computational methods has been proposed to perform Bayesian in- ference for unnormalized models with intractable normalizing constants. Park and Haran (2018) provided a comprehensi ve re view of Marko v chain Monte Carlo (MCMC) meth- ods for such models. These include asymptotically exact algorithms whose Markov chain 2 has the desired posterior distribution as its stationary distribution. For example, pseudo- marginal MCMC algorithms (Andrieu and Roberts, 2009; L yne et al., 2015) pro vide a theoretically sound frame work in which the intractable likelihood is replaced by an un- biased estimator while still targeting the exact posterior distrib ution. Ho wev er , a major drawback of these exact methods is their high computational cost, because each MCMC iteration requires expensi ve inner Monte Carlo estimation for the intractable normalizing constant. In pseudo-marginal methods, multiple such estimates may be required within a single iteration, which can make these methods impractical for complex problems (Park and Haran, 2018). T o alle viate this computational burden, se veral asymptotically ine x- act or approximate MCMC algorithms have been dev eloped. These methods, such as the function emulation approach proposed by Park and Haran (2020), can of fer substan- tial gains in computational efficienc y by introducing controlled approximations to the likelihood or normalizing constant. While these approaches are often much faster , they come with a critical trade-of f: the stationary distribution of the resulting Marko v chain typically differs from the exact target posterior , and there is generally no strong theoret- ical guarantee that the generated samples will con ver ge to the true posterior distribution asymptotically . In parallel to these MCMC-based approaches, likelihood-free inference methods exploit situations in which it is much easier to generate synthetic data from the model than to e v aluate its (unnormalized) likelihood. A canonical example is approximate Bayesian computation (ABC), which approximates the posterior by comparing summary statistics computed from the observed data with those from simulated data (Marin et al., 2012). Further connections between the proposed frame work and likelihood-free infer- ence methods are discussed in Section 5. Additionally , there are recent dev elopments in generalized Bayesian approaches that update prior beliefs using task-specific losses or proper scoring rules in place of a stan- dard likelihood (Bissiri et al., 2016). An appealing feature of this loss-based formulation is that certain choices av oid ev aluating normalizing constants, making the frame work suit- able for unnormalized models. Illustrativ e examples include methods based on the Stein 3 discrepancy (Matsubara et al., 2022) and the Hyv ¨ arinen score (also kno wn as the Fisher di ver gence) (Jewson and Rossell, 2022), which support Bayesian-style estimation with- out likelihood normalization and ha ve been e xtended to discrete distrib utions (Matsubara et al., 2024). Relatedly , Pacchiardi et al. (2024) de veloped a likelihood-free frame work based on scoring rule posteriors, connecting scoring rule-based inference with simulator- based models and demonstrating ho w sev eral existing likelihood-free methods can be re- cov ered as special cases. These approaches are often computationally efficient and come with solid theoretical underpinnings; meanwhile, they introduce a learning-rate or tuning hyperparameter that meaningfully affects the resulting generalized posterior and, thus, inference and uncertainty quantification, motiv ating principled, data-dri ven calibration (Bissiri et al., 2016; W u and Martin, 2023). In this paper , we dev elop a fully Bayesian formulation of noise contrasti ve estima- tion (NCE) for inference in unnormalized models, sidestepping the direct computation of the normalizing constant. Our approach lev erages the principles of NCE (Gutmann and Hyv ¨ arinen, 2010, 2012), which reframes the likelihood construction as a binary classifi- cation problem between observ ed data and artificially generated noise. While NCE has demonstrated considerable success across a range of applications, most e xisting methods estimate parameters by directly maximizing the NCE objecti ve. A fe w works introduce Bayesian or v ariational aspects, such as variational NCE (Rhodes and Gutmann, 2019) and fully v ariational NCE (Zach, 2023), but these still represent parameter uncertainty through variational approximations rather than a fully Bayesian posterior ov er model pa- rameters. T o illustrate the practical benefits of our fully Bayesian NCE framework for un- normalized models, we highlight two k ey adv antages ov er existing approaches. First, full Bayesian inference pro vides principled uncertainty quantification over all model param- eters and enables the natural incorporation of latent variables, allowing for flexible and interpretable probabilistic modeling. Second, while score-based generalized Bayesian inference has sho wn promise for unnormalized models, its reliance on score matching makes information control dif ficult, rendering it ill-suited for data with hierarchical struc- 4 tures or shrinkage priors; our frame work ov ercomes this limitation by operating directly within a proper Bayesian posterior frame work. W e demonstrate these adv antages in two challenging settings: time-varying density estimation, where uncertainty o ver latent tem- poral dynamics is critical, and edge selection for sparse graphical models of multi variate angular data, where structured sparsity must be carefully encoded through the prior . This paper is organized as follo ws. Section 2 details our proposed methodology , which combines NCE with P ´ olya-Gamma data augmentation for ef ficient Bayesian inference, and proposes an appropriate selection of noise distrib utions. In Section 3, we apply this frame work to the problem of time-v arying density estimation. Section 4 demonstrates applicability of the frame work through a second application: learning sparse torus graph models for multiv ariate circular data. Finally , Section 5 concludes the paper with a sum- mary of our findings and a discussion of future work. 2 Methodology 2.1 Unnormalized models and classification likelihood Consider the problem of estimating an unknown parameter (vector) θ ∈ Θ ⊂ R p in a parametric model p ( x | θ ) from observ ations x 1 , . . . , x n . W e focus on models whose likelihood in volv es an intractable normalizing constant. Such a model can be e xpressed as p ( x | θ ) = ˜ p ( x | θ ) Z ( θ ) , Z ( θ ) = Z ˜ p ( x | θ ) dx, where the parameter θ controls the shape or features of the model, ˜ p ( x | θ ) is an unnormal- ized probabilistic model, and Z ( θ ) is the normalizing constant, or partition function, that con verts ˜ p ( x | θ ) into a proper probability distribution. In man y applications, x is high- dimensional and the model induces strong dependence or interactions among components, so Z ( θ ) does not factorize and has no closed-form e xpression; moreo ver , accurate numer- ical ev aluation is prohibitiv ely e xpensi ve. As a result, the lik elihood p ( x | θ ) cannot be computed pointwise for arbitrary θ , and standard Bayesian procedures that require re- 5 peated ev aluation of the lik elihood, or ratios of normalizing constants across parameter v alues, are not directly applicable. In the framew ork of NCE (Gutmann and Hyv ¨ arinen, 2010, 2012), inference is refor- mulated as a binary classification problem that distinguishes between observ ed data and artificially generated noise data x n +1 , . . . , x n + m sampled from a known distrib ution q ( x ) , which we refer to as the noise distribution. Giv en x , the probability that x is recognized as a genuine observ ation is giv en by r ( x | θ , Z ( θ )) = n ˜ p ( x | θ ) / Z ( θ ) n ˜ p ( x | θ ) / Z ( θ ) + mq ( x ) = n ˜ p ( x | θ ) n ˜ p ( x | θ ) + mZ ( θ ) q ( x ) . Then, the likelihood for the binary model to classify the genuine and artificial observations is obtained as L ( θ , Z ( θ ) | X n , X ∗ m ) ≡ n Y i =1 r ( x i | θ , Z ( θ )) n + m Y i = n +1 1 − r ( x i | θ , Z ( θ )) = n + m Y i =1 { n ˜ p ( x | θ ) } s i { mZ ( θ ) q ( x i ) } 1 − s i n ˜ p ( x | θ ) + mZ ( θ ) q ( x i ) , (1) where X n = { x 1 , . . . , x n } is a set of genuine observations, X ∗ m = { x n +1 , . . . , x n + m } is a set of noise observations, and s i = I ( i ≤ n ) is an indicator of genuine observation. This likelihood corresponds to that of a logistic re gression classifier, in which the parameter estimates are obtained by maximizing the ability to discriminate between genuine and artificial observ ations. T o enable Bayesian inference, we place priors on both the parameter θ and the normal- izing constant Z ( θ ) . W e treat Z ( θ ) as a parameter separate from θ and hereafter denote it by Z . This specification of prior distrib utions and the classification likelihood (1) gi ves the posterior distribution of ( θ, Z ) ⊤ as π ( θ , Z | X n , X ∗ m ) ∝ π ( θ , Z ) L ( θ , Z | X n , X ∗ m ) , (2) 6 where π ( θ , Z ) is a prior distribution for ( θ , Z ) ⊤ . Note that the abov e posterior distrib ution is free from the intractable normalizing constant o wing to the classification likelihood (1) and does not include a tuning constant, unlik e generalized Bayesian approaches. W e refer to this procedure as noise-contrasti ve Bayes ( NC-Bayes ). Hence, the posterior compu- tation of (2) can be performed via general algorithms such as Hamiltonian Monte Carlo methods. On the other hand, when the probability model p ( x | θ ) is an exponential-f amily , ef ficient sampling algorithms can be dev eloped by employing P ´ olya–Gamma data aug- mentation (Polson et al., 2013), as described in the subsequent section. 2.2 Gibbs sampler for exponential-families Here, we describe the details of posterior computation for the binary likelihood induced by NCE under an exponential-family , ˜ p ( x | θ ) = h ( x ) exp( η ( x ) ⊤ θ ) . Note that the exponential- family form is highly expressi ve and cov ers a broad range of widely used models, includ- ing generalized linear models such as linear regression, logistic re gression and Poisson regression, as well as Ising models (Besag, 1974), e xponential random graph models (Robins et al., 2007; Hunter and Handcock, 2012) and truncated Gaussian graphical mod- els (Lin et al., 2016). Importantly , the model considered in our experiments also falls within this framew ork. Therefore, the proposed posterior computation applies generi- cally to a wide range of re gression and graphical models whenev er the (unnormalized) likelihood admits an e xponential-family representation. For the model, the binary lik elihood is n + m Y i =1 exp(log n + log h ( x i ) + η ( x i ) ⊤ θ ) s i { mZ q ( x i ) } 1 − s i exp(log n + log h ( x i ) + η ( x i ) ⊤ θ ) + mZ q ( x i ) = n + m Y i =1 ( e ψ i ) s i 1 + e ψ i , (3) where ψ i = log n + log h ( x i ) + η ( x i ) ⊤ θ − log m − log Z − log q ( x i ) . Let us introduce the reparametrization β = − log Z , z ( x i ) = ( η ( x i ) ⊤ , 1) ⊤ , γ = ( θ ⊤ , β ) ⊤ and C ( x i ) = log n − log m + log h ( x i ) − log q ( x i ) so that ψ i = z ( x i ) ⊤ γ + C ( x i ) . Using the integral 7 expression in Polson et al. (2013), the lik elihood (3) can be augmented as n + m Y i =1 exp( z ( x i ) ⊤ γ + C ( x i )) s i 1 + exp( z ( x i ) ⊤ γ + C ( x i )) = 1 2 n + m Y i =1 exp s i − 1 2 ( z ( x i ) ⊤ γ + C ( x i )) × Z ∞ 0 exp − 1 2 ω i ( z ( x i ) ⊤ γ + C ( x i )) 2 p PG ( ω i ) dω i , where p PG ( ω i ) is the density of P ´ olya-Gamma distrib ution, PG(1 , 0) . Since the likelihood is now written as a scale mixture of Gaussians, if we assume a Gaussian prior for γ , the full conditional posterior of γ becomes Gaussian, and the full conditional posterior of ω i becomes a P ´ olya-Gamma distribution. Therefore, posterior samples can be obtained via Gibbs sampling. Suppose that the joint prior for γ is N ( A 0 , B 0 ) . Then, the posterior samples can be generated by Gibbs sampling as follo ws: Algorithm 1. Starting with the initial value of γ , iteratively gener ate a random sample of γ and ω i (auxiliary latent variable) as follows: 1. F or i = 1 , . . . , n + m , generate ω i fr om PG(1 , z ( x i ) ⊤ γ + C ( x i )) . 2. Generate γ fr om N ( A 1 , B 1 ) , wher e B 1 = B − 1 0 + n + m X i =1 ω i z ( x i ) z ( x i ) ⊤ ! − 1 , A 1 = B 1 ( n + m X i =1 s i − 1 2 − ω i C ( x i ) z ( x i ) + B − 1 0 A 0 ) . 2.3 Mar ginalization and adaptive updating of the noise distribution NC-Bayes depends on the choice of the noise distribution q ( x ) in terms of statistical ef ficiency and the stability of estimation. A common practical strategy is to choose q ( x ) to be close to the data-generating distrib ution so that the resulting classification task is well balanced and yields more informati ve comparisons. Recent analyses also indicate that the theoretically optimal noise need not coincide with the data distribution (Gutmann 8 and Hyv ¨ arinen, 2012), but we do not pursue this direction further here. W e begin by choosing q ( x ) to be a fix ed noise distribution such as a uniform distrib ution. The standard algorithm for NC-Bayes uses only a single set of noise data, that is, the noise data is generated in advance and fixed in the analysis. In the Bayesian framework, it is also possible to integrate the noise data out with respect to the generati ve distrib ution q ( · ) , which mak es the posterior inference less sensiti ve to the noise data. Note that X n and X ∗ m are v ectors of observed data and noise data, respecti vely . Algorithm 1 targets the posterior distribution gi ven the noise data: π ( γ | X n , X ∗ m ) ∝ π ( γ ) n Y i =1 exp( z ( x i ) ⊤ γ + C ( x i )) 1 + exp( z ( x i ) ⊤ γ + C ( x i )) n + m Y i = n +1 1 1 + exp( z ( x i ) ⊤ γ + C ( x i )) . On the other hand, to make posterior inference less sensiti ve to the choice of X ∗ m , we can target the posterior of the form: π ( γ | X n ) = Z π ( γ | X n , X ∗ m ) n + m Y i = n +1 q ( x i ) dx i . (4) By generating fresh noise samples at each iteration, this formulation reduces the depen- dence of posterior inference on any particular realization of noise data. T o generate the integrated posterior (4), we use the follo wing sampling algorithm: Algorithm 2. Starting with the initial value of γ , iteratively gener ate random samples of γ and ω i (auxiliary latent variable) as follows: 1. F or i = n + 1 , . . . , n + m , generate noise data x i fr om the gener ative distrib ution q ( · ) , and compute z ( x i ) and C ( x i ) . 2. Generate ω i fr om PG(1 , z ( x i ) ⊤ γ + C ( x i )) for i = 1 , . . . , n , and fr om PG(1 , z ( x i ) ⊤ γ + C ( x i )) for i = n + 1 , . . . , n + m . 9 3. Generate γ fr om N ( A 1 , B 1 ) , wher e B 1 = B − 1 0 + n X i =1 ω i z ( x i ) z ( x i ) ⊤ + n + m X i = n +1 ω i z ( x i ) z ( x i ) ⊤ ! − 1 , A 1 = B 1 ( n X i =1 1 2 − ω i C ( x i ) z ( x i ) + n + m X i = n +1 − 1 2 − ω i C ( x i ) z ( x i ) + B − 1 0 A 0 ) . At each iteration, we redraw a fresh set of noise samples from q ( · ) . This refresh is a practical heuristic to a void committing to a single noise realization. Importantly , this procedure does not marginalize over the noise and thus does not by itself provide a formal variance-reduction or robustness guarantee; the estimates remain conditional on the realized noise. Further , we propose an adaptive method for the noise distribution by updating it within MCMC iterations. Let e γ be the av erage of the posterior samples of γ in some mini-batch of MCMC. Then, we can specify the noise distribution as q α ( x ) = exp { αz ( x ) ⊤ e γ } Z α , Z α = Z exp { αz ( u ) ⊤ e γ } du, where α ∈ (0 , 1] is a shrinkage (tempering) parameter that controls the concentration of the noise distribution. Note that α = 1 leads to the same distribution as the fitted model while α = 0 reduces to the uniform distrib ution. Samples from q α can be readily generated by an importance resampling. T o generate m samples from q α ( x ) , we first generate M ( ≫ m ) samples from a base distribution (e.g. uniform distrib ution), denoted by q 0 ( x ) , and re-sample m units according to the probability proportional to q α ( x ) /q 0 ( x ) . The resulting adapti ve noise update is summarized as follo ws: Algorithm 3. (Adaptive noise update by tempered importance resampling) 1. Generate pr oposals { x ( j ) } M j =1 fr om q 0 ( · ) . 2. F or each j = 1 , . . . , M , compute z ( x ( j ) ) , set e w j = exp { αz ( x ( j ) ) ⊤ e γ } /q 0 ( x ( j ) ) and compute b Z α = M − 1 P M j =1 e w j . 10 3. Resample m points fr om { x ( j ) } M j =1 with pr obabilities e w j / P M l =1 e w l ( j = 1 , . . . , M ) , and denote the r esulting set by X ∗ m = { x n +1 , . . . , x n + m } . 4. Compute q α ( x ( j ) ) = exp { αz ( x ( j ) ) ⊤ e γ } / b Z α . Although the target noise density q α ( x ) in volv es the normalizing constant Z α , Al- gorithm 3 does not require repeated ev aluations of Z α for each resampled point. The normalization is implicitly handled through the importance resampling step, since the probabilities e w j / P M l =1 e w l depend only on ratios of unnormalized weights. The Monte Carlo estimate b Z α is therefore computed once per noise update and reused for ev aluating q α if needed. T o stabilize the performance of the above algorithm, it is useful to moni- tor the ef fectiv e sample size ESS = P M j =1 e w j 2 / P M j =1 e w 2 j during the noise update. The base distribution q 0 can be chosen flexibly , provided that it has support covering the region where q α has non-negligible mass. In our implementation, we use a uniform distribution ov er a data-adaptiv e bounding box for simplicity . 2.4 Contrastive Bayesian infer ence for hierar chical models W e consider a multi-group setting with J groups. F or j = 1 , . . . , J , let X j = { x j 1 , . . . , x j n j } denote observed data and X ∗ j = { x j,n j +1 , . . . , x j,n j + m j } denote artificial noise generated from a possibly group-specific distribution q j ( · ) . W e assume an unnormalized model p j ( x | θ j ) = ˜ p j ( x | θ j ) / Z j , where Z j = R ˜ p j ( x | θ j ) dx . W e focus on the exponential-family case where ˜ p j ( x | θ j ) = h ( x ) exp( η ( x ) ⊤ θ j ) . By reparametrizing β j = − log Z j and defin- ing vectors z ( x j i ) = η ( x j i ) ⊤ , 1 ⊤ and γ j = ( θ ⊤ j , β j ) ⊤ , the classification likelihood for all groups can be expressed as J Y j =1 n j + m j Y i =1 exp( z ( x j i ) ⊤ γ j + C ( x j i )) s j i 1 + exp( z ( x j i ) ⊤ γ j + C ( x j i )) , where C ( x j i ) = log n j − log m j + log h ( x j i ) − log q j ( x j i ) and s j i = I ( i ≤ n j ) . T o facilitate sharing statistical strength across groups, we introduce a hierarchical prior structure. Specifically , we assume that the group-specific parameters { γ j } J j =1 are drawn 11 from a common population distribution: θ j | µ, Σ ∼ N ( µ, Σ) , β j | µ β , σ 2 β ∼ N ( µ β , σ 2 β ) , for j = 1 , . . . , J . W e complete the model by placing standard non-informati ve or weakly informati ve hyperpriors on the population parameters ( µ, Σ , µ β , σ 2 β ) . Posterior computation can be performed ef ficiently via a Gibbs sampler that lev er- ages P ´ olya-Gamma data augmentation, which renders the conditional posteriors for the parameters Gaussian. The complete sampling algorithm proceeds as follo ws: Algorithm 4. Starting fr om initial values of { γ j } J j =1 and the hyperpar ameters, iter atively gener ate posterior samples as follows: 1. F or each gr oup j , either fix the noise data X ∗ j thr oughout all iterations or r e gener ate x j i ∼ q j ( · ) for i = n j + 1 , . . . , n j + m j at each iteration, r ecomputing z ( x j i ) and C ( x j i ) accor dingly . 2. F or all j = 1 , . . . , J and i = 1 , . . . , n j + m j , sample the P ´ olya-Gamma latent variables: ω j i ∼ PG 1 , z ( x j i ) ⊤ γ j + C ( x j i ) . 3. F or each gr oup j = 1 , . . . , J , sample the gr oup-specific parameters γ j = ( θ ⊤ j , β j ) ⊤ fr om its Gaussian conditional posterior N ( A 1 j , B 1 j ) , wher e B 1 j = diag(Σ − 1 , σ − 2 β ) + n j + m j X i =1 ω j i z ( x j i ) z ( x j i ) ⊤ − 1 , A 1 j = B 1 j n n j + m j X i =1 s j i − 1 2 − ω j i C ( x j i ) z ( x j i ) + diag (Σ − 1 , σ − 2 β )( µ ⊤ , µ β ) ⊤ o . 4. Sample the hyperparameter s ( µ, Σ , µ β , σ 2 β ) fr om their r espective full conditional distributions, given the curr ent values of { θ j , β j } J j =1 and the hyperpriors. Assuming standar d conjugate hyperpriors (e.g ., Gaussian for means, In verse-W ishart/Gamma 12 for variances), these updates ar e standard. Under this hierarchical specification, applying NC-Bayes naturally captures between- group heterogeneity via Bayesian partial pooling. The group-specific parameters { θ j , β j } as well as the hyperparameters ( µ, Σ , µ β , σ 2 β ) can be updated within a unified and ef ficient Gibbs sampler using the P ´ olya-Gamma data augmentation. 3 NC-Bayes in Action I: T ime-varying Density Estimation 3.1 Model Suppose that one is interested in modeling time-varying density p t ( x ) = exp( f t ( x )) / Z t for t = 1 , . . . , T , where Z t = R exp( f t ( x )) dx is a normalizing constant and x is a p -dimensional vector . For f 1 ( x ) , . . . , f T ( x ) , we introduce a basis function expansion, f ( x ) = P L l =1 θ tl ϕ l ( x ) , where ϕ 1 ( x ) , . . . , ϕ L ( x ) are basis functions that are common over all the time points. For the vector of coefficient parameters, θ t = ( θ t 1 , . . . , θ tL ) ⊤ , we put the follo wing random-walk prior: θ t | θ t − 1 ∼ N ( θ t − 1 , λI L ) , t = 1 , . . . , T , with θ 0 = 0 , where λ is a scalar parameter that controls the smoothness of the func- tion f t ( x ) . W e assign priors for − log Z t and λ as β t = − log Z t ∼ N (0 , b 0 ) and λ ∼ IG( n 0 , ν 0 ) with fixed v alues b 0 , n 0 and ν 0 . In the subsequent numerical studies, we set b 0 = 10 3 and n 0 = ν 0 = 1 . Let X t = { x t 1 , . . . , x tn t } be a set of observed data at time t . Further , let X ∗ t = { x t,n t +1 , . . . , x t,n t + m t } be a set of noise data generated from a distrib ution q ( · ) . Then, the joint posterior of Θ = ( θ 1 , . . . , θ T ) ⊤ , β = ( β 1 , . . . , β T ) ⊤ , and λ gi ven the observed data X = { X 1 , . . . , X T } and the noise data X ∗ = { X ∗ 1 , . . . , X ∗ T } is expressed as π (Θ , β , λ | X , X ∗ ) ∝ π ( λ, β ) T Y t =1 ϕ ( θ t ; θ t − 1 , λI L ) n t + m t Y i =1 exp(Φ ⊤ ti θ t + β t + C ti ) s it 1 + exp(Φ ⊤ ti θ t + β t + C ti ) , 13 where Φ ti = ( ϕ 1 ( x ti ) , . . . , ϕ L ( x ti )) ⊤ and C ti = log n t − log m t − log q ( x ti ) . Using Algorithm 4, we can dev elop a Gibbs sampler for posterior computation, whose details are gi ven in the Supplementary Material S1.1. 3.2 Simulation study W e demonstrate the proposed method using synthetic data. As the true data generating distribution, we consider the follo wing two scenarios: • (Scenario 1: T ime-varying Gaussian mixture) For t = 1 , . . . , T , each observation x ti ( i = 1 , . . . , n t ) is generated from a tw o-component Gaussian mixture model with fixed mixing proportions, 0 . 4 N 2 ( µ (1) t , Σ (1) ) + 0 . 6 N 2 ( µ (2) t , Σ (2) ) with time- in variant cov ariance matrices given by Σ (1) = diag (0 . 7 , 0 . 2) and Σ (2) = 0 . 5 I 2 . The component means e volv e deterministically over time as µ (1) t = ( − 2 , 0) ⊤ + 4 t (1 , 0) ⊤ /T and µ (2) t = ( − 2 , − 2) ⊤ + 4 t (1 , 1) ⊤ /T . • (Scenario 2: Ring-shaped distribution) For t = 1 , . . . , T , each observation x ti ( i = 1 , . . . , n t ) is constructed by decomposing it into a radial r ti ∼ N ( µ t , σ 2 t ) and an angular component θ ti ∼ U (0 , 2 π ) . Then, the two-dimensional observ ation x ti is generated as x ti = ( r ti cos θ ti , r ti sin θ ti ) ⊤ . The parameter µ t controls the radius of the ring and increases over time, whereas σ t determines the thickness of the ring and gradually decreases. The time-varying parameters µ t and σ 2 t are specified deterministically as µ t = 1 + 2( t − 1) / ( T − 1) and σ t = 0 . 5 − 0 . 2( t − 1) / ( T − 1) . W e set T = 10 and n t = 100 in this study . T o illustrate the behavior of the pro- posed method, we first present a one-shot demonstration using a single realization of the simulated data. Specifically , we apply NC-Bayes to the generated datasets and vi- sually inspect the resulting density estimates over time. For NC-Bayes, we dra w 3,000 posterior samples after discarding the first 2,000 samples as burn-in. As the basis func- tions, we set L = 30 (the number of basis functions) and employ radial basis functions, Φ l ( x ) = exp( −∥ x − κ l ∥ /h ) for l = 1 , . . . , L , where κ l is the center (knot) and h is a 14 bandwidth. Here, the basis centers are determined by applying k -means clustering with L clusters to the pooled observ ations across all time points. The number of noise samples is set to m t = 100 , matching the number of observ ed data points at each time, and we adopt Algorithm 3 with α = 0 . 2 that updates the noise distribution sequentially over time. As a benchmark method, we apply kernel density estimation (KDE) separately at each time point, without sharing information across time. For Scenario 2, the true densities and the corresponding estimates obtained by NC- Bayes and KDE are displayed in Figure 1. From these visual comparisons, we observe that NC-Bayes successfully captures both the temporal e volution of the density and the complex, non-Gaussian structure of the distribution. In contrast, the KDE estimates tend to be o verly smooth, producing more dif fuse density shapes. This behavior can be at- tributed to the fact that KDE is applied independently at each time point and therefore cannot borro w information across dif ferent times. From this perspecti ve, the proposed method benefits from its hierarchical time-series structure, which enables effecti ve shar- ing of statistical strength across time and leads to more stable and coherent density esti- mates. W e next conduct a Monte Carlo study with 200 independent replications to quantita- ti vely assess estimation accuracy and inferential performance. For each replication, we e valuate the absolute error (ABE) of the estimated density , defined as ABE = T − 1 T X t =1 Z D b f t ( x ) − f t ( x ) dx, where D denotes the rectangular domain determined by the range of the whole observed data, b f t ( x ) and f t ( x ) are the estimated and true densities at time t . This integral is ap- proximated via Monte Carlo integration with 500 e valuation points generated from the uniform distrib ution on D , and rescaled versions of b f t and f t , so that their discretized integrals over D equal one. T o ev aluate the performance of uncertainty quantification of NC-Bayes, we e valuated the cov erage probability (CP) and a verage length (AL) of 95% 15 Figure 1: T rue time-v arying density (upper), posterior mean of the time-varying density model fitted by NC-Bayes (middle) and time-wise KDE (lower), for selected four time points under Scenario 2. credible interv als computed at the same 500 ev aluation points. For NC-Bayes, we con- sider three different noise specifications: the adapti ve noise distribution described abov e, a time-in variant common noise distrib ution shared across all time points (N1), and time- specific noise distributions estimated separately for each time (N2). The results for both scenarios are summarized in T able 1. Overall, NC-Bayes achiev es higher estimation accu- racy than KDE in both scenarios, reflecting the adv antage of incorporating a hierarchical structure ov er time. Regarding inference, all three NC-Bayes variants yield empirical cov- erage probabilities close to the nominal le vel. Ho wev er , the av erage length of the credible interv als varies depending on the noise specification, indicating differences in inferen- tial ef ficiency . In particular , learning the noise distrib ution adaptiv ely leads to slightly shorter interv als and impro ved point estimation accurac y compared with the fix ed noise alternati ves. 16 T able 1: Absolute error (ABE) of the density estimation, cov erage probability (CP) and av erage length (AL) of point-wise 95% credible interv als, av eraged o ver 500 e valuation points, obtained from time-wise kernel density estimation (KDE) and NC-Bayes with time-in variant noise (N1), time-dependent noise (N2) and adapti ve noise (aN). These v al- ues are av eraged ov er 200 Monte Carlo replications. Scenario 1 Scenario 2 NC-Bayes NC-Bayes N1 N2 aN KDE N1 N2 aN KDE ABE 0.267 0.270 0.255 0.301 0.350 0.375 0.371 0.581 CP (%) 96.2 95.1 93.9 - 97.2 95.8 94.5 - AL 0.154 0.130 0.113 - 0.123 0.129 0.111 - 3.3 Example: crime incidents in W ashington W e demonstrate the proposed method using publicly av ailable crime incident data in W ashington, DC 1 . The dataset consists of the locations (longitude and latitude) of gun assault incidents observ ed in each month ( T = 12 ) in 2022. The sample size for each month ranges from 60 to 85 observ ations. For this dataset, we applied the proposed time- v arying density model based on NC-Bayes with adapti ve noise updating. W e generated 3,000 posterior samples after discarding the first 2,000 iterations as b urn-in, and used the posterior mean as a point estimate. For comparison, we also fitted kernel density esti- mators (KDEs) separately for each month. In Figure 2, we present the estimated density functions for four selected months. In Figure 2, we show the estimated density functions for four selected months (Jan- uary , May , September and December). The results indicate that NC-Bayes is able to flex- ibly capture complex spatial density structures and their temporal ev olution over months. In particular , the proposed method produces sharp and well-localized density estimates while adapting smoothly to changes in the underlying spatial distrib ution over time. In contrast, the KDE results tend to be ov erly smooth across all months, which is likely due to the relativ ely small sample size a vailable in each period. This behavior is consistent 1 av ailable at https://opendata.dc.gov/datasets/DCGIS::crime- incidents- in- 2 022/about . 17 Figure 2: Estimated spatial density functions for gun assault incident locations in W ash- ington, DC, for four selected months ( t = 1 , 5 , 9 , 12 ) in 2022. The observed points (upper) and the posterior mean of the time-varying density model fitted by NC-Bayes (middle) are compared with the time-wise KDE (lo wer). with the findings from our simulation studies, where KDE exhibited limited ability to recov er complex or rapidly changing density shapes under small-sample settings. 4 NC-Bayes in Action II: Sparse T orus Graph 4.1 Model Angular measurements are naturally modeled as circular random v ariables, whose joint distribution for d variables lies on the d -dimensional torus, the product of d circles. T o describe dependencies among such v ariables, Klein et al. (2020) introduced torus graphs, a class of regular full e xponential-families with pair interactions. Let x = ( x 1 , . . . , x d ) ⊤ be a vector of circular variables, each x j ∈ [0 , 2 π ) . The torus 18 graph density can be expressed as p ( x | ϕ ) ∝ e p ( x | ϕ ) = exp ( d X j =1 ϕ ⊤ j θ ( x j ) + X j 0 (Piironen and V ehtari, 2017). Concretely , for the node-specific parameters we set, for l = 1 , 2 , ϕ j ( l ) ∼ N 0 , 1 c 2 + 1 λ 2 j ( l ) τ 2 ! − 1 ! , λ j ( l ) ∼ C + (0 , 1) , and for the edge-le vel components we regularize the grouped horseshoe by placing the slab at the group scale: for l ′ = 1 , . . . , 4 , ϕ j k ( l ′ ) ∼ N 0 , 1 c 2 + 1 u 2 j k τ 2 ! − 1 ! , u j k ∼ C + (0 , 1) . W ith this approach, we enforce an admissible network structure through a constrained parameterization of ϕ , while retaining edge-wise shrinkage via the group scales u j k . In the follo wing sections, we use the fixed slab width c = 1 . 4.3 Simulation study W e generate a synthetic data set by mimicking the simulation scenario giv en in Klein et al. (2020). Let n = 200 and d = 12 in this study . The d -dimensional circular v ariable x = ( x 1 , . . . , x d ) ⊤ is generated in a sequential way . First, we generate x 1 from the von- Mises distribution with mean direction µ = π / 6 and concentration parameter κ = 2 . F or j = 2 , . . . , d , we generate x j from the von-Mises distribution with mean direction x j − 1 + µ and concentration parameter κ = 2 . Therefore, the true edge structure corresponds to a linear chain in which each node j is connected to node j + 1 , for j = 1 , . . . , d − 1 . For the synthetic data, we applied the proposed method with the re gularized grouped horseshoe prior . Using 2,000 posterior samples after discarding the first 1,000 samples as b urn-in, 21 1 2 3 4 5 6 7 8 9 10 11 12 Figure 3: The detected edges from NC-Bayes. Note that the true edge structure corre- sponds to a linear chain where each node j is connected to node j + 1 , for j = 1 , . . . , d − 1 . we computed the posterior median ˜ ϕ j k ( l ) of ϕ j k ( l ) and re garded the edge ( j, k ) as non-null when at least one of {| ˜ ϕ j k (1) | , . . . , | ˜ ϕ j k (4) |} is larger than 0.100. The detected non-null edges are shown in Figure 3, with edge width proportional to P 4 l =1 | ˜ ϕ j k ( l ) | . From Figure 3, it can be seen that NC-Bayes successfully recovers the true graph structure used in the simulation. In particular , the edges corresponding to the linear chain structure between adjacent nodes are entirely detected, while spurious edges are not identified. Moreo ver , the edge widths are proportional to the frequenc y with which the estimated associations de viate from zero, meaning that edges corresponding to stronger true dependencies tend to be assigned larger estimated v alues more consistently across repetitions and are thus rendered thicker . This result indicates that NC-Bayes is capable of accurately identifying both the presence and the strength of the true conditional dependencies. Additionally , we conducted a comparati ve study to e valuate the performance of the proposed method against a Hyv ¨ arinen score-based Bayesian inference method. This com- peting approach utilizes generalized Bayesian inference (Bissiri et al., 2016), and we call it H -Bayes. Further details on its generalized posterior distribution, which includes a loss scaling parameter w that requires calibration, and the corresponding sampling algorithm, are pro vided in Supplementary Material S1.3. The comparison was performed under the 22 identical simulation scenario described pre viously , where the true graph structure is a lin- ear chain. For both methods, we assessed the performance using a grouped horseshoe prior; for NC-Bayes, we used its re gularized counterpart. W e also assessed the perfor - mance of NC-Bayes under two settings: with and without updating the noise distribution, where the former adopts Algorithm 3 with α = 0 . 2 to update the noise distrib ution at each iteration. In the following ev aluations, performance metrics such as Recall, Precision, and Accuracy are calculated by defining a ’positi ve’ case as a true edge and a ’negati ve’ case as the absence of an edge. T ables 2 and 3 summarize performance under the median-based (V alue = 0 . 100 ) and 90% credible-interval (CI; V alue = 90 . 0 ) edge detection rules, respectiv ely . Under the median-based rule, NC-Bayes without noise updating achieves excellent performance, with recall of 0.996, near -perfect precision of 0.999, and accuracy of 0.999; enabling noise updating further impro ves recall to 0.999 but leads to a notable drop in precision (0.824) and accurac y (0.964), suggesting a trade-off between sensitivity and specificity . Under the CI-based rule, NC-Bayes without noise updating behav es conservati vely , at- taining perfect precision (1.000) but lo wer recall (0.507), yielding an accuracy of 0.918; noise updating substantially improv es recall to 0.847 and accuracy to 0.974, while preci- sion remains near-perfect (0.999); importantly , CP for ϕ remains high under both settings (97.9% and 98.8%, respecti vely), suggesting reliable interv al beha vior . In contrast, H - Bayes exhibits pronounced sensitivity to the loss-scaling parameter w : while it performs reasonably well for a small, well-tuned value ( w = 0 . 2 ), its precision deteriorates sharply as w increases, resulting in dramatic accurac y losses under both detection rules (with se- vere inflation of false positi ves). Under the CI-based rule, its CP collapses as well (45.1% for w = 1 . 0 and 17.9% for w = 5 . 0 ), signaling instability and loss of calibration. Ov erall, these results indicate that NC-Bayes provides a practically reliable alternati ve with stable behavior across settings, whereas H -Bayes is hindered by the f act that w directly influ- ences the effecti ve sparsity of the solution; consequently , within the H -Bayes frame work, it is dif ficult to introduce shrinkage priors in a principled and robust manner because 23 T able 2: Performance metrics (av eraged o ver 100 simulations) using the median-based edge detection rule. An edge is detected if the absolute v alue of posterior median of any associated coef ficient exceeds the threshold (V alue = 0.100). Method w Noise Update V alue Recall Precision Accurac y NC-Bayes – False 0.100 0.996 0.999 0.999 – T rue 0.100 0.999 0.824 0.964 0.2 – 0.100 1.000 0.699 0.928 H -Bayes 1.0 – 0.100 1.000 0.174 0.207 5.0 – 0.100 1.000 0.168 0.173 T able 3: Performance metrics (av eraged ov er 100 simulations) using the CI-based edge detection rule. An edge is detected if its 90% credible interval (V alue = 90.0) does not contain zero. ’CP’ is the co verage probability for ϕ . Method w Noise Update V alue CP (%) Recall Precision Accurac y NC-Bayes – False 90.0 97.9 0.507 1.000 0.918 – T rue 90.0 98.8 0.847 0.999 0.974 0.2 – 90.0 97.2 1.000 0.941 0.989 H -Bayes 1.0 – 90.0 45.1 1.000 0.181 0.248 5.0 – 90.0 17.9 1.000 0.167 0.170 prior-induced sparsity becomes entangled with the choice of w . Additional Monte Carlo experiments under alternativ e scenarios, reported in Supplementary Material S3, lead to conclusions consistent with those presented here. 4.4 Example: multic hannel neural phase angle data As a real-world application, we analyze multichannel neural phase data computed from the local field potential (LFP) in a macaque monke y e xperiment, pre viously studied in Brincat and Miller (2015, 2016) and later re visited using torus graphs in Klein et al. (2020). The data consists of beta-phase values at 300 ms after stimulus onset across 840 trials. A total of 24 signals were simultaneously recorded from the prefrontal cortex (PFC) and the hippocampus (HPC), with the hippocampal electrodes assigned to the dentate gyrus (DG), CA3, and subiculum (Sub). W e use the preprocessed data 2 obtained by applying Morlet wa velets to LFP signals (Klein et al., 2020). Analysis of connecti vity patterns in these data can rev eal whether hippocampal activ- 2 av ailable at https://github.com/natalieklein/torus- graphs . 24 Figure 4: Pairwise scatter plots for one selected channel from each of the four regions (CA3, DG, Sub, and PFC) are sho wn. Each axis represents the oscillatory beta phase (range: − π to π ). ity precedes acti vity in the prefrontal corte x. Moreov er , torus graph models allo w us to distinguish direct from indirect connections more ef fectiv ely than con ventional bi variate coupling measures (Klein et al., 2020). W e use this dataset to illustrate the ability of the proposed method to infer conditional phase dependencies in multichannel neural signals. Figure 4 shows pairwise scatter plots of beta-phase values for one representative channel from each of the four re gions (CA3, DG, Sub, and PFC), illustrating the v arying degrees of phase coupling across regions. For statistical modeling, we treat these observations as independent and identically distributed samples from a 24-dimensional torus graph model. Since the torus graph lik e- lihood is av ailable only up to a parameter -dependent normalizing constant, we perform Bayesian parameter estimation using the NC-Bayes approach. F or comparison, we also apply the H -Bayes procedure, follo wing the same comparativ e frame work as in the sim- 25 ulation study of Section 4.3. In particular , for both methods we use a grouped horseshoe prior , and for NC-Bayes we adopt its regularized counterpart. Moreov er , in the proposed method, to prev ent excessi ve sparsity , the global shrinkage scale is fixed at the v alue τ = p 0 / ( √ n + m (2 d 2 − p 0 )) , where p 0 = ⌊ 1 . 7 d 2 + 0 . 5 ⌋ , following Piironen and V ehtari (2017). This setting incorporates prior information that approximately 85% of all param- eters contain signals. Additionally , the noise distrib ution is updated adaptiv ely within the burn-in period using Algorithm 3 with α = 0 . 2 . For both methods, posterior inference is based on 2,000 posterior samples after discarding the first 1,000 samples as b urn-in. Pos- terior summaries of edge parameters are then used to infer the connecti vity structure: an edge is detected if the absolute value of the posterior median of any associated coef ficient exceeds a threshold of 0.020. Figure 5 displays the estimated network structure obtained from NC-Bayes. The iden- tified graph e xhibits moderate within-region connecti vity and substantial between-region connecti vity , particularly from hippocampal regions to PFC. Specifically , CA3 and Sub sho w direct connections to PFC, while DG connects to both CA3 and Sub . These find- ings are consistent with Klein et al. (2020), who analyzed the same dataset using score matching-based inference and found that PFC-HPC connections were primarily dri ven by PFC-CA3 and PFC-Sub pathways, with no evidence for direct PFC-DG connections. Our NC-Bayes-based analysis recovers this same connectivity pattern, v alidating the method’ s ability to identify biologically meaningful network structures. In contrast, Figure 6 sho ws the es timated network structure using H -Bayes with the learning-rate parameter w = 0 . 2 , which produced the sparsest estimates in our simulation study (Section 4.3 and Supple- mentary Material S3). Despite using this sparsity-promoting setting, the resulting graph is considerably denser than the graph based on NC-Bayes, with substantially more edges detected both within and between re gions. This dense connecti vity pattern makes it more challenging to identify the k ey pathways and distinguish direct connections from spurious associations. The comparison highlights the ability of NC-Bayes to produce more parsi- monious and interpretable network structures, ev en when the competing method is tuned 26 for sparsity . Next, we e valuate the uncertainty re garding edge presence by examining the posterior median and 50% credible intervals. The results are summarized in Figure 7. Examining the top three plots rev eals that for the NC-Bayes approach, edges identified as present based on the median are determined to be absent when assessed using the credible in- terv als. This indicates that when uncertainty is properly ev aluated, there is insuf ficient 1 2 3 4 5 1 2 3 4 5 6 7 8 1 2 3 1 2 3 4 5 6 7 8 CA3 DG Sub PFC Figure 5: Inferred torus graph structure from the phase angle data using NC-Bayes. 1 2 3 4 5 1 2 3 4 5 6 7 8 1 2 3 1 2 3 4 5 6 7 8 CA3 DG Sub PFC Figure 6: Inferred torus graph structure from the phase data using H -Bayes with w = 0 . 2 . 27 e vidence for the previously estimated netw ork structure. In contrast, for the H -Bayes ap- proach, edge determinations based on the median coincide with those based on the cred- ible intervals. Howe ver , the interv al lengths vary substantially depending on the learning rate w , with uncertainty decreasing as w increases. Since the results in Section 4.3 and Supplementary Material S3 demonstrate that larger values of w facilitate the estimation of denser network structures, the H -Bayes approach may erroneously claim high confidence in incorrect inference results. Figure 7: Posterior medians and 50% credible interv als for the selected edge coefficients in the torus graph model fitted to the phase-angle data. The panels compare the NC-Bayes approach (top left) with H -Bayes using the weight parameter w set to 0 . 2 (top right), 1 (bottom left), and 5 (bottom right). Circles indicate posterior medians and horizontal segments indicate 50% credible interv als. In each panel, the top three plots show edges selected from re gions identified as associated in Klein et al. (2020), while the bottom two plots sho w edges selected from regions identified as not associated. 28 5 Discussion While the main text focuses on the MCMC-based perspectiv e of NC-Bayes, a further connection to likelihood-free inference is worth discussing. A growing body of work has e xplored classification-based approaches, including classifier-based ABC (Gutmann et al., 2018; W ang et al., 2022) and methods that plug likelihood-ratio estimators into the Metropolis–Hastings algorithm (Kaji and Ro ˇ cko v ´ a, 2023). These approaches share with NC-Bayes the motiv ation of a voiding direct e valuation of an intractable quantity , though they primarily target simulator-based models where the likelihood itself is unav ailable, whereas NC-Bayes targets unnormalized models where the likelihood is known up to a normalizing constant. Unlike ABC, which introduces approximation error through sum- mary statistics and tolerance thresholds, NC-Bayes requires neither , yielding a posterior free from these sources of approximation. Moreov er , the P ´ olya-Gamma data augmenta- tion yields closed-form Gibbs conditionals, an analytical tractability that these likelihood- free approaches do not generally of fer . Despite these advantages, NC-Bayes is not without limitations. Since the NC-Bayes approach in volv es a logistic regression likelihood, it becomes considerably challenging in high-dimensional settings. In Section 4.2, we introduced a method using the regular- ized horseshoe to address a problem that arises in high-dimensional logistic regression: regression coef ficients identified as signals can take on extremely lar ge v alues. This issue is mitigated by restricting the tail weight, and we employed this approach in our simula- tions and data analysis. Howe ver , reducing the tail weight creates a problem where the distribution struggles to escape from the neighborhood of zero, which may lead to exces- si vely sparse estimates. Therefore, selecting an appropriate prior distrib ution that enables proper shrinkage estimation in high-dimensional logistic re gression remains an important challenge. A ke y direction for future work is the dev elopment of methods for selecting the noise distribution. While we hav e considered fixed noise distributions and practical adaptation 29 schemes within the MCMC framew ork in this paper , a more systematic strategy is needed. According to Chehab et al. (2022), the theoretically optimal noise distribution need not coincide with the data distribution in the frequentist framework. Similarly , deriving a theoretically optimal noise selection method for the NC-Bayes approach remains an im- portant direction. Additionally , while we estimate the data distribution density during MCMC using importance resampling, an alternati ve approach would be to pre-estimate the data distribution using a nonparametric high-precision density estimator and then use it as the noise distribution (Uehara et al., 2020). Acknowledgement This work is partially supported by Japan Society for the Promotion of Science (KAK- ENHI) grant numbers 24K21420 and 25H00546. T akeru Matsuda was supported by JSPS KAKENHI Grant Numbers 21H05205, 22K17865 and 24K02951 and JST Grant Num- bers JPMJMS2024 and JPMJ AP25B1. Refer ences Andrieu, C. and G. O. Roberts (2009). The pseudo-marginal approach for efficient Monte Carlo computations. The Annals of Statistics 37 (2), 697–725. Publisher: Institute of Mathematical Statistics. Besag, J. (1974). Spatial interaction and the statistical analysis of lattice systems. Journal of the Royal Statistical Society: Series B (Methodological) 36 (2), 192–225. Bissiri, P . G., C. C. Holmes, and S. G. W alk er (2016). A general frame work for updat- ing belief distributions. Journal of the Royal Statistical Society Series B: Statistical Methodology 78 (5), 1103–1130. 30 Brincat, S. L. and E. K. Miller (2015, April). Frequency-specific hippocampal-prefrontal interactions during associati ve learning. Natur e Neur oscience 18 (4), 576–581. Brincat, S. L. and E. K. Miller (2016). Prefrontal cortex networks shift from external to internal modes during learning. J ournal of Neur oscience 36 (37), 9739–9754. Carv alho, C. M., N. G. Polson, and J. G. Scott (2009). Handling sparsity via the horse- shoe. In Artificial intelligence and statistics , pp. 73–80. PMLR. Carv alho, C. M., N. G. Polson, and J. G. Scott (2010). The horseshoe estimator for sparse signals. Biometrika , 465–480. Chehab, O., A. Gramfort, and A. Hyv ¨ arinen (2022, 01–05 Aug). The optimal noise in noise-contrasti ve learning is not what you think. In J. Cussens and K. Zhang (Eds.), Pr oceedings of the Thirty-Eighth Confer ence on Uncertainty in Artificial Intelligence , V olume 180 of Pr oceedings of Machine Learning Resear ch , pp. 307–316. PMLR. Gutmann, M. and A. Hyv ¨ arinen (2010). Noise-contrasti ve estimation: A new estima- tion principle for unnormalized statistical models. In Pr oceedings of the thirteenth international confer ence on artificial intelligence and statistics , pp. 297–304. JMLR W orkshop and Conference Proceedings. Gutmann, M. U., R. Dutta, S. Kaski, and J. Corander (2018, March). Likelihood-free inference via classification. Statistics and Computing 28 (2), 411–425. Gutmann, M. U. and A. Hyv ¨ arinen (2012). Noise-contrasti ve estimation of unnormalized statistical models, with applications to natural image statistics. Journal of Machine Learning Resear ch 13 (1), 307–361. Hunter , D. R. and M. S. Handcock (2012). Inference in curved exponential family models for networks. J ournal of Computational and Graphical Statistics 15 (3), 565–583. Hyv ¨ arinen, A. (2005). Estimation of non-normalized statistical models by score matching. J ournal of Machine Learning Resear ch 6 (4). 31 Hyv ¨ arinen, A., I. Khemakhem, and R. Monti (2024). Identifiability of latent-variable and structural-equation models: from linear to nonlinear . Annals of the Institute of Statistical Mathematics 76 (1), 1–33. Inouye, D. I., E. Y ang, G. I. Allen, and P . Ravikumar (2017). A re view of multiv ariate dis- tributions for count data deri ved from the poisson distrib ution. WIREs Computational Statistics 9 (3), e1398. Je wson, J. and D. Rossell (2022). General Bayesian loss function selection and the use of improper models. J ournal of the Royal Statistical Society Series B: Statistical Method- ology 84 (5), 1640–1665. Kaji, T . and V . Ro ˇ cko v ´ a (2023). Metropolis–hastings via classification. Journal of the American Statistical Association 118 (544), 2533–2547. Klein, N., J. Orellana, S. L. Brincat, E. K. Miller , and R. E. Kass (2020). T orus graphs for multi variate phase coupling analysis. The annals of applied statistics 14 (2), 635. Lenk, P . J. (1988). The logistic normal distribution for Bayesian, nonparametric, predic- ti ve densities. J ournal of the American Statistical Association 83 (402), 509–516. Lin, L., M. Drton, and A. Shojaie (2016). Estimation of high-dimensional graphical models using regularized score matching. Electr onic journal of statistics 10 (1), 806. L yne, A.-M., M. Girolami, Y . Atchad ´ e, H. Strathmann, and D. Simpson (2015, Nov em- ber). On Russian Roulette estimates for Bayesian inference with doubly-intractable likelihoods. Statistical Science 30 (4), 443–467. Marin, J.-M., P . Pudlo, C. P . Robert, and R. J. Ryder (2012, No vember). Approximate Bayesian computational methods. Statistics and Computing 22 (6), 1167–1180. Matsubara, T ., J. Knoblauch, F .-X. Briol, and C. J. Oates (2022). Robust generalised Bayesian inference for intractable likelihoods. Journal of the Royal Statistical Society Series B: Statistical Methodology 84 (3), 997–1022. 32 Matsubara, T ., J. Knoblauch, F .-X. Briol, and C. J. Oates (2024). Generalized Bayesian inference for discrete intractable likelihood. Journal of the American Statistical Asso- ciation 119 (547), 2345–2355. Matsuda, T . and A. Hyv ¨ arinen (2019). Estimation of non-normalized mixture models. In The 22nd International Confer ence on Artificial Intelligence and Statistics , pp. 2555– 2563. PMLR. Matsuda, T ., M. Uehara, and A. Hyvarinen (2021). Information criteria for non- normalized models. J ournal of Machine Learning Resear ch 22 (158), 1–33. Nishimura, A. and M. A. Suchard (2023). Shrinkage with shrunken shoulders: Gibbs sampling shrinkage model posteriors with guaranteed con ver gence rates. Bayesian Analysis 18 (2), 367 – 390. Pacchiardi, L., S. Khoo, and R. Dutta (2024). Generalized Bayesian likelihood-free infer- ence. Electr onic Journal of Statistics 18 (2), 3628–3686. Park, J. and M. Haran (2018). Bayesian inference in the presence of intractable normal- izing functions. J ournal of the American Statistical Association 113 (523), 1372–1390. Park, J. and M. Haran (2020). A function emulation approach for doubly intractable distributions. J ournal of Computational and Graphical Statistics 29 (1), 66–77. Piironen, J. and A. V ehtari (2017). Sparsity information and regularization in the horse- shoe and other shrinkage priors. Electr onic Journal of Statistics 11 (2), 5018 – 5051. Polson, N. G., J. G. Scott, and J. W indle (2013). Bayesian inference for logistic mod- els using P ´ olya–Gamma latent v ariables. Journal of the American statistical Associa- tion 108 (504), 1339–1349. Rhodes, B. and M. U. Gutmann (2019, 16–18 Apr). V ariational noise-contrasti ve esti- mation. In K. Chaudhuri and M. Sugiyama (Eds.), Pr oceedings of the T wenty-Second 33 International Confer ence on Artificial Intellig ence and Statistics , V olume 89 of Pr o- ceedings of Machine Learning Resear ch , pp. 2741–2750. PMLR. Robins, G., P . Pattison, Y . Kalish, and D. Lusher (2007). An introduction to exponential random graph (p*) models for social networks. Social Networks 29 (2), 173–191. Sukeda, I. and T . Matsuda (2026). Sparse graphical modeling for electrophysiological phase-based connecti vity using circular statistics. Neural Computation . T okdar , S. T . and J. K. Ghosh (2007). Posterior consistency of logistic Gaussian process priors in density estimation. Journal of statistical planning and infer ence 137 (1), 34– 42. Uehara, M., T . Kanamori, T . T akenouchi, and T . Matsuda (2020, 26–28 Aug). A unified statistically ef ficient estimation frame work for unnormalized models. In S. Chiappa and R. Calandra (Eds.), Pr oceedings of the T wenty Thir d International Confer ence on Artificial Intelligence and Statistics , V olume 108 of Pr oceedings of Machine Learning Resear ch , pp. 809–819. PMLR. W ang, Y ., T . Kaji, and V . Rockov a (2022). Approximate Bayesian computation via clas- sification. J ournal of Machine Learning Resear ch 23 (350), 1–49. W u, P .-S. and R. Martin (2023, March). A Comparison of Learning Rate Selection Meth- ods in Generalized Bayesian Inference. Bayesian Analysis 18 (1), 105–132. Zach, C. (2023). Fully v ariational noise-contrastiv e estimation. In Image Analysis: 22nd Scandinavian Confer ence, SCIA 2023, Sirkka, F inland, April 18–21, 2023, Pr oceed- ings, P art II , Berlin, Heidelberg, pp. 175–190. Springer -V erlag. 34 Supplementary Material f or “Contrastive Bayesian Inference f or Unnormalized Models” This Supplementary Material provides details of the posterior sampling algorithms and additional simulations. S1 Details of posterior computation algorithms S1.1 T ime-varying Density estimation Using data augmentation, the posterior samples can be generated via a si mple Gibbs sam- pler , where the step-by-step algorithm is described below . 1. (Sampling from PG latent v ariable) For t = 1 , . . . , T and i = 1 , . . . , n t + m t , generate ω ti from PG(1 , Φ ⊤ ti θ t + β t + C ti ) . 2. (Sampling from θ t ) For t = 1 , . . . , T , generate θ t from N ( e B θ t e A θ t , e B θ t ) , where e B θ t = b 0 t I L + n t + m t X i =1 ω ti Φ ti Φ ⊤ ti ! − 1 , e A θ t = a 0 t + n t + m t X i =1 n s it − 1 2 − ω ti ( β t + C ti ) o Φ ti with b 0 t = 2 λ − 1 and a 0 t = λ − 1 ( θ t − 1 + θ t +1 ) for t = 1 , . . . , T − 1 and b 0 T = λ − 1 and a 0 T = λ − 1 θ t − 1 . 3. (Sampling from β t ) For t = 1 , . . . , T , generate β t from N ( e B β t e A β t , e B β t ) , where e B β t = b − 1 0 + n t + m t X i =1 ω ti ! − 1 , e A β t = n t + m t X i =1 n s it − 1 2 − ω ti (Φ ⊤ ti θ t + C ti ) o Φ ti . 4. (Sampling from λ ) Generate λ from IG( n 1 , ν 1 ) , where n 1 = n 0 + T L/ 2 and ν 1 = ν 0 + P T t =1 P L l =1 ( θ tl − θ t − 1 ,l ) 2 / 2 . 1 S1.2 Sparse T orus Gr aph The posterior distribution from (4) is sampled using a Gibbs sampler that iterates through four main steps. The complete Gibbs sampler is described as follo ws: 1. (Sampling of ω i ) For i = 1 , . . . , n + m , sample the P ´ olya-Gamma latent v ariables from: ω i ∼ PG(1 , ψ i + C ( x i )) . 2. (Sampling of γ ) Conditional on the latent variables ω i and the prior hyperparame- ters, sample γ from its Gaussian full conditional N ( ˜ b γ , ˜ B γ ) , where ˜ B γ = B − 1 γ 0 + n + m X i =1 ω i z ( x i ) z ( x i ) ⊤ ! − 1 , ˜ b γ = ˜ B γ ( n + m X i =1 s i − 1 2 − ω i C ( x i ) z ( x i ) + B − 1 γ 0 b γ 0 ) . Here, the prior cov ariance matrix B γ 0 is determined by the shrinkage prior structure detailed in the ne xt step, and we set the prior mean to zero, b γ 0 = 0 , so that B − 1 γ 0 b γ 0 v anishes in the mean update. Also, under a regularized horseshoe prior , we add c − 2 to the diagonal of the prior precision matrix B − 1 γ 0 , where c is the finite slab width; in our experiments, we set c = 1 . 3. (Sampling of Hyperparameters) Depending on the chosen prior , update the shrink- age hyperparameters. (a) For the standard horseshoe prior: Let ϕ k be an element of ϕ and λ k is its local shrinkage parameter for k = 1 , . . . , 2 d 2 . - Sample the local shrinkage components: λ 2 k | ϕ k , τ 2 , ν k ∼ In v-Gamma 1 , ϕ 2 k 2 τ 2 + 1 ν k , ν k | λ 2 k ∼ In v-Gamma 1 , 1 + 1 λ 2 k . 2 - Sample the global shrinkage components: τ 2 | { ϕ k , λ 2 k } k , ξ ∼ In v-Gamma 2 d 2 + 1 2 , 1 2 2 d 2 X k =1 ϕ 2 k λ 2 k + 1 ξ ! , ξ | τ 2 ∼ In v-Gamma 1 , 1 + 1 τ 2 . (b) For the grouped horseshoe prior on interaction parameters: The main ef fects ϕ j ( l ) are updated as in (a). F or interaction parameters ϕ j k ( l ′ ) : - Sample the group-le vel shrinkage components for each edge ( j, k ) : u 2 j k | − ∼ In v-Gamma 5 2 , 1 2 4 X l ′ =1 ϕ 2 j k ( l ′ ) τ 2 + 1 t j k ! , t j k | u 2 j k ∼ In v-Gamma 1 , 1 + 1 u 2 j k ! . - Sample the global shrinkage components: τ 2 | − ∼ In v-Gamma 2 d 2 + 1 2 , 1 2 X j,l ϕ 2 j ( l ) λ 2 j ( l ) + X j 0 is a loss scaling parameter that controls the influence of the data on the posterior . W e place a conjugate Gaussian prior on ϕ , which, combined with the quadratic form of L ( ϕ ) , results in a Gaussian posterior . The MCMC algorithm for this method in volves the follo wing steps: 1. (Pr e-computation) Gi ven the data X n , first compute the matrices b Γ and b H based on the deri vati ves of the suf ficient statistics, follo wing the formulation in Klein et al. (2020). This is done once before the MCMC sampling. 2. (MCMC Iteration) At each iteration, sample the parameters and hyperparameters. (a) (Sampling of ϕ ) Let the prior for ϕ be N (0 , B ϕ 0 ) , where B ϕ 0 is determined by the horseshoe shrinkage structure. Sample ϕ from its Gaussian full conditional posterior N ( ˜ b ϕ , ˜ B ϕ ) , where ˜ B − 1 ϕ = B − 1 ϕ 0 + nw b Γ , ˜ b ϕ = ˜ B ϕ ( nw b H ) . (b) (Sampling of Hyperparameters) Sample the hyperparameters for the stan- dard or grouped horseshoe prior . The full conditional distributions and update steps for these parameters are identical to those described in Step 3(a) and 3(b) of the NC-Bayes-based Gibbs sampler . S2 Additional Simulation Results on Time-V arying Density Estima- tion Here, we pro vide the true time-varying density and its point estimate by NC-Bayes and time-wise kernel density estimation. The results are given in Figure S1. 4 Figure S1: T rue time-v arying density (upper), posterior mean of the time-varying density model fitted by NC-Bayes (middle) and time-wise KDE (lower), for selected four time points under Scenario 2. S3 Additional Simulation Results on Sparse T orus Graph In addition to the simulation study presented in the main te xt, we conducted two further experiments with settings based on (Sukeda and Matsuda, 2026) to assess the performance of NC-Bayes under dif ferent graph structures and dimensionalities. S3.1 Scenario 1: 5-Node Cycle Gr aph This scenario was designed to ev aluate the methods’ performance on a small, well-defined sparse graph structure. • Graph Structur e : W e used a fixed graph with d = 5 nodes. The true graph is a 5-cycle (a pentagonal structure). The specific edges defined in the simulation code are (1,3), (1,4), (2,4), (2,5), and (3,5). • T rue Parameter V alues : The main ef fect parameters were set to zero, i.e., ϕ j = (0 , 0) ⊤ for all j = 1 , . . . , 5 . For the 5 pairs of nodes ( j, k ) connected by an edge, the 5 interaction parameters were set to ϕ j k = (0 . 3 , 0 . 3 , 0 . 3 , 0 . 3) ⊤ . All other interaction parameters for non-connected pairs were set to zero. • Data Generation Algorithm : A total of n = 1 , 000 samples were generated from the true torus graph model using rejection sampling. Specifically , we first com- puted a uniform upper bound U max for the unnormalized log-density o ver the entire domain. W e then repeatedly dre w candidate samples x from a uniform distribu- tion on [0 , 2 π ) 5 and accepted each candidate with probability exp( U ( x ) − U max ) , where U ( x ) is the unnormalized log-density at x . This process was continued until n = 1 , 000 samples were accepted. The results for this scenario are presented in T able S1 and T able S2. Across both edge detection rules, a consistent pattern emerges reg arding the relativ e stability of the two methods. NC-Bayes achie ves near -perfect edge recov ery in this setting: under both the median-based rule (V alue = 0 . 100 ) and the CI-based rule (V alue = 90 . 0 ), it attains essentially perfect recall, precision, and accuracy regardless of whether the noise distri- bution is updated. In contrast, H -Bayes again e xhibits a pronounced sensiti vity to the loss-scaling parameter w . When w is small ( w = 0 . 2 ), its performance matches that of NC-Bayes, yielding perfect recall, precision, and accuracy under both detection rules. Ho wev er , as w increases, the method becomes progressiv ely less sparse, leading to a marked deterioration in precision and, consequently , accurac y , despite recall remaining at 1.000 throughout. This de gradation is particularly e vident under the CI-based rule, where CP also drops substantially as w grows, indicating increasingly unstable interv al behavior . Overall, these tables reinforce that NC-Bayes pro vides uniformly reliable performance in this scenario, whereas H -Bayes can be highly ef fective only for carefully tuned, small v alues of w and deteriorates rapidly for moderate-to-large w . S3.2 Scenario 2: 30-Dimensional Er d ¨ os-R ´ enyi Graph This scenario was designed to assess performance in a higher-dimensional setting with a more complex, random structure. 6 T able S1: Performance metrics for 5-dimensional torus graphs (Median criterion), av er- aged ov er 100 simulations. Method w Noise Update V alue Recall Precision Accuracy NC-Bayes – False 0.100 1.000 1.000 1.000 – T rue 0.100 1.000 0.996 0.998 0.2 – 0.100 1.000 1.000 1.000 H -Bayes 1.0 – 0.100 1.000 0.893 0.940 5.0 – 0.100 1.000 0.820 0.890 T able S2: Performance metrics for 5-dimensional torus graphs (CI criterion), a veraged ov er 100 simulations. Method w Noise Update V alue CP (%) Recall Precision Accuracy NC-Bayes – False 90.0 99.7 1.000 1.000 1.000 – T rue 90.0 99.0 1.000 1.000 1.000 0.2 – 90.0 98.7 1.000 1.000 1.000 H -Bayes 1.0 – 90.0 82.9 1.000 0.776 0.856 5.0 – 90.0 43.6 1.000 0.513 0.525 • Graph Structure : W e used a fixed instance of an Erd ¨ os-R ´ enyi random graph G ( d, p e ) with d = 30 nodes and an edge probability of p e = 0 . 1 . The graph was generated once using a fix ed seed and this same structure was used for all 100 Monte Carlo simulations. • T rue Parameter V alues : All main effect parameters ϕ j were set to zero. For pairs of nodes ( j, k ) connected by an edge in the generated graph, the interaction param- eters were set to ϕ j k = (0 . 3 , 0 . 3 , 0 , 0) ⊤ , corresponding to only rotational depen- dence. All other interaction parameters were zero. • Data Generation Algorithm : A total of n = 1 , 000 samples were generated from the true model using a Gibbs sampler . Starting from a random initial state x (0) ∈ [0 , 2 π ) 30 , we iterativ ely sampled each node’ s value x k from its full con- ditional distribution, which is a von-Mises distribution whose parameters depend on the current values of its neighbors in the graph. W e ran the Gibbs sampler for 3,000 iterations, discarded the first 2,000 iterations as burn-in, and collected the subsequent 1,000 samples to form the dataset. 7 T able S3: Performance metrics for Erd ¨ os-R ´ enyi random graph ( d = 30 ) (Median crite- rion), av eraged ov er 100 simulations. Method w Noise Update V alue Recall Precision Accuracy NC-Bayes – False 0.100 0.991 0.436 0.878 – T rue 0.100 0.917 0.957 0.988 0.2 – 0.100 0.997 1.000 1.000 H -Bayes 1.0 – 0.100 1.000 0.660 0.952 5.0 – 0.100 1.000 0.303 0.783 The results for this scenario are summarized in T able S3 and T able S4. Compared with the star-graph case, this Erd ¨ os–R ´ enyi setting re veals a clearer recall–precision ten- sion for NC-Bayes. W ithout updating the noise distribution, NC-Bayes tends to fav or sensiti vity (high recall) at the expense of specificity (moderate-to-low precision); once noise updating is enabled, false discoveries are substantially reduced, yielding a sharp gain in precision and a corresponding impro vement in ov erall accuracy , with only a mod- erate loss in recall. The same qualitati ve behavior appears under both the median-based and CI-based detection rules. In addition, under the CI-based rule, CP remains high for NC-Bayes under both noise-update settings, indicating that uncertainty quantification is well-calibrated and not materially af fected by noise updating. By contrast, H -Bayes con- tinues to depend strongly on the loss-scaling choice w . At a small v alue ( w = 0 . 2 ), it can achie ve near -ideal edge recov ery under both detection rules, b ut increasing w leads to increasingly dense selections: recall stays high while precision (and hence accuracy) degrades rapidly . This loss of sparsity is most severe under the CI-based rule, where CP also falls markedly as w gro ws, reflecting unstable interv al behavior . Overall, these results suggest that NC-Bayes deli vers consistently reliable inference across detection rules (and stable CP under the CI rule), whereas H -Bayes is competitiv e only when w is carefully kept small and becomes unreliable for moderate-to-lar ge w . 8 T able S4: Performance metrics for Erd ¨ os-R ´ enyi random graph ( d = 30 ) (CI criterion), av eraged ov er 100 simulations. Method w Noise Update V alue CP (%) Recall Precision Accuracy NC-Bayes – False 90.0 97.5 0.937 0.838 0.977 – T rue 90.0 99.1 0.807 0.994 0.981 0.2 – 90.0 99.6 0.984 1.000 0.998 H -Bayes 1.0 – 90.0 87.3 1.000 0.269 0.744 5.0 – 90.0 37.1 1.000 0.099 0.143 9

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

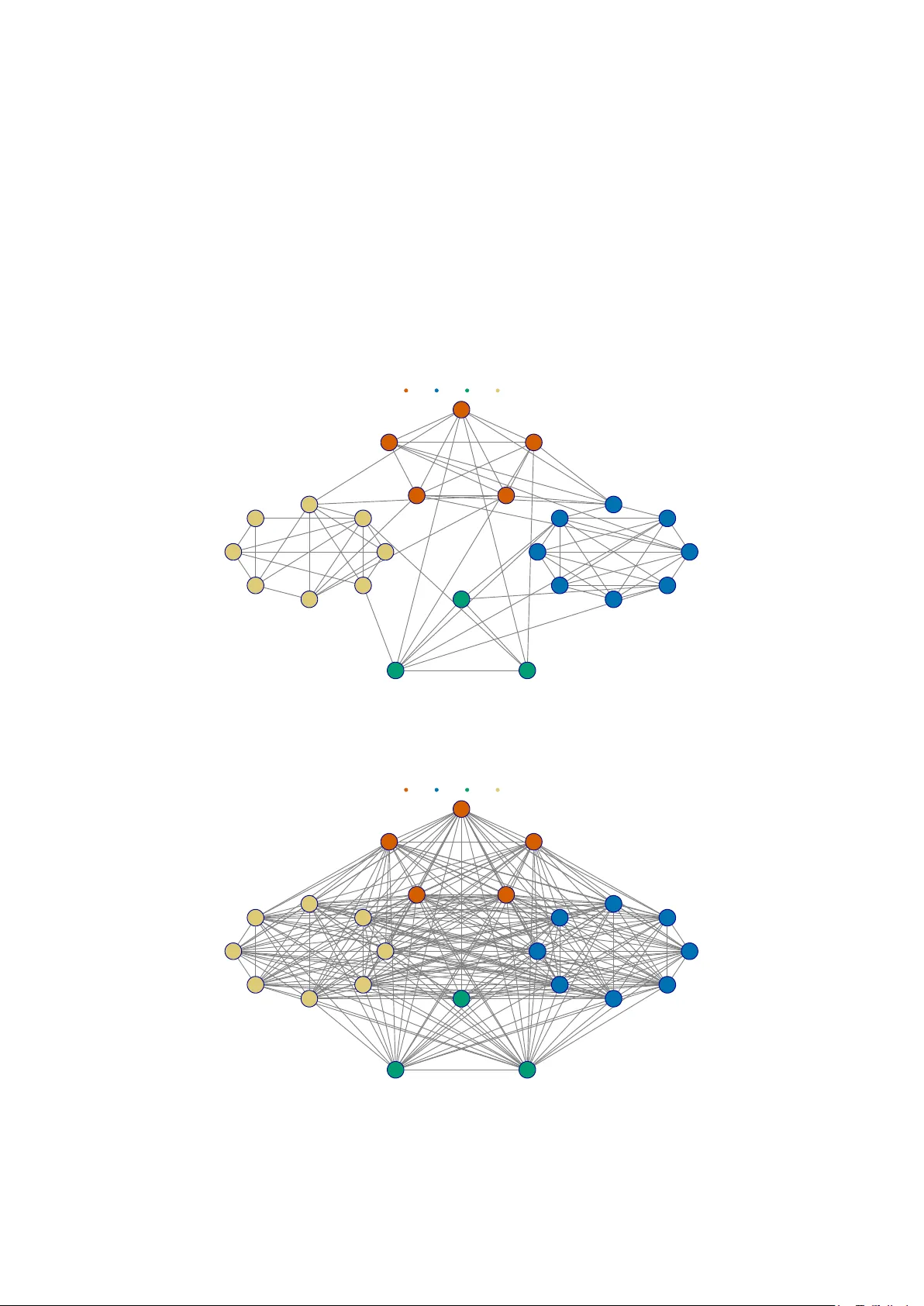

Leave a Comment