Point Cloud as a Foreign Language for Multi-modal Large Language Model

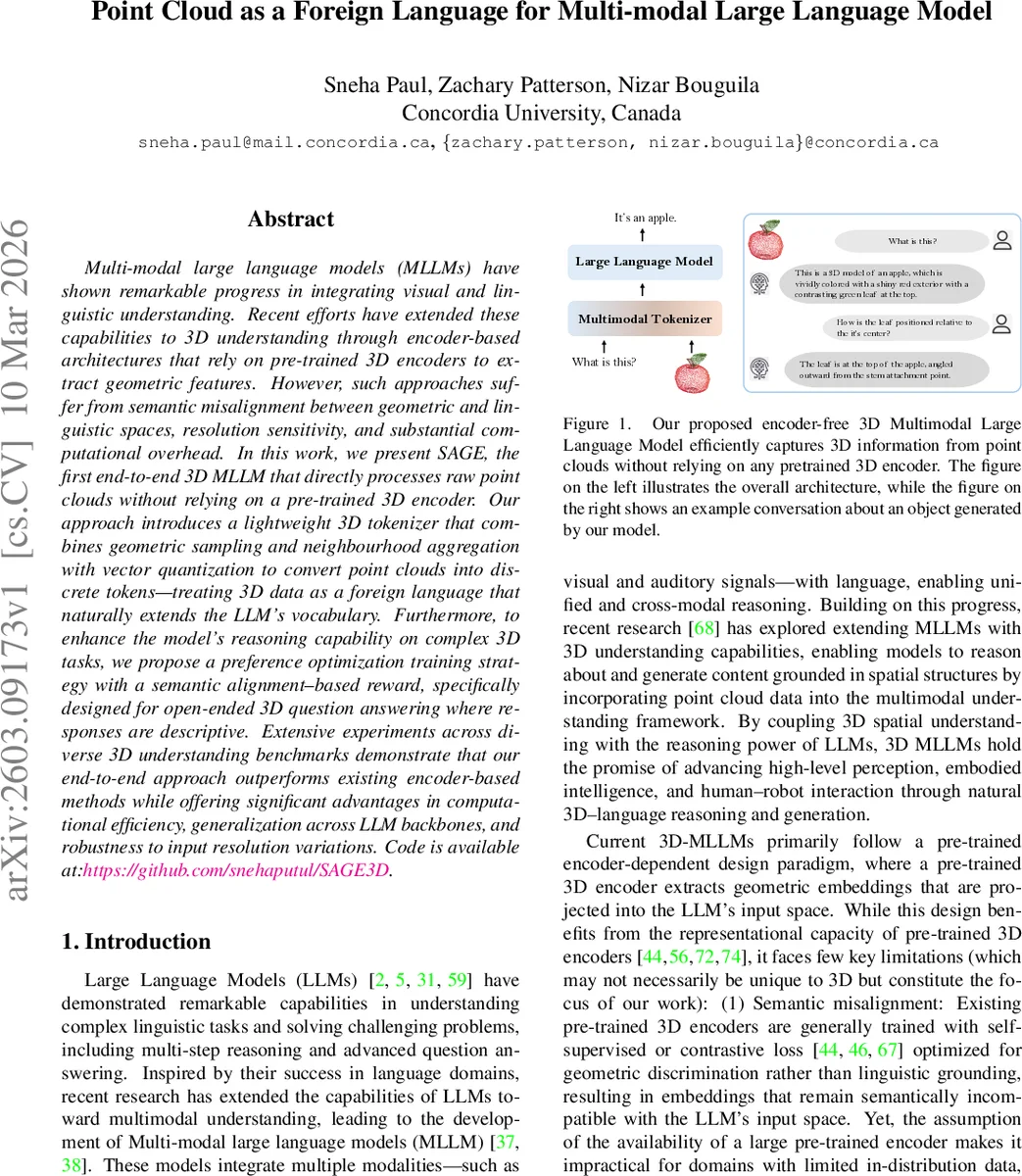

Multi-modal large language models (MLLMs) have shown remarkable progress in integrating visual and linguistic understanding. Recent efforts have extended these capabilities to 3D understanding through encoder-based architectures that rely on pre-trained 3D encoders to extract geometric features. However, such approaches suffer from semantic misalignment between geometric and linguistic spaces, resolution sensitivity, and substantial computational overhead. In this work, we present SAGE, the first end-to-end 3D MLLM that directly processes raw point clouds without relying on a pre-trained 3D encoder. Our approach introduces a lightweight 3D tokenizer that combines geometric sampling and neighbourhood aggregation with vector quantization to convert point clouds into discrete tokens–treating 3D data as a foreign language that naturally extends the LLM’s vocabulary. Furthermore, to enhance the model’s reasoning capability on complex 3D tasks, we propose a preference optimization training strategy with a semantic alignment-based reward, specifically designed for open-ended 3D question answering where responses are descriptive. Extensive experiments across diverse 3D understanding benchmarks demonstrate that our end-to-end approach outperforms existing encoder-based methods while offering significant advantages in computational efficiency, generalization across LLM backbones, and robustness to input resolution variations. Code is available at: github.com/snehaputul/SAGE3D.

💡 Research Summary

The paper introduces SAGE, the first end‑to‑end 3‑dimensional multimodal large language model (3D‑MLLM) that processes raw point clouds without any pre‑trained 3D encoder. Traditional 3D‑MLLMs rely on heavy encoders (e.g., Point‑BERT, 3D‑LLaVA) to extract geometric embeddings, then project them into the language model’s embedding space. This pipeline suffers from three major drawbacks: (1) semantic misalignment because encoder features are learned for geometric discrimination rather than linguistic grounding, (2) resolution sensitivity due to fixed‑size point inputs, and (3) substantial computational overhead from the encoder itself.

SAGE eliminates these issues by treating a point cloud as a “foreign language”. The core component is a lightweight, trainable 3D tokenizer that converts point clouds into discrete tokens compatible with the LLM’s vocabulary. The tokenizer first applies Farthest Point Sampling (FPS) to select a set of representative centroids. For each centroid, K‑Nearest‑Neighbour (KNN) grouping gathers a local neighborhood, preserving fine‑grained geometry. A local geometry aggregation module then projects raw point features (coordinates, optional RGB) into a higher‑dimensional geometric space, adds relative positional embeddings, and applies max‑pooling to obtain a compact representation Z for each neighborhood.

Z is linearly projected into the LLM’s embedding dimension using a learnable matrix W, yielding continuous vectors H. To bridge the continuous‑to‑discrete gap, vector quantization (VQ) with a learnable codebook C is employed. Each projected vector h_i is replaced by its nearest codebook entry e_k, producing a sequence of quantized tokens H_q. The VQ loss consists of a codebook update term and a commitment term, ensuring the codebook adapts to the geometry while the projected features stay close to quantized vectors.

The quantized 3D tokens are concatenated with special markers <p_start> and <p_end> and then with textual tokens, forming a mixed‑modality input sequence for a decoder‑only LLM (e.g., LLaMA, Vicuna). The entire system is trained in three stages: (1) a warm‑up stage for the tokenizer using next‑token prediction (NTP) and VQ loss, (2) instruction tuning of the full model on multimodal prompts, and (3) a reinforcement‑learning based preference optimization stage.

The preference optimization addresses the challenge that most 3D question‑answering tasks are open‑ended and lack binary correctness signals. The authors design a semantic alignment reward that measures cosine similarity between the generated answer’s text embedding (e.g., CLIP‑Text) and a human‑written reference. This reward is integrated into a Generalized Reward‑Based Preference Optimization (GRPO) framework, encouraging the model to produce more accurate, detailed, and linguistically coherent 3D descriptions.

Experiments span several standard 3D benchmarks, including ShapeNet, ModelNet, and ScanObjectNN, covering captioning, question answering, and object recognition. Two variants are evaluated: SAGE* (without preference optimization) and full SAGE (with it). SAGE* already matches or exceeds encoder‑based baselines despite having no pre‑trained encoder, and demonstrates remarkable robustness to point‑cloud density changes—performance degrades minimally when the number of input points is halved or doubled. Full SAGE further improves on complex reasoning tasks, achieving 4–6 % higher accuracy on multi‑step 3D QA compared to the strongest existing methods. Moreover, the tokenizer contains only ~10 M parameters, leading to roughly 30 % lower inference latency and memory consumption relative to encoder‑dependent pipelines.

The paper’s contributions are fourfold: (1) a novel tokenization scheme that treats 3D data as an extension of the LLM’s vocabulary, removing the need for any pre‑trained 3D encoder; (2) the integration of vector quantization to discretize geometric features while preserving spatial relationships; (3) a semantic‑alignment‑based reward for preference optimization, enabling effective RL training on open‑ended 3D tasks; and (4) extensive empirical validation showing superior performance, efficiency, and resolution robustness across multiple LLM backbones.

In summary, SAGE demonstrates that end‑to‑end learning of 3D representations directly within a language model is feasible and advantageous, opening new avenues for real‑time, scalable, and linguistically grounded 3D perception in robotics, AR/VR, and embodied AI applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment