RTFDNet: Fusion-Decoupling for Robust RGB-T Segmentation

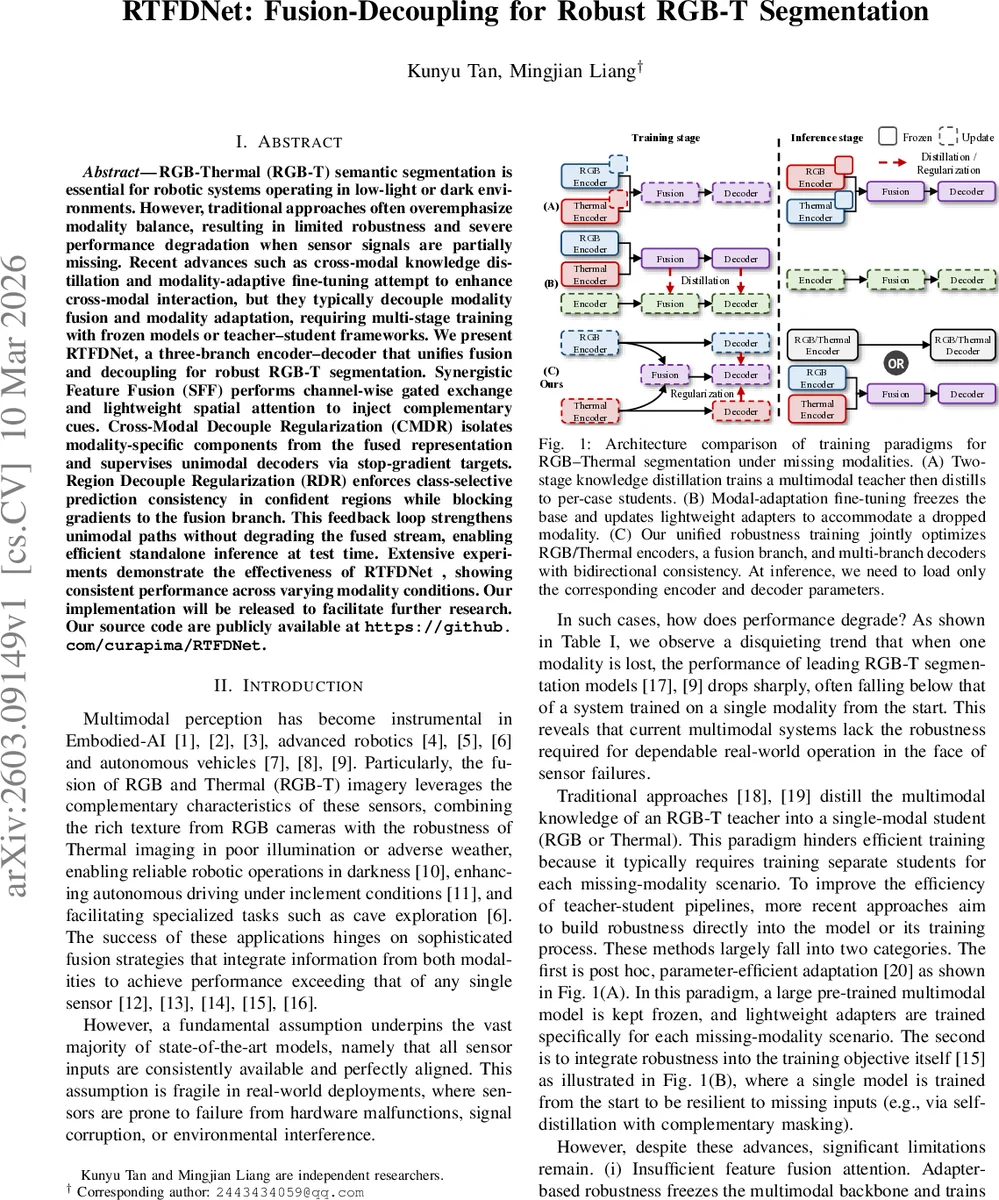

RGB-Thermal (RGB-T) semantic segmentation is essential for robotic systems operating in low-light or dark environments. However, traditional approaches often overemphasize modality balance, resulting in limited robustness and severe performance degradation when sensor signals are partially missing. Recent advances such as cross-modal knowledge distillation and modality-adaptive fine-tuning attempt to enhance cross-modal interaction, but they typically decouple modality fusion and modality adaptation, requiring multi-stage training with frozen models or teacher-student frameworks. We present RTFDNet, a three-branch encoder-decoder that unifies fusion and decoupling for robust RGB-T segmentation. Synergistic Feature Fusion (SFF) performs channel-wise gated exchange and lightweight spatial attention to inject complementary cues. Cross-Modal Decouple Regularization (CMDR) isolates modality-specific components from the fused representation and supervises unimodal decoders via stop-gradient targets. Region Decouple Regularization (RDR) enforces class-selective prediction consistency in confident regions while blocking gradients to the fusion branch. This feedback loop strengthens unimodal paths without degrading the fused stream, enabling efficient standalone inference at test time. Extensive experiments demonstrate the effectiveness of RTFDNet, showing consistent performance across varying modality conditions. Our implementation will be released to facilitate further research. Our source code are publicly available at https://github.com/curapima/RTFDNet.

💡 Research Summary

The paper addresses the critical problem of robustness in RGB‑Thermal (RGB‑T) semantic segmentation, which is essential for robotic and autonomous systems operating under low‑light or adverse weather conditions. Existing multimodal approaches typically assume that both RGB and thermal inputs are always available and perfectly aligned; when one modality is missing or corrupted, performance drops dramatically. Recent methods such as cross‑modal knowledge distillation or modality‑adaptive fine‑tuning try to improve cross‑modal interaction, but they separate the fusion and adaptation stages, requiring multi‑stage training, frozen backbones, or teacher‑student frameworks, and they provide little supervision to the fusion pathway itself.

RTFDNet proposes a unified “fusion‑decoupling” framework that simultaneously strengthens the multimodal fusion branch and equips each unimodal branch with the ability to operate independently at inference time. The architecture consists of three parallel encoder‑decoder streams: an RGB encoder‑decoder, a thermal encoder‑decoder, and a fused encoder‑decoder that receives features from both modalities. The core components are:

-

Synergistic Feature Fusion (SFF) – For each modality, global average and max pooling generate compact channel descriptors R (RGB) and T (Thermal). A dynamic gating mechanism amplifies cross‑modal flow on channels where the signs of R and T differ (indicating complementary semantics). The gated features are added to the original modality features, concatenated, and passed through a 1×1 convolution to produce the fused representation. This two‑stage process combines channel‑wise gating with a lightweight spatial attention, enabling rich cross‑modal interaction with modest computational overhead.

-

Cross‑Modal Decouple Regularization (CMDR) – The fused feature map F is compared with the channel descriptors R and T. Channels where F and R (or F and T) share the same sign are extracted via a binary sign‑consistency gate, yielding modality‑specific decoupled features f_d^rgb and f_d^thermal. These are treated as targets for the RGB and thermal decoders, respectively, and an ℓ2 loss forces each unimodal decoder to mimic its target. Crucially, the targets are detached from the computation graph (stop‑gradient), so gradients flow only from the fused branch to the unimodal branches, preventing conflicting updates and allowing the fused representation to remain optimal.

-

Region Decouple Regularization (RDR) – To refine predictions near object boundaries, the fused decoder’s softmax output is converted into one‑hot class masks M. The masked fused predictions (sg(p_fuse ⊙ M)) serve as pseudo‑ground‑truth for the RGB and thermal decoders, with an L1 loss enforcing pixel‑wise consistency only in high‑confidence regions. Again, stop‑gradient blocks gradient flow into the fused branch, ensuring that the unimodal streams learn from the fused predictions without altering the fused pathway.

The overall training objective combines standard cross‑entropy with weighted CMDR and RDR terms. During training, all three branches are jointly optimized, forming a feedback loop: the fused branch provides high‑quality supervisory signals, while the unimodal branches learn to recover modality‑specific cues and maintain performance when the other sensor is unavailable.

Experiments are conducted on three public RGB‑T segmentation benchmarks: MFNet, FMB, and PST900. The authors simulate various modality‑drop scenarios, including complete loss, random noise, and signal instability. Results show that state‑of‑the‑art multimodal models (e.g., RTFNet, CMXNet, StitchFusion) suffer mIoU drops of 15–30% when a modality is missing, whereas RTFDNet’s degradation is limited to 3–6%. When both modalities are present, RTFDNet still outperforms strong baselines (SegFormer‑b2 backbone) by 1–2% absolute mIoU. Ablation studies confirm that each component (SFF, CMDR, RDR) contributes positively, and the full model yields the best trade‑off between robustness and overall accuracy.

The paper also highlights practical advantages: at inference time, only the encoder‑decoder pair corresponding to the available modality needs to be loaded, reducing memory and compute requirements. Limitations include the relatively heavy fused branch during training and the current focus on only two modalities; extending the framework to RGB‑Depth, LiDAR, or more than two sensors is suggested as future work.

In summary, RTFDNet introduces a novel fusion‑decoupling paradigm that achieves robust RGB‑Thermal semantic segmentation under missing‑modality conditions, offering both strong overall performance and practical flexibility for real‑world robotic applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment