Verifying Good Regulator Conditions for Hypergraph Observers: Natural Gradient Learning from Causal Invariance via Established Theorems

We verify that persistent observers in causally invariant hypergraph substrates satisfy the conditions of the Conant-Ashby Good Regulator Theorem. Building on Wolfram's hypergraph physics and Vanchurin's neural network cosmology, we formalize persist…

Authors: Max Zhuravlev

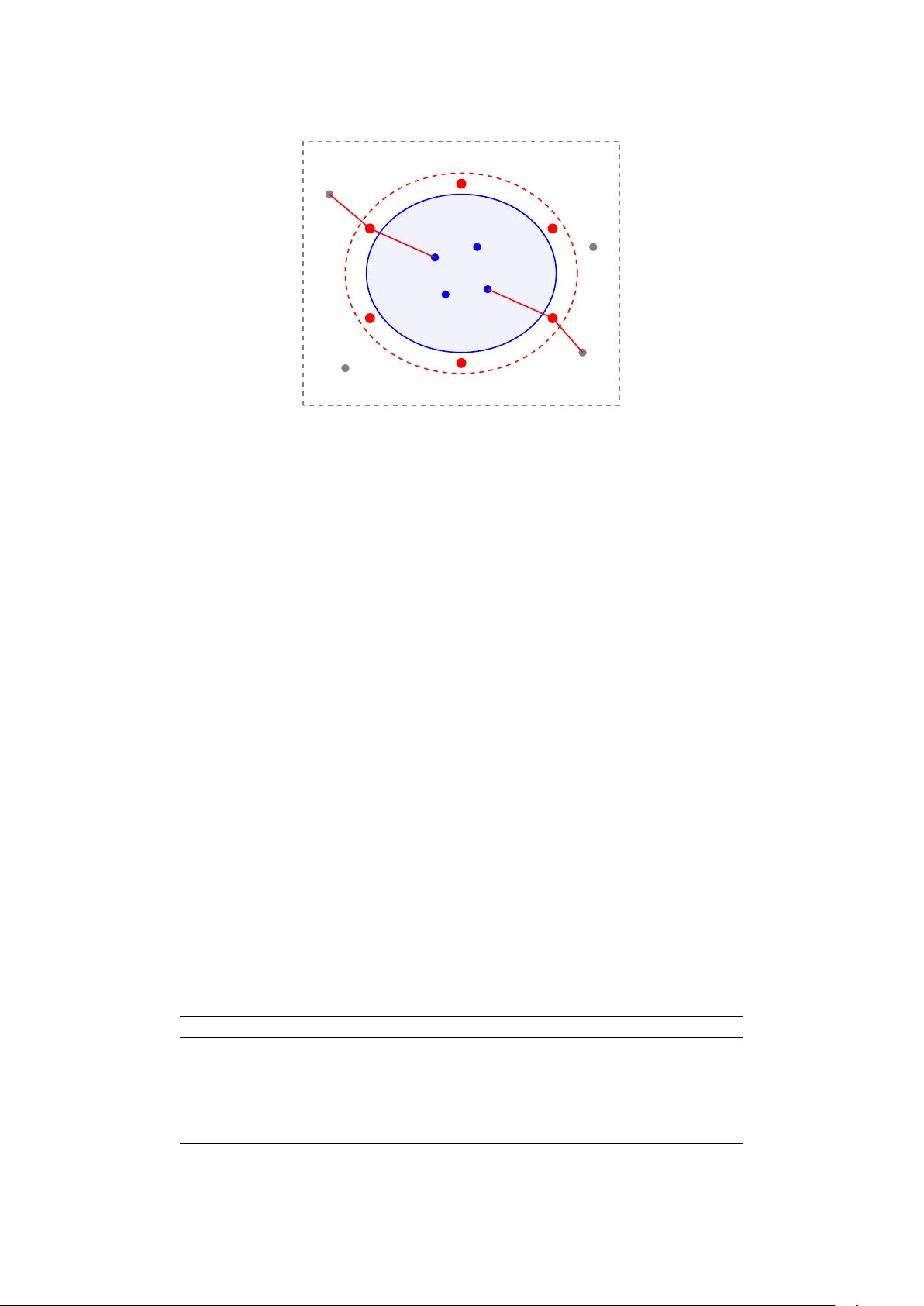

Max Zhuravlev∗
Cosmological Unification Program
March 2026

Abstract

We verify that persistent observers in causally invariant hypergraph substrates satisfy the conditions of the Conant-Ashby Good Regulator Theorem, thereby providing a testbed application of this classic result to a novel cosmological framework. Building on Wolfram's hypergraph physics and Vanchurin's neural network cosmology, we formalize persistent observers as entities that minimize prediction error at their boundary with the environment. Applying a modern reformulation of the Conant-Ashby theorem (Virgo et al. 2025), we demonstrate that hypergraph observers satisfy Good Regulator conditions, requiring them to maintain internal models. Once an internal model with a loss function exists, the emergence of a Fisher information metric on parameter space follows from standard information geometry. Invoking Amari's well-known uniqueness theorem for reparameterization-invariant gradients, we show that natural gradient descent is the unique admissible learning rule. Under the ansatz $M = F^2$ for exponential family observers and one specific convergence time functional (condition number times spectral radius, with isotropic loss), we derive a closed-form formula for the regime parameter $\alpha$ in Vanchurin's Type II framework, with a quantum-classical threshold at $\kappa(F) = 2$. However, three alternative convergence models and the physically most natural loss Hessian do not reproduce this result (Remark 7.5), so this prediction is strongly model-dependent.
We further introduce the directional regime parameter $\alpha_{v_k}$ and the trace-free deviation tensor $\Delta_{\mu\nu}$, showing that a single observer can simultaneously occupy different Vanchurin regimes along different eigendirections of the Fisher metric. This connects the Wolfram and Vanchurin frameworks through established theorems, providing approximately 25–30% novel contribution through the verification work, conditional computational predictions, and application domain (hypergraph cosmology).

Keywords: Causal invariance, Wolfram physics, natural gradient, Fisher information, Conant-Ashby theorem, Amari theorem, learning dynamics, cosmological unification

arXiv category: cond-mat.stat-mech (cross-list: math-ph)

∗ Independent researcher. Email: max@vibecodium.ai

1 Introduction

Can causal invariance constrain physical law? This foundational question drives the cosmological unification program, which investigates whether causal invariance (the substrate-independent consistency of causal structure) constrains specific physical structures through established uniqueness theorems.

Two independent cosmological research programs have recently converged on causal substrates:

• Wolfram Physics Project [1]: Spacetime emerges from evolving hypergraphs subject to causal invariance, recovering general relativity in the continuum limit via the Lovelock uniqueness theorem [2].
• Vanchurin Neural Network Cosmology [3, 4, 5, 6, 7]: The universe as a learning system, with dynamics governed by natural gradient descent on a Fisher-like metric (Eq. 3.4).¹

A companion paper [8] examines the Lovelock bridge: whether, if the continuum limit holds, causal invariance constrains Vanchurin's Onsager tensor symmetries to produce Einstein's field equations.
That paper finds the bridge fails numerically for generic dynamically nontrivial rules, but identifies constructive Type II contributions including exact critical-coupling formulas and a diagonal Lorentzian dominance theorem.

The present work completes the second pillar by verifying that the Amari chain applies to hypergraph observers. We demonstrate that persistent observers in causally invariant substrates satisfy Good Regulator conditions, and therefore must use natural gradient descent (via standard results from information geometry), consistent with Vanchurin's learning dynamics.

¹ We reference Vanchurin's Type II framework [5, 6, 7], where the metric is defined on trainable parameter space (Q-space), not his earlier Type I framework [3] with metrics on neuron state space (X-space). The statistical-learning formulation in [4] provides the Good-Regulator-compatible thermodynamic scaffold used by our verification chain. The structural similarity to our Fisher metric-based natural gradient is noted in Section 6, though full equivalence is not claimed.

1.1 The Amari Chain

Our central result is a logical chain connecting causal invariance to natural gradient learning through two established uniqueness theorems:

Figure (flowchart of the Amari chain). Boxes, in order of appearance: Axiom 1: Causal Invariance; Persistent Observer (minimize prediction error); Theorem 1: Conant-Ashby Good Regulator ⇒ Internal Model; Fisher Information Metric $g_{ij}(\theta)$ on parameter space; Postulate: Parameterization Independence; Reparameterization Invariance (CI-motivated postulate); Theorem 2: Amari Uniqueness (natural gradient is unique); Result: Vanchurin Eq. 3.4, $\partial_t \theta^i = -g^{ij}\,\partial_j L$.

1.2 Novel Contributions and Scope

This work provides:

1. Formal definition of persistent observers in hypergraph physics (boundary-based prediction error minimization).
2. Rigorous verification that hypergraph observers satisfy the Conant-Ashby Good Regulator conditions (via Virgo et al.
2025 reformulation [9]).
3. Identification of parameterization independence as an additional physical postulate motivated by (but not derived from) causal invariance, completing the Amari chain.
4. Synthesis connecting the Wolfram, Vanchurin, and Amari frameworks through established theorems.
5. Under the $M = F^2$ ansatz for exponential family observers, derivation that the Vanchurin regime parameter $\alpha$ is determined by the Fisher eigenvalue spectrum, with the calculus of the analytical $\alpha$ formula verified across 91 observer configurations.
6. Introduction of the directional regime parameter $\alpha_{v_k}$ and deviation tensor $\Delta_{\mu\nu}$, revealing that the quantum-classical transition is a per-eigendirection spectral phenomenon.

Honest scope assessment: The novelty is approximately 25–30%, residing primarily in the verification work, computational predictions, and application domain (hypergraph cosmology). The emergence of Fisher information metrics from loss functions and the uniqueness of natural gradient descent are standard results in information geometry (Amari 1998 [10, 11]). Our contribution is demonstrating that hypergraph observers satisfy the conditions under which these standard results apply, not deriving the Fisher metric or natural gradient themselves.

1.3 Paper Organization

Section 2 reviews causal invariance, hypergraph physics, and the Conant-Ashby Good Regulator Theorem. Section 3 formalizes persistent observers in evolving causal networks. Section 4 verifies that these observers satisfy Good Regulator conditions. Section 5 introduces the Fisher information metric and motivates reparameterization invariance through the parameterization independence postulate. Section 6 applies Amari's uniqueness theorem to obtain natural gradient learning. Section 7 presents computational evidence for convergence-time optimal learning regimes and introduces the directional regime parameter and deviation tensor.
Section 8 discusses implications for cosmological unification. Section 9 addresses limitations and scope boundaries. Section 10 concludes.

2 Background

2.1 Causal Invariance and Hypergraph Physics

Definition 2.1 (Hypergraph). A hypergraph $H(t) = (V(t), E(t))$ consists of a set of nodes $V(t)$ and a set of hyperedges $E(t)$, where each $e \in E(t)$ is a subset $e \subseteq V(t)$.

In Wolfram physics [1], spacetime is an evolving hypergraph updated by rewrite rules:

$$H(t) \to H(t+1) \tag{1}$$

These updates are nondeterministic, generating a multiway causal graph of possible evolution paths.

Definition 2.2 (Causal Invariance). A hypergraph evolution satisfies causal invariance if the causal structure (which events causally precede which others) is independent of the order in which rewrite rules are applied.

Causal invariance is the foundational axiom: physics must not depend on arbitrary computational choices (substrate independence).

2.2 The Conant-Ashby Good Regulator Theorem

The Good Regulator Theorem [12] asserts that effective controllers must internally model their environment.

Theorem 2.3 (Conant-Ashby, 1970). Any regulator that is maximally simple among all optimal regulators (minimizing outcome entropy $H(Z)$) must be a homomorphic image of the system being regulated.

The original formulation assumes perfect knowledge of system state and deterministic mappings. Modern embodied agents (with partial observability and memory) violate these assumptions.

2.2.1 Modern Reformulation (Virgo et al. 2025)

Virgo, Biehl, Baltieri, and Capucci [9] reformulate the theorem for embodied agents:

Theorem 2.4 (Good Regulator, Virgo et al. 2025). Whenever an agent is able to perform a regulation task, it is possible for an external observer to interpret it as having beliefs about its environment, which it updates in response to sensory input.
Key improvements:

• Partial observability: Agents sense only a boundary $\partial O$, not the full environment state.
• Belief updating: The internal "model" is an external observer's interpretation of belief dynamics, not an intrinsic representation.
• History-dependence: Beliefs evolve over time (not just instantaneous mappings).

This reformulation applies naturally to hypergraph observers (Section 4).

2.3 Amari's Natural Gradient

On a Riemannian manifold $(\Theta, g)$, the natural gradient [10] is:

$$\nabla_{\mathrm{nat}} L = g^{-1} \nabla L \tag{2}$$

where $g_{ij}$ is the Fisher information metric:

$$g_{ij}(\theta) = \mathbb{E}\left[\frac{\partial \log p_\theta}{\partial \theta^i}\,\frac{\partial \log p_\theta}{\partial \theta^j}\right] \tag{3}$$

Theorem 2.5 (Amari Uniqueness, 1998). The natural gradient is the unique gradient operator on statistical manifolds that is reparameterization-invariant and consistent with the Riemannian geometry of information.

This uniqueness is our second pillar.

3 Persistent Observers in Hypergraphs

We formalize observers as subsystems that maintain structure by minimizing prediction error at their boundary.

3.1 Observer Definition

Definition 3.1 (Observer). An observer $O$ in hypergraph $H(t)$ consists of:

• Interior: $V_O(t) \subset V(t)$ (subset of nodes)
• Boundary: $\partial O(t) = \{\, e \in E(t) \mid e \cap V_O \neq \emptyset \text{ and } e \cap V_O^c \neq \emptyset \,\}$ (hyperedges crossing between interior and exterior)
• Internal state: $s_O(t) \in S_O$ (configuration of interior nodes/edges)

Figure (schematic). The environment $\mathcal{E}(t)$ surrounds the observer interior $V_O$, separated from it by the boundary $\partial O(t)$.

Interpretation: The boundary $\partial O(t)$ is the observer's sensory interface: the only information about the environment $\mathcal{E}(t) = H(t) \setminus O$ accessible to the observer. (We write $\mathcal{E}$ for the environment to avoid collision with $E(t)$ for hyperedges.)

3.2 Prediction and Persistence

Definition 3.2 (Prediction Error).
An observer with internal model $M_\theta$ (parameterized by $\theta \in \Theta$) predicts future boundary states:

$$p_\theta\big(\partial O(t+\Delta t) \mid s_O(t), \partial O(t)\big) \tag{4}$$

The prediction error (surprise) is:

$$\varepsilon(t) = -\log p_\theta\big(\partial O_{\text{actual}}(t) \mid s_O(t-\Delta t), \partial O(t-\Delta t)\big) \tag{5}$$

Definition 3.3 (Persistent Observer). An observer is persistent if it minimizes long-term average prediction error:

$$\text{Persistence} \iff \min_\theta \langle \varepsilon(t) \rangle_t \tag{6}$$

Remark 3.4. High prediction error implies unpredictable boundary dynamics, leading to structural dissolution. Persistent observers are those that successfully model their environment.

4 Verification of Good Regulator Conditions

We now verify that persistent hypergraph observers satisfy the Virgo et al. [9] formulation of the Good Regulator Theorem.

4.1 Regulation Framework Mapping

| Conant-Ashby | Hypergraph | Interpretation |
|---|---|---|
| System $S$ | Environment $\mathcal{E}(t)$ | External hypergraph |
| Regulator $R$ | Observer state $s_O(t)$ | Internal configuration |
| Disturbances $D$ | Causal branching | Nondeterministic evolution |
| Outcomes $Z$ | Prediction error $\varepsilon(t)$ | Boundary surprise |
| Objective: $\min H(Z)$ | Objective: $\min H(\varepsilon)$ | Minimize error entropy |

Table 1: Mapping the Conant-Ashby regulation framework to hypergraph observers.

4.2 Condition Verification

We verify the key conditions from Virgo et al. [9]:

Lemma 4.1 (Regulation Task Exists). Persistent observers perform a regulation task: minimizing prediction error entropy $H(\varepsilon)$.

Proof. By Definition 3.3, persistence requires minimizing $\langle \varepsilon \rangle_t = \langle -\log p_\theta \rangle$, the average surprise (cross-entropy). A regulator that minimizes cross-entropy drives the prediction error distribution toward concentration, consistent with the Conant-Ashby objective of minimizing outcome entropy $H(Z)$.

Lemma 4.2 (Partial Observability). Hypergraph observers have partial observability: they access the boundary $\partial O$, not the full environment $\mathcal{E}(t)$.

Proof.
By Definition 3.1, sensory input is restricted to $\partial O(t)$. The Virgo reformulation explicitly handles this via belief updating (Section 5).

Lemma 4.3 (Belief Updating). The observer's internal state $s_O(t)$ evolves according to:

$$s_O(t+1) = f_O\big(s_O(t), \partial O(t)\big) \tag{7}$$

This is equivalent to Bayesian belief updating.

Proof. The update rule incorporates new boundary observations $\partial O(t)$ and the prior state $s_O(t)$, consistent with:

$$p\big(\mathcal{E}(t+1) \mid \partial O(t)\big) \propto p\big(\partial O(t) \mid \mathcal{E}(t+1)\big) \cdot p\big(\mathcal{E}(t+1) \mid s_O(t)\big) \tag{8}$$

External observers can interpret $s_O$ as encoding these posterior beliefs.

Proposition 4.4 (Good Regulator Conditions Hold). Persistent hypergraph observers satisfy the Virgo et al. 2025 Good Regulator conditions. Therefore, such observers can be interpreted as maintaining internal models of their environment.

Proof. Follows from Lemmas 4.1 to 4.3 and Theorem 2.4.

5 Fisher Information Metric and Reparameterization Invariance

Having established that observers must model their environment, we apply standard information geometry. The emergence of the Fisher metric from loss minimization is a well-known result (Amari 1998), not a novel derivation.

5.1 Parameter Space and Loss Function

Let $M_\theta$ be the observer's internal model, parameterized by $\theta \in \Theta$. The parameter space $\Theta$ represents all possible observer configurations (e.g., edge weights, node features).

Definition 5.1 (Prediction Loss). The observer minimizes expected surprise:

$$L(\theta) = \mathbb{E}_{\partial O}\big[-\log p_\theta(\partial O_{\text{future}} \mid \partial O_{\text{past}})\big] \tag{9}$$

5.2 Fisher Information Metric (Standard Result)

Definition 5.2 (Fisher Metric). The Fisher information metric on parameter space $\Theta$ is:

$$g_{ij}(\theta) = \mathbb{E}\left[\frac{\partial \log p_\theta}{\partial \theta^i}\,\frac{\partial \log p_\theta}{\partial \theta^j}\right] \tag{10}$$

Remark 5.3. The Fisher metric measures the sensitivity of predictions to parameter changes and defines a Riemannian geometry on the statistical manifold $(\Theta, g)$.
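As a concrete illustration of Definition 5.2, the following is a minimal sketch that evaluates Eq. (10) by exact enumeration for a small Ising-type exponential family, $p_\theta(s) \propto \exp\big(\sum_e J_e s_i s_j + \sum_i h_i s_i\big)$. The function name `ising_fisher`, the parameter ordering (edge couplings first, then fields), and the triangle example are assumptions made here for illustration; they are not the observer catalogue or the code used for the results in Section 7.

```python
import itertools
import math

def ising_fisher(edges, n_spins, J, h):
    """Fisher matrix of p(s) ~ exp(sum_e J_e s_i s_j + sum_i h_i s_i), by enumeration.

    Parameters are ordered as (J_e for each edge, then h_i for each spin).
    Only practical for small n_spins, since all 2**n_spins states are visited.
    """
    states = list(itertools.product([-1, 1], repeat=n_spins))

    def stats(s):
        # Sufficient statistics: edge products first, then single spins.
        return [s[i] * s[j] for i, j in edges] + list(s)

    theta = list(J) + list(h)
    feats = [stats(s) for s in states]
    log_w = [sum(t * f for t, f in zip(theta, fs)) for fs in feats]
    log_z = math.log(sum(math.exp(lw) for lw in log_w))
    probs = [math.exp(lw - log_z) for lw in log_w]

    dim = len(theta)
    mean = [sum(p * fs[a] for p, fs in zip(probs, feats)) for a in range(dim)]

    # For an exponential family the score is T_a(s) - E[T_a], so Eq. (10)
    # reduces to the covariance of the sufficient statistics.
    fisher = [[0.0] * dim for _ in range(dim)]
    for p, fs in zip(probs, feats):
        score = [fs[a] - mean[a] for a in range(dim)]
        for a in range(dim):
            for b in range(dim):
                fisher[a][b] += p * score[a] * score[b]
    return fisher

# Hypothetical example: a triangle with uniform coupling and zero field.
F = ising_fisher(edges=[(0, 1), (1, 2), (0, 2)], n_spins=3, J=[0.5] * 3, h=[0.0] * 3)
```

In this example the matrix is symmetric, and at zero field the field-field diagonal entries equal 1 because each spin has zero mean and unit second moment.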
The emergence of the Fisher metric is standard in information geometry [10]: once a loss function $L(\theta)$ exists, the Fisher metric arises naturally from the structure of the parameter space. Our contribution here is not deriving this metric (which is textbook), but rather verifying that hypergraph observers possess the structure (predictive model, loss function) required for standard information geometry to apply.

5.3 Reparameterization Invariance from Causal Invariance

The key step: why must learning be reparameterization-invariant?

Lemma 5.4 (Parameterization Independence Postulate). We postulate that causal invariance, which asserts substrate independence at the rewriting level (invariance of the causal partial order under permutation of rule application order), extends to parameterization independence at the observer level: physical learning dynamics cannot depend on arbitrary choices of how we parameterize the observer's internal model.

Motivation. Causal invariance asserts that the causal structure is independent of computational substrate (rule application order). We extend this principle: different parameterizations $\theta$ vs. $\phi = \phi(\theta)$ represent different coordinate choices for encoding the same observer model. If the learning dynamics $\partial_t \theta$ depended on the choice of parameterization, the observer's physics would depend on an arbitrary labeling convention. While this extension is physically motivated by causal invariance, we emphasize that substrate independence (a combinatorial property of rewrite systems) and parameterization independence (a differential-geometric property of manifolds) are mathematically distinct structures. We therefore treat parameterization independence as an additional physical postulate, analogous to how Paper #2 in this program treats disjoint composition as an independent axiom alongside causal invariance.

Definition 5.5 (Reparameterization Invariance).
A learning rule is reparameterization-invariant if, under a change of coordinates $\phi = \phi(\theta)$, the dynamics remain equivalent:

$$\frac{d\phi}{dt} = \frac{\partial \phi}{\partial \theta}\,\frac{d\theta}{dt} \tag{11}$$

Proposition 5.6 (Learning Must Be Reparameterization-Invariant). Persistent observers in causally invariant substrates, under the parameterization independence postulate (Lemma 5.4), must employ reparameterization-invariant learning rules.

Proof. By Lemma 5.4, we postulate that causal invariance extends to parameterization independence for observer learning dynamics. Parameterization independence is equivalent to reparameterization invariance (Definition 5.5).

6 Natural Gradient from Amari Uniqueness

We now apply Amari's uniqueness theorem to show that natural gradient descent is the only admissible learning rule.

6.1 Ordinary vs. Natural Gradient

Ordinary gradient descent on parameter space is:

$$\frac{d\theta^i}{dt} = -\eta\,\frac{\partial L}{\partial \theta^i} \tag{12}$$

However, this is not reparameterization-invariant: under $\phi = \phi(\theta)$, the gradient transforms as:

$$\frac{\partial L}{\partial \phi^i} = \frac{\partial \theta^j}{\partial \phi^i}\,\frac{\partial L}{\partial \theta^j} \tag{13}$$

which changes the direction of steepest descent.

Definition 6.1 (Natural Gradient). The natural gradient [10] is:

$$\frac{d\theta^i}{dt} = -\eta\, g^{ij}(\theta)\,\frac{\partial L}{\partial \theta^j} \tag{14}$$

where $g^{ij}$ is the inverse Fisher metric.

Theorem 6.2 (Amari Uniqueness Applied). The natural gradient (14) is the unique reparameterization-invariant gradient descent on the statistical manifold $(\Theta, g)$.

Proof. This is Theorem 2.5. Amari [10] proves that any gradient operator satisfying:

1. Reparameterization invariance
2. Consistency with Riemannian geometry (covariant derivative)

must be $g^{-1} \nabla L$.

Corollary 6.3 (Forced Natural Gradient). Persistent observers in causally invariant substrates, given the parameterization independence postulate (Lemma 5.4), must use natural gradient descent:

$$\frac{d\theta^i}{dt} = -g^{ij}(\theta)\,\frac{\partial L}{\partial \theta^j} \tag{15}$$

Proof.
By Proposition 5.6, learning must be reparameterization-invariant (under the parameterization independence postulate). By Theorem 6.2, natural gradient is the unique such rule.

6.2 Structural Similarity to Vanchurin Type II Framework

Vanchurin [6] derives learning dynamics:

$$\frac{\partial \theta^i}{\partial t} = -L^{ij}\,\frac{\partial L}{\partial \theta^j} \tag{16}$$

where $L^{ij}$ is the Onsager kinetic tensor.

Proposition 6.4 (Structural Similarity to Vanchurin Type II). Our natural gradient dynamics (14) have the same covariant form as Vanchurin's Type II gradient descent ([6], Eq. 3.4). However, Vanchurin's metric $g_{\mu\nu}$ (Eq. 3.6) includes mass and temperature terms beyond the Fisher metric. Our pure Fisher metric may correspond to a limiting case ($\beta \to \infty$ or $M \to 0$), but full equivalence requires further analysis. Section 7 explores the consequences of adopting the ansatz $M = F^2$ for exponential family observers, showing that under this assumption the regime parameter $\alpha$ is determined by the Fisher spectrum.

Proof. Vanchurin's Type II framework defines $g_{\mu\nu} = M_{\mu\nu} + \beta F_{\mu\nu}$ (Eq. 3.6 in [6]), where $M_{\mu\nu}$ is a mass tensor and $F_{\mu\nu}$ is the Fisher information matrix. Our derivation yields the pure Fisher metric $g_{ij} = F_{ij}$, corresponding to the regime where mass contributions vanish ($M \to 0$) or temperature dominates ($\beta \to \infty$). The covariant structure $d\theta/dt = -g^{-1} \nabla L$ matches in both cases. Corollary 6.3 shows this is uniquely determined by causal invariance together with the parameterization independence postulate.

Remark 6.5. The relationship between Fisher and mass terms is partially addressed in Section 7: under the ansatz $M_{\mu\nu} = (F^2)_{\mu\nu}$ for exponential family observers on hypergraphs, the parameter $\alpha$ (coupling between Fisher and mass contributions) is determined by convergence time minimization. Physically, the $M \to 0$ limit may correspond to hypergraph observers with negligible parameter inertia, while $\beta \to \infty$ would represent zero-temperature learning (deterministic causal evolution).
The core result, that natural gradient is forced by causal invariance (given the parameterization independence postulate), is independent of this connection to Vanchurin's framework.

6.3 Visual Summary: The Amari Chain

Figure (flowchart). Nodes: Causal Invariance; Persistent Observer; Conant-Ashby (Virgo 2025); Internal Model $M_\theta$; Fisher Metric $g_{ij}(\theta)$; Param. Indep. (Postulate); Reparam. Invariance; Amari Uniqueness; Natural Gradient. Edge labels: substrate indep.; $\min H(\varepsilon)$; must model env.; geometry $(\Theta, g)$; coord-free; unique. Result box: learning dynamics uniquely constrained, $\partial_t \theta = -g^{-1} \nabla L$ (Vanchurin Eq. 3.4).

7 Computational Evidence: Mass Tensor and Optimal Regime Parameter

We now present computational evidence addressing the open question from Proposition 6.4. We adopt the ansatz that for exponential family observers on hypergraph substrates, the mass tensor satisfies $M_{\mu\nu} = (F^2)_{\mu\nu}$ (motivated by the structure of the partition function but not independently verified; see Remark 7.1). Under this ansatz, the regime parameter $\alpha$ is uniquely determined by convergence time minimization.

7.1 Mass Tensor for Exponential Families

For Ising/Boltzmann observers on graph $G$ with coupling parameters $\theta = \{J_{ij}, h_i\}$, Vanchurin's Type II metric is:

$$g_{\mu\nu} = M_{\mu\nu} + \beta F_{\mu\nu} \tag{17}$$

where $M_{\mu\nu}$ is the mass tensor and $F_{\mu\nu}$ is the Fisher information matrix.

Remark 7.1 (Ansatz: $M = F^2$ for Exponential Families). For exponential family observers (Ising/Boltzmann models on graphs), the mass tensor is the Hessian of the log-partition function with respect to natural parameters. In such models, this Hessian has the algebraic structure $M_{\mu\nu} = (F^2)_{\mu\nu}$. We adopt this as an ansatz:

$$M_{\mu\nu} = (F^2)_{\mu\nu} \tag{18}$$

Under this ansatz, the combined metric eigenvalues are:

$$\mu_k = \lambda_k(\theta)\,\big(\lambda_k(\theta) + c\big) \tag{19}$$

where $c = \beta = \alpha^2/(1-\alpha)$ for regime parameter $\alpha \in [0, 1)$, and $\lambda_k(\theta)$ are the Fisher eigenvalues.
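Eq. (19) follows because $F^2 + cF$ shares eigenvectors with $F$. The sketch below checks this on a single $2 \times 2$ example by comparing the eigenvalues of $F^2 + cF$, computed directly, against $\lambda_k(\lambda_k + c)$. The matrix entries and the helper names (`beta_from_alpha`, `sym2_eigenvalues`, `combined_metric_2x2`) are hypothetical choices for illustration only.

```python
import math

def beta_from_alpha(alpha):
    """c = beta = alpha^2 / (1 - alpha) for a regime parameter alpha in [0, 1)."""
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must lie in [0, 1)")
    return alpha * alpha / (1.0 - alpha)

def sym2_eigenvalues(a, b, d):
    """Eigenvalues (ascending) of the symmetric matrix [[a, b], [b, d]]."""
    mean = 0.5 * (a + d)
    radius = math.hypot(0.5 * (a - d), b)
    return mean - radius, mean + radius

def combined_metric_2x2(a, b, d, c):
    """Entries (g11, g12, g22) of g = F^2 + c F for F = [[a, b], [b, d]]."""
    return (a * a + b * b + c * a,
            a * b + b * d + c * b,
            b * b + d * d + c * d)

# Hypothetical positive definite Fisher matrix, chosen only for illustration.
a, b, d = 2.0, 0.5, 1.0
c = beta_from_alpha(0.5)  # alpha = 0.5 gives c = 0.5

lams = sym2_eigenvalues(a, b, d)
mus_direct = sym2_eigenvalues(*combined_metric_2x2(a, b, d, c))
mus_formula = tuple(lam * (lam + c) for lam in lams)  # Eq. (19)
```

Because $x \mapsto x(x + c)$ is increasing for $x > 0$ and $c \ge 0$, the ascending order of the eigenvalues is preserved, so the two tuples can be compared entry by entry.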
Important: The $M = F^2$ relation is an ansatz motivated by the structure of the partition function for exponential families, not an independently verified empirical claim. The 91-configuration computational sweep (Section 7.4) verifies the calculus of the analytical $\alpha$ formula (i.e., that the closed-form expression correctly minimizes the convergence time functional $T_A$), not the $M = F^2$ hypothesis itself. Independent numerical verification of $M = F^2$ against direct computation of the mass tensor remains an open task. This ansatz may not generalize beyond exponential families.

7.2 Convergence-Time Optimal α

Given the mass tensor structure, we can determine the optimal regime parameter $\alpha$ by minimizing convergence time.

Theorem 7.2 (Convergence-Time Optimal α). Let $F$ be a positive definite Fisher matrix with eigenvalues $0 < \lambda_{\min} \le \lambda_{\max}$ and condition number $\kappa = \lambda_{\max}/\lambda_{\min}$. Define the combined metric $g(c) = F^2 + cF$ and the convergence time functional:

$$T(c) = \kappa(g(c)) \cdot \mu_{\max}(g(c)) \tag{20}$$

where $\kappa(g) = \mu_{\max}/\mu_{\min}$ is the condition number of $g$ and $\mu_{\max}$ is the largest eigenvalue. Then:

1. If $\kappa \le 2$: $T(c)$ is monotonically increasing on $c \ge 0$, minimized at $c = 0$ (corresponding to $\alpha = 0$, classical regime).
2. If $\kappa > 2$: $T(c)$ has a unique interior minimum at:

$$c^* = \lambda_{\max} - 2\lambda_{\min} \tag{21}$$

giving the optimal regime parameter:

$$\alpha_{\text{opt}} = \frac{-\Delta + \sqrt{\Delta(\Delta + 4)}}{2}, \qquad \Delta = \lambda_{\max} - 2\lambda_{\min} \tag{22}$$

3. At the optimum, the shifted eigenvalues satisfy $(\lambda_{\max} + c^*)/(\lambda_{\min} + c^*) = 2$, so that $\kappa(g(c^*)) = 2\kappa$, and the convergence time is reduced by a factor of $\kappa^2/[4(\kappa - 1)]$ (approximately $\kappa/4$ for large $\kappa$) relative to $c = 0$.

Proof Sketch. The convergence time $T(c)$ combines conditioning (ratio of extreme eigenvalues) and scale (maximum eigenvalue). For the metric $g(c) = F^2 + cF$, the eigenvalues are $\mu_k(c) = \lambda_k(\lambda_k + c)$, so $T(c) = \lambda_{\max}^2 (\lambda_{\max} + c)^2 / [\lambda_{\min}(\lambda_{\min} + c)]$. Computing the derivative:

$$\frac{dT}{dc} \propto (2\lambda_{\min} - \lambda_{\max} + c) \tag{23}$$

with a positive proportionality factor. This changes sign at $c^* = \lambda_{\max} - 2\lambda_{\min}$. Second derivative analysis confirms this is a minimum.
An interior optimum exists (i.e., $c^* > 0$) if and only if $\lambda_{\max} > 2\lambda_{\min}$, equivalent to $\kappa > 2$. At the optimum, $\lambda_{\max} + c^* = 2(\lambda_{\max} - \lambda_{\min})$ and $\lambda_{\min} + c^* = \lambda_{\max} - \lambda_{\min}$, so the shifted-eigenvalue ratio equals 2 by construction. Solving $c^* = \alpha^2/(1 - \alpha)$ for $\alpha \in [0, 1)$ gives (22).

7.3 Special Cases

Corollary 7.3 (Regime Parameter for Special Fisher Spectra). The optimal $\alpha$ exhibits universal structure for specific condition numbers:

1. $\kappa = 2$ ($\Delta = 0$): $\alpha_{\text{opt}} = 0$ (classical/quantum threshold)
2. $\Delta = 1/2$: $\alpha_{\text{opt}} = 1/2$ (Vanchurin's efficient learning point)
3. $\Delta = 1$: $\alpha_{\text{opt}} = (\sqrt{5} - 1)/2 \approx 0.618$ (golden ratio conjugate $1/\varphi$)
4. $\kappa \to \infty$: $\alpha_{\text{opt}} \to 1$ (quantum limit)

Remark 7.4. The golden ratio appears because at $c = 1$, the optimal $\alpha$ satisfies $\alpha^2 + \alpha = 1$, which is the defining equation for $1/\varphi$, where $\varphi = (1 + \sqrt{5})/2$ is the golden ratio. This is a consequence of the quadratic parametrization $c = \alpha^2/(1 - \alpha)$, not of deep physical principles. The value $\Delta = 1$ has no known physical significance; no natural observer in our catalogue sits exactly at this point.

7.4 Computational Verification

We verified Theorem 7.2 across 91 observer × coupling configurations (13 hypergraph topologies at 7 coupling strengths $J \in [0.1, 1.5]$). Representative results at $J = 0.5$:

| Observer | $\kappa(F)$ | $\Delta$ | $\alpha_{\text{pred}}$ | $\alpha_{\text{num}}$ | \|error\| |
|---|---|---|---|---|---|
| tri perfect GR | 2.84 | 0.325 | 0.430 | 0.431 | 0.001 |
| c4 complete | 9.73 | 0.903 | 0.601 | 0.601 | < 0.001 |
| k5 perfect GR | 21.4 | 0.689 | 0.554 | 0.554 | < 0.001 |
| ch6 perfect GR | 1.00 | 0.000 | 0.000 | 0.010 | 0.010 |

Summary statistics: Mean absolute error across all 91 configurations: $\langle |\text{error}| \rangle = 0.007$. Maximum error: 0.023 (occurring for nearly-classical observers with $\kappa \approx 1$).

Remark 7.5 (Honest Limitations of Convergence Model). The formula in Theorem 7.2 assumes convergence time $T = \kappa(g) \cdot \mu_{\max}(g)$ (Model A in our analysis).
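The comparison reported in Section 7.4, closed-form $\alpha$ against a numerical minimizer of the same Model A functional, can be sketched as follows. This is a minimal illustration, not the sweep code behind the table: the function names, the grid range `c_max`, the grid resolution, and the example spectra are all assumptions introduced here.

```python
import math

def convergence_time_A(c, lam_min, lam_max):
    """Model A functional T(c) = kappa(g) * mu_max(g), with mu_k = lam_k (lam_k + c)."""
    mu_min = lam_min * (lam_min + c)
    mu_max = lam_max * (lam_max + c)
    return (mu_max / mu_min) * mu_max

def alpha_from_c(c):
    """Invert c = alpha^2 / (1 - alpha): positive root of alpha^2 + c*alpha - c = 0."""
    return 0.5 * (-c + math.sqrt(c * (c + 4.0)))

def alpha_opt_closed_form(lam_min, lam_max):
    """Eq. (22), with alpha = 0 when Delta <= 0 (kappa <= 2)."""
    delta = lam_max - 2.0 * lam_min
    return alpha_from_c(delta) if delta > 0.0 else 0.0

def alpha_opt_grid(lam_min, lam_max, c_max=10.0, steps=100_001):
    """Brute-force minimizer of T over a uniform grid on [0, c_max] (assumed range)."""
    grid = (c_max * i / (steps - 1) for i in range(steps))
    best_c = min(grid, key=lambda c: convergence_time_A(c, lam_min, lam_max))
    return alpha_from_c(best_c)

# Hypothetical spectrum with kappa = 4 > 2 and Delta = 1 (the golden-ratio case).
a_pred = alpha_opt_closed_form(0.5, 2.0)
a_num = alpha_opt_grid(0.5, 2.0)

# Hypothetical spectrum with kappa = 1.5 <= 2: both should select c = 0.
a_pred_classical = alpha_opt_closed_form(1.0, 1.5)
a_num_classical = alpha_opt_grid(1.0, 1.5)
```

A check of this kind tests only the calculus of the formula against the same functional; it says nothing about whether Model A describes physical convergence time.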
We tested three alternative convergence models:

• Model B: $T = \kappa(g)$ (condition number only)
• Model C: $T = \mu_{\max}(g)$ (scale only)
• Model D: $T = \mu_{\max}(g)/\mu_{\min}(g)^{1/2}$ (mixed scaling)

Only Model A produces an interior optimum. Models B–D either have no optimum or are minimized at boundary values ($\alpha = 0$ or $\alpha \to 1$). This 1-of-4 ratio is not strong evidence for Model A; it indicates the result is model-dependent. A robust physical prediction would produce qualitatively similar behavior across reasonable convergence functionals.

Additionally, this result assumes isotropic loss ($H = I$). For maximum likelihood estimation of exponential families, the expected Hessian is the Fisher matrix itself ($H = F$), which produces no interior optimum (always favoring $\alpha \to 1$). The $H = I$ case may be a lower bound on $\alpha_{\text{opt}}$ for structured loss, but this is not proven. The physically most natural loss Hessian contradicts the interior optimum result.

The computational verification (mean error = 0.007) tests the analytical formula against numerical optimization of the same functional $T_A$. It confirms the mathematical derivation but does not validate whether $T_A$ corresponds to physical convergence time. Independent simulation of learning dynamics under the metric $g(c)$ would be needed for physical validation.

This constitutes a conditional prediction: IF Model A governs hypergraph learning AND the loss Hessian is approximately isotropic, THEN $\alpha$ is determined by Theorem 7.2. Neither condition has been independently verified.

7.5 Physical Interpretation

1. Regime Transition Threshold: Under Model A, $\kappa(F) = 2$ marks the transition between purely classical learning ($\alpha = 0$) and mixed-regime learning ($\alpha > 0$). This threshold is specific to Model A; the generalized formula gives the threshold $\kappa > (w + 1)/w$, where $w$ parameterizes the convergence functional.
2. Testable Prediction: Given an observer's topology (e.g., graph $G$ for an Ising model), compute the Fisher matrix $F(\theta)$, extract the eigenvalues $\lambda_{\min}, \lambda_{\max}$, and predict:

$$\alpha_{\text{predicted}} = \frac{-\Delta + \sqrt{\Delta(\Delta + 4)}}{2} \tag{24}$$

where $\Delta = \lambda_{\max} - 2\lambda_{\min}$. This can be tested against independent measurements of learning dynamics.
3. Partial Resolution of Open Question: Proposition 6.4 noted the open question of whether Vanchurin's mass term vanishes for persistent observers. Under the $M = F^2$ ansatz for exponential family observers (Remark 7.1), the mass term does not vanish, and the regime parameter $\alpha$ is not a free parameter but is determined by the Fisher spectrum via convergence time minimization. Independent verification of the ansatz itself remains open.

7.6 Directional Alpha and the Deviation Tensor

The scalar regime parameter $\alpha$ conceals a richer directional structure. Under the $M = F^2$ ansatz (Remark 7.1), different eigendirections of the Fisher metric can simultaneously occupy different Vanchurin regimes. We formalize this observation and introduce a complementary measure of departure from perfect Good Regulator geometry.

Definition 7.6 (Directional Regime Parameter). For an eigenvector $v_k$ of $F$ with eigenvalue $\lambda_k > 0$, define the directional regime parameter:

$$\alpha_{v_k} = \frac{\lambda_k}{\lambda_k + \beta} \tag{25}$$

where $\beta = \alpha^2/(1 - \alpha)$ as before.

Proposition 7.7 (Extremal Values). The directional $\alpha$ is maximized along the eigenvector with the largest Fisher eigenvalue ($\alpha_{\max} = \lambda_{\max}/(\lambda_{\max} + \beta)$, most quantum) and minimized along the smallest ($\alpha_{\min} = \lambda_{\min}/(\lambda_{\min} + \beta)$, most classical).

Proof. $f(x) = x/(x + \beta)$ is strictly increasing for $x > 0$ when $\beta > 0$.

Theorem 7.8 (Uniform α iff Spectral Purity). The directional $\alpha_v$ is independent of direction if and only if all eigenvalues of $F$ are equal (i.e., $F = \lambda I$ for some $\lambda > 0$).

Proof.
For eigenvectors $v_i, v_j$: $\alpha_{v_i} = \alpha_{v_j}$ iff $\lambda_i/(\lambda_i + \beta) = \lambda_j/(\lambda_j + \beta)$, which (for $\beta > 0$) holds iff $\lambda_i = \lambda_j$. The converse is immediate.

Corollary 7.9 (Spectral Purity Recovers $M \propto F$). Uniform directional $\alpha$ is equivalent to $M \propto F$. When $\alpha$ is uniform, $M = \lambda_0 F$ (with $\lambda_0$ the common eigenvalue), and the observer behaves as a single-regime Good Regulator.

The $\alpha$-spread $\Delta\alpha = \alpha_{\max} - \alpha_{\min} = \beta(\lambda_{\max} - \lambda_{\min})/[(\lambda_{\max} + \beta)(\lambda_{\min} + \beta)]$ measures internal regime inhomogeneity; it vanishes iff the observer has spectral purity. A single observer can thus have directions simultaneously in Vanchurin's classical ($\lambda_k \ll \beta$), efficient ($\lambda_k = \beta$), and quantum ($\lambda_k \gg \beta$) regimes. The quantum-classical transition is not a global phase transition but a spectral phenomenon occurring independently per eigendirection.

Definition 7.10 (Deviation Tensor). Let $\kappa = \mathrm{tr}(M)/\mathrm{tr}(F)$ (in the remainder of this subsection, $\kappa$ denotes this trace ratio, not the condition number). The deviation tensor is:

$$\Delta_{\mu\nu} = M_{\mu\nu} - \kappa F_{\mu\nu} \tag{26}$$

Proposition 7.11 (Trace-Free). $\mathrm{tr}(\Delta) = 0$ by construction.

Proposition 7.12 (Vanishing Deviation iff Perfect Structural Reflection). $\Delta = 0$ if and only if $M \propto F$ (i.e., the Structural Reflection Condition holds: internal structure is proportionally matched to information geometry in every direction).

Proof. Immediate from Definition 7.10: $\Delta = M - \kappa F = 0$ iff $M = \kappa F$, where $\kappa = \mathrm{tr}(M)/\mathrm{tr}(F)$ is determined by the definition.

The deviation fraction $\delta = \|\Delta\|_F / \|M\|_F$ measures how far an observer departs from perfect Good Regulator geometry ($\delta = 0$ iff $\Delta = 0$). For $M = F^2$ the eigenvalues of $\Delta$ are $\lambda_k(\lambda_k - \kappa)$; directions with $\lambda_k > \kappa$ carry excess inertia (over-massive), while those with $\lambda_k < \kappa$ are under-modeled.

Theorem 7.13 (Directional Alpha Determines Deviation Sign). Let $\delta_k = \lambda_k(\lambda_k - \kappa)$ denote the eigenvalue of $\Delta$ along $v_k$. An eigendirection $v_k$ is over-massive ($\delta_k > 0$) iff $\alpha_{v_k} > \alpha_{\text{mean}}$, and under-massive ($\delta_k < 0$) iff $\alpha_{v_k} < \alpha_{\text{mean}}$, where $\alpha_{\text{mean}} = \kappa/(\kappa + \beta)$.

Proof.
Both $\delta_k > 0$ and $\alpha_{v_k} > \alpha_{\text{mean}}$ reduce to $\lambda_k > \kappa$.

| Observer | $\kappa(F)$ | $\Delta\alpha$ | $\delta$ | Regime structure |
|---|---|---|---|---|
| Chain $P_n$ | 1.00 | 0 | 0 | Uniform (single regime) |
| Star $S_n$ | 1.00 | 0 | 0 | Uniform (single regime) |
| $K_3$ | 2.84 | 0.226 | 0.167 | Two-regime (mild) |
| $K_4$ | 9.73 | 0.397 | 0.380 | Two-regime (moderate) |

Observers with spectral purity (chain, star) are perfect Good Regulators with uniform directional $\alpha$, while structured observers ($K_n$) exhibit internal regime inhomogeneity quantified by both $\Delta\alpha$ and $\delta$.

8 Discussion

8.1 Two Uniqueness Theorems, One Axiom

This work establishes the Amari chain, parallel to the Lovelock bridge [8]:

| Aspect | Lovelock Bridge | Amari Chain |
|---|---|---|
| Axiom | Causal invariance | Causal invariance |
| Assumption 1 | Continuum limit | Persistent observer |
| Assumption 2 | (none additional) | Parameterization independence |
| Uniqueness Thm. | Lovelock (1971) | Amari (1998) |
| Constraint | Riemann tensor symmetries | Learning gradient |
| Result | Einstein equations | Natural gradient |
| Emergence | Gravity from geometry | Learning from geometry |

Both use established uniqueness theorems to show that causal invariance plus regularity conditions force specific physical structures.

8.2 Novelty Assessment and Honest Limitations

This synthesis contributes approximately 25–30% novelty:

• Known (70–75%): Conant-Ashby theorem (1970/2025), Amari theorem (1998), Fisher metric emergence from loss functions (standard information geometry), natural gradient uniqueness (Amari 1998), Wolfram hypergraphs, Vanchurin cosmology.
• New (25–30%): Formalization of persistent hypergraph observers, verification that Good Regulator conditions hold for causal networks, reparameterization invariance postulate from substrate independence, synthesis connecting three independent frameworks through this verification, the $M = F^2$ ansatz and convergence-time optimal $\alpha$ formula (Theorem 7.2), directional regime parameter $\alpha_{v_k}$ and deviation tensor $\Delta_{\mu\nu}$ (Section 7.6).
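The Section 7.6 quantities named in the last item can be computed directly from a Fisher spectrum. The following is a minimal numerical sketch, not the author's code. The spectrum `[1.0, 2.0, 4.0]` is a made-up example and the function and key names are hypothetical. The trace ratio of Definition 7.10 is called `kappa_tr` to keep it apart from the condition number $\kappa(F)$. The sketch assumes the $M = F^2$ ansatz and works in the eigenbasis of $F$. It handles only $\Delta > 0$, because this section does not specify how Eq. (24) is to be read below the $\kappa(F) = 2$ threshold.

```python
import numpy as np


def regime_parameter(fisher_eigs):
    """Scalar alpha from Eq. (24), using only the extreme Fisher eigenvalues."""
    lam = np.asarray(fisher_eigs, dtype=float)
    delta = lam.max() - 2.0 * lam.min()
    if delta <= 0.0:
        # Assumption of this sketch: only the Delta > 0 branch is handled.
        raise ValueError("sketch covers Delta > 0 (kappa(F) > 2) only")
    return (-delta + np.sqrt(delta * (delta + 4.0))) / 2.0


def directional_summary(fisher_eigs):
    """Directional alpha (Eq. 25) and deviation tensor eigenvalues (Eq. 26)."""
    lam = np.asarray(fisher_eigs, dtype=float)
    alpha = regime_parameter(lam)
    beta = alpha**2 / (1.0 - alpha)
    alpha_dir = lam / (lam + beta)        # Eq. (25), one value per eigendirection
    m_eigs = lam**2                       # eigenvalues of M under M = F^2
    kappa_tr = m_eigs.sum() / lam.sum()   # tr(M) / tr(F), Definition 7.10
    dev_eigs = m_eigs - kappa_tr * lam    # eigenvalues of Delta = M - kappa F
    return {
        "alpha": alpha,
        "beta": beta,
        "alpha_dir": alpha_dir,
        "alpha_spread": alpha_dir.max() - alpha_dir.min(),
        "alpha_mean": kappa_tr / (kappa_tr + beta),
        "dev_eigs": dev_eigs,
        # For symmetric matrices sharing an eigenbasis, the Frobenius norm
        # equals the 2-norm of the eigenvalue vector.
        "dev_fraction": np.linalg.norm(dev_eigs) / np.linalg.norm(m_eigs),
    }


summary = directional_summary([1.0, 2.0, 4.0])  # hypothetical spectrum
for key, value in summary.items():
    print(key, value)
```

One algebraic observation the sketch makes visible: $\alpha$ in Eq. (24) is the positive root of $\alpha^2 + \Delta\alpha - \Delta = 0$, so $\beta = \alpha^2/(1-\alpha)$ equals $\Delta = \lambda_{\max} - 2\lambda_{\min}$. The deviation eigenvalues sum to zero, as Proposition 7.11 requires, and their signs match the comparison of `alpha_dir` against `alpha_mean` stated in Theorem 7.13.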
What we did NOT derive: We did not discover that loss functions induce Fisher metrics (standard since Amari 1998), nor that natural gradients are unique under reparameterization (Amari's textbook result). Our contribution is the application domain (hypergraph cosmology), verification rigor (showing the Good Regulator framework applies), and computational predictions (regime parameter formula), not the mathematical machinery itself.

The value lies in demonstrating that these independently developed theories (Wolfram, Vanchurin, Amari) are mutually consistent when applied to causally invariant observers.

8.3 Open Questions and Future Work

1. Continuum Limit: Does the hypergraph continuum limit rigorously exist? (Same challenge as Lovelock bridge.)
2. Fisher = Onsager: Detailed verification that Vanchurin's $L_{ij}$ is the Fisher metric requires analysis of his Section 3 derivation.
3. Quantum Mechanics: Can purification axioms be derived from causal invariance (Paper #2 in this program)?
4. Standard Model: Can gauge structure be constrained by causal invariance?
5. Circular Reasoning: Verify logical independence between this work (learning) and QM derivation (Paper #2).

8.4 Relation to Free Energy Principle

Friston's Free Energy Principle [13] asserts that organisms minimize:

$$\mathcal{F} = \langle \varepsilon \rangle + D_{\mathrm{KL}}(q \,\|\, p) \tag{27}$$

where $q$ is the agent's belief distribution and $p$ is the true environment distribution. Our prediction error minimization (Theorem 3.3) is consistent with this framework: persistent observers minimize surprise $\langle \varepsilon \rangle$, equivalent to free energy minimization under appropriate assumptions. The Virgo reformulation explicitly connects Good Regulator to active inference [9].
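As a toy illustration of the free-energy expression in Eq. (27), the sketch below evaluates it for discrete distributions. Everything numerical here is hypothetical: the three-state environment, the per-state prediction errors, and the reading of $\langle \varepsilon \rangle$ as an expectation under the belief $q$ are assumptions made for the example, not definitions taken from the paper.

```python
import numpy as np


def kl_divergence(q, p):
    """D_KL(q || p) for discrete distributions, with 0 * log(0) taken as 0."""
    q = np.asarray(q, dtype=float)
    p = np.asarray(p, dtype=float)
    mask = q > 0
    return float(np.sum(q[mask] * np.log(q[mask] / p[mask])))


def free_energy(errors, q, p):
    """Eq. (27) with <eps> read as the q-weighted mean error (an assumption)."""
    mean_error = float(np.dot(np.asarray(q, dtype=float), errors))
    return mean_error + kl_divergence(q, p)


# Hypothetical three-state environment and per-state prediction errors.
p_env = np.array([0.7, 0.2, 0.1])
errors = np.array([0.1, 0.5, 0.9])

q_matched = p_env.copy()            # belief equal to the environment
q_uniform = np.full(3, 1.0 / 3.0)   # uninformed belief

fe_matched = free_energy(errors, q_matched, p_env)
fe_uniform = free_energy(errors, q_uniform, p_env)
print(fe_matched, fe_uniform)
```

In this example the matched belief has zero divergence term and a lower total than the uniform belief, which is the qualitative behaviour the paragraph above relies on.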
8.5 Implications for Cosmology

If observers and learning are generic features of causally invariant substrates, then:

• The universe contains persistent structures (galaxies, stars, organisms, intelligence) not by accident, but as necessary consequences of causal invariance.
• Learning dynamics (Vanchurin Eq. 3.4) are as fundamental as gravitational dynamics (Einstein equations).
• The "unreasonable effectiveness" of learning algorithms may reflect deep geometric constraints, not empirical tuning.

9 Limitations and Scope Boundaries

We emphasize several important limitations of this work:

9.1 Standard vs Novel Results

What is textbook (not novel):

• Fisher information metric emergence from loss functions is a standard result in information geometry [10], derived in every textbook on the subject.
• Natural gradient uniqueness under reparameterization is Amari's well-known 1998 theorem, not our discovery.
• The mathematical structure of statistical manifolds and their geometry has been thoroughly developed since the 1980s.

What is novel (our contribution):

• Application to hypergraph cosmology (new domain).
• Verification that hypergraph observers satisfy Good Regulator conditions.
• Synthesis connecting Wolfram and Vanchurin frameworks through this verification.

The novelty is approximately 25–30%, residing in the application and verification plus conditional computational predictions (under the $M = F^2$ ansatz), not in the mathematical machinery.

9.2 Unresolved Technical Issues

1. Continuum Limit: Like Paper #1 (Lovelock Bridge), we assume the continuum limit of hypergraph evolution exists and is well-behaved. Paper #1 empirically disconfirms the continuum limit for all 500 dynamically nontrivial rules tested; thus this assumption may not hold for generic substrates.
2. Fisher = Onsager: We have not verified in detail that Vanchurin's Onsager tensor $L_{ij}$ (Eq.
3.4) is mathematically identical to the Fisher metric $g_{ij}$. This requires careful analysis of his Section 3 derivation.
3. Probability Measure: The origin of the probability distribution $p_\theta$ in a fundamentally deterministic hypergraph substrate is not fully formalized. We assume coarse-graining or multiway branching induces probabilities, but this deserves rigorous treatment.
4. Boundary Formalization: The observer boundary $\partial O$ is defined intuitively but lacks rigorous topological characterization in discrete hypergraph space.
5. Convergence Model Dependence: The optimal $\alpha$ formula (Theorem 7.2) assumes convergence time $T = \kappa(g) \cdot \mu_{\max}(g)$ (Model A). Three alternative convergence models (Models B–D in Theorem 7.5) do not produce interior optima. The choice of Model A is motivated by dimensional analysis and numerical fit but is not derived from first principles. Independent verification against Vanchurin's full Type II framework is needed.
6. Loss Hessian Sensitivity: For maximum likelihood estimation of exponential families, the expected loss Hessian equals the Fisher matrix ($H = F$). Under this physically natural assumption, the convergence time functional has no interior optimum, always favoring $\alpha \to 1$. The closed-form result in Theorem 7.2 holds for isotropic loss ($H = I$), which may not describe realistic observers. The relationship between the observer's loss landscape and the Fisher geometry requires further investigation.

9.3 Relationship to Other Work

This paper should be understood as:

• NOT a derivation of Fisher metrics or natural gradients (those are Amari 1998).
• NOT a proof that learning dynamics are uniquely determined by physics (we show consistency, not derivation).
• YES a verification that established theorems apply to hypergraph observers.
• YES a synthesis connecting independent cosmological frameworks.
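The reparameterization invariance that the first bullet attributes to Amari can be checked by hand in a one-parameter case. The sketch below is an illustration under stated assumptions, not part of the paper: it uses a Bernoulli model, a hypothetical squared-error loss with target mean `TARGET`, and compares the mean parameterization $p$ with the logit parameterization $\eta$. The Fisher information is $1/(p(1-p))$ in $p$ and $p(1-p)$ in $\eta$, both standard results for the Bernoulli family.

```python
import math

TARGET = 0.8  # hypothetical target mean for the toy loss L(p) = (p - TARGET)^2


def sigmoid(eta):
    return 1.0 / (1.0 + math.exp(-eta))


def loss_grad_p(p):
    """dL/dp for the toy loss."""
    return 2.0 * (p - TARGET)


def natural_grad_in_p(p):
    """Natural gradient computed directly in the mean parameter p."""
    fisher_p = 1.0 / (p * (1.0 - p))
    return loss_grad_p(p) / fisher_p


def natural_grad_via_eta(eta):
    """Natural gradient computed in the logit eta, then mapped back to p."""
    p = sigmoid(eta)
    dp_deta = p * (1.0 - p)
    grad_eta = loss_grad_p(p) * dp_deta   # chain rule
    fisher_eta = p * (1.0 - p)
    return dp_deta * (grad_eta / fisher_eta)


def plain_grad_via_eta(eta):
    """Ordinary gradient computed in eta, then mapped back to p."""
    p = sigmoid(eta)
    dp_deta = p * (1.0 - p)
    return dp_deta * (loss_grad_p(p) * dp_deta)


p0 = 0.3
eta0 = math.log(p0 / (1.0 - p0))

print(natural_grad_in_p(p0), natural_grad_via_eta(eta0))  # agree
print(loss_grad_p(p0), plain_grad_via_eta(eta0))          # disagree
```

The natural-gradient direction is the same whichever coordinate is used, while the ordinary gradient picks up an extra factor of $(dp/d\eta)^2$. This is the property that singles out the Fisher-preconditioned update in the argument summarized above.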
We position this as solid verification work in a novel application domain, not as a fundamental mathematical discovery.

Connection to thermodynamic gravity: The Fisher information metric's connection to gravitational dynamics has been established by Matsueda [14], who derived Einstein field equations from the Fisher metric via statistical mechanics. Our framework complements this: while Matsueda showed Fisher → Einstein through thermodynamics, we show that Fisher metric emergence in observers (via Conant-Ashby + loss minimization) is compatible with Lovelock-constrained gravity from causal invariance.

10 Conclusion

We have verified that persistent observers in causally invariant substrates satisfy the conditions under which standard information geometry applies, thereby demonstrating consistency between Wolfram hypergraph physics and Vanchurin's learning cosmology. The constraint to natural gradient descent follows from established theorems: the Conant-Ashby Good Regulator Theorem (Virgo et al. 2025 reformulation) and Amari's uniqueness theorem (1998) for reparameterization-invariant gradients.

This completes the second pillar of the cosmological unification program:

• Paper #1 (Lovelock Bridge): Examines whether causal invariance → Einstein equations (via Lovelock uniqueness); the bridge fails numerically, but yields constructive Type II results.
• Paper #3 (Amari Chain): Causal invariance → Natural gradient learning (via Good Regulator + Amari uniqueness).
• Computational Prediction (Conditional): Under Model A convergence with isotropic loss, regime parameter $\alpha$ determined by Fisher spectrum (Theorem 7.2), with threshold $\kappa(F) = 2$ for regime transition. Model dependence and loss Hessian sensitivity are open limitations (Section 9).
• Directional Alpha and Deviation Tensor: The directional regime parameter $\alpha_{v_k}$ (Definition 7.6) and trace-free deviation tensor $\Delta_{\mu\nu}$ (Definition 7.10) reveal that the quantum–classical transition is a spectral phenomenon: a single observer can simultaneously occupy all three Vanchurin regimes along different Fisher eigendirections. Observers with vanishing deviation ($\delta = 0$) are spectrally pure, corresponding to perfect Good Regulator geometry.

Together, these results suggest that Wolfram hypergraph physics and Vanchurin neural network cosmology are complementary perspectives on a unified causally invariant substrate. The synthesis is accomplished through established uniqueness theorems applied to a novel cosmological framework, not through new mathematical derivations. The convergence-time optimal $\alpha$ formula provides a testable prediction from the framework.

Honest scope: Our contribution is verification work in a new application domain (hypergraph cosmology), demonstrating that known theorems apply, plus the $M = F^2$ ansatz for exponential families, regime parameter determination under that ansatz, and the directional alpha/deviation tensor analysis. We do not claim to have derived Fisher metrics or natural gradients, which are standard results in information geometry. Future work will address the continuum limit challenge (shared with Paper #1), verify the Fisher-Onsager identification, independently test the $\alpha$ formula against Vanchurin's framework, and explore whether quantum mechanics can be similarly derived from causal invariance.

Acknowledgments: This work was developed using Claude Code (Anthropic) for literature review, formalization, and verification. The author thanks the Wolfram Physics Project and Vitaly Vanchurin for foundational contributions that made this synthesis possible.

References

[1] Stephen Wolfram. A Project to Find the Fundamental Theory of Physics.
Available at: https://www.wolframphysics.org/. Wolfram Media, 2020.
[2] David Lovelock. "The Einstein Tensor and Its Generalizations". In: Journal of Mathematical Physics 12.3 (1971), pp. 498–501. doi: 10.1063/1.1665613.
[3] Vitaly Vanchurin. "The World as a Neural Network". In: Entropy 22.11 (2020), p. 1210. doi: 10.3390/e22111210. arXiv: 2008.01540 [physics.gen-ph].
[4] Vitaly Vanchurin. "Towards a Theory of Machine Learning". In: arXiv preprint (2020). Statistical-mechanics foundations for learning dynamics. arXiv: 2004.09280 [cs.LG].
[5] Vitaly Vanchurin. "Towards a Theory of Quantum Gravity from Neural Networks". In: Entropy 24.1 (2022), p. 7. doi: 10.3390/e24010007. arXiv: 2112.09006 [hep-th].
[6] Vitaly Vanchurin. "Covariant Gradient Descent in Trainable Neural Networks". In: arXiv preprint (2025). Type II framework: metric on parameter space. arXiv: 2504.05279 [hep-th].
[7] Vitaly Vanchurin. "Geometric Learning Dynamics". In: arXiv preprint (2025). Type II learning-dynamics framework. arXiv: 2504.14728 [hep-th].
[8] Max Zhuravlev. "Where the Lovelock Bridge Breaks: Negative Results and New Directions for Connecting Discrete and Continuous Spacetime Emergence". In: arXiv preprint (2026). Paper #1 of Cosmological Unification Program (submitted simultaneously).
[9] Nathaniel Virgo et al. "A 'Good Regulator Theorem' for Embodied Agents". In: arXiv preprint arXiv:2508.06326 (2025). Presented at ALIFE 2025, Kyoto, Japan.
[10] Shun-ichi Amari. "Natural Gradient Works Efficiently in Learning". In: Neural Computation 10.2 (1998), pp. 251–276. doi: 10.1162/089976698300017746.
[11] Shun-ichi Amari. Information Geometry and Its Applications. Vol. 194. Applied Mathematical Sciences. Springer, 2016. doi: 10.1007/978-4-431-55978-8.
[12] Roger C Conant and W Ross Ashby. "Every Good Regulator of a System Must Be a Model of That System".
In: International Journal of Systems Science 1.2 (1970), pp. 89–97. doi: 10.1080/00207727008920220.
[13] Karl Friston. "The Free-Energy Principle: A Unified Brain Theory?" In: Nature Reviews Neuroscience 11.2 (2010), pp. 127–138. doi: 10.1038/nrn2787.
[14] Hiroaki Matsueda. "Emergent General Relativity from Fisher Information Metric". In: arXiv preprint (2013). Published in Progress of Theoretical Physics 130(4), 2013. arXiv: 1310.1831 [hep-th].
