UAT-LITE: Inference-Time Uncertainty-Aware Attention for Pretrained Transformers

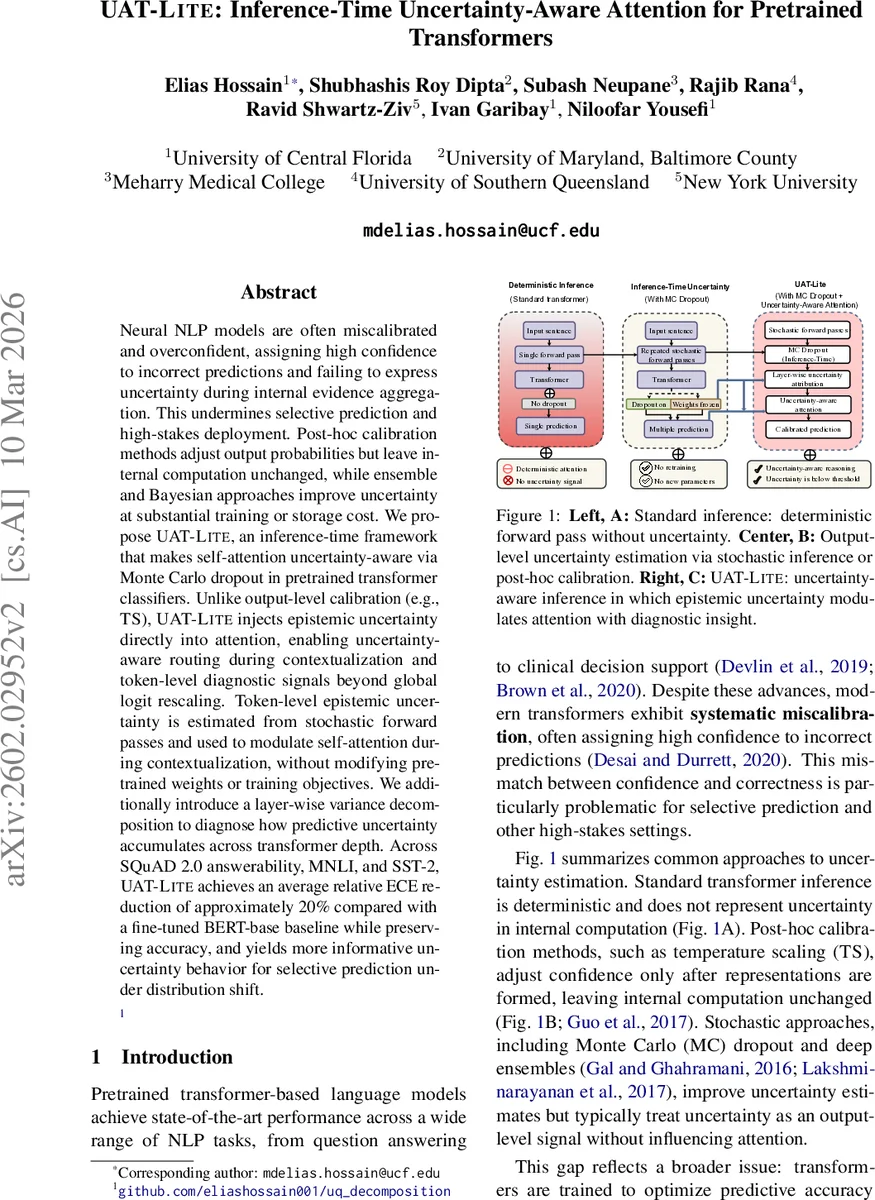

Neural NLP models are often miscalibrated and overconfident, assigning high confidence to incorrect predictions and failing to express uncertainty during internal evidence aggregation. This undermines selective prediction and high-stakes deployment. Post-hoc calibration methods adjust output probabilities but leave internal computation unchanged, while ensemble and Bayesian approaches improve uncertainty at substantial training or storage cost. We propose UAT-LITE, an inference-time framework that makes self-attention uncertainty-aware via Monte Carlo dropout in pretrained transformer classifiers. Unlike output-level calibration (e.g., TS), UAT-LITE injects epistemic uncertainty directly into attention, enabling uncertainty-aware routing during contextualization and token-level diagnostic signals beyond global logit rescaling. Token-level epistemic uncertainty is estimated from stochastic forward passes and used to modulate self-attention during contextualization, without modifying pretrained weights or training objectives. We additionally introduce a layer-wise variance decomposition to diagnose how predictive uncertainty accumulates across transformer depth. Across SQuAD 2.0 answerability, MNLI, and SST-2, UAT-LITE achieves an average relative ECE reduction of approximately 20% compared with a fine-tuned BERT-base baseline while preserving accuracy, and yields more informative uncertainty behavior for selective prediction under distribution shift.

💡 Research Summary

The paper addresses a critical gap in modern pretrained transformer classifiers: while they achieve state‑of‑the‑art accuracy, they are notoriously miscalibrated and provide no uncertainty signal during the internal evidence‑aggregation process (self‑attention). Existing remedies fall into two categories. Post‑hoc calibrators such as Temperature Scaling (TS) merely rescale the final logits, leaving the internal computation untouched. Stochastic methods like Monte‑Carlo (MC) dropout or deep ensembles improve uncertainty estimates but treat uncertainty as an output‑level quantity and do not influence how tokens attend to each other. Bayesian variants that truly embed uncertainty often require architectural changes, variational training, or multiple model copies, which defeats the purpose of leveraging large pretrained models without costly retraining.

UAT‑LITE (Uncertainty‑Aware Attention at Inference‑Time) proposes a lightweight, inference‑only solution that injects epistemic uncertainty directly into the self‑attention mechanism. The method proceeds as follows:

- Monte‑Carlo Dropout at Test Time – Dropout layers are kept active during inference and the model is run M times (M ∈ {3,5,10} in the experiments). Each stochastic pass yields a different embedding for every token.

- Token‑Level Uncertainty Estimation – For token j, the standard deviation across the M embedding samples (averaged over the embedding dimension) defines a scalar uncertainty U(x_j). This quantity serves as a proxy for how unstable the token’s representation is under the posterior induced by dropout.

- Uncertainty‑Weighted Attention – The usual scaled‑dot‑product attention logits a_ij = Q_i K_j^T/√d_k are attenuated by a factor exp(‑λ·u_ij), where u_ij is derived from token uncertainties. Several variants are explored (Q‑only, K‑only, QK‑sum, etc.); the Q‑only variant (using the query token’s uncertainty) consistently yields the best calibration‑accuracy trade‑off. λ is a fixed hyper‑parameter controlling the strength of the penalty.

- Online Estimation – To avoid a separate embedding‑only sampling stage, the uncertainty estimate is updated incrementally after each stochastic pass. In pass m the attention uses the lagged estimate \hat{U}^{(m‑1)}(x_j), ensuring a single M‑pass loop that produces both the final MC‑mean logits and the token‑level uncertainty map.

- Layer‑Wise Variance Decomposition – Using the law of total variance, the authors analytically decompose the predictive variance across transformer layers. This diagnostic tool highlights which layers amplify epistemic noise, offering insight for model debugging or future architectural improvements.

- Optional Post‑hoc TS – UAT‑LITE can be combined with temperature scaling on the MC‑mean logits for further probability calibration, but the two mechanisms operate at different stages (internal attention vs. final logits).

The experimental suite covers five benchmarks: SQuAD 2.0 answerability (question answering with unanswerable queries), MNLI (natural language inference, both matched and mismatched splits), SST‑2 (binary sentiment), and two medical QA datasets (MedQA, PubMedQA) to test domain shift. The backbone is BERT‑base (and in some ablations RoBERTa‑base). Baselines include: (i) deterministic BERT, (ii) BERT + TS, (iii) BERT + MC dropout without attention modulation, (iv) Deep Ensembles (5 models). Evaluation metrics focus on Expected Calibration Error (ECE), Brier score, and selective prediction performance measured by risk‑coverage curves (AUC‑RC).

Key findings:

- Calibration – UAT‑LITE reduces average ECE by roughly 20 % relative to the deterministic baseline, outperforming TS (which reduces ECE but cannot go below ~0.07 on some tasks) and matching or slightly surpassing deep ensembles while using a single model.

- Accuracy Preservation – Across all tasks, top‑1 accuracy drops by less than 0.2 %, confirming that the uncertainty‑aware attention does not sacrifice predictive power.

- Selective Prediction – When abstaining on low‑confidence examples (threshold tuned on validation), UAT‑LITE achieves higher coverage at a fixed risk level, especially under distribution shift (MNLI‑mismatched, MedQA). This demonstrates that the model’s confidence becomes more trustworthy.

- Layer Diagnostics – The variance decomposition reveals that early layers tend to dampen token‑level noise, while middle layers (especially the 6‑8th transformer block) often amplify it. This pattern aligns with intuition that higher‑level semantic integration is where ambiguous tokens exert larger influence.

- Ablation on λ and M – Larger M yields more stable uncertainty estimates but with diminishing returns beyond M = 5. λ ≈ 1.0 provides the best balance; overly aggressive attenuation (λ ≥ 2) harms accuracy, while λ ≈ 0.5 yields modest calibration gains.

Limitations and future directions are candidly discussed. The primary overhead is the linear increase in inference time with M; however, the authors argue that for high‑stakes applications (medical QA, legal document analysis) the trade‑off is acceptable. The method is currently limited to encoder‑only architectures; extending uncertainty‑aware attention to decoder‑only generative models (e.g., GPT‑3) is left as future work. Moreover, the approach assumes that dropout‑induced variance is a good proxy for epistemic uncertainty, which may not hold for all pretraining regimes.

In summary, UAT‑LITE offers a practical, training‑free technique to embed epistemic uncertainty into the core attention mechanism of pretrained transformers. By doing so, it improves calibration, yields richer token‑level diagnostic signals, and enhances selective prediction under both in‑domain and out‑of‑domain conditions, all while preserving the model’s original accuracy. This work bridges the gap between post‑hoc calibration and fully Bayesian transformer variants, providing a middle ground that is both computationally feasible and empirically effective.

Comments & Academic Discussion

Loading comments...

Leave a Comment