Learning responsibility allocations for multi-agent interactions: A differentiable optimization approach with control barrier functions

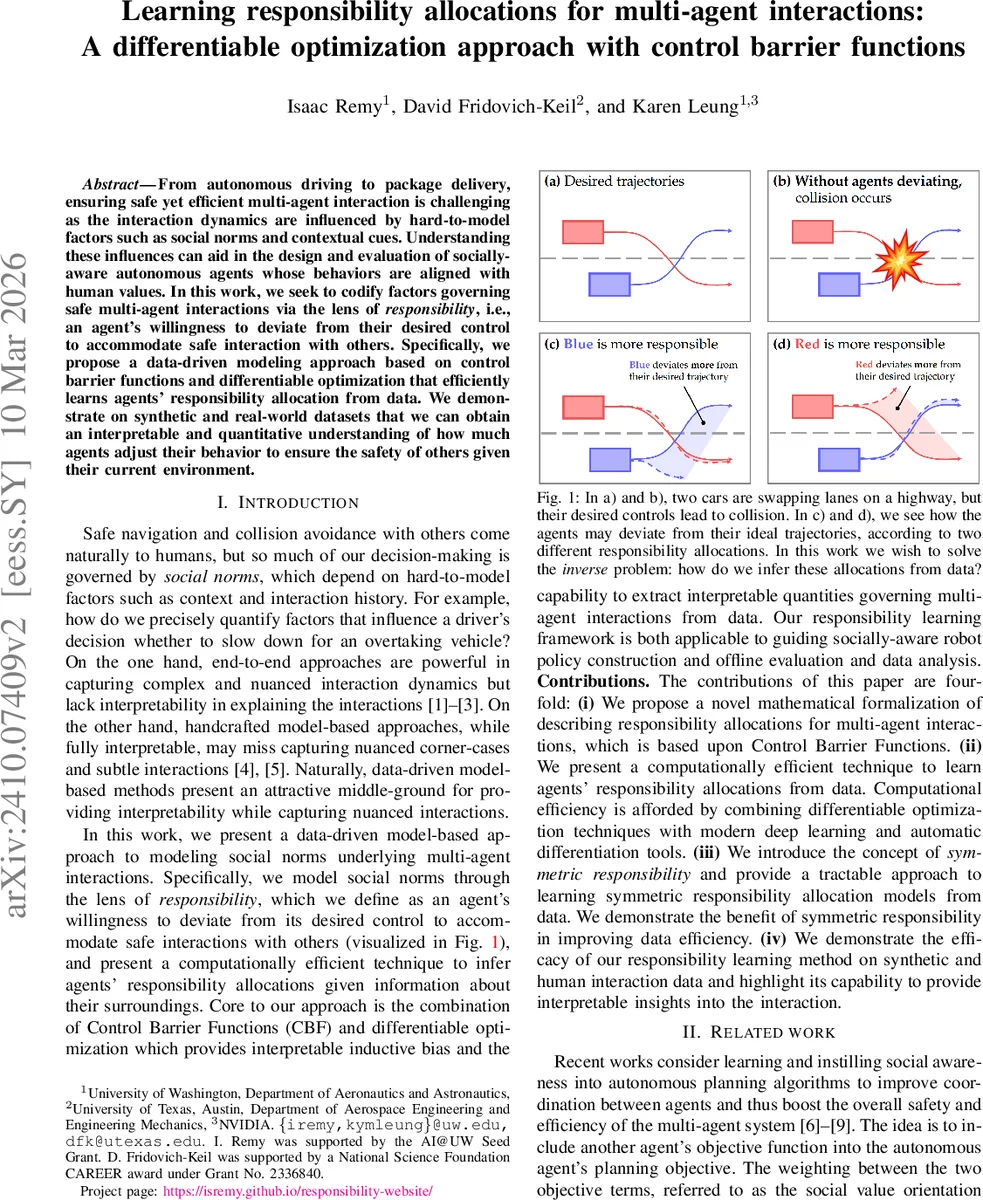

From autonomous driving to package delivery, ensuring safe yet efficient multi-agent interaction is challenging as the interaction dynamics are influenced by hard-to-model factors such as social norms and contextual cues. Understanding these influences can aid in the design and evaluation of socially-aware autonomous agents whose behaviors are aligned with human values. In this work, we seek to codify factors governing safe multi-agent interactions via the lens of responsibility, i.e., an agent’s willingness to deviate from their desired control to accommodate safe interaction with others. Specifically, we propose a data-driven modeling approach based on control barrier functions and differentiable optimization that efficiently learns agents’ responsibility allocation from data. We demonstrate on synthetic and real-world datasets that we can obtain an interpretable and quantitative understanding of how much agents adjust their behavior to ensure the safety of others given their current environment.

💡 Research Summary

The paper tackles the problem of quantifying how much each agent in a multi‑agent system is willing to compromise its own desired control in order to maintain safety, a notion the authors term “responsibility allocation.” Using Control Barrier Functions (CBFs) as a safety filter, the authors formulate a projection of the agents’ nominal controls u_des onto the set of controls that satisfy a shared safety constraint ∇b(x)·f(x,u) + α(b(x)) ≥ 0. The key innovation is a responsibility vector γ ∈ ℝⁿ, with non‑negative entries that sum to one, which weights each agent’s deviation cost ‖u_i − u_des_i‖² in that projection. The weighting is set up so that responsibility and deviation go together: when γ_i is small, agent i bears little responsibility and deviates little from u_des_i; when γ_i approaches one, the agent assumes most of the burden of the corrective action.
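For a single affine constraint, a responsibility-weighted projection has a closed form, which makes the effect of γ easy to see. The sketch below is a minimal illustration under assumptions that are not taken from the paper: control-affine dynamics, so the CBF condition reduces to a·u + c ≥ 0; weights w_i = (1 − γ_i) + ρ, chosen so that an agent with larger γ_i is cheaper to deflect (matching the behavior described above); a small hypothetical regularizer ρ that keeps every weight positive; and one scalar control per agent. The function name `responsibility_filter` is hypothetical.

```python
from typing import List, Sequence


def responsibility_filter(
    u_des: Sequence[float],
    a: Sequence[float],
    c: float,
    gamma: Sequence[float],
    rho: float = 1e-3,
) -> List[float]:
    """Solve min_u sum_i w_i (u_i - u_des_i)^2  s.t.  sum_i a_i u_i + c >= 0.

    Assumed weights: w_i = (1 - gamma_i) + rho, so a larger gamma_i makes
    deviating cheaper and gives agent i a larger share of the correction.
    """
    if min(gamma) < 0 or abs(sum(gamma) - 1.0) > 1e-9:
        raise ValueError("gamma must lie on the probability simplex")
    weights = [(1.0 - g) + rho for g in gamma]
    residual = sum(ai * ui for ai, ui in zip(a, u_des)) + c
    if residual >= 0:
        return list(u_des)  # nominal controls already satisfy the CBF condition
    denom = sum(ai * ai / (2.0 * wi) for ai, wi in zip(a, weights))
    if denom == 0.0:
        raise ValueError("no agent can influence the constraint; the QP is infeasible")
    # Constraint is active: KKT gives u_i = u_des_i + lam * a_i / (2 w_i).
    lam = -residual / denom
    return [ui + lam * ai / (2.0 * wi) for ui, ai, wi in zip(u_des, a, weights)]


if __name__ == "__main__":
    # Two 1-D single integrators, x1 trailing x2, with the assumed barrier
    # b(x) = x2 - x1 - d_min and alpha(b) = k * b. Since db/dt = u2 - u1,
    # the CBF condition is -u1 + u2 + k * b >= 0, i.e. a = [-1, 1], c = k * b.
    x1, x2, d_min, k = 0.0, 1.5, 1.0, 1.0
    c = k * (x2 - x1 - d_min)
    u_des = [2.0, 0.0]  # the trailing agent wants to close the gap quickly
    for gamma in ([0.8, 0.2], [0.5, 0.5], [0.0, 1.0]):
        u = responsibility_filter(u_des, [-1.0, 1.0], c, gamma)
        print(gamma, [round(ui, 3) for ui in u])
```

With γ = [0.8, 0.2] the trailing agent absorbs roughly four times as much of the correction as the leading agent (the weight ratio w_2/w_1), while with γ = [0.0, 1.0] the leading agent does almost all of the adjusting.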

The authors define a quadratic program (Problem 3) that incorporates the weighted deviation costs, a slack variable ε to handle infeasibility, and regularization terms that keep the problem strictly convex, and hence its solution unique, even when an agent carries full responsibility. They also propose a symmetric‑responsibility variant in which agents in symmetric situations share identical γ values, reducing the number of parameters and improving data efficiency.
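A problem of this kind can be written out with its KKT solution for a single constraint. The form below is an illustrative assumption rather than the paper's exact Problem 3: it assumes control-affine dynamics ẋ = f_0(x) + Σ_i g_i(x) u_i, weights w_i = 1 − γ_i + ρ with a hypothetical regularizer ρ > 0, and a quadratic slack penalty p ε² with p > 0.

```latex
% Assumed: \dot{x} = f_0(x) + \sum_i g_i(x)\,u_i, so the CBF condition is affine in u.
\begin{aligned}
& a_i(x) = g_i(x)^{\top}\nabla b(x), \qquad c(x) = \nabla b(x)\cdot f_0(x) + \alpha\big(b(x)\big),\\
& \min_{u,\ \varepsilon \ge 0}\ \sum_{i=1}^{n} w_i\,\lVert u_i - u_i^{\mathrm{des}}\rVert^2 + p\,\varepsilon^2
  \quad \text{s.t.} \quad \sum_{i=1}^{n} a_i(x)^{\top}u_i + c(x) + \varepsilon \ge 0,
  \qquad w_i = 1 - \gamma_i + \rho,\\
& \lambda = \frac{-\big(\sum_{i} a_i^{\top}u_i^{\mathrm{des}} + c\big)}{\sum_{i} \lVert a_i\rVert^2/(2w_i) + 1/(2p)},
  \qquad u_i^{\star} = u_i^{\mathrm{des}} + \frac{\lambda}{2w_i}\,a_i,
  \qquad \varepsilon^{\star} = \frac{\lambda}{2p}.
\end{aligned}
```

The expression for λ applies when Σ_i a_iᵀu_i^des + c < 0; otherwise u* = u^des and ε* = 0. Because ρ > 0 keeps every w_i positive even when γ_i = 1, the objective remains strictly convex in u, and the slack keeps the problem feasible even if every a_i vanishes (then ε* = −c).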

To learn γ from observed interaction data, the paper casts the problem as a bi‑level optimization: the outer level minimizes a loss measuring the discrepancy between observed controls and the projected controls produced by the inner CBF‑based QP, while the inner level solves the QP for each data point. Using differentiable optimization tools (e.g., cvxpylayers, qpth) and automatic‑differentiation frameworks such as JAX, the authors back‑propagate through the QP, compute gradients ∂ℓ/∂γ, and update γ by gradient descent. The responsibility vector is parameterized as γ = softmax(e_γ), where e_γ is an unconstrained vector, so the simplex constraints (non‑negative entries summing to one) hold automatically and no projection step is needed.
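The bi-level loop can be illustrated end to end on a toy problem. The sketch below is not the authors' implementation: the inner QP is an assumed closed-form single-constraint projection with weights w_i = (1 − γ_i) + ρ, γ is parameterized as softmax(e_γ) as described, and, to stay dependency-free, automatic differentiation through the QP is replaced by central finite differences on the logits. The helper names (`project`, `mean_loss`, `fit_gamma`) and all numeric settings are hypothetical; with JAX one would instead differentiate through the solver, e.g. with `jax.grad`.

```python
import math
from typing import List, Sequence, Tuple

# One observation: (u_des, a, c, u_obs) for a single affine CBF constraint.
Sample = Tuple[Sequence[float], Sequence[float], float, Sequence[float]]


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def project(u_des, a, c, gamma, rho=1e-3):
    """Inner problem: closed-form responsibility-weighted CBF projection (assumed form)."""
    weights = [(1.0 - g) + rho for g in gamma]
    residual = sum(ai * ui for ai, ui in zip(a, u_des)) + c
    if residual >= 0:
        return list(u_des)
    denom = sum(ai * ai / (2.0 * wi) for ai, wi in zip(a, weights))
    lam = -residual / denom
    return [ui + lam * ai / (2.0 * wi) for ui, ai, wi in zip(u_des, a, weights)]


def mean_loss(logits: Sequence[float], data: Sequence[Sample]) -> float:
    """Outer objective: mean squared error between projected and observed controls."""
    gamma = softmax(logits)
    total = 0.0
    for u_des, a, c, u_obs in data:
        u_hat = project(u_des, a, c, gamma)
        total += sum((uh - uo) ** 2 for uh, uo in zip(u_hat, u_obs))
    return total / len(data)


def fit_gamma(data, n_agents, lr=0.5, steps=500, h=1e-6) -> List[float]:
    logits = [0.0] * n_agents  # start from equal responsibility
    for _ in range(steps):
        grad = []
        for k in range(n_agents):
            plus, minus = list(logits), list(logits)
            plus[k] += h
            minus[k] -= h
            # Central finite difference stands in for back-propagation through the QP.
            grad.append((mean_loss(plus, data) - mean_loss(minus, data)) / (2.0 * h))
        logits = [z - lr * g for z, g in zip(logits, grad)]
    return softmax(logits)


if __name__ == "__main__":
    # Synthetic two-agent data generated with a known ground-truth gamma.
    gamma_true = [0.7, 0.3]
    a = [-1.0, 1.0]
    data = []
    for u_des, c in [([2.0, 0.0], 0.5), ([1.5, 0.5], 0.0), ([1.0, -0.5], 0.5)]:
        data.append((u_des, a, c, project(u_des, a, c, gamma_true)))
    print(fit_gamma(data, n_agents=2))
```

Because the softmax output always lies on the simplex, the gradient step can act on the logits freely, which is the design choice the summary describes.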

Experimental validation proceeds in three stages. First, synthetic 1‑D two‑agent integrator scenarios demonstrate that the method can recover ground‑truth γ values across the full spectrum from 0 to 1. Second, high‑dimensional vehicle simulations (multiple lanes, acceleration/deceleration dynamics) show that the learned γ adapts sensibly to relative positions and velocities, assigning more responsibility to the agent that is better positioned to avoid a collision. Third, real‑world driving data collected from human drivers during lane‑changing and overtaking maneuvers reveal interpretable patterns: a trailing vehicle assumes higher responsibility when the leading vehicle brakes abruptly, while the leading vehicle’s responsibility rises when it yields to an overtaking car. The symmetric‑responsibility model achieves comparable performance with fewer training samples, confirming its data‑efficiency advantage.
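The first experiment, recovering a known γ in a 1-D two-agent integrator setting, can be mimicked with a short simulation. The sketch below is a hypothetical stand-in rather than the paper's protocol: it assumes the closed-form projection with weights w_i = (1 − γ_i) + ρ, a linear class-K function α(b) = k·b, and a gap-keeping barrier b(x) = x_2 − x_1 − d_min, and it recovers γ by inverting that model directly instead of by gradient descent. Because both agents enter the constraint with unit magnitude, agent 1's share of the total correction is f = (γ_1 + ρ)/(1 + 2ρ), so γ_1 = f(1 + 2ρ) − ρ. The names `simulate` and `estimate_gamma1` are hypothetical.

```python
from typing import List, Tuple


def project(u_des, a, c, gamma, rho):
    """Closed-form responsibility-weighted projection (assumed weights (1 - gamma_i) + rho)."""
    weights = [(1.0 - g) + rho for g in gamma]
    residual = sum(ai * ui for ai, ui in zip(a, u_des)) + c
    if residual >= 0:
        return list(u_des)
    denom = sum(ai * ai / (2.0 * wi) for ai, wi in zip(a, weights))
    lam = -residual / denom
    return [ui + lam * ai / (2.0 * wi) for ui, ai, wi in zip(u_des, a, weights)]


def simulate(gamma1, rho=1e-3, steps=50, dt=0.1, d_min=1.0, k=1.0):
    """Roll out two 1-D single integrators (x_dot_i = u_i) under the safety filter."""
    gamma = [gamma1, 1.0 - gamma1]
    x = [0.0, 3.0]      # agent 1 trails agent 2
    u_des = [2.0, 0.5]  # agent 1 wants to close the gap
    samples: List[Tuple[List[float], List[float]]] = []
    for _ in range(steps):
        b = x[1] - x[0] - d_min
        u = project(u_des, [-1.0, 1.0], k * b, gamma, rho)
        if u != u_des:  # keep only steps where the filter intervened
            samples.append((list(u_des), u))
        x = [xi + dt * ui for xi, ui in zip(x, u)]  # forward-Euler step
    return samples


def estimate_gamma1(samples, rho=1e-3) -> float:
    """Invert f = (gamma1 + rho) / (1 + 2 rho), averaged over intervention steps."""
    estimates = []
    for u_des, u in samples:
        d1, d2 = abs(u[0] - u_des[0]), abs(u[1] - u_des[1])
        f = d1 / (d1 + d2)  # agent 1's share of the total correction
        estimates.append(f * (1.0 + 2.0 * rho) - rho)
    return sum(estimates) / len(estimates)


if __name__ == "__main__":
    for g in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(g, round(estimate_gamma1(simulate(g)), 6))
```

This inversion only works because the generating model is known exactly, so it is a sanity check of identifiability across the full range of γ; noisy real-world data calls for a loss-based fit instead.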

The paper’s contributions are fourfold: (i) a mathematically rigorous formulation of responsibility allocation using CBFs; (ii) a scalable, differentiable‑optimization‑based learning algorithm; (iii) the introduction of symmetric responsibility to reduce model complexity; and (iv) empirical evidence that the approach yields accurate, interpretable responsibility maps on both synthetic and real datasets. Limitations include the reliance on a manually designed CBF (which encodes the safety notion) and the focus on a single safety constraint (collision avoidance). Future work is suggested to extend the framework to multiple, possibly conflicting constraints (e.g., speed limits, traffic rules), to higher‑order dynamics, and to integrate the learned responsibility allocations directly into online control policies for socially‑aware autonomous agents.
