AlpsBench: An LLM Personalization Benchmark for Real-Dialogue Memorization and Preference Alignment

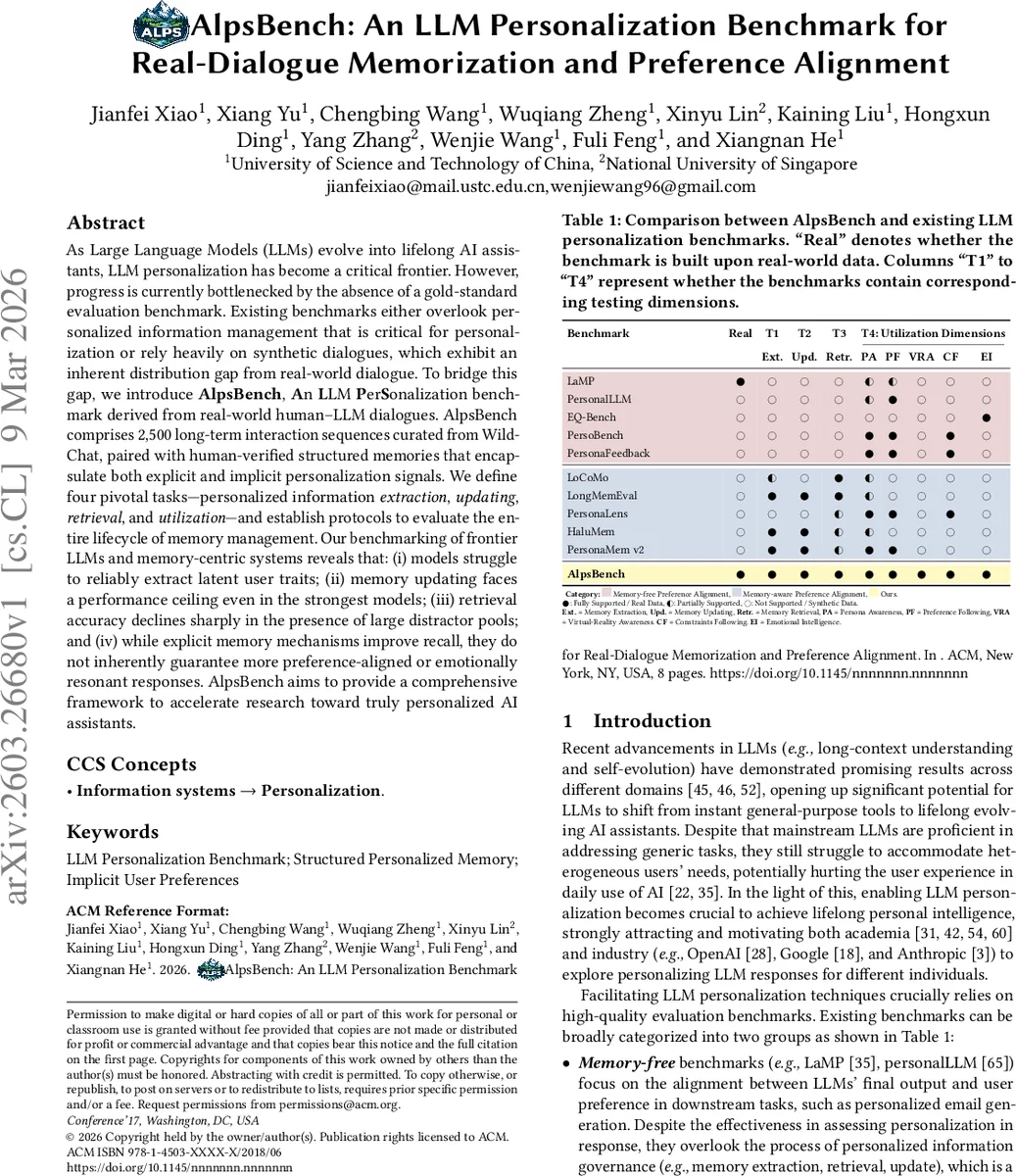

As Large Language Models (LLMs) evolve into lifelong AI assistants, LLM personalization has become a critical frontier. However, progress is currently bottlenecked by the absence of a gold-standard evaluation benchmark. Existing benchmarks either overlook personalized information management that is critical for personalization or rely heavily on synthetic dialogues, which exhibit an inherent distribution gap from real-world dialogue. To bridge this gap, we introduce AlpsBench, An LLM PerSonalization benchmark derived from real-world human-LLM dialogues. AlpsBench comprises 2,500 long-term interaction sequences curated from WildChat, paired with human-verified structured memories that encapsulate both explicit and implicit personalization signals. We define four pivotal tasks - personalized information extraction, updating, retrieval, and utilization - and establish protocols to evaluate the entire lifecycle of memory management. Our benchmarking of frontier LLMs and memory-centric systems reveals that: (i) models struggle to reliably extract latent user traits; (ii) memory updating faces a performance ceiling even in the strongest models; (iii) retrieval accuracy declines sharply in the presence of large distractor pools; and (iv) while explicit memory mechanisms improve recall, they do not inherently guarantee more preference-aligned or emotionally resonant responses. AlpsBench aims to provide a comprehensive framework.

💡 Research Summary

AlpsBench introduces a comprehensive benchmark for evaluating large language model (LLM) personalization using real‑world human‑LLM dialogues. The authors argue that existing benchmarks fall into two categories: memory‑free benchmarks that assess only the final output’s alignment with user preferences, and memory‑aware benchmarks that rely on synthetic dialogues. Both suffer from a lack of authentic conversational diversity and an inability to capture implicit user signals.

To address these gaps, the authors curate 2,500 long‑term interaction sequences from the WildChat dataset, each ranging from 6 to 249 turns. For every dialogue, a structured memory is created, containing five fields: a unique memory ID, memory type (explicit or implicit), a taxonomic label, the extracted value, and a confidence score. Human annotators verify all memories, ensuring high‑quality ground truth.

AlpsBench defines four core tasks that together cover the entire lifecycle of personalized information management:

-

Personalized Information Extraction – Models must convert raw dialogue histories into sets of structured memories. Evaluation combines exact‑match F1 scoring with a “LLM‑as‑a‑judge” semantic similarity metric to capture nuanced matches.

-

Personalized Information Update – Given an existing memory set and a new dialogue, models output an updated memory set and label each change as Retention, Addition, or Modification. Accuracy against human‑annotated actions measures the model’s ability to track evolving user preferences and resolve conflicts.

-

Personalized Information Retrieval – Models receive a user query and a candidate pool consisting of one positive memory and many randomly sampled negatives. The task is to retrieve the most relevant memory. Recall (and precision) are reported, highlighting how well models can sift through large distractor sets.

-

Personalized Information Utilization – Models generate a response to a user query using the dialogue history. Five dimensions are judged: Persona Awareness, Preference Following, Virtual‑Reality Awareness, Constraint Following, and Emotional Intelligence. Four dimensions receive binary scores from an LLM judge; Emotional Intelligence is scored on a 1‑5 scale using a reward‑model approach.

The benchmark construction follows a four‑step pipeline: (1) data collection from WildChat, (2) automated memory extraction, (3) human verification, and (4) task generation (queries, negative samples, etc.). Because the pipeline can be rerun as new dialogues become available, AlpsBench is positioned as a dynamic, extensible benchmark.

Experiments evaluate several state‑of‑the‑art LLMs (GPT‑4‑Turbo, Claude‑2, Llama‑2‑70B) and memory‑augmented systems (retrieval‑augmented generation). Key findings include:

- Extraction – Even the strongest models achieve modest F1 ≈ 0.45, indicating difficulty in reliably extracting latent user traits, especially implicit preferences.

- Update – Accuracy plateaus around 0.68, suggesting that models struggle to correctly retain, add, or modify memories as user preferences evolve.

- Retrieval – Recall drops sharply when the candidate pool grows (e.g., Recall ≈ 0.31 with 100 distractors), exposing a bottleneck in large‑scale memory indexing and relevance ranking. Retrieval‑augmented approaches provide only marginal gains.

- Utilization – Explicit memory mechanisms improve recall of factual persona details but do not automatically translate into higher Preference Following or Emotional Intelligence scores. Models with memory prompts perform similarly to memory‑free baselines on these dimensions.

Statistical analysis of the data shows that real‑world dialogues have higher lexical diversity (type‑token ratio 0.084 vs. 0.042 for synthetic data) and a richer set of implicit expressions, confirming the distributional gap that synthetic benchmarks suffer from.

In summary, AlpsBench offers the first benchmark that (a) is built on authentic human‑LLM conversations, (b) provides human‑verified structured memories, and (c) evaluates the full pipeline of personalized information handling. The results reveal substantial room for improvement in latent trait extraction, dynamic memory updating, scalable retrieval, and the integration of memory into preference‑aligned response generation. The authors release code, data, and a public leaderboard under an ODC‑BY license, inviting the community to continuously track progress and develop more robust personalized LLM systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment