FreeTacMan: Robot-free Visuo-Tactile Data Collection System for Contact-rich Manipulation

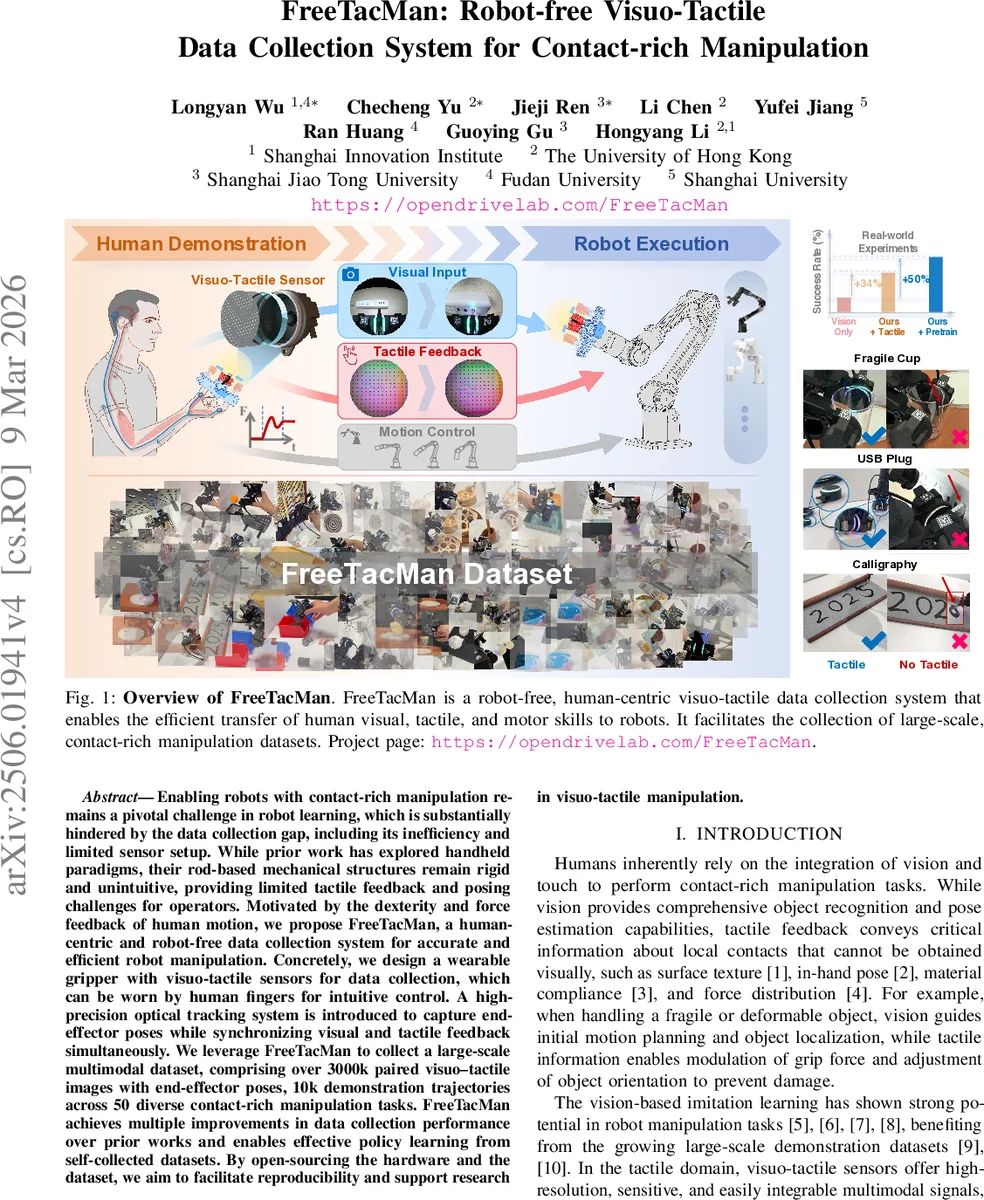

Enabling robots to perform contact-rich manipulation remains a pivotal challenge in robot learning, one substantially hindered by the data collection gap, including its inefficiency and limited sensor setups. While prior work has explored handheld paradigms, their rod-based mechanical structures remain rigid and unintuitive, providing limited tactile feedback and posing challenges for operators. Motivated by the dexterity and force feedback of human motion, we propose FreeTacMan, a human-centric and robot-free data collection system for accurate and efficient robot manipulation. Concretely, we design a wearable gripper with visuo-tactile sensors for data collection, which can be worn on human fingers for intuitive control. A high-precision optical tracking system is introduced to capture end-effector poses while simultaneously synchronizing visual and tactile feedback. We leverage FreeTacMan to collect a large-scale multimodal dataset comprising over 3 million paired visuo-tactile images with end-effector poses and 10k demonstration trajectories across 50 diverse contact-rich manipulation tasks. FreeTacMan improves data collection performance over prior work on multiple measures and enables effective policy learning from self-collected datasets. By open-sourcing the hardware and the dataset, we aim to facilitate reproducibility and support research in visuo-tactile manipulation.

💡 Research Summary

FreeTacMan introduces a novel, robot‑free, human‑centric platform for collecting large‑scale visuo‑tactile datasets tailored to contact‑rich manipulation. The system consists of a wearable gripper that mounts high‑resolution visuo‑tactile sensors directly at the operator’s fingertips. This eliminates intermediate mechanical linkages, so contact forces reach the sensors with near‑zero transmission loss. A wrist‑mounted fisheye RGB camera captures visual observations, while a high‑precision optical motion‑capture system (NOKOV, 240 Hz, sub‑millimeter accuracy) records the 6‑DoF pose of the gripper in real time. Together these produce time‑synchronized multimodal streams at 30 Hz: an RGB image, two tactile images, the gripper width, and the gripper pose.
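The summary does not state how the 240 Hz motion‑capture stream is aligned with the 30 Hz camera and tactile frames. One common approach, assumed here, is nearest‑timestamp matching with both streams stamped on a shared clock. The sketch below is illustrative only: the function `align_to_frames` and the `[x, y, z, qx, qy, qz, qw]` pose layout are hypothetical and are not taken from the FreeTacMan code.

```python
import numpy as np


def align_to_frames(frame_ts, mocap_ts, mocap_poses):
    """Return, for each frame timestamp, the index and pose of the nearest mocap sample.

    Hypothetical helper (nearest-timestamp matching is an assumption, not the paper's method).
    frame_ts:    (F,) sorted camera/tactile timestamps in seconds (30 Hz)
    mocap_ts:    (M,) sorted mocap timestamps in seconds (240 Hz), M >= 2
    mocap_poses: (M, 7) poses, assumed layout [x, y, z, qx, qy, qz, qw]
    """
    frame_ts = np.asarray(frame_ts, dtype=float)
    mocap_ts = np.asarray(mocap_ts, dtype=float)
    # First mocap sample at or after each frame time, clipped so both neighbors exist.
    right = np.clip(np.searchsorted(mocap_ts, frame_ts), 1, len(mocap_ts) - 1)
    left = right - 1
    # Keep whichever neighbor is closer in time.
    use_left = (frame_ts - mocap_ts[left]) <= (mocap_ts[right] - frame_ts)
    idx = np.where(use_left, left, right)
    return idx, np.asarray(mocap_poses)[idx]


# Toy usage: one second of data on a shared clock.
mocap_ts = np.arange(240) / 240.0
frame_ts = np.arange(30) / 30.0
poses = np.zeros((240, 7))
poses[:, 0] = mocap_ts  # encode time in x so the alignment is easy to check
poses[:, 6] = 1.0       # identity quaternion
idx, aligned = align_to_frames(frame_ts, mocap_ts, poses)
```

Because 240 Hz is an integer multiple of 30 Hz, each frame in this toy example lands exactly on every eighth mocap sample. With real, jittery clocks, picking the nearest neighbor (or interpolating between the two neighbors) absorbs the small offsets.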

FreeTacMan’s modular architecture separates the perception module, the universal gripper interface, and the camera scaffold. This allows rapid reconfiguration for different robot arms (e.g., a 6‑DoF Piper and a 7‑DoF Franka) or for pure data‑collection setups. The fingertip straps are designed to fit hand sizes from the 5th to the 95th percentile for ergonomic comfort.

Using this platform, the authors collected over 3 million paired visuo‑tactile images and more than 10,000 demonstration trajectories across 50 diverse tasks, ranging from fragile cup handling to USB plug insertion and calligraphy. Each trajectory is embodiment‑agnostic: given a robot’s URDF, inverse kinematics can map the recorded gripper pose directly to joint commands.
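Before an IK solver can use a recorded pose, the pose must be expressed in the target robot's base frame. The sketch below gives a rough illustration of that retargeting step. It assumes a known calibration transform `T_base_mocap` and an optional fixed gripper‑to‑TCP offset. Both names and the helper functions are hypothetical. The IK solve itself is left to whatever solver accompanies the robot's URDF.

```python
import numpy as np


def pose_to_matrix(position, rotation):
    """Build a 4x4 homogeneous transform from a 3-vector and a 3x3 rotation matrix."""
    T = np.eye(4)
    T[:3, :3] = rotation
    T[:3, 3] = position
    return T


def mocap_to_base(T_base_mocap, T_mocap_gripper, T_gripper_tcp=None):
    """Express a recorded gripper pose in a robot's base frame (hypothetical helper).

    T_base_mocap:    mocap world frame expressed in the robot base frame (assumed calibrated)
    T_mocap_gripper: recorded pose of the wearable gripper in the mocap frame
    T_gripper_tcp:   optional fixed offset from the gripper frame to the robot's TCP
    """
    T = T_base_mocap @ T_mocap_gripper
    if T_gripper_tcp is not None:
        T = T @ T_gripper_tcp
    return T


# Toy usage: mocap frame rotated 90 deg about z and shifted 0.5 m along the base x axis.
Rz90 = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
T_base_mocap = pose_to_matrix([0.5, 0.0, 0.0], Rz90)
T_mocap_gripper = pose_to_matrix([0.1, 0.0, 0.2], np.eye(3))
T_target = mocap_to_base(T_base_mocap, T_mocap_gripper)
# T_target[:3, 3] is [0.5, 0.1, 0.2]; this target pose would then go to the arm's IK solver.
```

Keeping the calibration and TCP offset as separate transforms means the same trajectory can be retargeted to a Piper or a Franka by swapping only those two matrices.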

The learning pipeline is two‑stage. First, a CLIP‑style contrastive pre‑training aligns tactile embeddings with visual embeddings. The visual encoder (ResNet) is frozen, while the tactile encoder (identical backbone) is fine‑tuned. Primary positives are the visual embeddings at the same timestep; secondary positives are the visual embeddings at the next timestep, encouraging temporal smoothness. A memory bank supplies negatives, and the loss combines both primary and secondary alignments.
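The summary does not give the exact loss. The sketch below is one plausible reading of it, under several assumptions. Each positive gets a standard InfoNCE term with cosine similarity and temperature `tau`. Memory‑bank entries serve as negatives. The secondary (next‑timestep) term is scaled by a weight `lam`. The names `info_nce` and `tactile_visual_loss` and the default values of `tau` and `lam` are hypothetical. NumPy is used only to show the arithmetic; a real training loop would be batched in an autodiff framework.

```python
import numpy as np


def _normalize(x):
    """L2-normalize along the last axis so dot products are cosine similarities."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def info_nce(query, positive, negatives, tau):
    """InfoNCE loss for one query, one positive, and K negatives (positive at index 0)."""
    q, p, n = _normalize(query), _normalize(positive), _normalize(negatives)
    logits = np.concatenate([[q @ p], n @ q]) / tau
    logits -= logits.max()  # numerical stability before exponentiating
    return -(logits[0] - np.log(np.exp(logits).sum()))


def tactile_visual_loss(z_tac, v_now, v_next, memory_bank, tau=0.07, lam=0.5):
    """Primary (same-timestep) plus weighted secondary (next-timestep) alignment.

    z_tac:       (D,) tactile embedding at time t (trainable encoder)
    v_now:       (D,) frozen visual embedding at time t (primary positive)
    v_next:      (D,) frozen visual embedding at time t+1 (secondary positive)
    memory_bank: (K, D) stored visual embeddings used as negatives
    tau, lam:    temperature and secondary-term weight (assumed values)
    """
    primary = info_nce(z_tac, v_now, memory_bank, tau)
    secondary = info_nce(z_tac, v_next, memory_bank, tau)
    return primary + lam * secondary


# Toy usage: an aligned tactile embedding should score a lower loss than an opposed one.
rng = np.random.default_rng(0)
D, K = 8, 16
v = rng.normal(size=D)
bank = rng.normal(size=(K, D))
loss_aligned = tactile_visual_loss(v, v, v, bank)
loss_misaligned = tactile_visual_loss(-v, v, v, bank)
```

Only the tactile embedding would receive gradients in this setup, because the visual encoder is frozen. The visual embeddings and the memory bank therefore act as fixed targets.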

Second, embeddings from the pretrained tactile encoder and the frozen visual encoder are concatenated and fed into an Action Chunking Transformer (ACT), which predicts 7‑DoF joint positions for downstream manipulation. Experiments show that policies trained with both modalities achieve up to 50% higher success rates than vision‑only baselines, especially on tasks requiring delicate force modulation.
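The sketch below illustrates two pieces of this stage under stated assumptions. The first is concatenating one visual embedding with the two tactile embeddings into a single observation feature. The second is the temporal ensembling of overlapping action chunks described in the original ACT paper, which uses weights `exp(-m * i)` with `i = 0` for the oldest prediction. The summary does not say whether FreeTacMan enables temporal ensembling. The names `fuse_embeddings` and `temporal_ensemble`, the decay `m`, and the toy dimensions are hypothetical.

```python
import numpy as np


def fuse_embeddings(visual_emb, tactile_embs):
    """Concatenate a visual embedding with one or more tactile embeddings."""
    return np.concatenate([visual_emb, *tactile_embs], axis=-1)


def temporal_ensemble(chunk_history, t, m=0.01):
    """Average every chunk's prediction for step t, weighting older chunks more.

    chunk_history: dict {start_step: (k, action_dim) array} of predicted action chunks
    t:             current control step
    m:             decay rate; weight exp(-m * i) with i = 0 for the oldest chunk
    """
    preds = [chunk[t - s] for s, chunk in sorted(chunk_history.items())
             if s <= t < s + len(chunk)]
    if not preds:
        raise ValueError(f"no chunk covers step {t}")
    preds = np.stack(preds)
    w = np.exp(-m * np.arange(len(preds)))
    w /= w.sum()
    return (w[:, None] * preds).sum(axis=0)


# Toy usage: 4-dim embeddings and 3-step chunks of 7-DoF actions.
obs = fuse_embeddings(np.ones(4), [np.zeros(4), np.zeros(4)])
history = {0: np.zeros((3, 7)), 1: np.ones((3, 7))}
a_t1 = temporal_ensemble(history, t=1, m=0.0)  # both chunks cover step 1, equal weights
a_t3 = temporal_ensemble(history, t=3)         # only the chunk started at step 1 covers step 3
```

Ensembling smooths the executed trajectory when consecutive chunks disagree. Executing each chunk open‑loop is the simpler alternative.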

Compared with prior handheld data‑collection methods that rely on SLAM/IMU for pose estimation and on multi‑link mechanical transmissions, FreeTacMan reduces the tactile transmission path to a single “finger‑to‑sensor” link and provides real‑time tactile feedback to the operator. It also avoids the drift that SLAM/IMU pose estimates can accumulate. The authors report a 34% improvement in success rate when tactile data is added to vision, and a 50% improvement when the tactile representations are pre‑trained.

Limitations include dependence on a laboratory‑grade motion‑capture system, which may be costly for large‑scale deployment, and potential operator fatigue from wearing the gripper for extended periods. The tactile sensor (based on the McTac design) may require further robustness testing for industrial‑grade loads.

Overall, FreeTacMan delivers a high‑fidelity, scalable, and open‑source solution for visuo‑tactile data collection, bridging a critical gap in robot learning research and setting a new benchmark for future contact‑rich manipulation studies.
