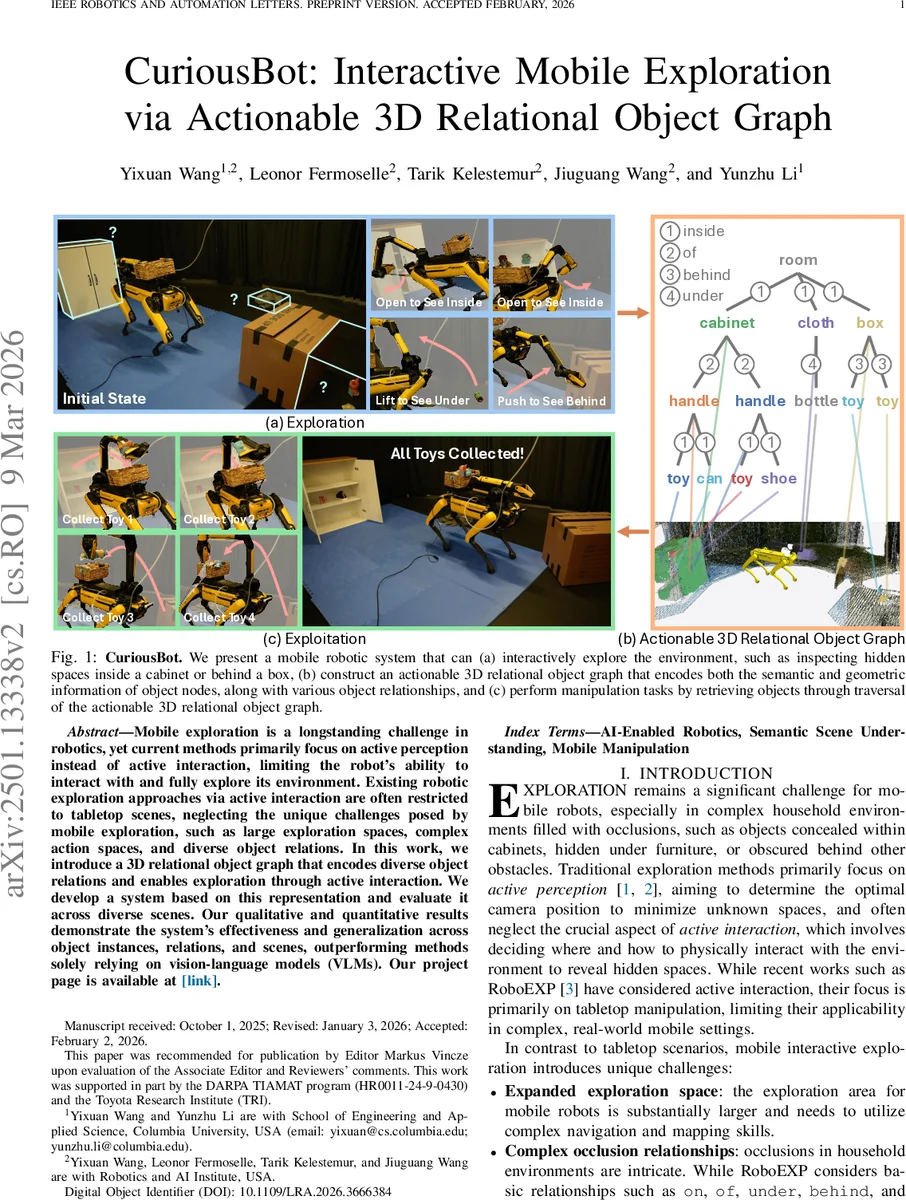

CuriousBot: Interactive Mobile Exploration via Actionable 3D Relational Object Graph

Mobile exploration is a longstanding challenge in robotics, yet current methods primarily focus on active perception instead of active interaction, limiting the robot’s ability to interact with and fully explore its environment. Existing robotic exploration approaches via active interaction are often restricted to tabletop scenes, neglecting the unique challenges posed by mobile exploration, such as large exploration spaces, complex action spaces, and diverse object relations. In this work, we introduce a 3D relational object graph that encodes diverse object relations and enables exploration through active interaction. We develop a system based on this representation and evaluate it across diverse scenes. Our qualitative and quantitative results demonstrate the system’s effectiveness and generalization across object instances, relations, and scenes, outperforming methods solely relying on vision-language models (VLMs).

💡 Research Summary

CuriousBot tackles the long‑standing problem of mobile robotic exploration by integrating active interaction with a structured 3D relational object graph. The system consists of four tightly coupled modules. (1) SLAM uses RTAB‑Map to fuse RGB‑D streams and odometry into a real‑time camera pose estimate. (2) The Graph Constructor detects objects with an open‑vocabulary detector and Segment‑Anything, segments them into 3D point clouds, and associates detections across frames using label consistency and IoU‑based matching; each new detection becomes a node that stores a semantic label, point cloud, normals, and an “obstruction” flag. (3) The Relation Builder adds directed edges representing five common household occlusion relations (on, under, behind, inside, of) through two complementary mechanisms. Interaction‑driven edges are inferred from the most recent manipulation skill (e.g., after an “open” action, a newly visible object is linked with an “inside” edge). Geometry‑driven edges are derived from simple 3D bounding‑box tests (e.g., spatial overlap yields an “on” edge). (4) The voxel map discretizes the environment into cells labeled unexplored, free, unknown, or outside, providing occlusion cues that inform both graph attributes and planning decisions.
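The cross‑frame association step can be illustrated with a minimal sketch. It assumes axis‑aligned 3D bounding boxes and a greedy best‑match rule; the `Node` class, the `associate` function, and the `iou_threshold` value of 0.3 are hypothetical names and parameters chosen for illustration, not the authors' implementation.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Axis-aligned box: (xmin, ymin, zmin, xmax, ymax, zmax). Assumed representation.
Box = Tuple[float, float, float, float, float, float]


@dataclass
class Node:
    node_id: int
    label: str
    box: Box
    obstructed: bool = False  # the "obstruction" flag stored on each node


def box_iou_3d(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned 3D boxes."""
    inter = 1.0
    for i in range(3):
        lo = max(a[i], b[i])
        hi = min(a[i + 3], b[i + 3])
        if hi <= lo:  # no overlap along this axis
            return 0.0
        inter *= hi - lo
    vol_a = (a[3] - a[0]) * (a[4] - a[1]) * (a[5] - a[2])
    vol_b = (b[3] - b[0]) * (b[4] - b[1]) * (b[5] - b[2])
    return inter / (vol_a + vol_b - inter)


def associate(nodes: List[Node], label: str, box: Box,
              iou_threshold: float = 0.3) -> Node:
    """Match a detection to an existing node with the same label and the
    highest IoU above the threshold; otherwise create a new node."""
    best: Optional[Node] = None
    best_iou = iou_threshold
    for node in nodes:
        if node.label != label:  # label consistency check
            continue
        iou = box_iou_3d(node.box, box)
        if iou >= best_iou:
            best, best_iou = node, iou
    if best is None:
        best = Node(node_id=len(nodes), label=label, box=box)
        nodes.append(best)
    else:
        best.box = box  # refresh geometry with the latest observation
    return best
```

Calling `associate` once per detection in each frame keeps one node per physical object as long as the label is stable and consecutive boxes overlap sufficiently. A real system would likely merge point clouds rather than overwrite the box.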

The constructed graph is serialized into plain text via a depth‑first traversal that records node depth, ID, name, and obstruction status. This textual representation is fed to GPT‑4o (or any plug‑and‑play LLM) along with carefully crafted prompts. The LLM generates a high‑level plan as a sequence of primitive manipulation skills (open, push, lift, flip, collect, sit, etc.) tailored to the current graph structure. Low‑level skill controllers then execute these primitives using the robot’s arm and base controllers.
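A depth‑first serialization of this kind might look like the sketch below. The summary only states that depth, ID, name, and obstruction status are recorded, so the exact line format, the dictionary‑based graph layout, and the `serialize_graph` function name are assumptions made for illustration.

```python
from typing import Dict, List, Set


def serialize_graph(names: Dict[int, str],
                    obstructed: Dict[int, bool],
                    children: Dict[int, List[int]],
                    roots: List[int]) -> str:
    """Serialize an object graph to indented plain text via depth-first traversal.

    names      -- node ID to object name
    obstructed -- node ID to obstruction flag (missing IDs count as False)
    children   -- node ID to the IDs reached by its outgoing edges
    roots      -- IDs to start the traversal from
    """
    lines: List[str] = []
    visited: Set[int] = set()

    def visit(node_id: int, depth: int) -> None:
        if node_id in visited:  # guard against cycles and repeated nodes
            return
        visited.add(node_id)
        flag = obstructed.get(node_id, False)
        lines.append(
            f"{'  ' * depth}depth={depth} id={node_id} "
            f"name={names[node_id]} obstructed={flag}"
        )
        for child_id in children.get(node_id, []):
            visit(child_id, depth + 1)

    for root in roots:
        visit(root, 0)
    return "\n".join(lines)


if __name__ == "__main__":
    text = serialize_graph(
        names={0: "cabinet", 1: "cup", 2: "chair"},
        obstructed={0: True},
        children={0: [1]},
        roots=[0, 2],
    )
    print(text)
```

The resulting text can be placed directly into an LLM prompt. Relation labels on edges are omitted here for brevity and could be added to each child line.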

Evaluation was performed on five distinct exploration scenarios (opening cabinets, pushing chairs to reveal hidden spaces, flipping boxes, sitting to access low shelves, and lifting cloths to uncover objects underneath), each repeated ten times with varied initial conditions. CuriousBot was benchmarked against a baseline that directly uses GPT‑4V to guide exploration without an explicit graph. Results show that CuriousBot reduces unknown space 27% faster on average and achieves a 15% lower failure rate. Notably, in complex occlusion configurations (e.g., objects inside closed cabinets), the graph‑based approach discovers items that the baseline misses entirely. An error analysis identified three primary failure modes: (i) low‑confidence object detections that lead to missed nodes, (ii) inaccurate voxel boundary definitions that cause unknown space to be mislabeled, and (iii) occasional LLM‑generated plans that include infeasible skill sequences. The authors propose future work on more robust detection pipelines, adaptive voxel mapping, and closed‑loop feedback between the planner and the graph to mitigate these issues.

In summary, CuriousBot demonstrates that a 3D relational object graph, enriched with actionable occlusion relations and coupled with large‑language‑model planning, enables mobile robots to explore and manipulate real‑world environments far beyond passive perception. This framework opens pathways for service robots in homes, search‑and‑rescue platforms, and industrial automation where discovering hidden spaces and interacting with diverse objects are essential.
