Rethinking SNN Online Training and Deployment: Gradient-Coherent Learning via Hybrid-Driven LIF Model

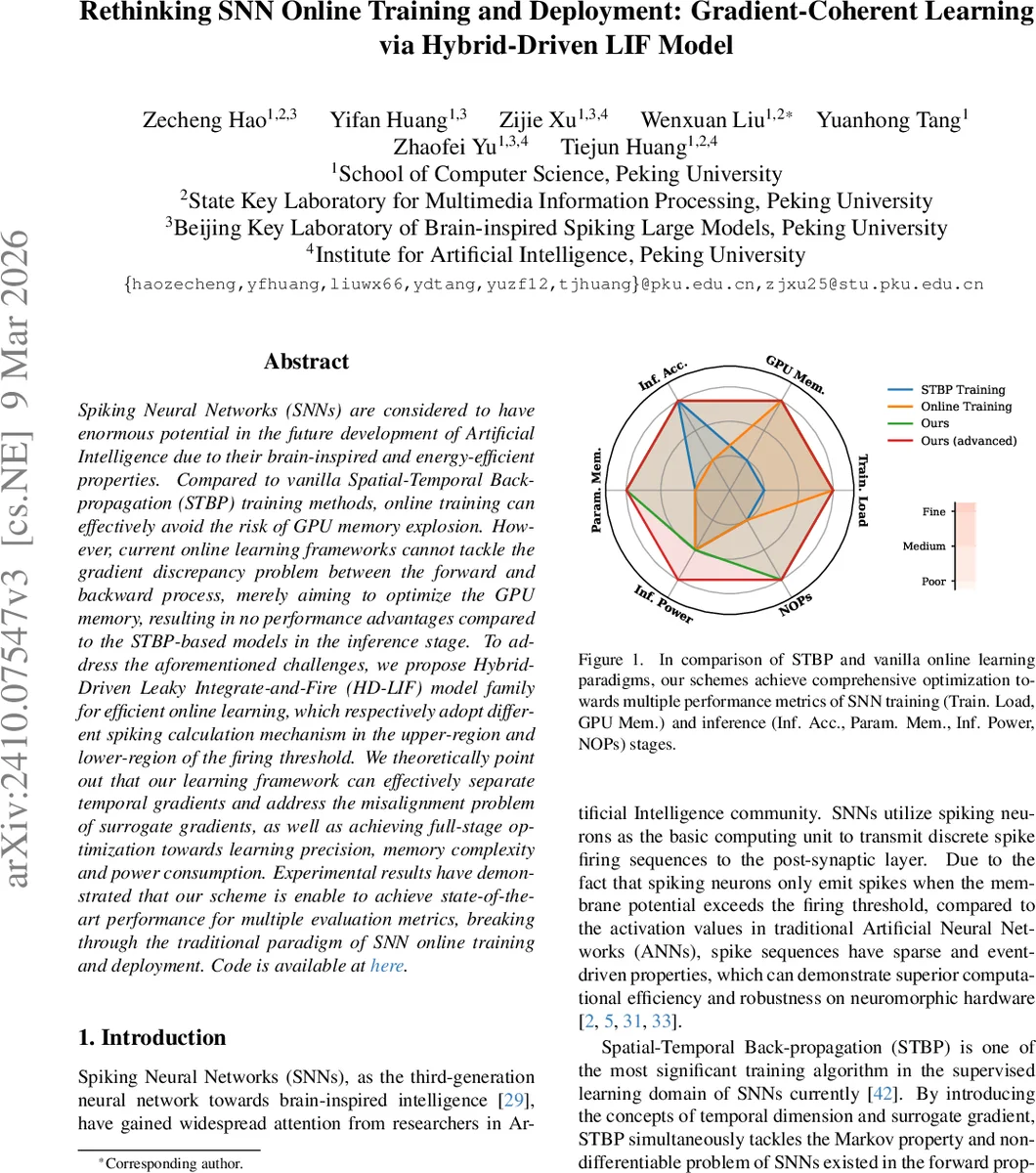

Spiking Neural Networks (SNNs) are considered to have enormous potential in the future development of Artificial Intelligence due to their brain-inspired and energy-efficient properties. Compared to vanilla Spatial-Temporal Back-propagation (STBP) training methods, online training can effectively avoid the risk of GPU memory explosion. However, current online learning frameworks cannot tackle the gradient discrepancy problem between the forward and backward process, merely aiming to optimize the GPU memory, resulting in no performance advantages compared to the STBP-based models in the inference stage. To address the aforementioned challenges, we propose Hybrid-Driven Leaky Integrate-and-Fire (HD-LIF) model family for efficient online learning, which respectively adopt different spiking calculation mechanism in the upper-region and lower-region of the firing threshold. We theoretically point out that our learning framework can effectively separate temporal gradients and address the misalignment problem of surrogate gradients, as well as achieving full-stage optimization towards learning precision, memory complexity and power consumption. Experimental results have demonstrated that our scheme is enable to achieve state-of-the-art performance for multiple evaluation metrics, breaking through the traditional paradigm of SNN online training and deployment. Code is available at \href{https://github.com/hzc1208/HD_LIF}{here}.

💡 Research Summary

Spiking Neural Networks (SNNs) promise brain‑inspired, event‑driven computation with low power consumption, yet their training remains a bottleneck. The dominant Spatial‑Temporal Back‑Propagation (STBP) algorithm unfolds the network across both spatial and temporal dimensions, requiring the storage of intermediate states for every time step. Consequently, GPU memory grows linearly with the number of simulation steps, limiting scalability to deep architectures and long sequences. Recent “online” learning approaches attempt to detach temporal dependencies, updating weights at each step so that memory usage stays constant. However, these methods suffer from a severe gradient discrepancy: the surrogate gradient used for the non‑differentiable spike function depends on the membrane potential, which varies irregularly over time. When temporal gradients are ignored, the forward and backward passes become misaligned, leading to degraded inference accuracy. Moreover, existing online schemes provide no advantage during inference compared with STBP‑trained models.

The paper introduces a novel family of Hybrid‑Driven Leaky Integrate‑and‑Fire (HD‑LIF) neurons designed explicitly for online training. The key idea is to treat the membrane potential region below the firing threshold θ exactly like a conventional LIF neuron (leak, integrate, fire), while the region above θ employs a “Precise‑Positioning Reset” (P2‑Reset). After a spike, the membrane potential is forced back to θ, and the excess voltage is emitted as an additional spike scaled by a quantization function. This bifurcated mechanism yields a surrogate derivative ∂s∗/∂m that is constant (0 or 1) on each side of the threshold, eliminating the time‑varying component that caused gradient misalignment in standard LIF.

The authors formalize “Separable Backward Gradient” (Definition 4.1): if the contribution weight ϵ

Comments & Academic Discussion

Loading comments...

Leave a Comment