CONSTANT: Towards High-Quality One-Shot Handwriting Generation with Patch Contrastive Enhancement and Style-Aware Quantization

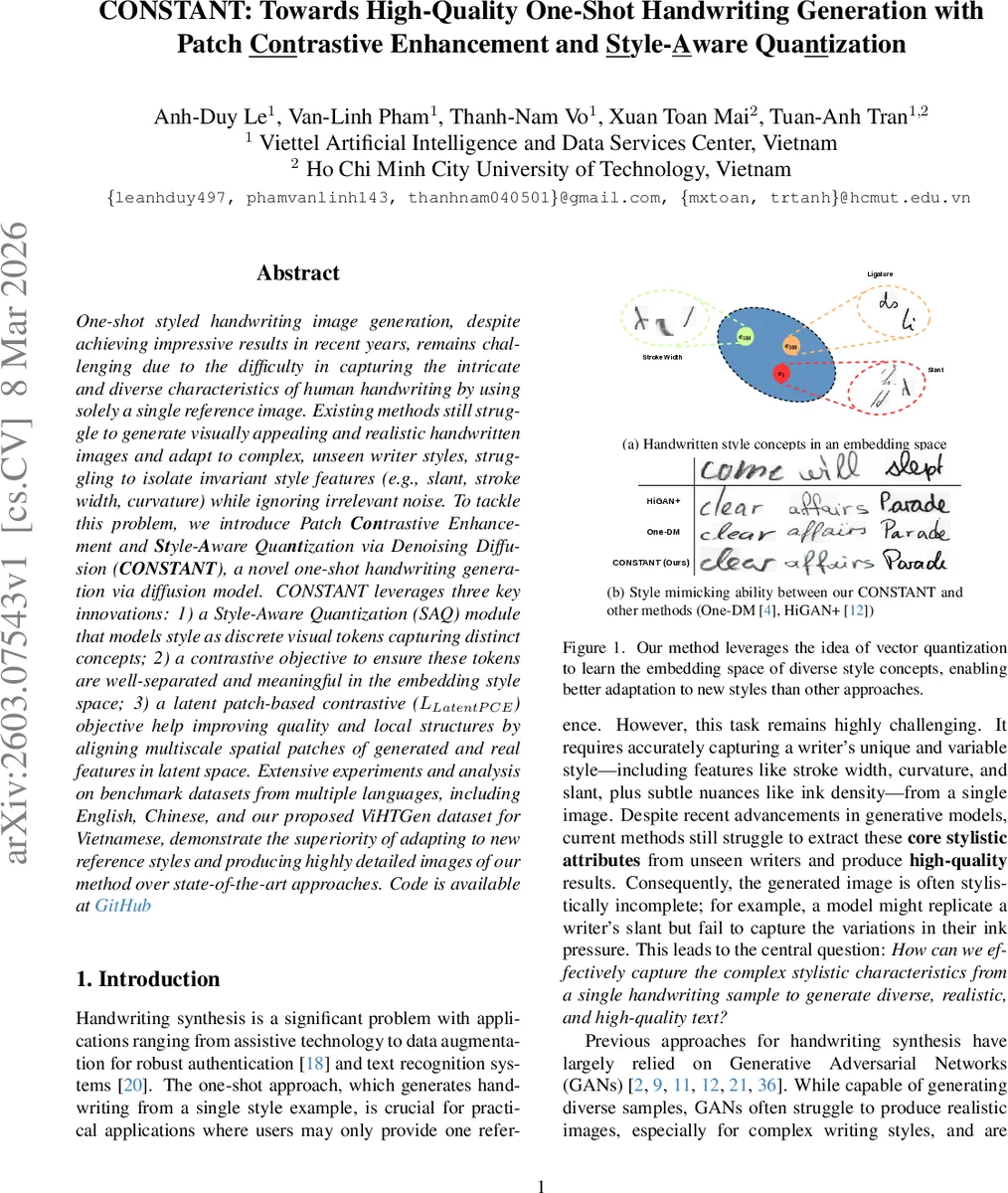

One-shot styled handwriting image generation, despite achieving impressive results in recent years, remains challenging due to the difficulty in capturing the intricate and diverse characteristics of human handwriting by using solely a single reference image. Existing methods still struggle to generate visually appealing and realistic handwritten images and adapt to complex, unseen writer styles, struggling to isolate invariant style features (e.g., slant, stroke width, curvature) while ignoring irrelevant noise. To tackle this problem, we introduce Patch Contrastive Enhancement and Style-Aware Quantization via Denoising Diffusion (CONSTANT), a novel one-shot handwriting generation via diffusion model. CONSTANT leverages three key innovations: 1) a Style-Aware Quantization (SAQ) module that models style as discrete visual tokens capturing distinct concepts; 2) a contrastive objective to ensure these tokens are well-separated and meaningful in the embedding style space; 3) a latent patch-based contrastive (LLatentPCE) objective help improving quality and local structures by aligning multiscale spatial patches of generated and real features in latent space. Extensive experiments and analysis on benchmark datasets from multiple languages, including English, Chinese, and our proposed ViHTGen dataset for Vietnamese, demonstrate the superiority of adapting to new reference styles and producing highly detailed images of our method over state-of-the-art approaches. Code is available at GitHub

💡 Research Summary

The paper introduces CONSTANT, a novel one‑shot handwriting generation framework that leverages diffusion models together with two contrastive mechanisms and a vector‑quantization‑based style encoder. The core idea is to represent a writer’s style as a set of discrete visual tokens rather than a single continuous vector. A pretrained Inception‑V3 backbone extracts multi‑scale features from the single reference image, which are then quantized against a learnable codebook (size K, dimension D) to produce style tokens. These tokens capture fundamental style concepts such as stroke width, slant, and ligature patterns. To preserve fine‑grained information, the quantized features are concatenated with the original continuous features and processed by an attention‑pooling module, yielding a global style vector (used for contrastive learning) and a refined sequence of style tokens that serve as conditioning for the diffusion model.

Two contrastive objectives are introduced. The Style Contrastive Enhancement (L_SCE) loss treats the global style vectors of reference‑target pairs from the same writer as positives and those from different writers as negatives, applying an InfoNCE‑style cosine‑similarity loss with temperature scaling. This encourages the model to cluster same‑writer styles while separating different writers in the embedding space. The Latent Patch Contrastive Enhancement (L_LatentPCE) loss operates on latent representations inside the Latent Diffusion Model (LDM). Multi‑scale patches are sampled from both the generated latent and the ground‑truth latent; a contrastive loss maximizes mutual information between corresponding patches while pushing apart mismatched patches. This auxiliary loss sharpens local details such as curvature, ink density, and stroke connections, addressing the blurriness often observed with pure denoising objectives.

The overall training objective combines the standard diffusion denoising loss with the three auxiliary terms:

L = L_denoising + α·(L_LatentPCE + L_SCE + L_SAQ),

where α is set to 0.1. The model is trained end‑to‑end on latent diffusion, which is memory‑efficient and capable of high‑resolution synthesis. Textual content is encoded by a three‑layer Transformer, producing per‑character embeddings that are fused with the style tokens via cross‑attention.

Experiments are conducted on three multilingual benchmarks: English IAM, Chinese CASIA‑HWDB, and a newly collected Vietnamese dataset (ViHTGen). Evaluation metrics include Fréchet Inception Distance (FID), Inception Score (IS), a style classification accuracy (measuring how well generated samples retain the reference style), and OCR‑based word error rate (WER) to assess readability. CONSTANT outperforms state‑of‑the‑art one‑shot methods such as One‑DM, HiGAN+, and Diff‑Font across all metrics. Notably, the combination of SAQ and L_SCE improves style classification by over 12 %, while adding L_LatentPCE reduces FID by ~8 % and yields a 15 % increase in human preference for fine detail. Ablation studies confirm that each component contributes meaningfully: removing SAQ degrades style separation, omitting L_SCE harms writer discrimination, and dropping L_LatentPCE leads to noticeably blurrier strokes.

The authors discuss limitations, including the sensitivity of codebook size to memory and over‑fitting, and the computational cost of pre‑training a multilingual codebook. They also note that the current setup assumes relatively short reference samples; extending to long paragraphs may require additional mechanisms for maintaining consistent style over longer sequences.

Future directions suggested are dynamic, meta‑learning‑driven codebook expansion, cross‑language style transfer via shared token spaces, and conditional style manipulation (e.g., applying a learned style to arbitrary text).

In summary, CONSTANT demonstrates that discrete style tokenization combined with contrastive learning at both the global style level and the latent‑patch level can dramatically improve one‑shot handwriting synthesis, delivering high‑fidelity, stylistically faithful, and readable handwritten text across multiple languages.

Comments & Academic Discussion

Loading comments...

Leave a Comment