From Static Inference to Dynamic Interaction: A Survey of Streaming Large Language Models

Standard Large Language Models (LLMs) are predominantly designed for static inference with pre-defined inputs, which limits their applicability in dynamic, real-time scenarios. To address this gap, the streaming LLM paradigm has emerged. However, existing definitions of streaming LLMs remain fragmented, conflating streaming generation, streaming inputs, and interactive streaming architectures, while a systematic taxonomy is still lacking. This paper provides a comprehensive overview and analysis of streaming LLMs. First, we establish a unified definition of streaming LLMs based on data flow and dynamic interaction to clarify existing ambiguities. Building on this definition, we propose a systematic taxonomy of current streaming LLMs and conduct an in-depth discussion on their underlying methodologies. Furthermore, we explore the applications of streaming LLMs in real-world scenarios and outline promising research directions to support ongoing advances in streaming intelligence. We maintain a continuously updated repository of relevant papers at https://github.com/EIT-NLP/Awesome-Streaming-LLMs.

💡 Research Summary

The paper addresses a fundamental limitation of current large language models (LLMs): they are built for static, batch‑style inference where the entire input is presented before any output is generated. This “read‑once” assumption makes standard LLMs unsuitable for real‑time scenarios such as live speech transcription, video understanding, sensor streams, or interactive agents that must process multiple concurrent modalities and decide when to respond. To fill this gap, the authors introduce the concept of “streaming LLMs” and provide a unified, mathematically‑grounded definition based on data flow and dynamic interaction. They formalize the conditional probability P(Y|X) with a decision function ϕ(t) that determines how much of the input prefix is visible at generation step t.

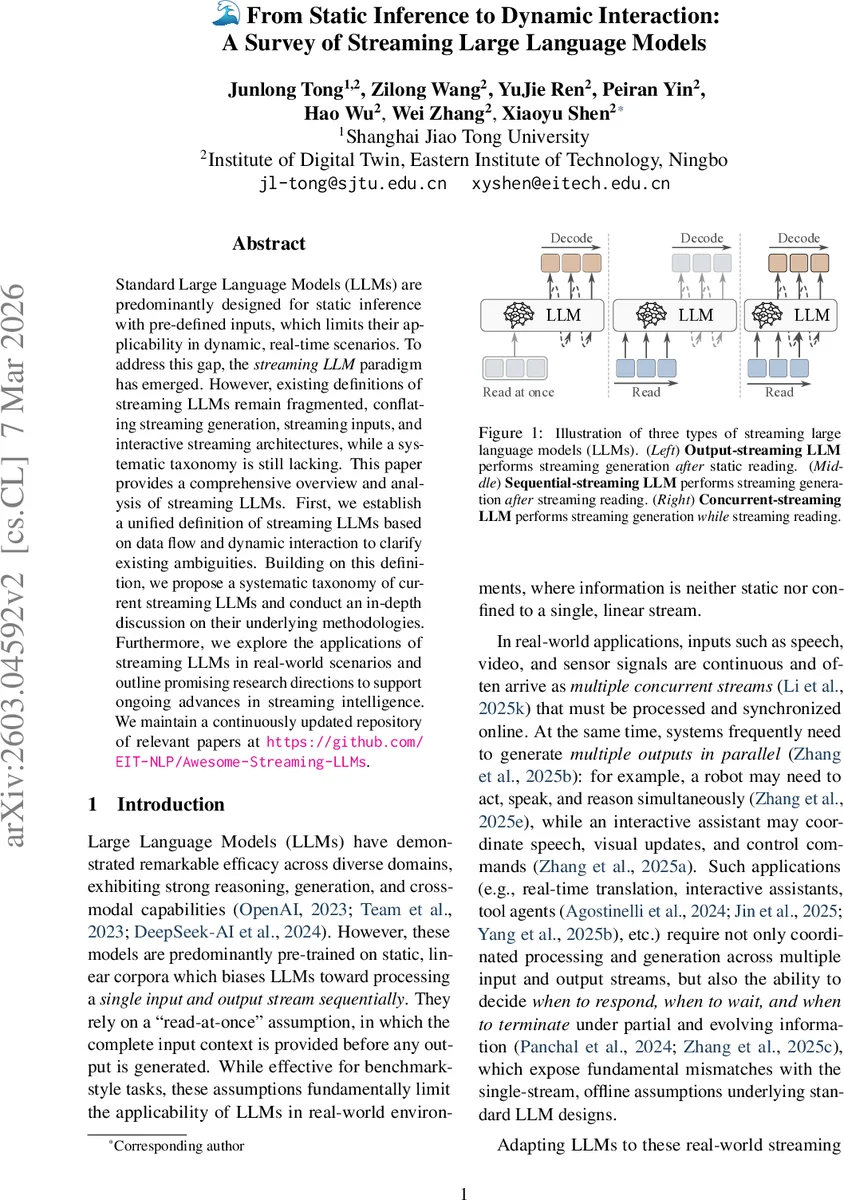

From this definition three distinct streaming paradigms emerge:

-

Output‑Streaming LLMs – The full input is pre‑filled (ϕ(t)=M) before generation begins, but the model emits tokens incrementally. The focus here is on streaming generation mechanisms (token‑wise autoregressive, block‑wise semi‑autoregressive, block‑diffusion, multi‑scale refinement, global diffusion) and on decoding efficiency (speculative decoding, layer‑depth reduction, memory‑efficient caches). These techniques reduce latency while preserving the high‑quality output of standard LLMs.

-

Sequential‑Streaming LLMs – Inputs arrive continuously, yet generation still waits for the complete input. The challenge shifts to incremental encoding and context management. The survey categorizes atomic token encodings (subword regularization, SentencePiece), pre‑discretized units (ViT, CLIP), fixed‑interval partitioning (LLM‑SimulMT, Whisper‑Streaming), and semantic‑driven partitioning (DiSeg, CTC). For context retention, methods such as VideoScan, Flash‑vstream, KV‑cache eviction (StreamingLLM, StreamKV), and representation compression (KIVI, ZipCache) are discussed.

-

Concurrent‑Streaming LLMs – The most demanding scenario where ϕ(t) grows monotonically and the model must simultaneously perceive new inputs and produce outputs. This paradigm requires (a) architectural adaptations (re‑encoding, concatenated or interleaved streaming models, group streaming) and (b) interaction policies that decide when to speak, wait, or terminate. Policies range from rule‑based (STACL), adaptive thresholds (SimulS2SLLM), SFT‑based auxiliary decisions (DiG‑SST, DrFrattn), in‑context prediction (VideoLLM‑online, TransLLaMA), to reinforcement‑learning approaches (MMDuet2, Seed LiveInterpret 2.0).

The taxonomy is presented as a roadmap: output‑streaming tackles low‑latency generation; sequential‑streaming adds incremental encoding and memory efficiency; concurrent‑streaming integrates both and introduces full‑duplex interaction and policy learning. By disentangling these levels, the authors clarify which research challenges are shared, which are incremental, and which are unique to each paradigm.

Beyond the technical taxonomy, the paper surveys emerging applications—real‑time translation, interactive assistants, robotic control, multimodal video understanding—and highlights open research directions: (1) creation of large‑scale pre‑training corpora that reflect streaming, partial‑input supervision, and fine‑grained temporal alignment; (2) development of decoding and encoding algorithms that balance latency and quality; (3) synchronization of multimodal streams and robust policy learning; (4) safety, alignment, and ethical considerations for continuously interactive systems.

To support the community, the authors maintain a continuously updated GitHub repository (https://github.com/EIT‑NLP/Awesome‑Streaming‑LLMs) that curates all relevant papers, datasets, and codebases. In summary, the survey offers the first systematic definition, taxonomy, and comprehensive technical analysis of streaming LLMs, laying a clear foundation for future research aimed at truly interactive, real‑time language intelligence.

Comments & Academic Discussion

Loading comments...

Leave a Comment