TerraLingua: Emergence and Analysis of Open-endedness in LLM Ecologies

As autonomous agents increasingly operate in real-world digital ecosystems, understanding how they coordinate, form institutions, and accumulate shared culture becomes both a scientific and a practical priority. This paper introduces TerraLingua, a per…

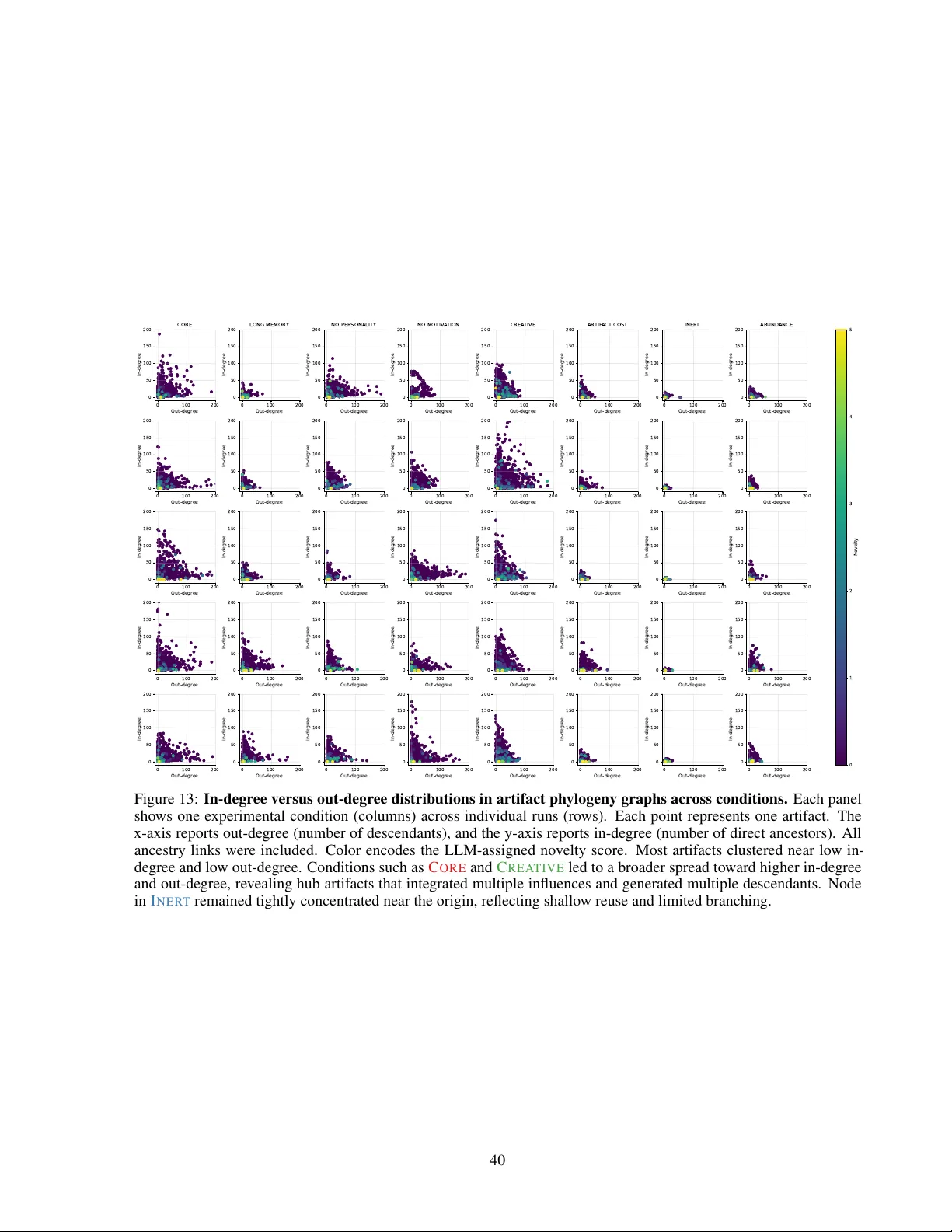

Authors: Giuseppe Paolo, Jamieson Warner, Hormoz Shahrzad