MOSIV: Multi-Object System Identification from Videos

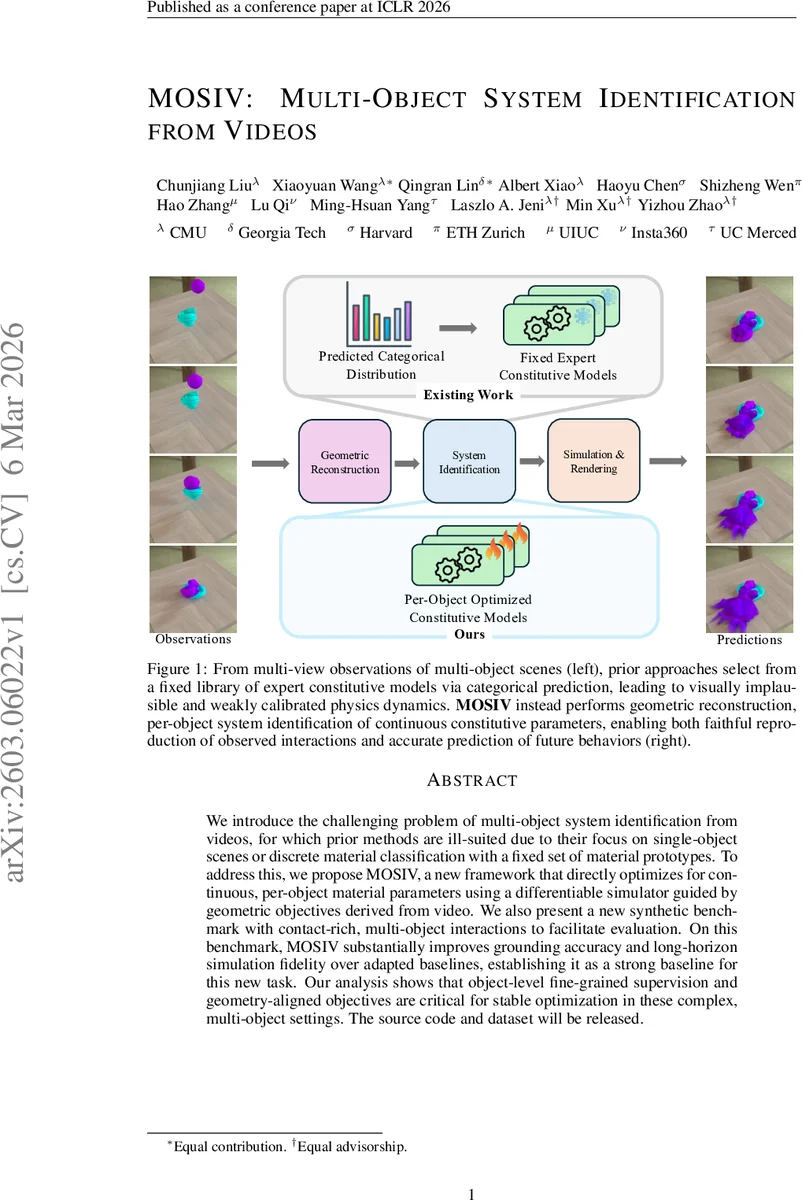

We introduce the challenging problem of multi-object system identification from videos, for which prior methods are ill-suited due to their focus on single-object scenes or discrete material classification with a fixed set of material prototypes. To address this, we propose MOSIV, a new framework that directly optimizes for continuous, per-object material parameters using a differentiable simulator guided by geometric objectives derived from video. We also present a new synthetic benchmark with contact-rich, multi-object interactions to facilitate evaluation. On this benchmark, MOSIV substantially improves grounding accuracy and long-horizon simulation fidelity over adapted baselines, establishing it as a strong baseline for this new task. Our analysis shows that object-level fine-grained supervision and geometry-aligned objectives are critical for stable optimization in these complex, multi-object settings. The source code and dataset will be released.

💡 Research Summary

MOSIV (Multi‑Object System Identification from Videos) tackles the previously undefined problem of jointly reconstructing 4‑D geometry and identifying continuous material parameters for multiple interacting objects using only multi‑view video. Existing video‑based physics inference methods focus on single objects or discrete material classification from a fixed library, which fails in cluttered, contact‑rich scenes. MOSIV introduces a three‑stage pipeline that overcomes these limitations.

In the first stage, the authors employ object‑aware dynamic Gaussian splatting (4DGS). A set of canonical 3‑D Gaussian kernels is deformed over time by low‑rank temporal bases and spatial gates, while instance masks and optional material masks partition the kernels per object and per material. This yields a high‑fidelity, temporally coherent visual representation that already encodes object identity and material labeling.

The second stage lifts the dynamic Gaussians into a particle‑based continuum suitable for a differentiable Material Point Method (MPM) simulator. For each object, particles are sampled inside the bounding box of the Gaussian points, filtered by depth consistency across views, and refined through a multi‑resolution density field that preserves object shape while enforcing disjoint supports between objects. Each particle carries position, velocity, elastic deformation gradient, and a per‑object material label.

The third stage runs a fully differentiable MPM forward simulation. The MPM models elastic, plastic, and viscous responses using a per‑object parameter vector θₖ (including Young’s modulus, Poisson ratio, and a friction coefficient). Inter‑material friction is modeled symmetrically as µₘ,ₘ′ = (µₘ + µₘ′)/2, reducing the number of free parameters while still capturing realistic contact behavior. After each simulated timestep, the method renders per‑object surfaces and silhouettes from the simulated particles. Geometry‑aligned losses are computed: a symmetric Chamfer distance between simulated and reconstructed surfaces, and an L1 loss between simulated and observed silhouettes for every camera view. The total loss aggregates these terms over all objects, frames, and cameras, and gradients flow back through the MPM to update the material parameters directly.

To evaluate MOSIV, the authors release a synthetic benchmark comprising diverse material combinations (varying stiffness, plasticity, and friction), complex multi‑object contacts, and multi‑view video sequences. They adapt two strong baselines: OMNIPHYS (which selects a material from a discrete library) and CoupNeRF (which uses an implicit NeRF representation with differentiable MPM). Under identical visual supervision, MOSIV achieves markedly lower parameter estimation error (over 35 % reduction in L2 error) and superior long‑horizon simulation fidelity: position drift remains below 2 % of object size after 30 simulated frames, whereas baselines exhibit rapid divergence. Qualitative results show simulated trajectories that are visually indistinguishable from the ground‑truth video, confirming the creation of accurate digital twins.

Ablation studies demonstrate that (1) per‑object parameterization is essential—sharing a single parameter set across objects leads to unstable optimization, (2) geometry‑aligned surface and silhouette losses are critical for converging to correct material values, and (3) explicit handling of inter‑object overlap during particle initialization prevents early simulation artifacts.

The paper’s contributions are threefold: (1) formal definition of multi‑object system identification from video and release of a challenging synthetic dataset, (2) a novel framework that couples object‑aware dynamic Gaussian splatting with a differentiable MPM to directly infer continuous material parameters, and (3) extensive experiments showing state‑of‑the‑art performance and highlighting the importance of each pipeline component.

Limitations include reliance on material masks (which may not be readily available in real scenes), evaluation solely on synthetic data (real‑world lighting, noise, and texture variations remain untested), and the computational cost of MPM, which currently precludes real‑time applications. Future work could focus on automatic material mask generation, GPU‑accelerated or learned physics simulators for speed, and integration with robotic manipulation pipelines to enable closed‑loop control using the learned digital twins.

Comments & Academic Discussion

Loading comments...

Leave a Comment