FALCON: Future-Aware Learning with Contextual Object-Centric Pretraining for UAV Action Recognition

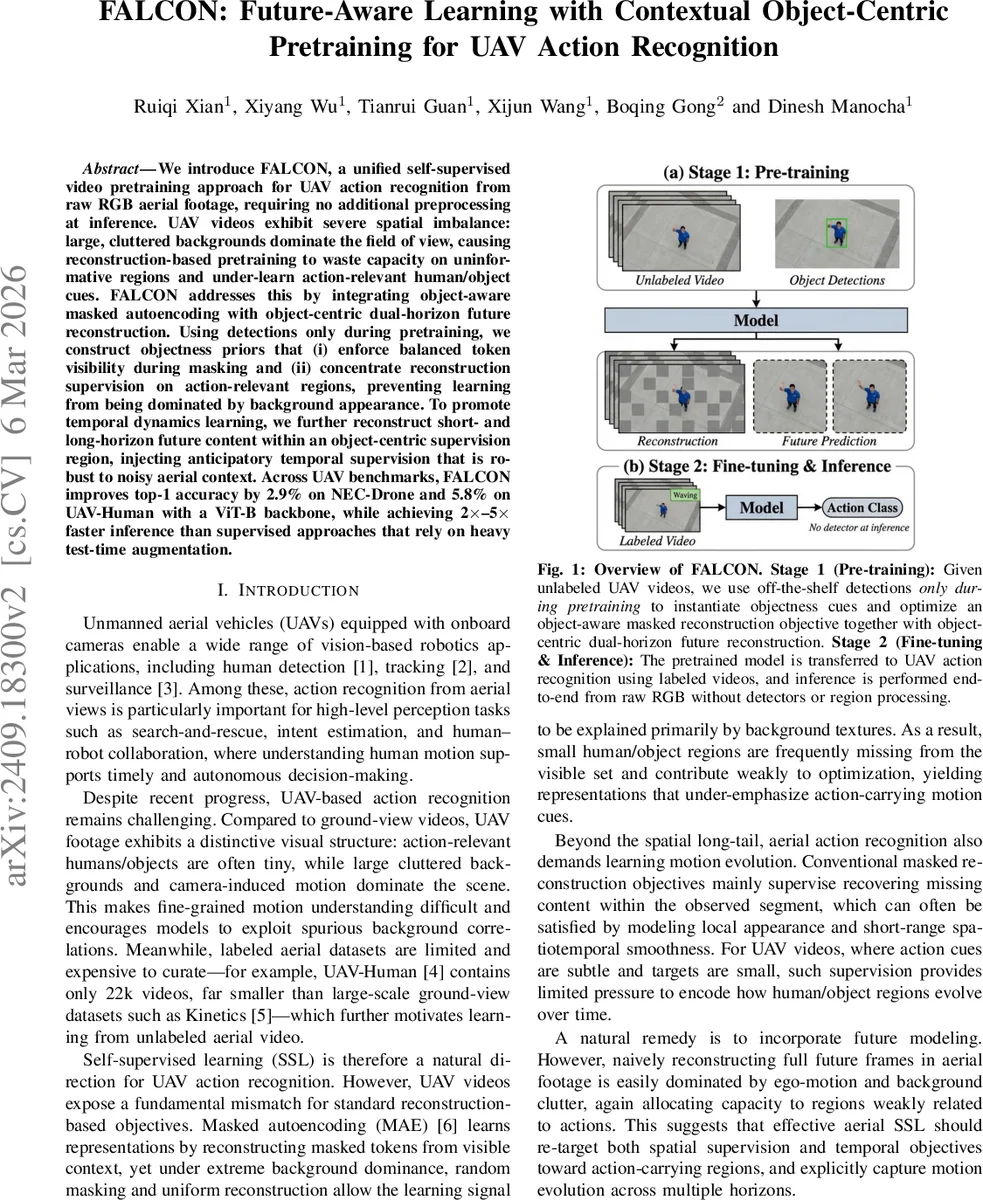

We introduce FALCON, a unified self-supervised video pretraining approach for UAV action recognition from raw RGB aerial footage, requiring no additional preprocessing at inference. UAV videos exhibit severe spatial imbalance: large, cluttered backgrounds dominate the field of view, causing reconstruction-based pretraining to waste capacity on uninformative regions and under-learn action-relevant human/object cues. FALCON addresses this by integrating object-aware masked autoencoding with object-centric dual-horizon future reconstruction. Using detections only during pretraining, we construct objectness priors that (i) enforce balanced token visibility during masking and (ii) concentrate reconstruction supervision on action-relevant regions, preventing learning from being dominated by background appearance. To promote temporal dynamics learning, we further reconstruct short- and long-horizon future content within an object-centric supervision region, injecting anticipatory temporal supervision that is robust to noisy aerial context. Across UAV benchmarks, FALCON improves top-1 accuracy by 2.9% on NEC-Drone and 5.8% on UAV-Human with a ViT-B backbone, while achieving 2$\times$–5$\times$ faster inference than supervised approaches that rely on heavy test-time augmentation.

💡 Research Summary

FALCON (Future‑Aware Learning with Contextual Object‑Centric Pretraining) tackles the unique challenges of UAV‑based action recognition, where tiny human or object actors are surrounded by large, cluttered backgrounds and strong camera motion. Conventional masked autoencoding (MAE) pre‑training suffers from two mismatches in this setting: (1) spatial background dominance—random masking and uniform reconstruction allocate most of the learning signal to background pixels, leaving the small action‑relevant regions under‑represented; (2) limited temporal supervision—reconstruction within the observed clip does not force the model to capture how those regions evolve over time.

To address these issues, FALCON introduces (i) an object‑aware masking scheme and (ii) a dual‑horizon future reconstruction objective, both driven by objectness cues derived from off‑the‑shelf detectors only during pre‑training.

Object‑aware masking. For each frame in the observed clip, detections are aggregated into a pixel‑level heatmap Hₒ via Gaussian kernels centered on detected boxes. Hₒ is projected onto the patch grid to obtain patch scores Sₒ. Patches are sorted by Sₒ, partitioned into equal‑length quantile bins, and one visible patch is sampled from each bin. This “stratified visibility” guarantees that high‑objectness (human/object) patches and low‑objectness (background) patches are both present among the visible tokens, preventing systematic exclusion of small actors while preserving contextual information.

Object‑centric reconstruction weighting. For every masked token i in the observed clip, the reconstruction loss is weighted by Ŵₒᵢ = Sₒᵢ + μ, where μ is the mean objectness score. This gives a non‑zero floor to background tokens but emphasizes high‑objectness regions, steering the model to allocate capacity to action‑relevant content.

Dual‑horizon future reconstruction. The video clip is split into an observed segment Vₒ and a future segment V_f. V_f is further divided into a short‑term horizon (t + 1 … t + n) and a long‑term horizon (t + n + 1 … t + 2n). A future objectness heatmap H_f is built from detections on the future frames. A high‑response support set Ω is defined by thresholding H_f, its bounding rectangle is dilated to form a contextual block R_f, and R_f is projected to a patch‑wise weight map W_f. Only tokens inside this object‑centric region receive supervision, dramatically reducing the influence of ego‑motion and background clutter on the future prediction loss. Two separate losses, L_short and L_long, are computed on the masked short‑ and long‑horizon tokens, each weighted by the normalized W_f. An additional consistency regularizer L_cons encourages the short‑ and long‑term predictions to be coherent.

The total pre‑training objective is:

L_FALCON = L_obs + L_short + L_long + L_cons,

optimized with an asymmetric ViT‑B encoder–decoder (the encoder sees only the visible tokens, the decoder reconstructs all masked tokens, including the fully masked future tokens).

After pre‑training, the encoder is fine‑tuned on labeled UAV action datasets without any detector input; inference proceeds end‑to‑end on raw RGB frames.

Experimental results. On the NEC‑Drone and UAV‑Human benchmarks, FALCON with a ViT‑B backbone improves top‑1 accuracy by 2.9 % and 5.8 % respectively over a standard VideoMAE baseline, while delivering 2 ×–5 × faster inference because no test‑time augmentations or region processing are required. Ablation studies confirm that (a) removing the object‑aware mask collapses performance to that of vanilla MAE, (b) discarding the object‑centric weighting re‑introduces background bias, and (c) using only a single future horizon limits the model’s ability to capture long‑range motion cues.

Strengths and limitations. FALCON’s main strengths are its precise modeling of UAV‑specific spatial imbalance, its use of detection cues only during pre‑training (keeping inference lightweight), and its dual‑horizon future prediction that simultaneously learns fine‑grained and long‑range dynamics. Limitations include dependence on the quality of the pre‑training detector (poor detections could produce inaccurate objectness priors) and the fixed masking‑ratio / binning strategy, which may not be optimal across all UAV scenarios.

Future directions suggested by the authors include detector‑free objectness estimation, adaptive masking ratios, and integration of additional modalities such as optical flow or depth to further enhance robustness to ego‑motion. Overall, FALCON presents a compelling, well‑engineered self‑supervised framework that substantially narrows the gap between self‑supervised and fully supervised UAV action recognition while maintaining real‑time inference capability.

Comments & Academic Discussion

Loading comments...

Leave a Comment