Full Dynamic Range Sky-Modelling For Image Based Lighting

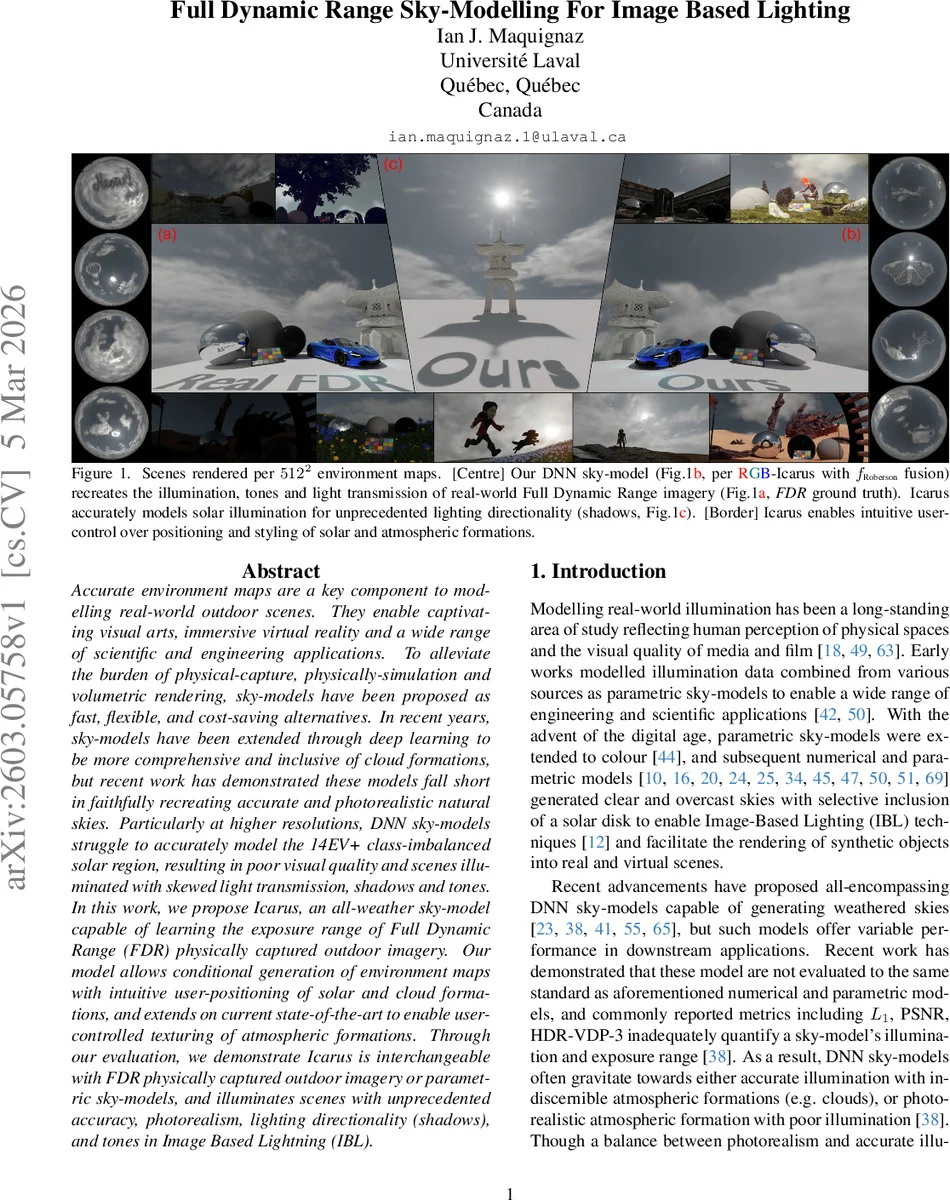

Accurate environment maps are a key component in modelling real-world outdoor scenes. They enable captivating visual arts, immersive virtual reality and a wide range of scientific and engineering applications. To alleviate the burden of physical capture, physical simulation and volumetric rendering, sky-models have been proposed as fast, flexible, and cost-saving alternatives. In recent years, sky-models have been extended through deep learning to be more comprehensive and inclusive of cloud formations, but recent work has demonstrated that these models fall short in faithfully recreating accurate and photorealistic natural skies. Particularly at higher resolutions, DNN sky-models struggle to accurately model the 14EV+ class-imbalanced solar region, resulting in poor visual quality and scenes illuminated with skewed light transmission, shadows and tones. In this work, we propose Icarus, an all-weather sky-model capable of learning the exposure range of Full Dynamic Range (FDR) physically captured outdoor imagery. Our model allows conditional generation of environment maps with intuitive user-positioning of solar and cloud formations, and extends the current state of the art to enable user-controlled texturing of atmospheric formations. Through our evaluation, we demonstrate that Icarus is interchangeable with FDR physically captured outdoor imagery or parametric sky-models, and illuminates scenes with unprecedented accuracy, photorealism, lighting directionality (shadows), and tones in Image Based Lighting (IBL).

💡 Research Summary

The paper addresses a long‑standing challenge in image‑based lighting (IBL): generating environment maps that faithfully capture the full dynamic range (FDR) of real outdoor skies. Conventional parametric sky models are fast but lack realistic clouds, while recent deep‑learning sky models still struggle with the extreme exposure range (>14 EV) of the solar region, which leads to inaccurate lighting directionality, shadows, and tones in lit scenes. To overcome these limitations, the authors introduce Icarus, an all‑weather deep neural network (DNN) sky model capable of learning and synthesizing FDR environment maps.

Key contributions:

- Dynamic‑range decomposition (bracketing) – Instead of compressing HDR images with a tone‑mapping operator (which introduces non‑linear distortions), the authors decompose each HDR sky image into a set of N low‑dynamic‑range (LDR) exposure brackets based on exposure time Δt. This pseudo‑inverse mapping yields multiple LDR images that together span the full HDR intensity range, mitigating the class imbalance of the bright solar pixels and allowing the network to learn each exposure independently.

- Novel architecture – Icarus combines a style‑aware generator (inspired by SEAN) with two discriminators. An RGB style encoder extracts per‑class texture codes from a segmented input image, while an RND style mapper generates compatible style codes from class labels. The generator produces N LDR brackets, which are evaluated by an LDR‑discriminator (ensuring per‑exposure realism) and an HDR‑discriminator (ensuring coherence across brackets). Losses include supervised L1/LPIPS on low‑variability regions, plus physically‑motivated metrics: integrated illumination (∫Ω|I|) and exposure value (EV) differences, which directly measure solar luminance and shadow fidelity.

- Fusion to FDR – After generation, the N LDR brackets are merged into a single FDR image using a learned Robertson weighting scheme or standard HDR fusion methods. This step restores the full exposure range without the artifacts typical of tone‑mapped reconstructions.

- User‑controllable conditioning – Icarus allows intuitive placement of the sun (azimuth/elevation) and cloud formations, and supports texture styling via a style‑mixer that can either copy textures from a reference image or generate stochastic styles. This provides artists with fine‑grained control while preserving physical accuracy.

- Comprehensive evaluation – Using 512 × 512 environment maps (512 samples), the authors compare Icarus against state‑of‑the‑art models such as AllSky and SkyGAN. Quantitative metrics (PSNR, HDR‑VDP‑3) are supplemented with illumination and EV errors, demonstrating that Icarus matches physically captured FDR imagery in both visual quality and lighting correctness. Rendered scenes show accurate shadows, proper solar transmission through clouds, and realistic tone reproduction.
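The bracketing and fusion steps listed above can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes a linear camera response, scalar radiance values in a flat list, and a simple hat‑weighted average for the merge in place of the learned Robertson weighting the summary mentions. The function names (`decompose_into_brackets`, `merge_brackets`, `hat_weight`) and the example exposure times are hypothetical.

```python
import math


def hat_weight(z):
    """Triangle weight: trusts mid-range values, gives zero weight at 0 and 1."""
    return 1.0 - abs(2.0 * z - 1.0)


def decompose_into_brackets(radiance, exposure_times):
    """Simulate one LDR bracket per exposure time.

    Assumes a linear response: pixel = clip(radiance * dt, 0, 1).
    """
    return [
        [min(1.0, max(0.0, r * dt)) for r in radiance]
        for dt in exposure_times
    ]


def merge_brackets(brackets, exposure_times):
    """Fuse brackets back into radiance with a hat-weighted average.

    Each unclipped sample z gives the radiance estimate z / dt. If every
    sample of a pixel is clipped, fall back to the shortest exposure.
    """
    shortest = min(range(len(exposure_times)), key=lambda i: exposure_times[i])
    merged = []
    for p in range(len(brackets[0])):
        numerator = 0.0
        denominator = 0.0
        for bracket, dt in zip(brackets, exposure_times):
            w = hat_weight(bracket[p])
            numerator += w * bracket[p] / dt
            denominator += w
        if denominator > 0.0:
            merged.append(numerator / denominator)
        else:
            merged.append(brackets[shortest][p] / exposure_times[shortest])
    return merged


# Hypothetical scene: shadow, mid-grey sky, bright cloud, solar disc.
radiance = [0.01, 0.5, 4.0, 16000.0]
# Eight brackets spaced 2 EV apart, covering 14 EV in total.
exposure_times = [2.0 ** -k for k in range(0, 16, 2)]

brackets = decompose_into_brackets(radiance, exposure_times)
recovered = merge_brackets(brackets, exposure_times)
```

In this toy setup the solar pixel saturates in every bracket except the shortest one, so its radiance is recoverable only because the exposure times reach far enough down. That is the motivation for spanning the full range with several brackets rather than compressing it into a single tone‑mapped image.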

The paper also clarifies terminology around dynamic range (LDR, HDR, EDR, FDR) and argues that most existing sky datasets only cover LDR/EDR, making FDR learning difficult without the proposed bracketing approach. Limitations include the current focus on 512 × 512 resolution and the need for further optimization for real‑time applications.
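To make the dynamic‑range terminology and the integrated‑illumination metric more concrete, here is a small sketch under stated assumptions. Dynamic range is measured in stops as the base‑2 logarithm of the brightest over the darkest non‑zero value. The illumination integral assumes a full‑sphere equirectangular map of scalar luminance, weighted by the solid angle of each pixel. The summary does not give the paper's exact metric definitions, so both function names and the integration domain are illustrative choices.

```python
import math


def dynamic_range_stops(radiance):
    """Dynamic range in stops (EV): log2(brightest / darkest non-zero value)."""
    positive = [r for r in radiance if r > 0.0]
    if not positive:
        raise ValueError("need at least one positive radiance value")
    return math.log2(max(positive) / min(positive))


def integrated_illumination(env_map):
    """Approximate the integral of |I| over the sphere.

    env_map is a list of rows (equirectangular layout, assumed full sphere).
    Each pixel is weighted by its solid angle sin(theta) * d_theta * d_phi,
    with theta the polar angle at the row centre.
    """
    height = len(env_map)
    width = len(env_map[0])
    d_theta = math.pi / height
    d_phi = 2.0 * math.pi / width
    total = 0.0
    for row_index, row in enumerate(env_map):
        theta = (row_index + 0.5) * d_theta
        solid_angle = math.sin(theta) * d_theta * d_phi
        total += solid_angle * sum(abs(value) for value in row)
    return total
```

A map whose brightest pixel is 16384 times its darkest spans 14 stops, the order of magnitude the summary associates with the solar region. A uniform map of unit radiance should integrate to roughly 4π, which is a quick sanity check for the solid‑angle weighting.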

In summary, Icarus introduces a principled pipeline (dynamic‑range bracketing, style‑conditioned generation, dual‑discriminator training, and learned fusion) that addresses the long‑standing issue of reproducing the extreme luminance of the sun within deep‑learning sky models. By delivering physically accurate, photorealistic FDR environment maps with interactive control, Icarus advances the state of the art for IBL, virtual production, VR, and scientific visualization.
