Asymptotic Behavior of Multi-Task Learning: Implicit Regularization and Double Descent Effects

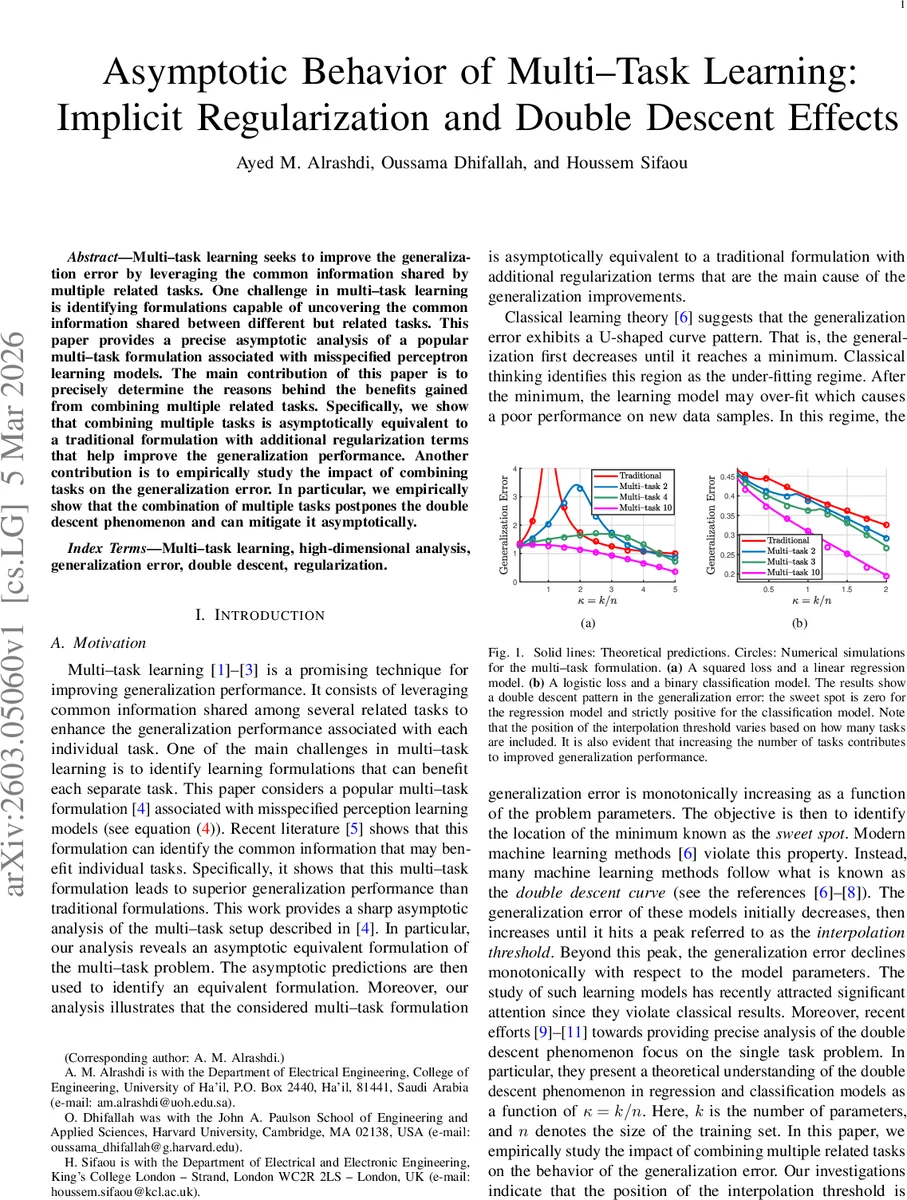

Multi-task learning seeks to improve generalization error by leveraging the information shared across multiple related tasks. One challenge is identifying formulations capable of uncovering that shared information. This paper provides a precise asymptotic analysis of a popular multi-task formulation associated with misspecified perceptron learning models. Its main contribution is to determine precisely why combining multiple related tasks helps: we show that combining tasks is asymptotically equivalent to a traditional formulation with additional regularization terms that improve generalization performance. A second contribution is an empirical study of how combining tasks affects the generalization error. In particular, we show empirically that combining multiple tasks postpones the double descent phenomenon and can mitigate it asymptotically.

💡 Research Summary

This paper presents a rigorous high‑dimensional asymptotic analysis of a popular multi‑task learning (MTL) formulation that has been empirically observed to improve generalization by exploiting shared structure among related tasks. The authors consider T tasks, each with nₜ training examples drawn from a standard Gaussian feature distribution. Labels are generated by a possibly misspecified perceptron model yₜ,i = φ(aₜ,iᵀ ξₜ), where the task‑specific parameter ξₜ decomposes as ξₜ = σ vₜ + v₀. The scalar σ controls task similarity; the similarity measure ρ = 1/(1+σ²) lies in
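The data model described in this summary can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the authors' code: the function names (`similarity`, `make_tasks`, `cosine`) are hypothetical, every task gets the same number of samples `n` for simplicity, the vectors v₀ and vₜ are assumed to be i.i.d. standard Gaussian, and φ is taken to be the sign function as one possible choice. The summary itself fixes none of these.

```python
import numpy as np

def similarity(sigma):
    """Similarity measure rho = 1 / (1 + sigma^2) from the summary."""
    return 1.0 / (1.0 + sigma ** 2)

def make_tasks(T, n, p, sigma, phi=np.sign, seed=0):
    """Draw T synthetic tasks with xi_t = sigma * v_t + v_0.

    Assumptions (not stated in the summary): v_0 and v_t are i.i.d.
    standard Gaussian vectors, phi defaults to sign, and all tasks
    share the same sample size n.
    """
    rng = np.random.default_rng(seed)
    v0 = rng.standard_normal(p)           # component shared by all tasks
    tasks = []
    for _ in range(T):
        v_t = rng.standard_normal(p)      # task-specific component
        xi_t = sigma * v_t + v0           # task parameter
        A = rng.standard_normal((n, p))   # standard Gaussian features
        y = phi(A @ xi_t)                 # labels y_{t,i} = phi(a_{t,i}^T xi_t)
        tasks.append((A, y, xi_t))
    return tasks

def cosine(u, w):
    """Cosine of the angle between two vectors."""
    return float(u @ w / (np.linalg.norm(u) * np.linalg.norm(w)))

sigma = 1.0
tasks = make_tasks(T=2, n=50, p=2000, sigma=sigma)
rho = similarity(sigma)
cos = cosine(tasks[0][2], tasks[1][2])
print(f"rho = {rho:.3f}, cosine between task parameters = {cos:.3f}")
```

Under the Gaussian assumption above, the cosine between two task parameters concentrates around ρ as the dimension `p` grows. This gives one way to read ρ: small σ makes the tasks nearly identical, while large σ makes them nearly unrelated.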
