Osmosis Distillation: Model Hijacking with the Fewest Samples

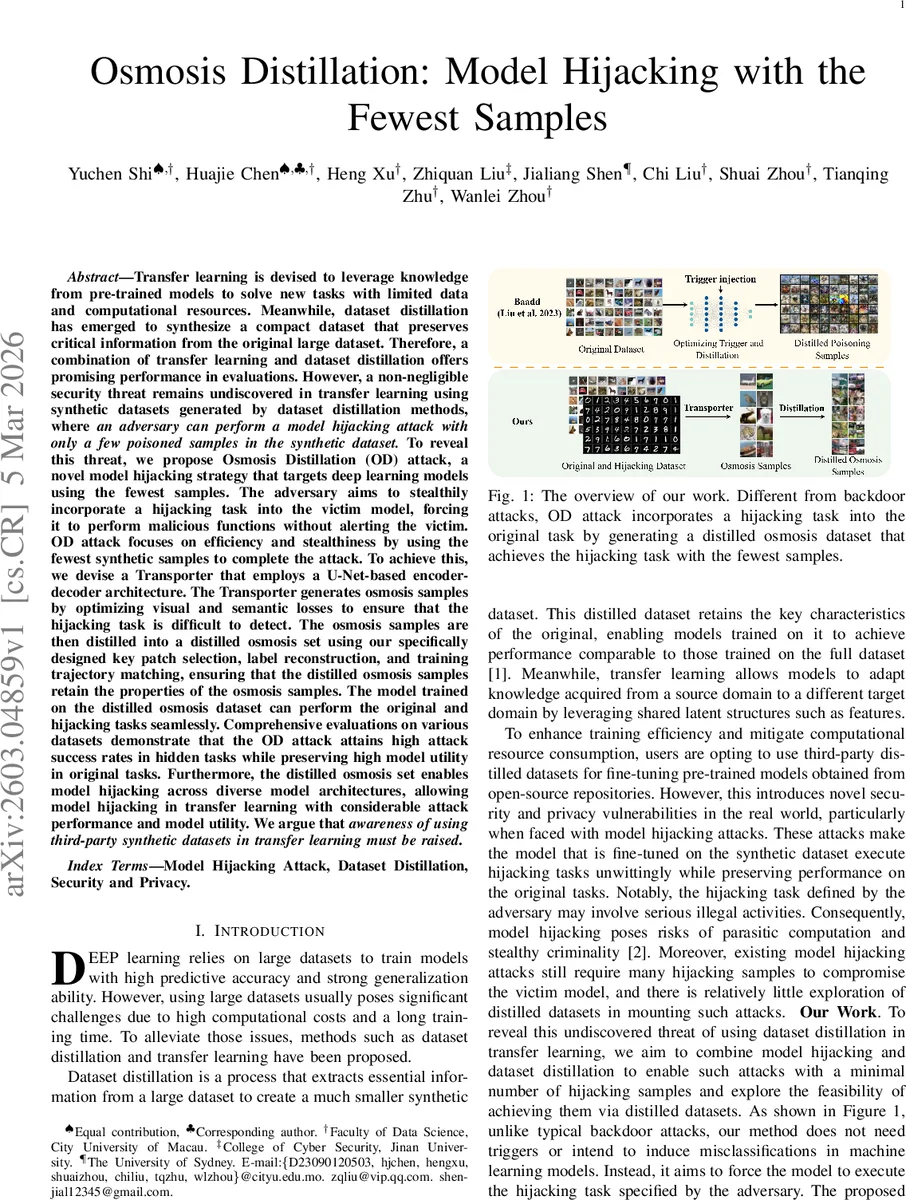

Transfer learning is devised to leverage knowledge from pre-trained models to solve new tasks with limited data and computational resources. Meanwhile, dataset distillation has emerged to synthesize a compact dataset that preserves critical information from the original large dataset. Therefore, a combination of transfer learning and dataset distillation offers promising performance in evaluations. However, a non-negligible security threat remains undiscovered in transfer learning using synthetic datasets generated by dataset distillation methods, where an adversary can perform a model hijacking attack with only a few poisoned samples in the synthetic dataset. To reveal this threat, we propose Osmosis Distillation (OD) attack, a novel model hijacking strategy that targets deep learning models using the fewest samples. Comprehensive evaluations on various datasets demonstrate that the OD attack attains high attack success rates in hidden tasks while preserving high model utility in original tasks. Furthermore, the distilled osmosis set enables model hijacking across diverse model architectures, allowing model hijacking in transfer learning with considerable attack performance and model utility. We argue that awareness of using third-party synthetic datasets in transfer learning must be raised.

💡 Research Summary

The paper introduces a novel model‑hijacking threat that exploits synthetic datasets produced by dataset‑distillation techniques, a scenario increasingly common in modern transfer‑learning pipelines. While dataset distillation enables the creation of a tiny set of synthetic images that retain the training power of a large original corpus, the authors demonstrate that an adversary can embed a hidden malicious task into such a distilled set with only a handful of poisoned samples, thereby hijacking any downstream model fine‑tuned on the data.

The proposed attack, named Osmosis Distillation (OD), consists of two stages: (1) Osmosis – generation of “osmosis samples” that look visually identical to benign data but are semantically aligned with the attacker’s target task, and (2) Distillation – compression of the large pool of osmosis samples into a very small, highly‑effective distilled osmosis dataset (DOD).

In the Osmosis stage, a Transporter network is built on a dual‑encoder U‑Net architecture. One encoder processes original images (xₒ), the other processes attacker‑controlled hijacking images (xₕ). Their latent representations are concatenated and fed to a decoder that outputs the osmosis image (x_c). Two complementary losses guide training: a visual L1 loss (‖x_c‑xₒ‖₁) that forces pixel‑level similarity to the original, and a semantic L1 loss (‖F(x_c)‑F(xₕ)‖₁) where F is a pre‑trained feature extractor, ensuring that the hidden task’s high‑level features are preserved. Weighting coefficients λ_v and λ_s balance stealth versus task fidelity.

The Distillation stage reduces the massive set of osmosis images to a few hundred samples. Each osmosis image is split into equal‑size patches; a “realism score” is computed for each patch, and the highest‑scoring patch is selected as a key patch. These key patches are used to reconstruct full images, dramatically shrinking the dataset while keeping visual realism. Labels are reconstructed using soft‑label blending and training‑trajectory matching, which aligns the optimization path of the distilled set with that of the full real dataset, preserving the hidden task’s learning dynamics.

Extensive experiments on CIFAR‑10, SVHN, and Tiny‑ImageNet, using ResNet‑18, VGG‑16, and ConvNet backbones, show that with only 50 distilled osmosis samples per class (≈500–1000 total), the victim model retains its original task accuracy (≤2 % drop) while achieving >85 % success on the hidden task. The attack also transfers across architectures, confirming the claimed transferability. Compared with prior model‑hijacking or backdoor attacks that require thousands of poisoned examples and explicit triggers, OD achieves higher stealth (no visible trigger) and far greater sample efficiency.

The authors discuss limitations: the semantic loss depends on the choice of feature extractor, and aggressive visual loss weighting can weaken the hidden task. The realism‑score computation adds overhead, and defenses based on data‑sanity checks or label smoothing are only partially effective because OD does not alter labels or embed obvious triggers. They advocate for stricter provenance verification of distilled datasets, runtime monitoring of feature‑distribution shifts, and development of new defenses tailored to synthetic‑data attacks.

In conclusion, the work uncovers a previously overlooked attack surface: third‑party distilled datasets can serve as covert vectors for model hijacking. By demonstrating a practical, low‑cost, and highly stealthy method, the paper calls for heightened awareness and security measures in the growing ecosystem of shared synthetic data for transfer learning. Future directions include extending OD to non‑image modalities (text, audio), designing real‑time detection mechanisms for osmosis‑style poisoning, and formalizing defenses against such distilled‑data attacks.

Comments & Academic Discussion

Loading comments...

Leave a Comment