LLM-supported 3D Modeling Tool for Radio Radiance Field Reconstruction

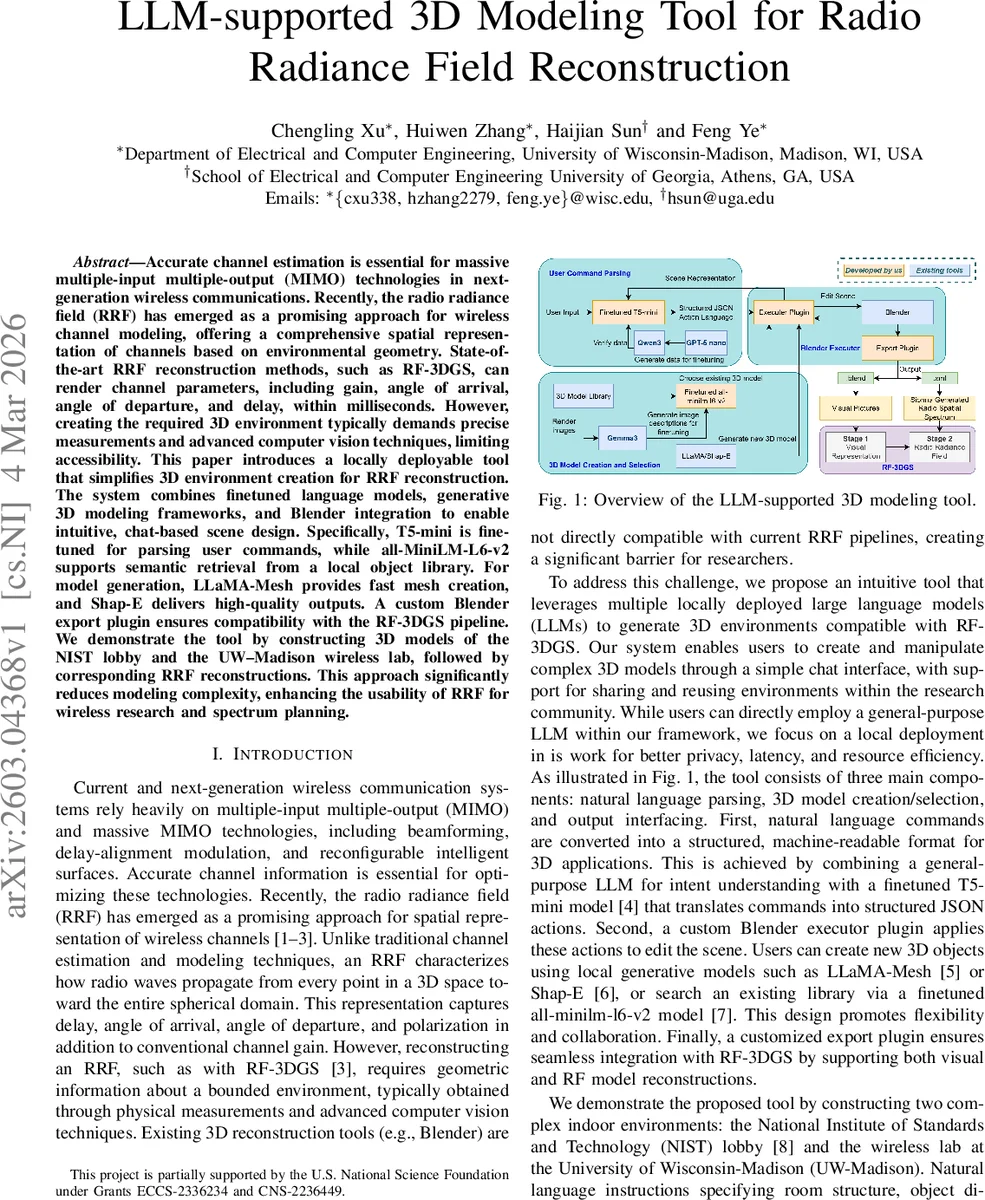

Accurate channel estimation is essential for massive multiple-input multiple-output (MIMO) technologies in next-generation wireless communications. Recently, the radio radiance field (RRF) has emerged as a promising approach for wireless channel modeling, offering a comprehensive spatial representation of channels based on environmental geometry. State-of-the-art RRF reconstruction methods, such as RF-3DGS, can render channel parameters, including gain, angle of arrival, angle of departure, and delay, within milliseconds. However, creating the required 3D environment typically demands precise measurements and advanced computer vision techniques, limiting accessibility. This paper introduces a locally deployable tool that simplifies 3D environment creation for RRF reconstruction. The system combines finetuned language models, generative 3D modeling frameworks, and Blender integration to enable intuitive, chat-based scene design. Specifically, T5-mini is finetuned for parsing user commands, while all-MiniLM-L6-v2 supports semantic retrieval from a local object library. For model generation, LLaMA-Mesh provides fast mesh creation, and Shap-E delivers high-quality outputs. A custom Blender export plugin ensures compatibility with the RF-3DGS pipeline. We demonstrate the tool by constructing 3D models of the NIST lobby and the UW-Madison wireless lab, followed by corresponding RRF reconstructions. This approach significantly reduces modeling complexity, enhancing the usability of RRF for wireless research and spectrum planning.

💡 Research Summary

The paper presents a locally deployable system that dramatically simplifies the creation of three‑dimensional (3D) environments required for Radio Radiance Field (RRF) reconstruction, a spatially comprehensive channel modeling technique gaining traction in massive MIMO research. Traditional RRF pipelines, such as RF‑3DGS, demand accurate geometric models of the surrounding space, which are usually obtained through labor‑intensive measurements, laser scanning, and sophisticated computer‑vision pipelines. This high barrier limits the accessibility of RRF for many researchers and engineers.

To address this, the authors propose an “LLM‑supported 3D modeling tool” that enables users to design complex indoor scenes through a conversational chat interface. The system consists of three tightly coupled components: (1) natural‑language command parsing, (2) 3D object creation or retrieval, and (3) a custom Blender executor/export plugin that translates parsed actions into a scene compatible with the RF‑3DGS pipeline.

Natural‑Language Parsing

User utterances are transformed into a structured JSON array of action objects. Each action specifies an operation (e.g., create object, move object, change material) and the necessary parameters (object type, position, rotation, etc.). While a large general‑purpose LLM could perform this translation, its computational cost and tendency to produce syntactic errors make it unsuitable for real‑time interaction. The authors therefore adopt a distillation approach: a powerful LLM generates a synthetic dataset of natural‑language commands paired with correct JSON outputs; this dataset is then used to fine‑tune a lightweight encoder‑decoder model (Google’s T5‑mini). The fine‑tuned T5‑mini achieves >99 % JSON validity and >95 % semantic correctness after a multi‑stage validation pipeline that includes rule‑based checks and an LLM‑based semantic validator (Qwen‑3‑8B). Prompt engineering—defining the model’s role, providing scene context, specifying the schema, and offering few‑shot examples—ensures deterministic, error‑free outputs.

3D Object Creation and Retrieval

The tool offers two locally deployed generative models: (a) Shap‑E, a diffusion‑based network that produces high‑fidelity meshes from textual prompts, and (b) LLaMA‑Mesh, which encodes meshes as text tokens, enabling rapid generation at the expense of detail. Users can select the model based on a quality‑vs‑latency trade‑off. In addition, a semantic search engine built on a fine‑tuned all‑MiniLM‑L6‑v2 model allows retrieval of existing assets from a curated library (derived from ModelNet‑40). Each object in the library is annotated with up to six textual descriptions generated by a multi‑modal LLM (Gemma‑3‑12B). Contrastive learning with triplet loss aligns descriptions of the same object while pushing apart different objects, yielding instance‑level embeddings. At inference time, a user’s natural‑language description is embedded and matched against the FAISS index, returning the most semantically similar 3D asset.

Blender Integration

Two custom plugins bridge the backend to Blender:

- Executor Plugin runs as an HTTP server, receives the JSON action list, resolves local IDs to actual object names, converts relative placements to absolute coordinates, and sequentially invokes Blender’s Python API to modify the scene. It also provides a “get scene” endpoint that returns room dimensions, object attributes, and geometric metadata (center, bounding box, size).

- Export Plugin addresses the incompatibility of the existing mitsuba‑blender exporter with Blender 4.3+. It exports every visible mesh as a Polygon File Format (PLY) file and generates a single XML descriptor compatible with the open‑source ray‑tracing simulator Sionna, which RF‑3DGS uses. Materials are encoded as simple two‑sided BSDFs with RGB values, and the plugin automatically handles Sionna’s “itu” material prefix and Blender’s “.001” naming suffix. The resulting folder (PLY meshes + XML scene file) can be directly loaded into RF‑3DGS without further conversion.

Experimental Validation

The authors demonstrate the system by reconstructing two indoor environments: the NIST lobby (for which a high‑resolution point‑cloud reference exists) and the UW‑Madison wireless lab (which lacks any prior geometric data). Users interact solely through natural‑language commands such as “Create a wooden table in the center of the room” or “Place four bowls on the table”. The generated scenes are then fed into RF‑3DGS to produce RRFs. Quantitative comparisons show that visual fidelity and extracted channel parameters (gain, AoA, AoD, delay) are statistically indistinguishable from those obtained with manually built models. Parsing performance across ten candidate LLMs (including Qwen‑3, Gemma‑3, DeepSeek‑R1, Llama 3.1, Phi‑4) reveals that the distilled T5‑mini outperforms all others in JSON validity, format compliance, uniqueness, and semantic match.

Runtime measurements on a single RTX 3080 GPU indicate an average command‑to‑action latency of ~6 seconds. Shap‑E generates a high‑quality mesh in under 30 seconds, while LLaMA‑Mesh produces a coarse mesh in <2 seconds, supporting interactive design cycles. All components run locally, preserving user privacy, eliminating cloud‑service latency, and enabling deployment on modest research‑lab hardware.

Implications and Future Work

By removing the need for expensive measurement campaigns and complex vision pipelines, the proposed tool lowers the entry barrier for RRF‑based channel simulation, facilitating rapid prototyping, educational use, and large‑scale scenario generation. The authors envision extensions such as multimodal LLMs that can ingest sketches or photos, automated environment updates based on sensor feedback, and collaborative libraries where researchers share and version‑control 3D assets.

In summary, the paper delivers a practical, end‑to‑end solution that couples lightweight language models, generative 3D networks, and a Blender‑centric workflow to make radio radiance field reconstruction accessible, efficient, and ready for integration into next‑generation wireless system design.

Comments & Academic Discussion

Loading comments...

Leave a Comment