Fine-grained Soundscape Control for Augmented Hearing

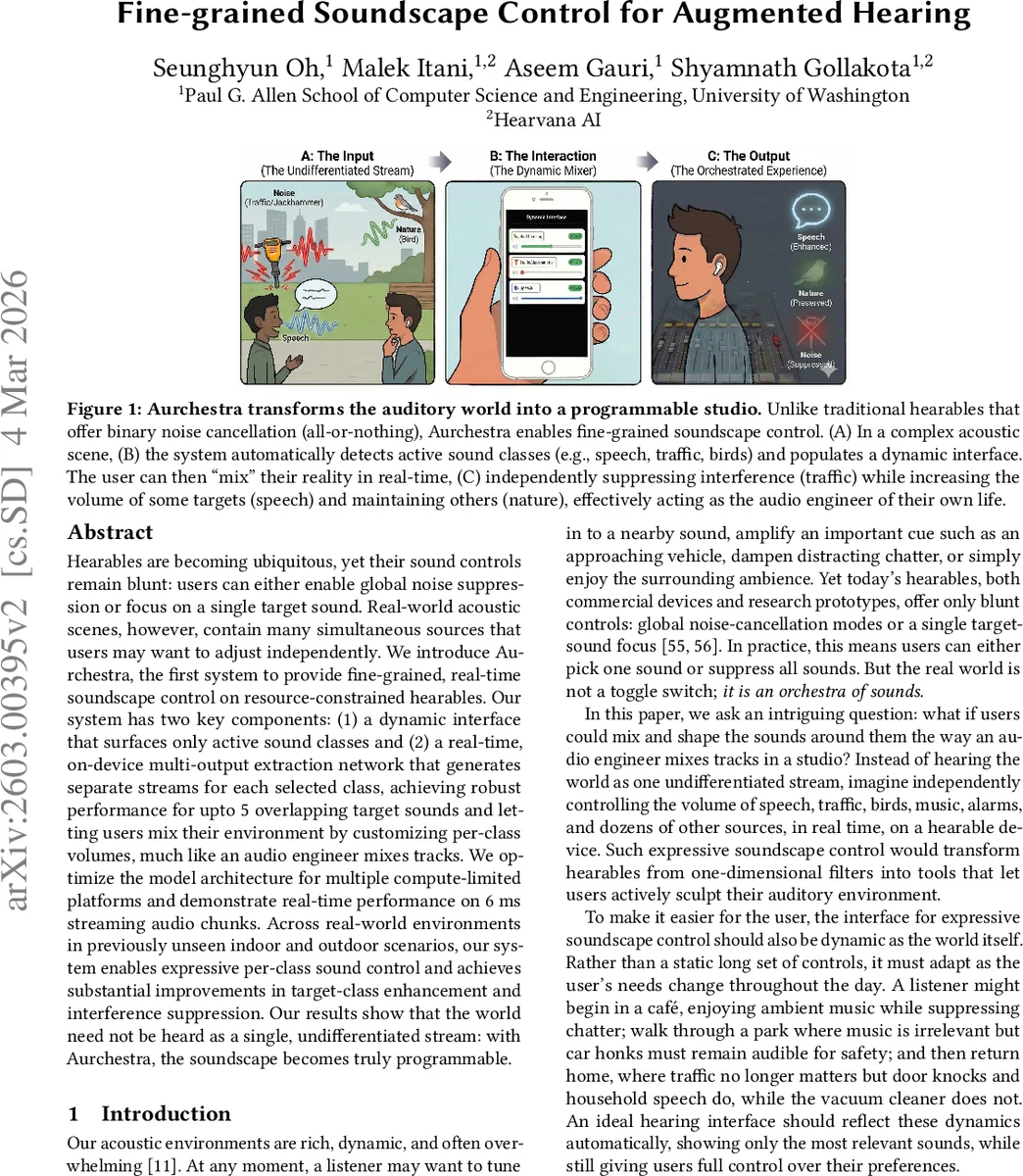

Hearables are becoming ubiquitous, yet their sound controls remain blunt: users can either enable global noise suppression or focus on a single target sound. Real-world acoustic scenes, however, contain many simultaneous sources that users may want to adjust independently. We introduce Aurchestra, the first system to provide fine-grained, real-time soundscape control on resource-constrained hearables. Our system has two key components: (1) a dynamic interface that surfaces only active sound classes and (2) a real-time, on-device multi-output extraction network that generates separate streams for each selected class, achieving robust performance for upto 5 overlapping target sounds, and letting users mix their environment by customizing per-class volumes, much like an audio engineer mixes tracks. We optimize the model architecture for multiple compute-limited platforms and demonstrate real-time performance on 6 ms streaming audio chunks. Across real-world environments in previously unseen indoor and outdoor scenarios, our system enables expressive per-class sound control and achieves substantial improvements in target-class enhancement and interference suppression. Our results show that the world need not be heard as a single, undifferentiated stream: with Aurchestra, the soundscape becomes truly programmable.

💡 Research Summary

The paper introduces Aurchestra, a novel augmented‑hearing system that enables fine‑grained, real‑time control over multiple sound classes on resource‑constrained hearable devices. Current hearables typically offer only binary noise cancellation or single‑target enhancement, which limits users in complex acoustic environments where several sources may need to be amplified, attenuated, or left untouched. Aurchestra addresses this gap with two tightly coupled components: (1) a dynamic user interface that automatically detects which sound classes are present in the current scene and surfaces only those classes for user selection, and (2) an on‑device, multi‑output extraction neural network that produces a separate audio stream for each selected class, allowing independent per‑class volume adjustments much like a mixing console.

The extraction model operates on 6 ms audio chunks, processing them in the time‑frequency domain using a short‑time Fourier transform (STFT). After a convolutional encoder projects the complex spectrogram into a latent space, a series of dual‑path time‑frequency blocks—each consisting of a spectral stage (frequency modeling) and a temporal stage (time modeling)—refine the representation. Crucially, the network is conditioned on a multi‑hot vector indicating the selected classes and is designed to output O streams where O ≪ K (e.g., O = 5 streams for K = 20 possible classes). This “output‑reduction” strategy reduces computational load, improves convergence, and eliminates the need for permutation‑invariant training or post‑hoc channel stitching. The mapping from classes to streams is deterministic: classes are ordered alphabetically among the selected set, and each occupies the corresponding output head.

To meet the strict latency (< 20 ms) and power budgets of hearables, the authors explore several hardware‑aware architectures, combining bidirectional LSTMs, MLP‑Mixers, and dual‑path modules. They benchmark three platforms—Orange Pi 5B, Raspberry Pi 4B, and the GAP9 AI accelerator—showing average inference times of 5.22 ms, 4.47 ms, and 5.23 ms per chunk, respectively, and a power consumption of only 56 mW on GAP9. This demonstrates that the system can run in real time on devices with limited compute and energy resources.

The dynamic interface relies on a transformer‑based sound‑event detection model that has been fine‑tuned on heavily overlapped mixtures. In scenes with up to five simultaneous target sounds, detection accuracy improves from 63.8–81.5 % (baseline) to 93.2 %. By exposing only the detected classes on a companion smartphone, the UI reduces the time required for users to select targets by 67.9 % compared with a static list.

Extensive evaluation includes objective and subjective metrics. Objectively, Aurchestra achieves a signal‑to‑noise ratio improvement (SNRi) of 11.99 dB versus 7.29 dB for the prior real‑time single‑target baseline, while using only 0.5 M parameters (half the baseline). The system remains stable when extracting up to five streams simultaneously. Subjectively, listening tests with 17 participants report significant gains in background‑noise suppression (+1.54 points) and overall listening experience (+0.95 points) without perceptible distortion. A second user study (7 participants) confirms the usability benefits of the dynamic interface.

In summary, Aurchestra is the first hearable system that provides real‑time, multi‑class sound extraction and per‑class volume control on low‑power hardware. It transforms the hearing aid from a simple filter into a programmable audio mixing console, opening avenues for future work such as personalized preference learning, intent prediction, and seamless integration with AR/VR environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment