Metric, inertially aligned monocular state estimation via kinetodynamic priors

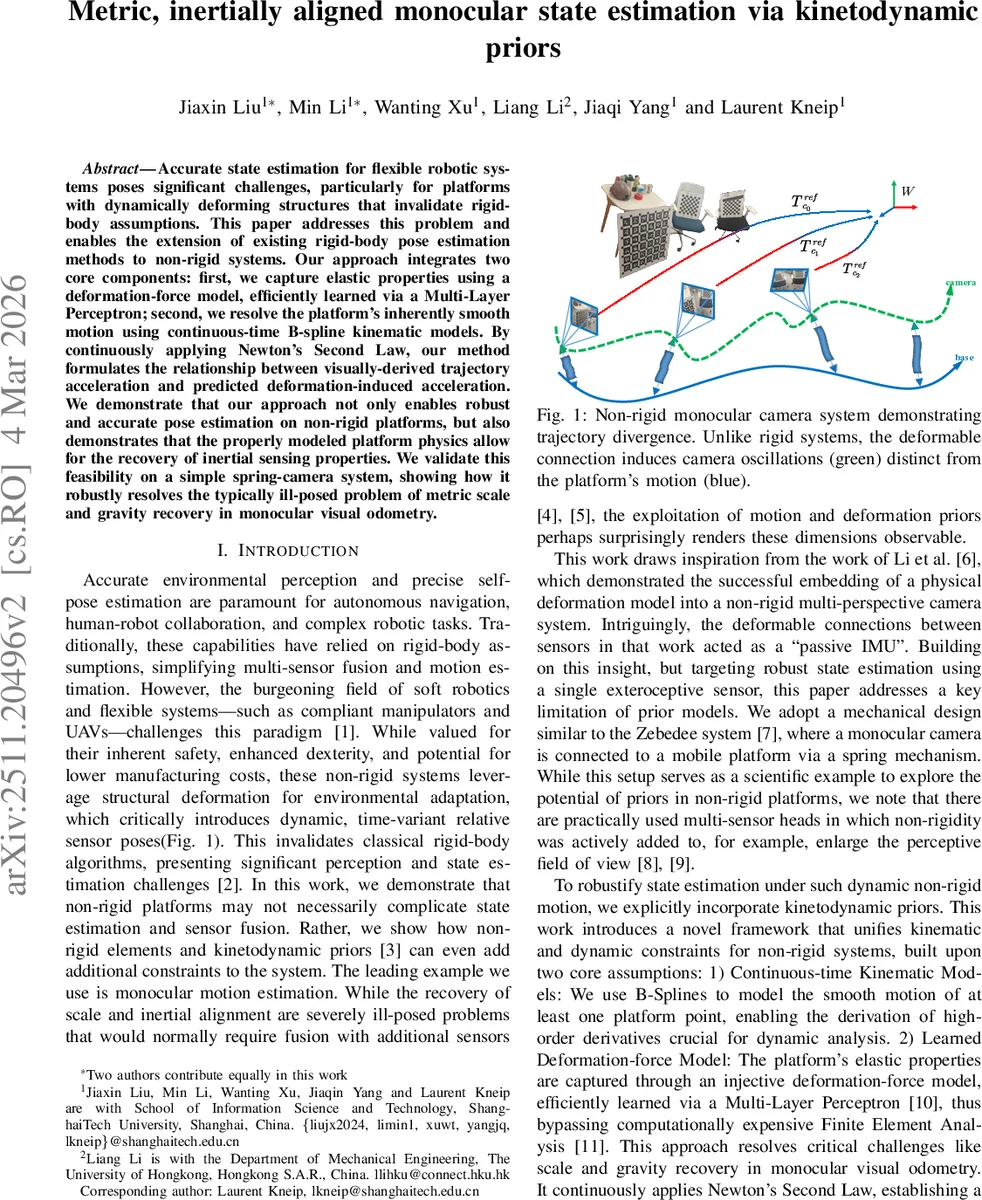

Accurate state estimation for flexible robotic systems poses significant challenges, particularly for platforms with dynamically deforming structures that invalidate rigid-body assumptions. This paper addresses this problem and enables the extension of existing rigid-body pose estimation methods to non-rigid systems. Our approach integrates two core components: first, we capture elastic properties using a deformation-force model, efficiently learned via a Multi-Layer Perceptron; second, we resolve the platform’s inherently smooth motion using continuous-time B-spline kinematic models. By continuously applying Newton’s Second Law, our method formulates the relationship between visually-derived trajectory acceleration and predicted deformation-induced acceleration. We demonstrate that our approach not only enables robust and accurate pose estimation on non-rigid platforms, but also demonstrates that the properly modeled platform physics allow for the recovery of inertial sensing properties. We validate this feasibility on a simple spring-camera system, showing how it robustly resolves the typically ill-posed problem of metric scale and gravity recovery in monocular visual odometry.

💡 Research Summary

This paper tackles the long‑standing problem of metric scale and gravity alignment in monocular visual odometry (VO) when the camera is mounted on a deformable (non‑rigid) platform. Traditional VO assumes a rigid sensor rig, which makes the scale ambiguous and requires additional sensors (IMU, GPS, LiDAR) to resolve. The authors demonstrate that the elastic deformation of the mounting structure itself can provide the missing physical constraints, effectively turning the flexible connection into a passive inertial sensor.

The proposed framework consists of two tightly coupled components. First, a Deformation‑Force Network (DFN) is trained to map the relative pose between the base and the camera (the deformation of the spring‑like connection) to a six‑degree‑of‑freedom force/torque vector expressed in the camera frame. The DFN is a lightweight multilayer perceptron (MLP) trained offline using ground‑truth acceleration and torque data collected with a motion‑capture system. By learning directly in the sensor‑centric frame, the network captures complex, nonlinear elastic and damping behavior without resorting to computationally expensive finite‑element analysis.

Second, the trajectory of the moving base is represented as a continuous‑time B‑spline on SE(3). Control points (K_i) and a spline order (k) define a smooth pose curve; the spline’s analytical derivatives provide velocity and acceleration at any time instant. Visual odometry (COLMAP) supplies an initial, scale‑free camera pose sequence, which is fitted to the B‑spline. Numerical differentiation of the spline yields the visual acceleration (A^{vis}_i).

Newton’s second law is applied in the camera frame: the visual acceleration minus the gravity term gives the specific (non‑gravitational) acceleration (s_c). Physically, this acceleration must equal the elastic force divided by the camera mass. The DFN predicts the elastic force/torque from the current deformation, and after rotating it into the world frame and adding the gravity vector, we obtain a physical acceleration (A^{phy}_i).

Because the visual accelerations are only known up to an unknown similarity transformation (S_{align}) (scale (s), rotation (R_{align}), translation (t_{align})), the authors introduce a metric alignment step. The visual acceleration is scaled and rotated by (M(s)=\begin{bmatrix}sR_{align}&0\0&R_{align}\end{bmatrix}) to bring it into the same metric world frame as the DFN output. The residual for each time step is the difference between the physically predicted acceleration and the scaled visual acceleration: \

Comments & Academic Discussion

Loading comments...

Leave a Comment