Continuous Space-Time Video Super-Resolution with 3D Fourier Fields

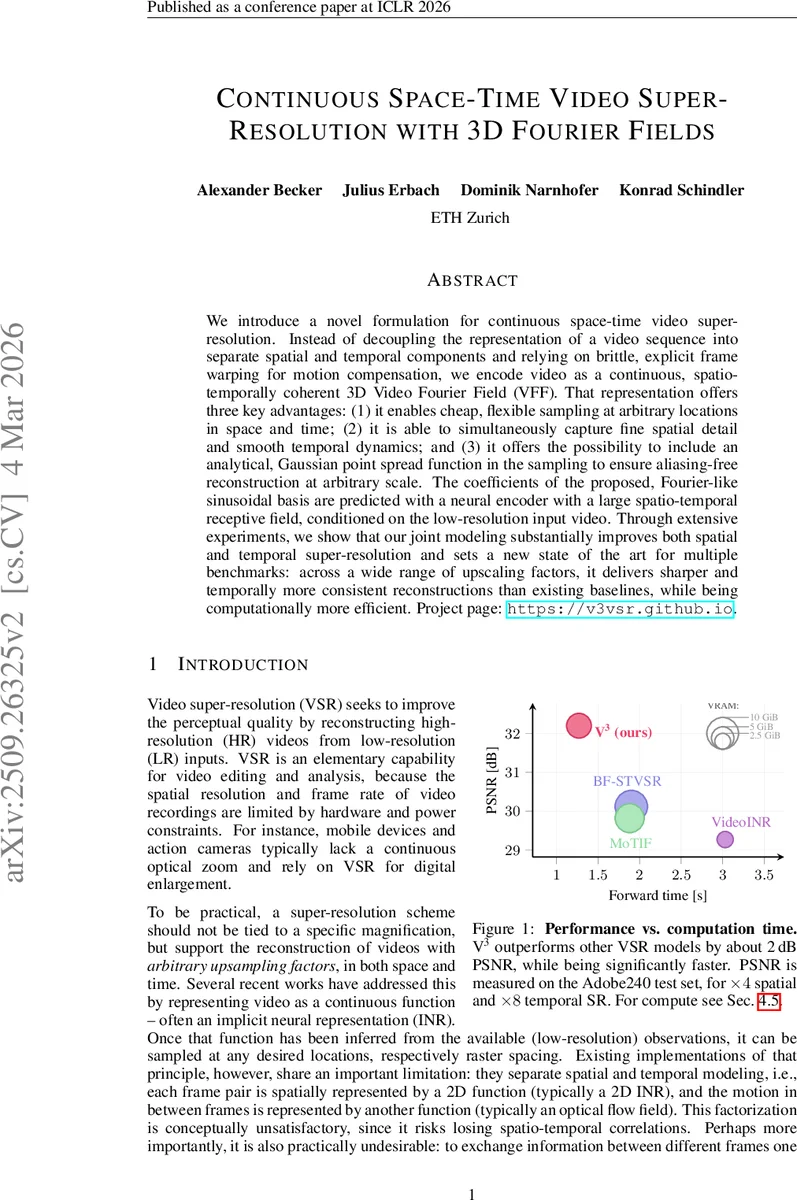

We introduce a novel formulation for continuous space-time video super-resolution. Instead of decoupling the representation of a video sequence into separate spatial and temporal components and relying on brittle, explicit frame warping for motion compensation, we encode video as a continuous, spatio-temporally coherent 3D Video Fourier Field (VFF). That representation offers three key advantages: (1) it enables cheap, flexible sampling at arbitrary locations in space and time; (2) it is able to simultaneously capture fine spatial detail and smooth temporal dynamics; and (3) it offers the possibility to include an analytical, Gaussian point spread function in the sampling to ensure aliasing-free reconstruction at arbitrary scale. The coefficients of the proposed, Fourier-like sinusoidal basis are predicted with a neural encoder with a large spatio-temporal receptive field, conditioned on the low-resolution input video. Through extensive experiments, we show that our joint modeling substantially improves both spatial and temporal super-resolution and sets a new state of the art for multiple benchmarks: across a wide range of upscaling factors, it delivers sharper and temporally more consistent reconstructions than existing baselines, while being computationally more efficient. Project page: https://v3vsr.github.io.

💡 Research Summary

This paper tackles the problem of continuous space‑time video super‑resolution (C‑STVSR), where a low‑resolution, low‑frame‑rate video must be upscaled to arbitrary spatial and temporal resolutions. Existing approaches typically decouple spatial and temporal modeling: they represent each frame with a 2‑D implicit neural representation (INR) and handle motion with a separate optical‑flow field that requires explicit warping. This separation leads to loss of spatio‑temporal correlations, makes the system vulnerable to flow estimation errors (especially near object boundaries), and complicates anti‑aliasing because the representation must contain high‑frequency content that may be beyond the Nyquist limit at lower upscaling factors.

The authors propose a radically different representation: a Video Fourier Field (VFF). VFF models the entire video as a finite sum of 3‑D sinusoidal basis functions defined over the continuous coordinates (x, y, t). Each basis function has a learned global frequency vector ω_i, a per‑voxel amplitude a_i, and a per‑voxel phase ϕ_i, yielding the form B_i(x,y,t)=a_i·sin(ω_i·(x,y,t)+ϕ_i). By fixing the set of frequencies across the whole video and only learning amplitudes and phases locally, the method dramatically reduces the number of parameters while preserving coherence across voxel boundaries. The representation is inherently band‑limited, which allows the authors to incorporate an analytical Gaussian point‑spread function (PSF) directly into the sampling equation: each basis is scaled by ξ(ω_i,σ)=exp(−‖ω_i‖²/(8π²σ²)). This provides a theoretically sound anti‑aliasing mechanism that does not need to be learned, unlike prior INR‑based methods.

To predict the voxel‑wise amplitudes and phases from a low‑resolution input, the paper employs a video backbone with a large spatio‑temporal receptive field (RVRT). The backbone extracts a feature tensor E(x) of shape T×H×W×F, which is then passed through a lightweight 3‑D convolutional head to output the VFF parameters for each voxel. Because the frequencies are shared globally, only the amplitudes and phases need to be inferred, simplifying training and improving stability. The entire pipeline—backbone encoder, VFF parameter prediction, and PSF‑aware sampler—is fully differentiable and trained end‑to‑end with a combination of L1 reconstruction loss and perceptual loss.

During training, spatial upscaling factors are sampled uniformly from 1.2 to 4, and temporal subsampling mimics high‑speed video capture. Random crops of 80×80 pixels and 14 frames are used, along with standard augmentations. The model uses N=512 sinusoidal bases; the RVRT encoder has an embedding dimension of 90 and 12 attention heads.

Experimental evaluation spans several benchmarks (Adobe240, Vid4, REDS, Vimeo‑90K) and includes both spatial upscaling (×2, ×4) and temporal upscaling (×2, ×4, ×8). The proposed V3 model (the VFF‑based system) consistently outperforms prior C‑STVSR methods such as VideoINR, MoTIF, and BF‑STVSR, achieving 1.8–2.2 dB higher PSNR and 0.5–0.8 dB higher SSIM on average. Notably, for high temporal upscaling (×8), V3 reduces motion blur and flickering dramatically, thanks to the phase‑shift representation of translational motion. In terms of efficiency, V3 requires 30–40 % less inference time and 2–3× less memory because it eliminates explicit warping and operates with a single matrix multiplication per sampling operation.

Ablation studies confirm that sharing frequencies across voxels improves temporal consistency, and that the analytical PSF yields alias‑free reconstructions even when the model is evaluated at scales not seen during training. Visualizations show that phase adjustments capture simple translations, while combinations of multiple bases model more complex, non‑linear, and periodic motions without resorting to optical flow.

In summary, the paper introduces a novel continuous video representation grounded in Fourier analysis, demonstrates how it can be conditioned on low‑resolution video via a powerful transformer encoder, and shows that it delivers superior visual quality, robustness to motion, and computational efficiency across a wide range of arbitrary spatial and temporal upscaling factors. This work bridges a gap in C‑STVSR research by providing a unified, warping‑free, anti‑aliasing‑aware framework that is both theoretically sound and practically effective.

Comments & Academic Discussion

Loading comments...

Leave a Comment